Xingyu Fu

171 posts

@XingyuFu2

Postdoc Fellow @PrincetonPLI | PhD @Penn @cogcomp. | Focused on Vision+Language | Previous: @MSFTResearch @AmazonScience B.S. @UofIllinois | ⛳️😺

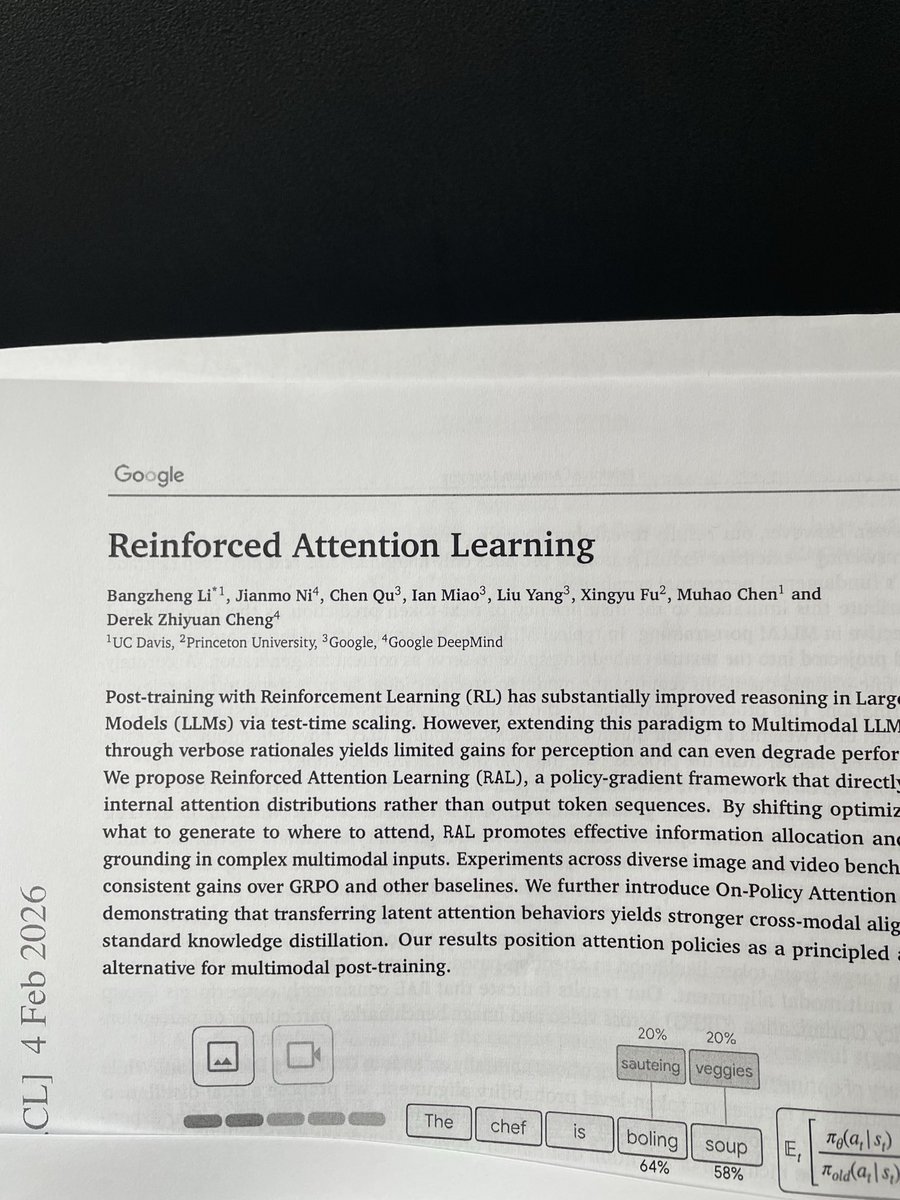

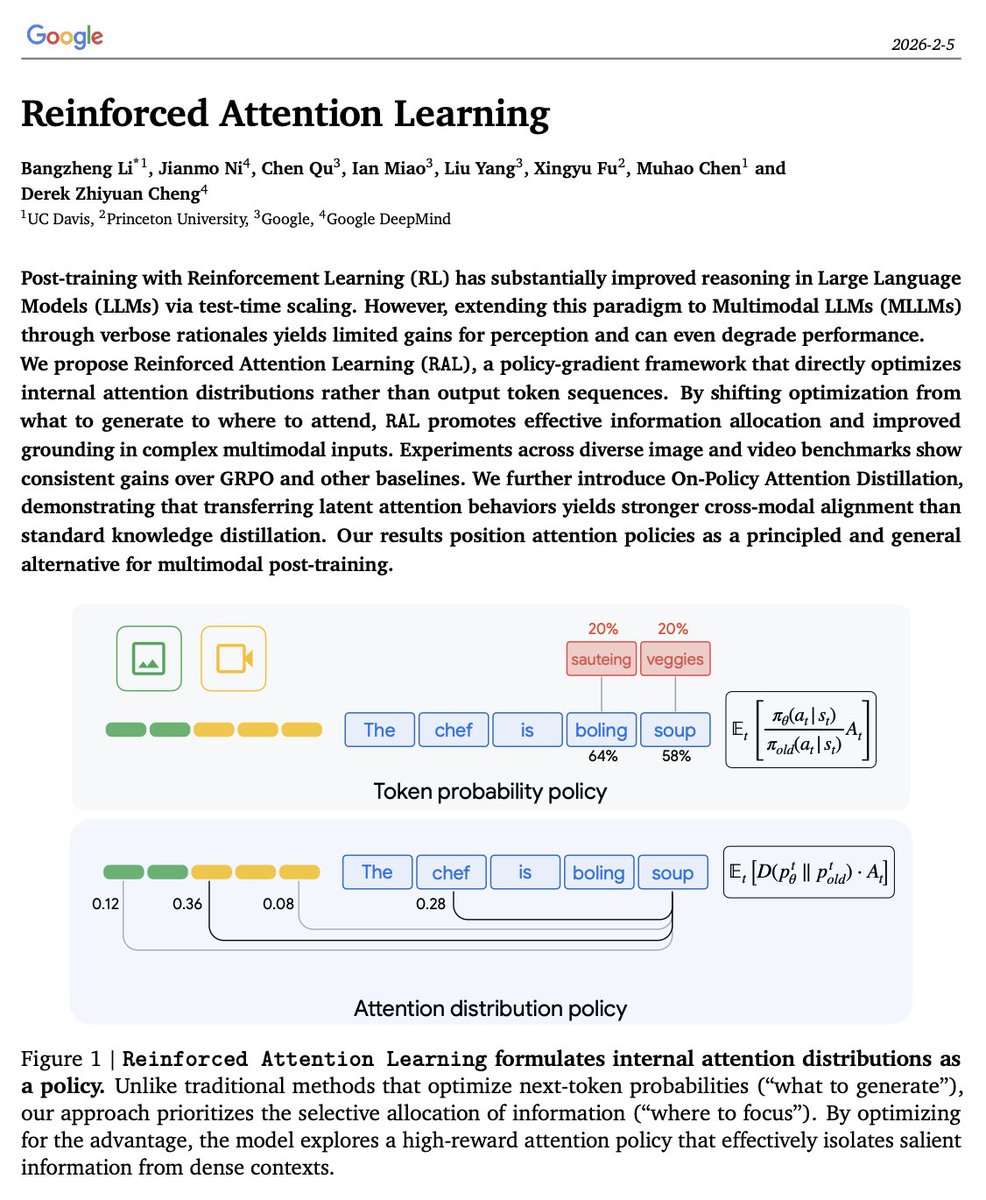

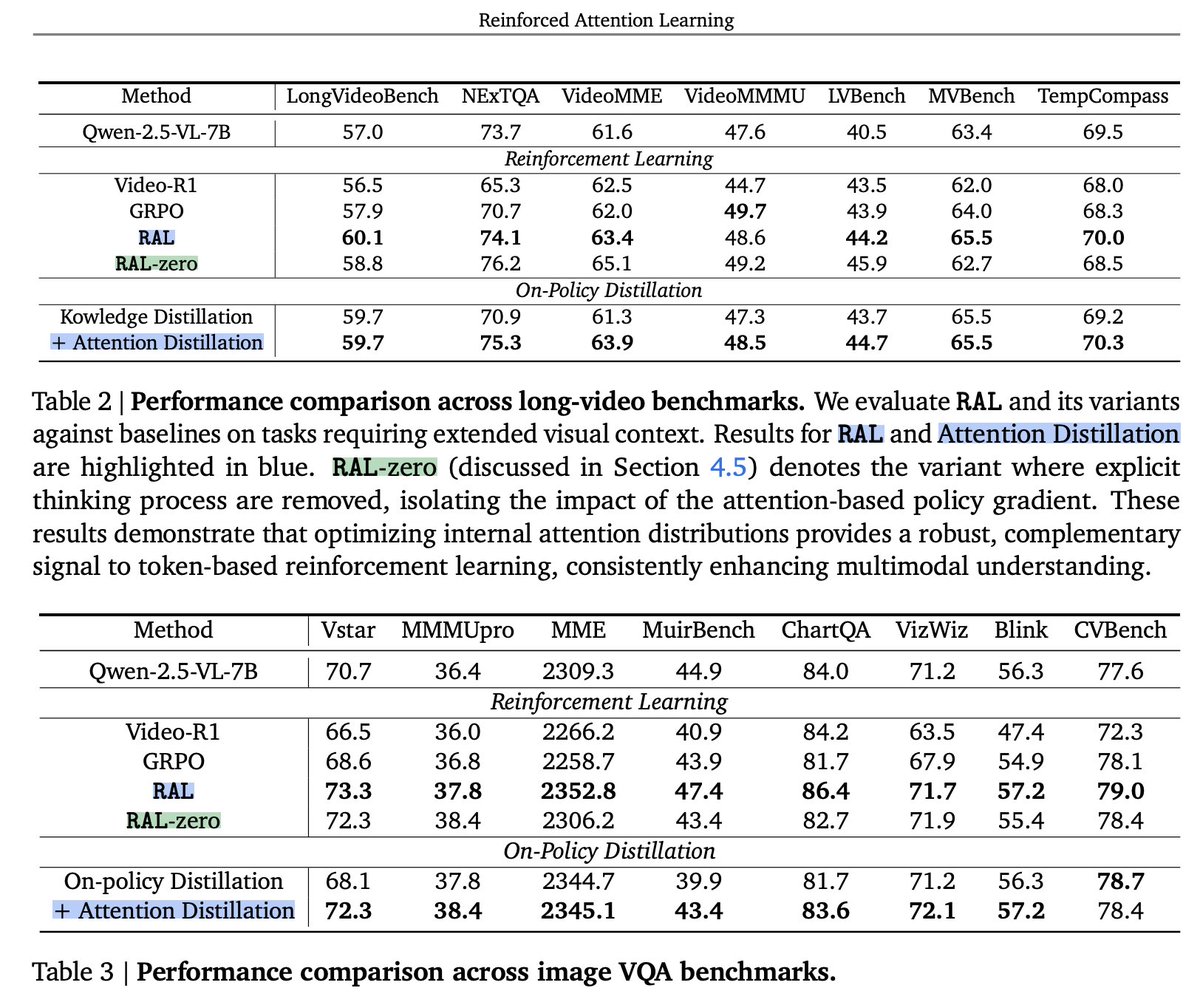

🚨 Tokens are the surface. Attention is the mechanism. What if RL learned the latter?

💡 Introducing Reinforced Attention Learning

🧠 Key idea: Current RL for LLMs shapes "what is generated". We instead optimize "where the model focuses", to influence how it actually reasons.

📰 Paper: arxiv.org/pdf/2602.04884 (with @Google and @GoogleDeepMind)

Standard PPO/GRPO perform importance sampling over token distributions, using advantages to up- or down-weight token probabilities.

⏳ The flip: Instead of "what token to generate", we optimize "where to attend". At each generation step, we measure the divergence between attention distributions of the current and old policy, across all previous tokens.

- High-reward samples → keep attention close
- Low-reward samples → push attention away

The policy learns where to allocate computation, not just which surface-level token to generate.

⚙️ Plug-and-play: Our attention-level objective pairs with any policy gradient method, including PPO and GRPO.

📊 Results: RAL > Vanilla GRPO. We’re seeing consistent accuracy gains across a wide range of image and video QA benchmarks when experimenting on multimodal LLMs.

✨ One more thing: Attention can be learned from a teacher model via on-policy attention distillation, going beyond knowledge transfer to inherit latent attention behaviors. This On-policy Attention Distillation takes standard On-Policy Distillation to the next level.

Thanks to all my colleagues: @jianmo_ni @Chen_Qu1 @ianmiao @liuyang_irnlp @XingyuFu2 @infolaber🙏
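A rough sketch of the attention-level idea described in this post, for readers who want something concrete. This is not the paper's implementation: the choice of Jensen-Shannon divergence, the function names (`js_divergence`, `group_advantages`, `attention_loss`), and the simple "advantage times mean divergence" form are all assumptions made for illustration. The post only says that a divergence between current-policy and old-policy attention is measured at each step and that its sign of influence depends on reward.

```python
import numpy as np

def js_divergence(p, q, eps=1e-12):
    """Jensen-Shannon divergence between two distributions (last axis).
    Assumption: the post does not name a specific divergence."""
    p = np.clip(np.asarray(p, dtype=float), eps, 1.0)
    q = np.clip(np.asarray(q, dtype=float), eps, 1.0)
    p = p / p.sum(axis=-1, keepdims=True)
    q = q / q.sum(axis=-1, keepdims=True)
    m = 0.5 * (p + q)
    kl_pm = np.sum(p * np.log(p / m), axis=-1)
    kl_qm = np.sum(q * np.log(q / m), axis=-1)
    return 0.5 * (kl_pm + kl_qm)

def group_advantages(rewards, eps=1e-8):
    """GRPO-style advantages: rewards normalized within a sampled group."""
    r = np.asarray(rewards, dtype=float)
    return (r - r.mean()) / (r.std() + eps)

def attention_loss(attn_new, attn_old, advantage):
    """Hypothetical attention-level loss for one sampled response.

    attn_new / attn_old: lists with one 1-D array per generation step,
    each a distribution over all previous tokens (lengths may grow).
    Minimizing this keeps attention close to the old policy when the
    advantage is positive and pushes it away when it is negative.
    """
    divs = np.array([js_divergence(a, b) for a, b in zip(attn_new, attn_old)])
    return float(advantage * divs.mean())

# Toy usage: two sampled responses, one rewarded and one not.
adv = group_advantages([1.0, 0.0])
old = [np.array([1.0]), np.array([0.5, 0.5])]
new = [np.array([1.0]), np.array([0.9, 0.1])]
loss_good = attention_loss(new, old, adv[0])  # positive advantage
loss_bad = attention_loss(new, old, adv[1])   # negative advantage
```

In a real training loop this term would presumably be combined with the usual token-level PPO/GRPO objective and computed on differentiable attention weights inside the model; the NumPy version above only shows the direction of the signal.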

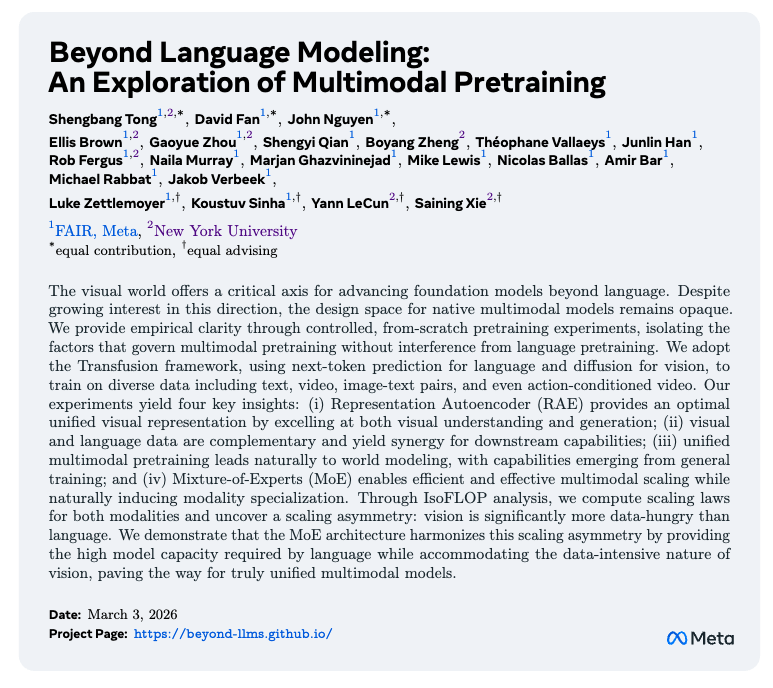

How good are unified models at generating images AND text together? We built UEval to find out. Results: GPT-5-Thinking scores only 66.4/100. Best open-source model (Emu3.5): 49.1. Introducing UEval: A Benchmark for Unified Multimodal Generation.

Excited to work with new PhD students (Fall 2026) on multimodal models, AI for automated scientific research, and foundation model architectures at Princeton. If this resonates with you, please apply to the CS PhD program and mention my name.

Our Goedel-Prover V1 will be presented at COLM 2025 in Montreal this Wednesday afternoon! I won’t be there in person, but my amazing and renowned colleague @danqi_chen will be around to help with the poster — feel free to stop by!

Token compression causes irreversible information loss in video understanding. 🤔 What can we do with sparse attention? We introduce VideoNSA, a hardware-aware and learnable hybrid sparse attention mechanism that scales to 128K context length.