XLLM-Reason-Plan

@XllmReasonPlan

The First Workshop on the Application of LLM Explainability to Reasoning and Planning

I’m actively looking for Summer 2026 internships focused on language model interpretability and methods to improve model reasoning and controllability. I’m also attending @NeurIPSConf and would love to connect! Resume & details: dakingrai.github.io

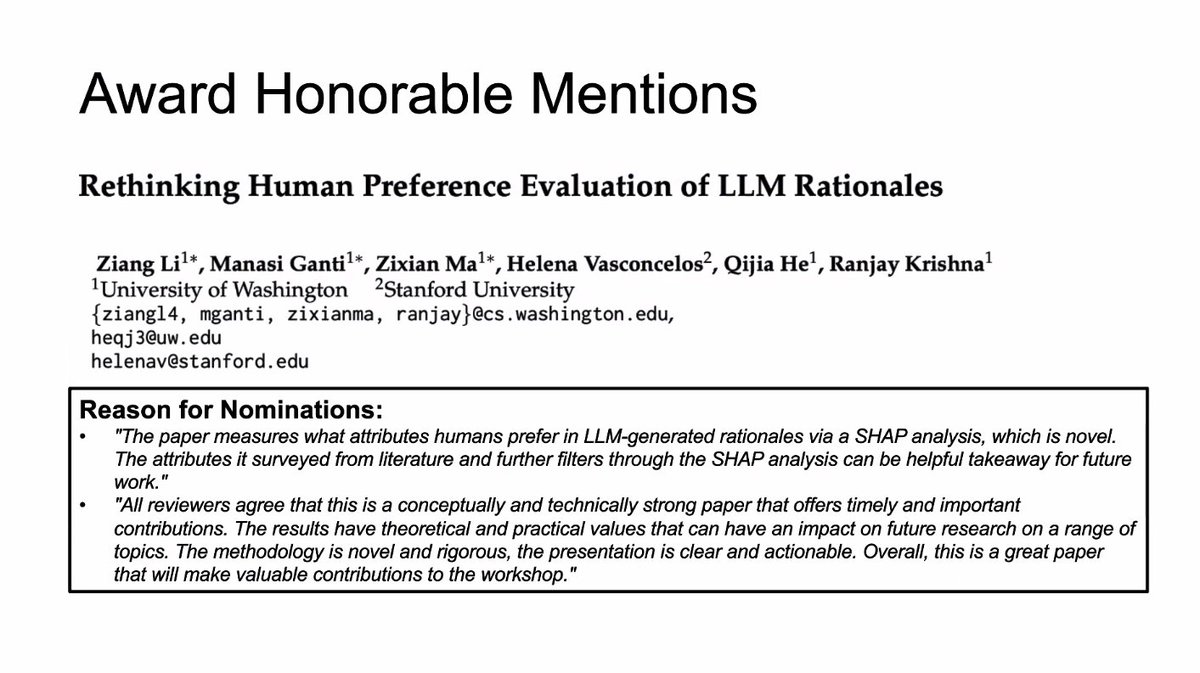

Best Paper Award goes to Li et al., "Attributing Response to Context: A Jensen-Shannon Divergence Driven Mechanistic Study of Context Attribution in Retrieval-Augmented Generation" @liruizhe94

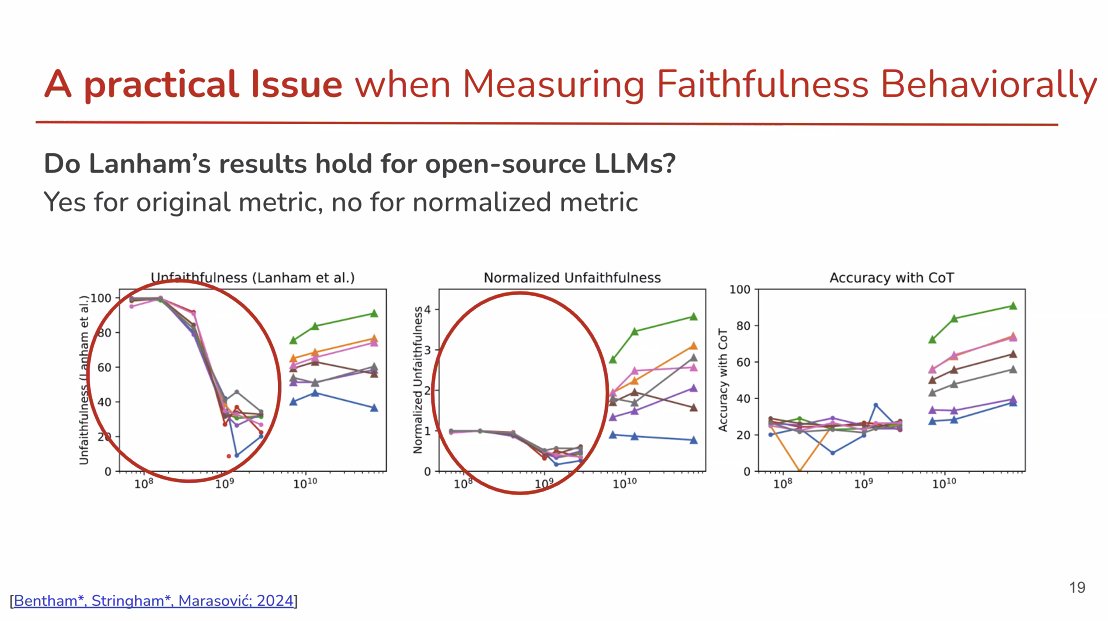

Ever wonder why math reasoning fails without CoT? The bottleneck isn’t “abstraction” but “calculation”. I’ll be presenting at COLM on Friday (11–12) — come chat about reasoning × interpretability! @Mila_Quebec @RBCBorealis 🔗arxiv.org/pdf/2505.23701 #COLM2025 #EMNLP2025