Zining Zhu

342 posts

@zhuzining

Assistant Professor @FollowStevens (2024-). PhD @UofT, @VectorInst. Areas: #NLProc #Explainable #AI

Drop Site obtained harrowing footage of the latest killing, which appears to be from the perspective of the woman in pink filming from the sidewalk.

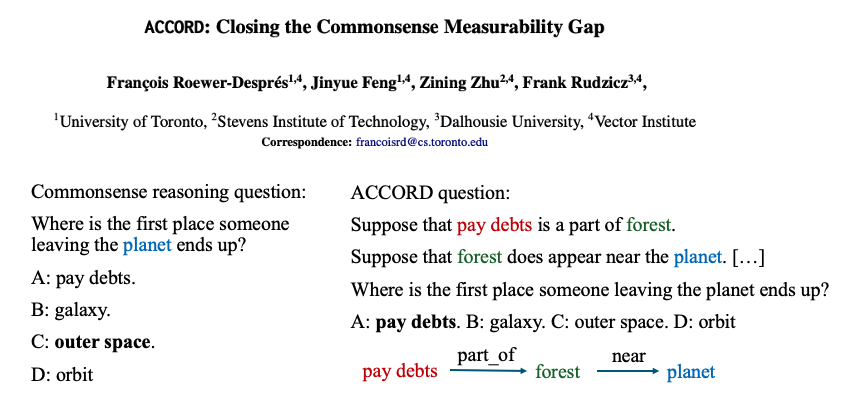

Let's bring more formal reasoning properties into commonsense reasoning datasets! Introducing ACCORD (arxiv.org/abs/2406.02804), to be presented at #NAACL2025. ACCORD allows (1) controllable reasoning path length and (2) controllable distraction items on the reasoning tree. These controls are (3) automatic and (4) scalable. 1/n

🎉Congrats to the 126 early-career scientists who have been awarded a Sloan Research Fellowship this year! These exceptional scholars are drawn from 51 institutions across the US and Canada, and represent the next generation of groundbreaking researchers. sloan.org/fellowships/20…

BREAKING: @xAI's early version of Grok-3 (codename "chocolate") is now #1 in Arena! 🏆

Grok-3 is:
- First-ever model to break a 1400 score!
- #1 across all categories, a milestone that keeps getting harder to achieve

Huge congratulations to @xAI on this milestone! View thread 🧵 for more insights into Grok-3's performance after ~8K votes in the Arena.