YIFENG LIU

@YIFENGLIU_AI

CS Ph.D. student on LLM @ UCLA AGI Lab. Previous works: MARS, TPA, ByteDance Seed models and Kimi-1.5....

Most people think AI helps with research. They’re underestimating it. We’re rebuilding the entire research pipeline. EurekaClaw is just the start. 🦞

1/n 🦞 Introducing EurekaClaw💡 — a local-first AI research agent that captures your Eureka moments before they vanish. From idea → proof → experiment → paper — fully automated. Local-first. Zero data leak. 🔒 Try it: eurekaclaw.ai

Seed 2.0 is now live on Arena. @arena arena.ai/leaderboard/te…

Today, we’re introducing FARS — a Fully Automated Research System. Tomorrow at 10:00 PM Eastern Time, we’ll begin its first public deployment as a live experiment. During the deployment, FARS will run continuously and autonomously, aiming to produce 100 complete research papers. This deployment is intended to study what automated research looks like at scale. 🔴 Live: analemma.ai/fars 📃 Blog: analemma.ai/blog/introduci… 📦 GitHub: github.com/fars-analemma 👾 Discord: discord.gg/jaRFqxCC #AI #LLMs #research

(1/N) 🚀 Excited to share our new work on inference scaling algorithms! For challenging reasoning tasks, single-shot selection often falls short: even strong models can miss the right answer on their first try. That’s why evaluations typically report Pass@k, where an agent produces k candidates and success is judged by the best among them. Motivated by this, we propose a new Pass@k inference framework. Our work systematically analyzes, from a theoretical perspective, the performance of different inference-time algorithms such as Best-of-N and majority voting, including their regret and a property called scaling monotonicity (a good inference algorithm should never get worse as the number of generated samples increases).

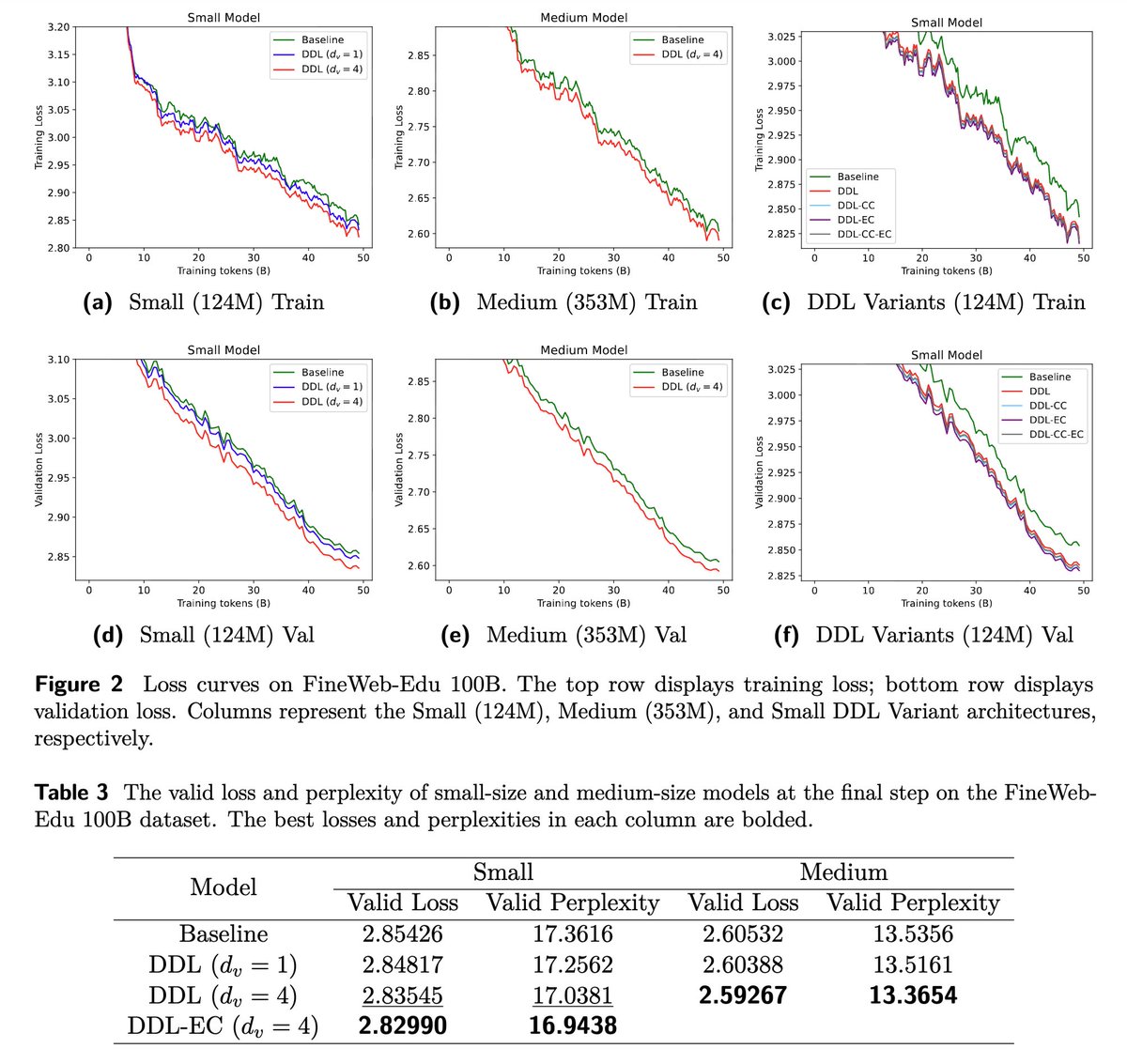

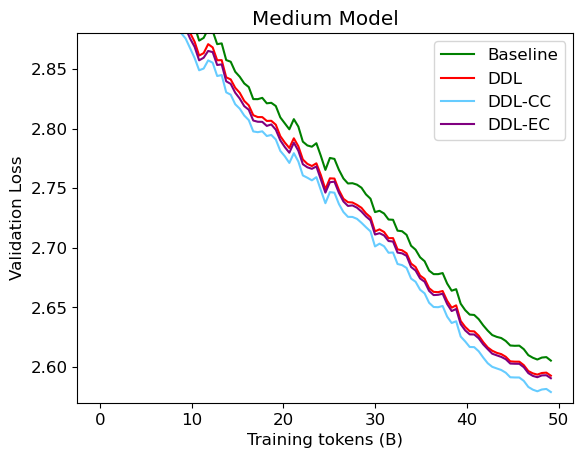
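For readers unfamiliar with the terms in the Pass@k post above, here is a minimal Python sketch of the three ideas it names: Pass@k success, Best-of-N selection, and majority voting. It is a toy illustration, not the framework proposed in that work. The function names, the sample answers, and the reward function are all hypothetical and chosen only for this example.

```python
from collections import Counter
from typing import Callable, Sequence


def pass_at_k(answers: Sequence[str], is_correct: Callable[[str], bool], k: int) -> bool:
    """Pass@k success: at least one of the first k candidates is correct."""
    return any(is_correct(a) for a in answers[:k])


def best_of_n(answers: Sequence[str], reward: Callable[[str], float]) -> str:
    """Best-of-N: return the single candidate with the highest reward score."""
    return max(answers, key=reward)


def majority_vote(answers: Sequence[str]) -> str:
    """Majority voting: return the most frequent candidate answer."""
    return Counter(answers).most_common(1)[0][0]


# Hypothetical toy data: five sampled answers to a question whose true answer is "17".
samples = ["12", "15", "12", "17", "12"]
truth = "17"


def is_correct(answer: str) -> bool:
    return answer == truth


def toy_reward(answer: str) -> float:
    # Assumed stand-in for a reward model: closer to the truth scores higher.
    return -abs(int(answer) - int(truth))


print(pass_at_k(samples, is_correct, k=3))  # correct answer not yet sampled
print(pass_at_k(samples, is_correct, k=5))  # correct answer is among the five
print(best_of_n(samples, toy_reward))
print(majority_vote(samples))
```

In this toy case the correct answer appears only once, so majority voting returns the common wrong answer while Pass@k with k=5 still counts as a success. That gap between single-answer selection and best-among-k evaluation is the situation the post describes.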

Deep Delta Learning, WE COOKED! 🚀🚀🚀 yifanzhang-pro.github.io/deep-delta-lea…

🥝 Meet Kimi K2.5, Open-Source Visual Agentic Intelligence.
🔹 Global SOTA on Agentic Benchmarks: HLE full set (50.2%), BrowseComp (74.9%)
🔹 Open-source SOTA on Vision and Coding: MMMU Pro (78.5%), VideoMMMU (86.6%), SWE-bench Verified (76.8%)
🔹 Code with Taste: turn chats, images & videos into aesthetic websites with expressive motion.
🔹 Agent Swarm (Beta): self-directed agents working in parallel, at scale. Up to 100 sub-agents, 1,500 tool calls, 4.5× faster compared with single-agent setup.
🥝 K2.5 is now live on kimi.com in chat mode and agent mode.
🥝 K2.5 Agent Swarm in beta for high-tier users.
🥝 For production-grade coding, you can pair K2.5 with Kimi Code: kimi.com/code
🔗 API: platform.moonshot.ai
🔗 Tech blog: kimi.com/blogs/kimi-k2-…
🔗 Weights & code: huggingface.co/moonshotai/Kim…

Introducing Gemini 3, our most intelligent model that helps you bring any idea to life. Gemini 3 is our next step on the path toward AGI and has:
🧠 State-of-the-art reasoning
🖼️ Deep multimodal understanding
💻 Powerful vibe coding so you can go from prompt to app in one shot
🤝 Improved agentic capabilities, so it can get things done on your behalf, at your direction
We're making Gemini 3 Pro available across @GeminiApp, Search (starting with AI Mode), and our developer products, so you can use its incredible reasoning powers in your daily life: to learn, build and plan anything.