Zerui Cheng

62 posts

@ZeruiCheng

Ph.D. candidate @Princeton / Tencent Hy / Prev ByteDance Seed / LLM Agent Eval&Data / web3, blockchain / ICPC Gold Medalist / Yao Class Alumni '23 @Tsinghua_Uni

Pass/fail benchmarks are saturated. It’s time for FrontierCS. 🚀 150+ unsolved, verifiable problems ranging from competitive programming to real-world research. Designed by PhDs & ICPC experts to evolve model intelligence. 🎓🧠 🧵👇 Check it out! Paper: arxiv.org/abs/2512.15699

Thrilled to release our new paper: “Scaling Latent Reasoning via Looped Language Models.” TL;DR: We scale looped language models up to 2.6 billion parameters and pretrain them on more than 7 trillion tokens. The resulting model is on par with SOTA language models 2 to 3x its size.

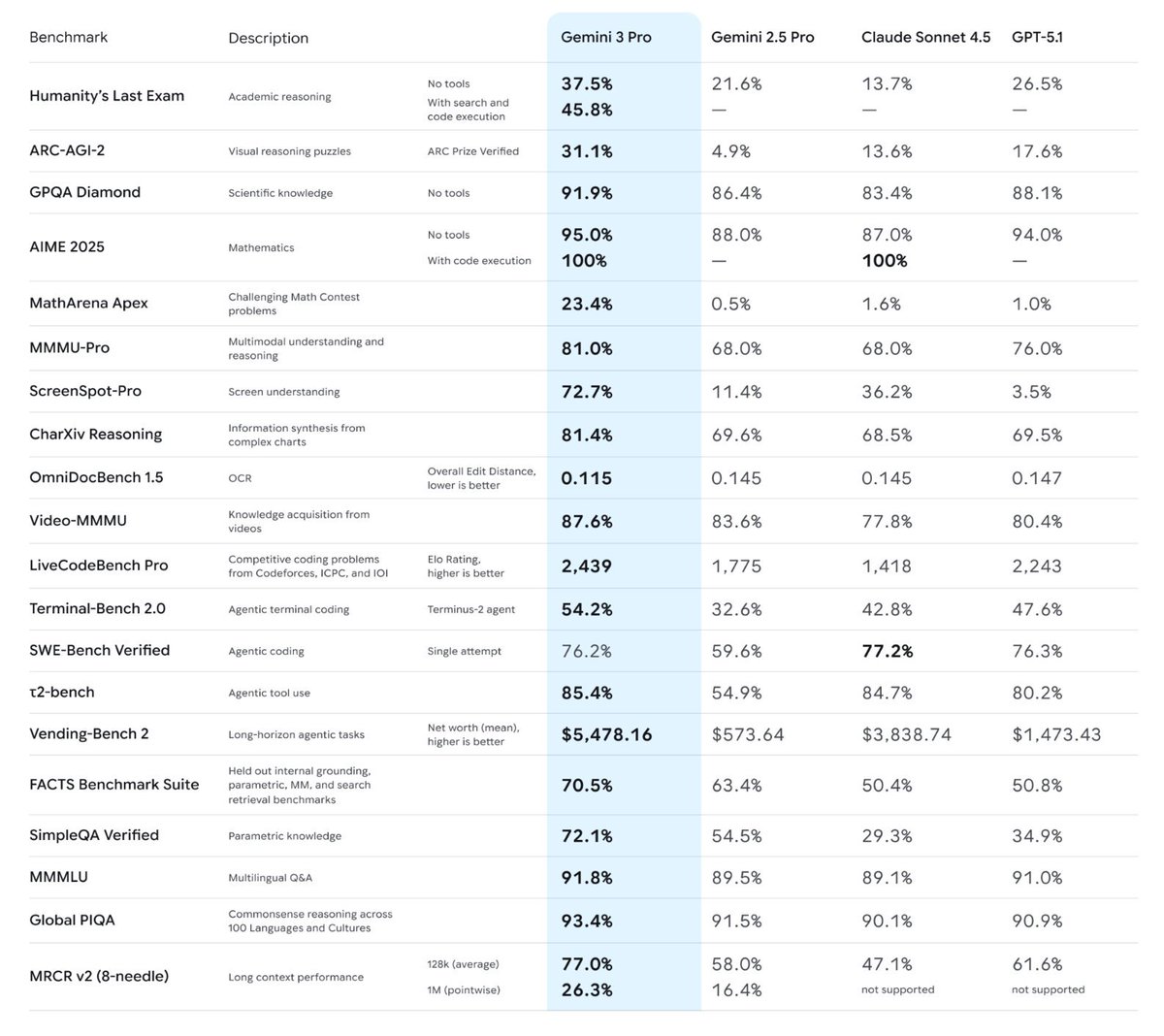

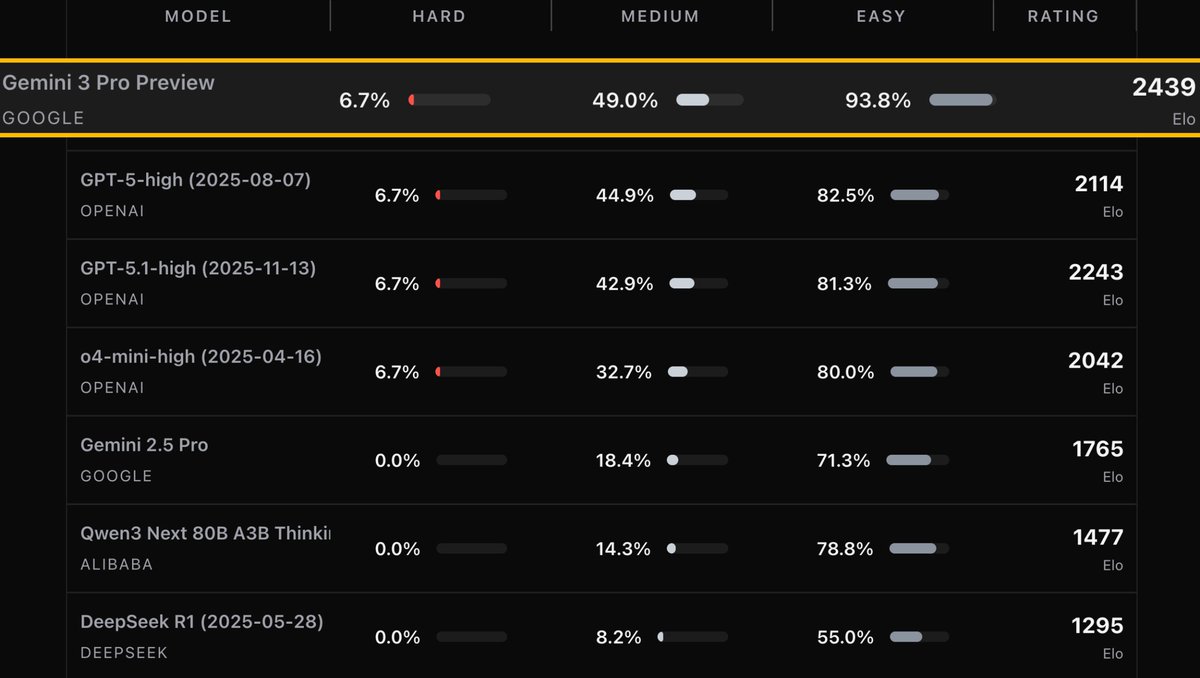

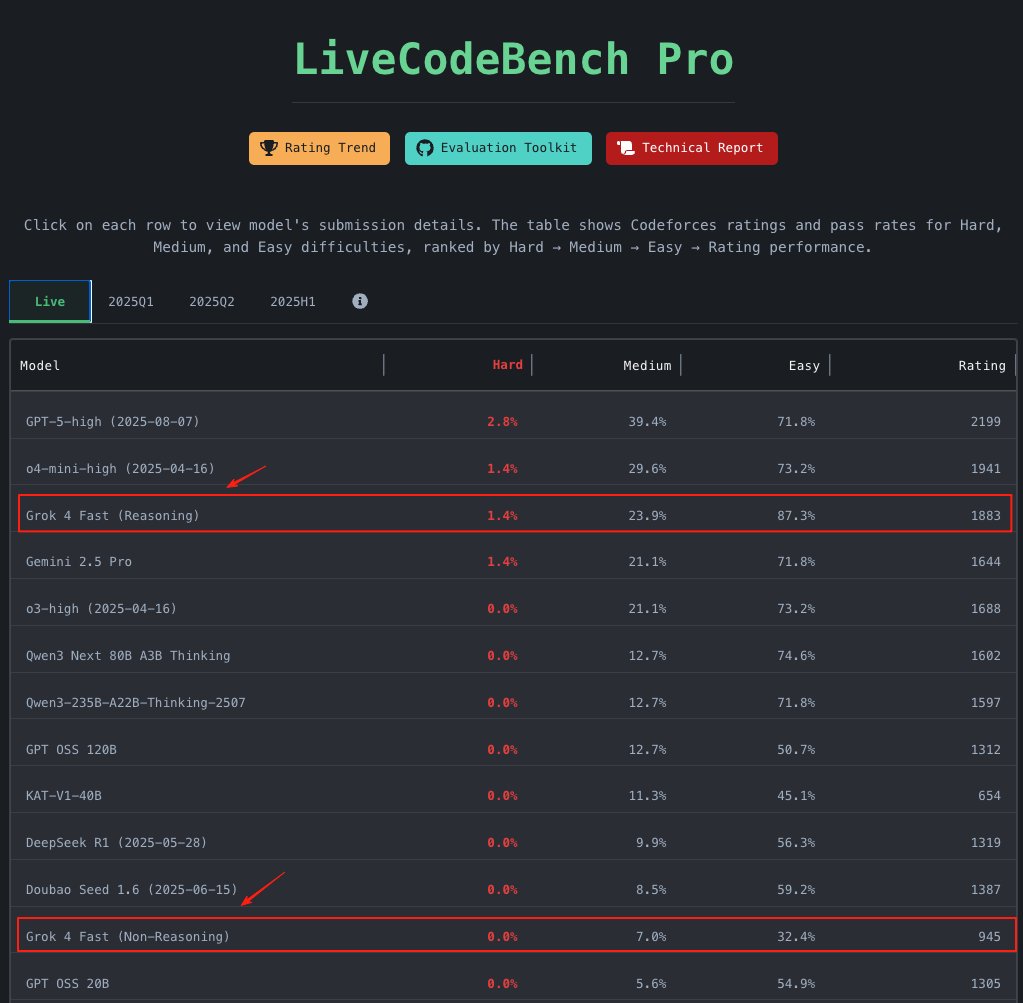

We introduce LiveCodeBench Pro. Models like o3-high, o4-mini, and Gemini 2.5 Pro score 0% on hard competitive programming problems.

🐺 Introducing the Werewolf Benchmark, an AI test for social reasoning under pressure. Can models lead, bluff, and resist manipulation in live, adversarial play? 👉 We made 7 of the strongest LLMs, both open-source and closed-source, play 210 full games of Werewolf. Below is our role-conditioned Elo leaderboard. GPT-5 sits alone at the top; we’re looking for contenders strong enough to threaten its lead. (📥 DMs are open!) Find out more here: werewolf.foaster.ai