Yuxuan Jiang

347 posts

@_MattJiang_

CSE PhDing @UMich | Scaling Trust in ML Infrastructure Alongside Compute | @ZJU_China @UofIllinois @MSFTResearch

🚨🇮🇱 LIVE FOOTAGE: IDF soldiers tortured a Palestinian kid and threw him off a roof. They call themselves “the most moral army.”

🚨HUGE: Trump’s rally in “deep red” Iowa is interrupted by protestors: He sneers at them as “paid agitators” and “paid insurrectionists… sickos.” That’s authoritarian panic: to smear dissent. The truth is Americans all over the country are PISSED.

“Omg you voted for old white women to be aggressively shoved to the ground??” Yes. Yes I did.

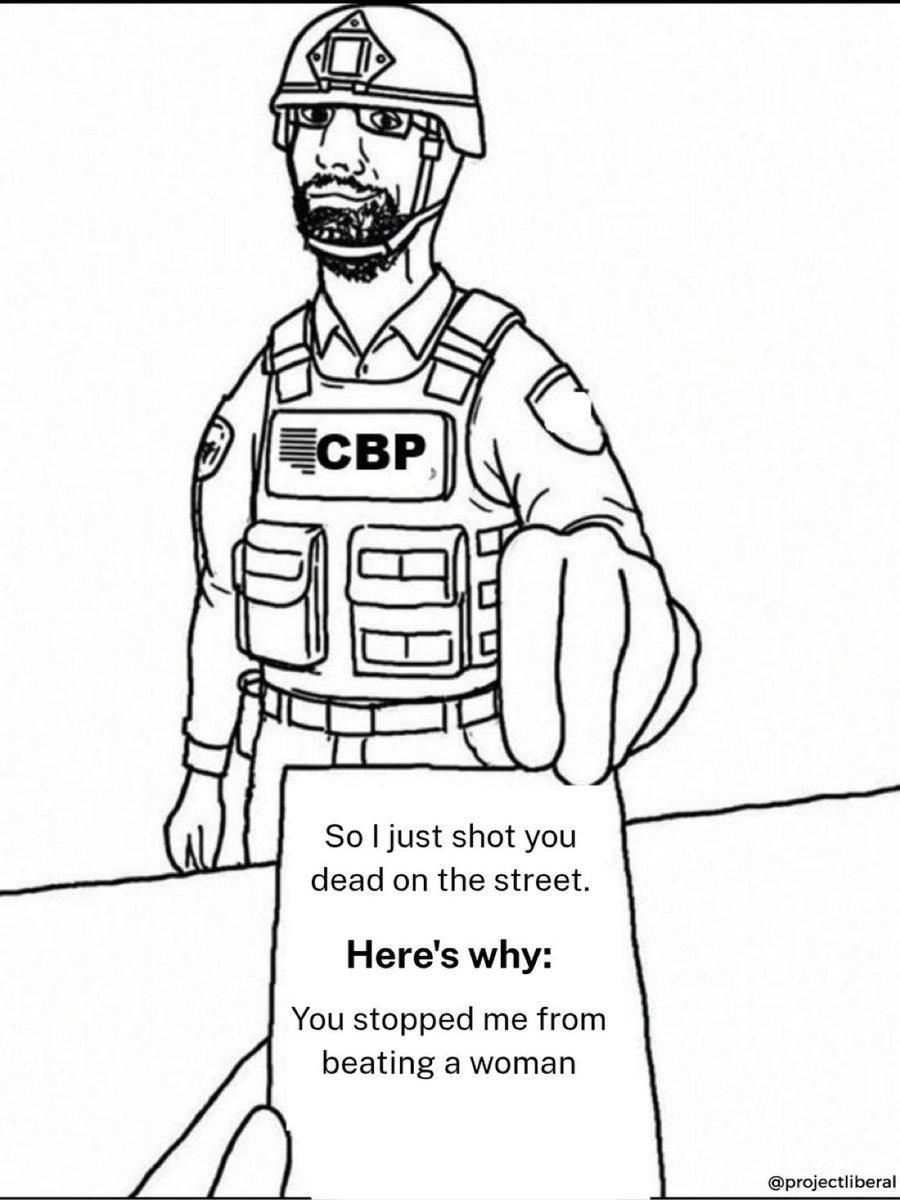

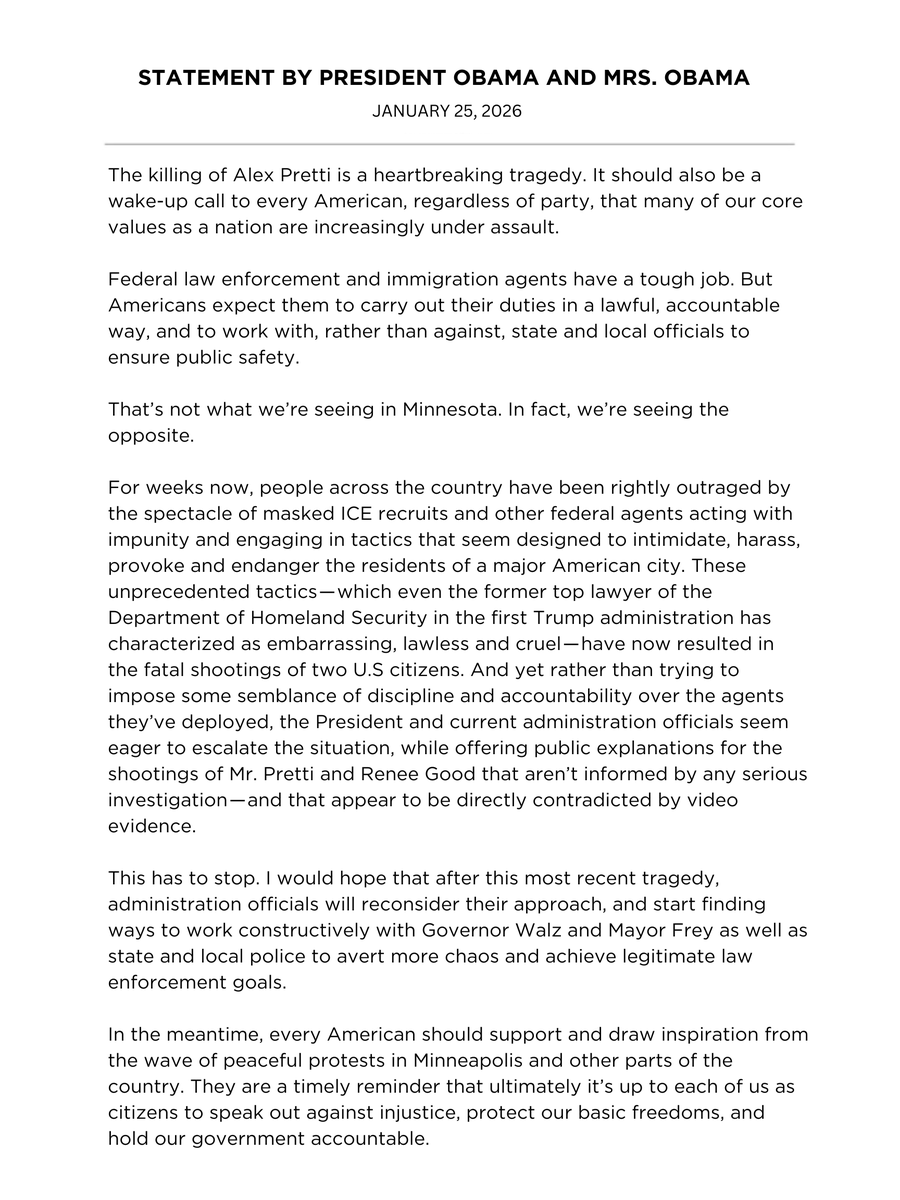

This is absolutely shameful. Agents of a federal agency unnecessarily escalating, and then executing a defenseless citizen whose offense appears to be using his cell phone camera. Every person regardless of political affiliation should be denouncing this.