Angelica Chen

131 posts

@_angie_chen

Gemini training @GoogleDeepMind, PhD from @NYUDataScience, previously @Princeton 🐅, angie-chen at 🦋

@Google can't figure out how to fix the "[cite_start]" issue in their own gemini web app, so i wrote a browser extension to fix it myself... 🤦 let me present the anticitestart extension: fully open-sourced and implemented by antigravity. expecting a zuck-level offer from google any time now.

Our team at GDM is hiring a Student Researcher (SR) next year 🧠 If you’re a PhD student working on LLMs please apply. I’d love to hear from you. Please fill out this form: forms.gle/bxTEkrDPacn6jS…

Excited to share that I will be starting as an Assistant Professor in CSE at UCSD (@ucsd_cse) in Fall 2026! I am currently recruiting PhD students who want to bridge theory and practice in deep learning; see here: cs.princeton.edu/~smalladi/recr…

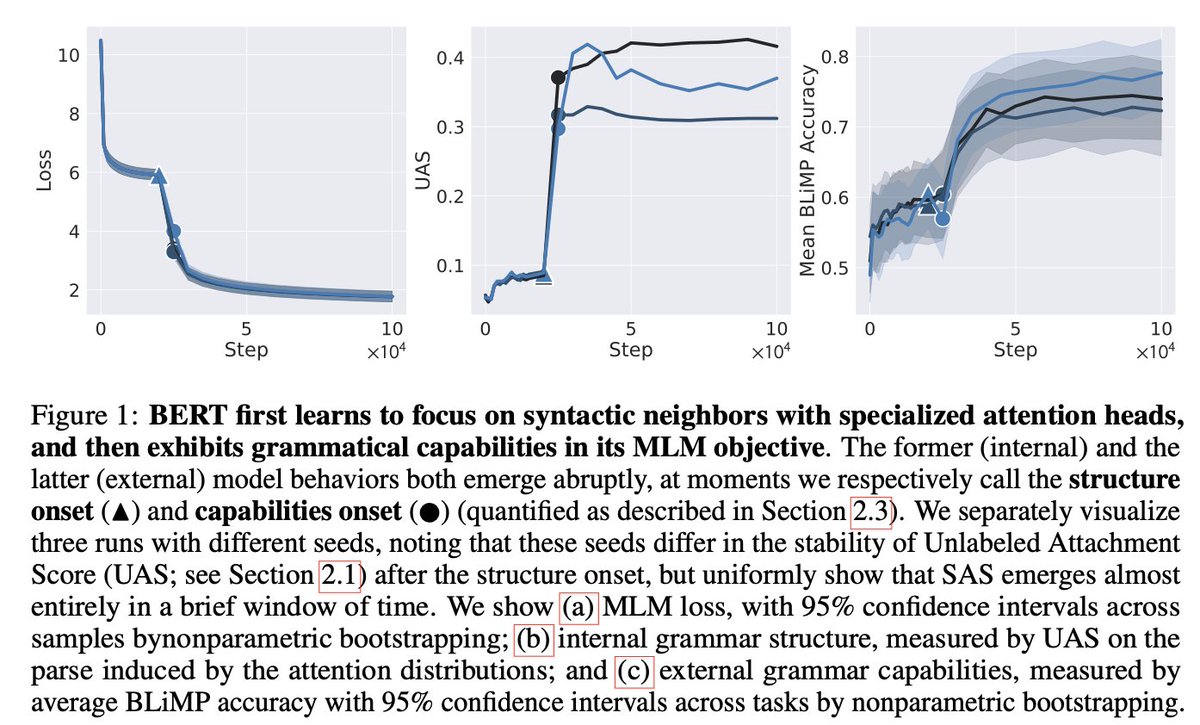

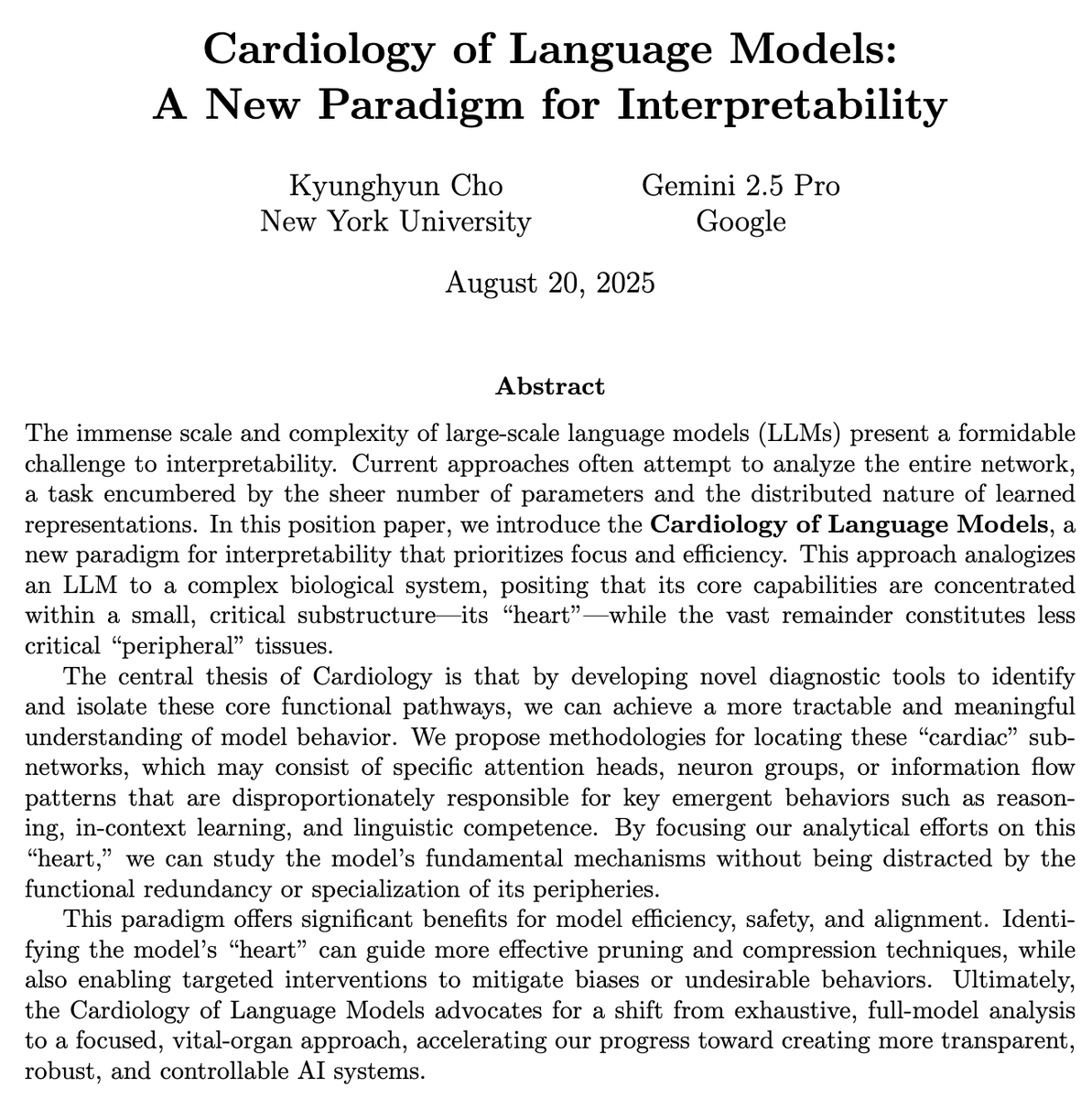

introducing our new interpretability research paradigm, Cardiology of Language Models! it is based on a method we call the "stethoscope", where we train a linear classifier to discriminate between the LLM hidden states that represent a concept and those that do not!
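The "stethoscope" described above is what is usually called a linear probe. Below is a minimal sketch of one, assuming logistic regression as the linear classifier (the post does not say which classifier is used). Real LLM hidden states are replaced by hypothetical synthetic vectors: Gaussian noise shifted along a made-up `concept_dir` when the concept is present. All names here (`train_linear_probe`, `probe_predict`, `make_synthetic_states`, `concept_dir`) are illustrative, not from any released code.

```python
import numpy as np


def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))


def train_linear_probe(h, y, lr=0.5, steps=300, l2=1e-3):
    """Fit a logistic-regression probe: P(concept | h) = sigmoid(h @ w + b).

    h: (n, d) array of hidden states; y: (n,) array of 0/1 concept labels.
    Plain full-batch gradient descent on mean binary cross-entropy + L2.
    """
    n, d = h.shape
    w = np.zeros(d)
    b = 0.0
    for _ in range(steps):
        p = sigmoid(h @ w + b)
        err = p - y  # derivative of the per-example loss w.r.t. the logit
        w -= lr * (h.T @ err / n + l2 * w)
        b -= lr * err.mean()
    return w, b


def probe_predict(h, w, b):
    """Return 1 where the probe says the concept is present, else 0."""
    return (h @ w + b > 0).astype(int)


def make_synthetic_states(rng, concept_dir, n, shift=2.0):
    """Hypothetical stand-in for LLM hidden states (assumption, not real data):
    unit Gaussian noise shifted +shift along concept_dir when the concept is
    present (label 1) and -shift when it is absent (label 0)."""
    d = concept_dir.shape[0]
    y = rng.integers(0, 2, size=n)
    signs = np.where(y == 1, 1.0, -1.0)
    h = rng.standard_normal((n, d)) + shift * signs[:, None] * concept_dir
    return h, y


rng = np.random.default_rng(0)
d = 64
concept_dir = rng.standard_normal(d)
concept_dir /= np.linalg.norm(concept_dir)

h_train, y_train = make_synthetic_states(rng, concept_dir, 1000)
h_test, y_test = make_synthetic_states(rng, concept_dir, 500)

w, b = train_linear_probe(h_train, y_train)
accuracy = (probe_predict(h_test, w, b) == y_test).mean()
# How well the learned weight vector lines up with the planted concept direction
cosine = (w @ concept_dir) / np.linalg.norm(w)
print(f"held-out accuracy {accuracy:.3f}, cosine(w, concept_dir) {cosine:.3f}")
```

Because the probe is linear, a high held-out accuracy means the concept is (approximately) linearly decodable from the states, and the learned `w` can be read as an estimate of the concept direction; on real models the states would come from a chosen layer rather than from `make_synthetic_states`.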