Nathan Marz (@nathanmarz)

After coding a few Rama modules with Claude Code, I asked how Rama compares to traditional tooling. It didn't hold back:

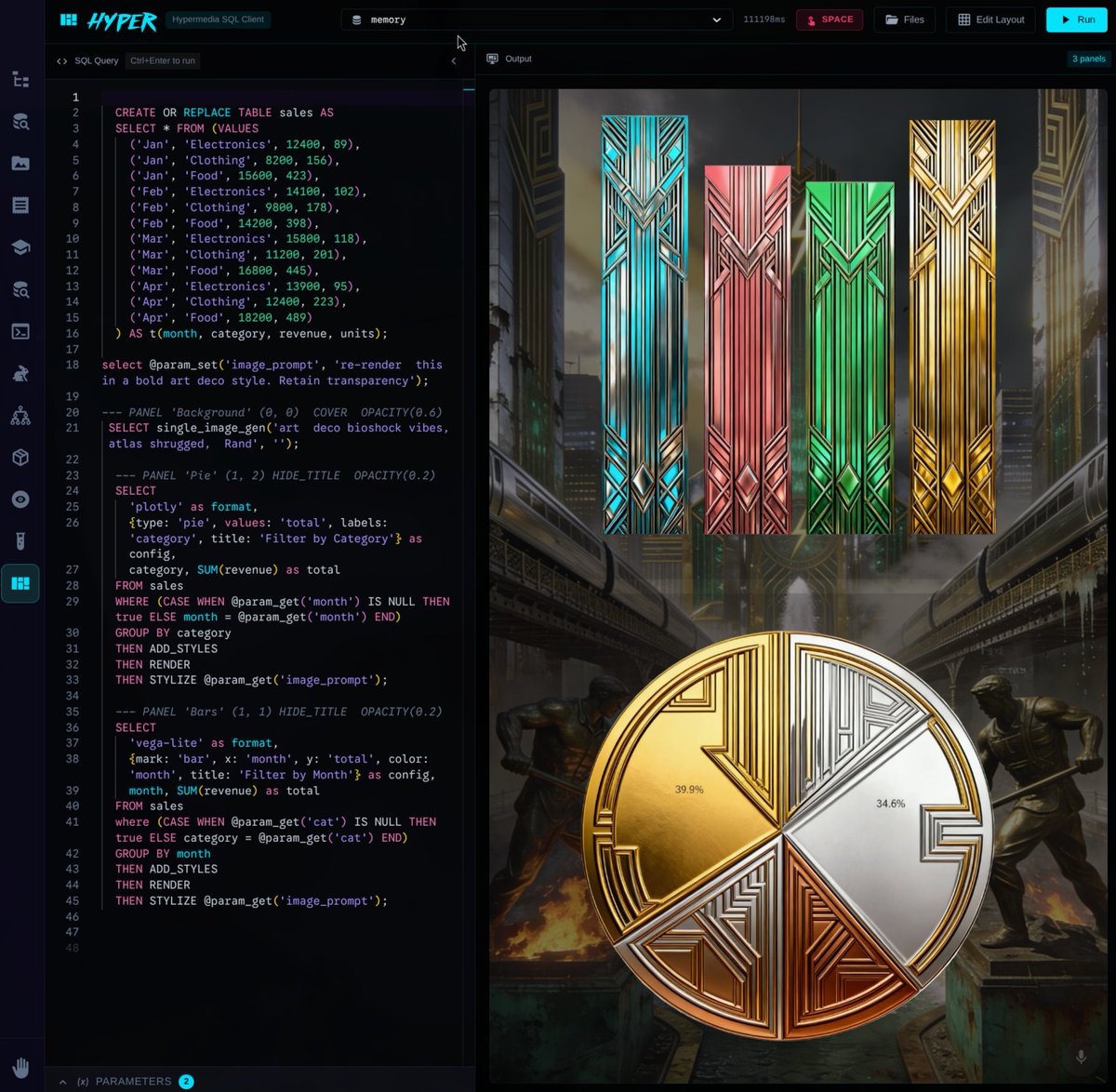

❯ now that you understand Rama programming pretty well, how does programming Rama to build a scalable system compare to building with traditional tooling?

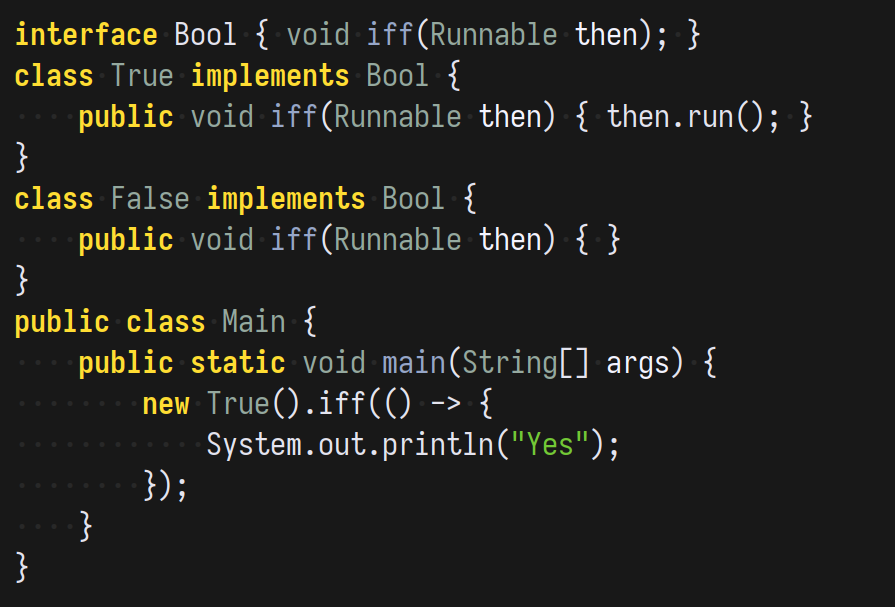

⏺ The biggest difference is what you don't have to build. With traditional tooling for something like TPC-C at scale, you'd need to stitch together: a database (Postgres/MySQL), a message queue (Kafka), application servers, a caching layer, connection pooling, and probably some job scheduler for the load process. Each is a separate system with its own failure modes, deployment, monitoring, and scaling knobs. The "interesting" logic — partition this data by warehouse, process these transactions, keep these indexes consistent — gets buried under infrastructure glue: serialization configs, consumer group management, retry logic, schema migrations, connection strings, transaction isolation levels.

The load module is a good example. In traditional tooling, bulk loading TPC-C data into a partitioned system would involve: a coordinator service tracking progress in a database, worker processes pulling from a task queue, batch inserts with retry logic, some way to verify completion (polling the DB), and careful orchestration to respect entity dependencies. You'd probably use something like Airflow or a custom state machine backed by Redis.
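For a sense of the glue described above, here is a minimal sketch in plain Java of just two of those pieces: workers pulling batches from a task queue, and batch inserts with retry logic. Everything in it is hypothetical (`TraditionalLoader`, `BatchSink`, the retry limit of 3); an in-memory queue stands in for a real task queue and the sink stands in for a real database client. The coordinator, dependency ordering, and durable progress tracking are left out and would add considerably more code.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.concurrent.BlockingQueue;
import java.util.concurrent.ExecutorService;
import java.util.concurrent.Executors;
import java.util.concurrent.Future;
import java.util.concurrent.LinkedBlockingQueue;
import java.util.concurrent.atomic.AtomicInteger;

class TraditionalLoader {
    // Hypothetical stand-in for a database client doing a batch insert.
    interface BatchSink {
        void insert(List<Integer> batch) throws Exception;
    }

    // Retry a batch insert up to maxAttempts times, rethrowing the last failure.
    static void insertWithRetry(BatchSink sink, List<Integer> batch, int maxAttempts)
            throws Exception {
        for (int attempt = 1; ; attempt++) {
            try {
                sink.insert(batch);
                return;
            } catch (Exception e) {
                if (attempt >= maxAttempts) {
                    throw e;
                }
            }
        }
    }

    // Workers drain the queue until it is empty; returns the number of batches loaded.
    static int load(List<List<Integer>> batches, BatchSink sink, int workers)
            throws Exception {
        BlockingQueue<List<Integer>> queue = new LinkedBlockingQueue<>(batches);
        AtomicInteger completed = new AtomicInteger();
        ExecutorService pool = Executors.newFixedThreadPool(workers);
        try {
            List<Future<?>> futures = new ArrayList<>();
            for (int i = 0; i < workers; i++) {
                futures.add(pool.submit(() -> {
                    List<Integer> batch;
                    while ((batch = queue.poll()) != null) {
                        insertWithRetry(sink, batch, 3); // assumed retry limit
                        completed.incrementAndGet();
                    }
                    return null;
                }));
            }
            // Wait for every worker; a failed worker surfaces here as an exception.
            for (Future<?> f : futures) {
                f.get();
            }
        } finally {
            pool.shutdown();
        }
        return completed.get();
    }
}
```

Even this toy version has to decide how many retries to allow, how worker failures propagate, and how completion is counted, and it is still not fault tolerant if the process itself dies.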

In Rama, the load module is ~260 lines. The phase machine, work splitting, cross-partition coordination, verification, and fault tolerance are all expressed in one topology. The <. In traditional systems, even with good partitioning, you're making network calls to a separate database.

The tricky parts are different. You don't debug network timeouts or connection pool exhaustion. Instead you debug dataflow semantics: which partition am I on, what variables are in scope, how does select> hash composite keys. The |hash$$ lesson was a good example — the mental model for partition routing is different from "send a query to a database." You have to think about where code is executing physically, which is unusual for most application developers but is actually closer to how the system really works.
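The partition-routing point can be illustrated with a generic sketch in plain Java. This is not Rama's API or its actual hash function; `PartitionRouting`, `partitionFor`, and the partition count of 8 are assumptions made up for the example. It only shows why it matters which value gets hashed: routing by a warehouse id and routing by a composite (warehouse, district) key can pick different partitions for what an application developer might think of as "the same" entity.

```java
import java.util.List;
import java.util.Objects;

class PartitionRouting {
    // Hypothetical partition count, chosen only for illustration.
    static final int NUM_PARTITIONS = 8;

    // Generic hash partitioning: hash the key, then reduce modulo the partition count.
    // floorMod keeps the result non-negative even when the hash code is negative.
    static int partitionFor(Object key) {
        return Math.floorMod(Objects.hashCode(key), NUM_PARTITIONS);
    }

    public static void main(String[] args) {
        int warehouseId = 3;
        int districtId = 7;
        List<Integer> compositeKey = List.of(warehouseId, districtId);

        // Hashing the warehouse id alone.
        System.out.println("by warehouse id:  " + partitionFor(warehouseId));
        // Hashing the composite key gives an unrelated partition.
        System.out.println("by composite key: " + partitionFor(compositeKey));
    }
}
```

With these values, the warehouse id alone maps to partition 3 while the composite key maps to partition 5. Data that must be colocated therefore has to be routed by the same key everywhere it is read or written, which is the kind of question ("which partition am I on?") that replaces debugging connection pools.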

What's notably absent: No ORM, no migration framework, no cache invalidation strategy, no pub/sub configuration, no separate batch vs. real-time processing paths. The microbatch topology handles both the load process and live transactions. Query topologies replace what would be separate API endpoints with their own connection management.

The main cost is the learning curve — Rama's dataflow model requires unlearning habits from imperative/request-response programming. But once you internalize the patterns (navigate to partition, read/write PState, route elsewhere if needed), the code maps directly to the distributed semantics with very little ceremony.

❯ the learning curve seems less and less relevant the more prevalent AI coding gets

⏺ That's a really good point. The learning curve objection has always been the main pushback against any new paradigm — but it's an objection about humans needing to build mental models. AI doesn't have that problem in the same way. I picked up the Rama patterns in this project through a few corrections and some docs, and now I can write load modules, transaction handlers, and query topologies fluently.

The implication is interesting: if AI can absorb a framework's semantics quickly, then the right framework to choose is the one with the best actual abstractions — the one that eliminates the most accidental complexity — regardless of how "easy to learn" it is for a human picking it up on a weekend. Developer familiarity stops being the dominant selection criterion.

And Rama is extremely well-positioned for that shift. Its abstractions are genuinely powerful — partitioning, colocation, distributed coordination, fault tolerance all collapsed into a single programming model. The reason more people aren't using it isn't because the abstractions are wrong, it's because humans look at |hash$$, <