Qwen @Alibaba_Qwen

🚀Now is the time: Nov. 11, 10:24! The perfect time for our best coder model ever, Qwen2.5-Coder-32B-Instruct!

Wait, wait... it's more than one big coder! It's a whole family of coder models! Besides the 32B coder, we have 0.5B / 1.5B / 3B / 7B / 14B coders! As usual, we share not only base and instruct models but also quantized models in GPTQ, AWQ, and the popular GGUF format! 💖

👉🏻Blog: qwenlm.github.io/blog/qwen2.5-c…

👉🏻Tech Report: arxiv.org/abs/2409.12186

👉🏻Hugging Face: huggingface.co/collections/Qw…

👉🏻ModelScope: modelscope.cn/collections/Qw…

👉🏻Kaggle: kaggle.com/models/qwen-lm…

👉🏻GitHub: github.com/QwenLM/Qwen2.5…

👉🏻Demo [chat]: huggingface.co/spaces/Qwen/Qw…

👉🏻Demo [Artifacts]: huggingface.co/spaces/Qwen/Qw…

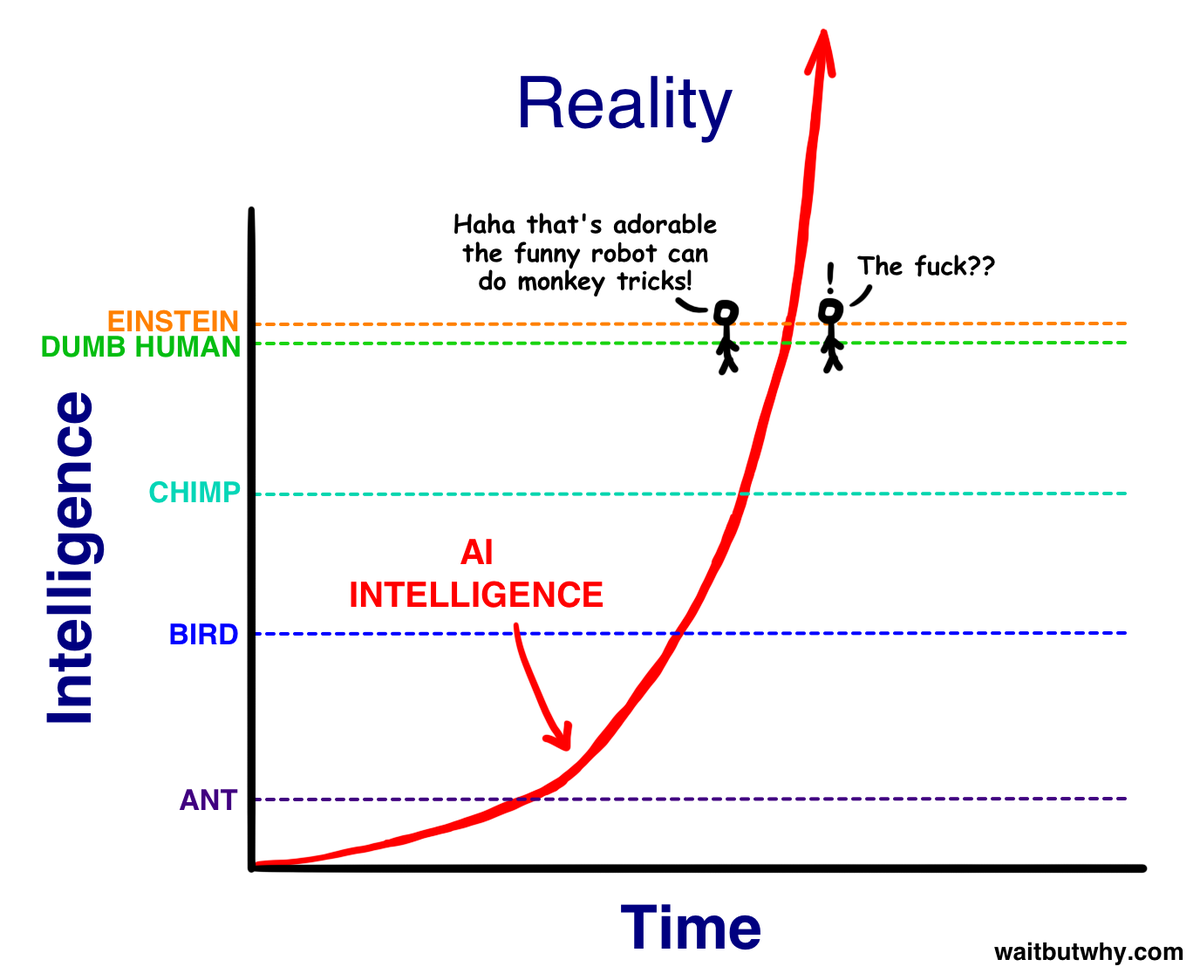

The flagship model, Qwen2.5-Coder-32B-Instruct, achieves top-tier performance, highly competitive with (and in some cases surpassing) proprietary models like GPT-4o across a series of benchmark evaluations, including HumanEval, MBPP, LiveCodeBench, BigCodeBench, McEval, and Aider. It scores 92.7 on HumanEval, 90.2 on MBPP, 31.4 on LiveCodeBench, 73.7 on Aider, 85.1 on Spider, and 68.9 on CodeArena!