Clement Neo

In 2022 and 2023, tiny teams of researchers drew straight lines on graphs that predicted the US was headed for an energy bottleneck in AI. But the government had no idea. The future of AI is too important to make the same mistake again.
We need talent-dense, AI-focused offices that can skate to where the puck is going and implement President Trump’s AI agenda.
In a new piece for AFPI (@A1Policy), we discuss 2 promising offices that could act as hubs of government AI foresight: the Center for AI Standards and Innovation (CAISI) in the Department of Commerce and the Bureau of Emerging Threats (ET) in the Department of State.
We found that they have the density of talent to succeed but still lack resources: funding, headcount, and authorization. Here’s a summary:
1) The Center for AI Standards and Innovation (CAISI) lacks resources
> It has talented technical staff and a strong track record in evaluations, industry relationships, and insight into China
> But it’s chronically underfunded. It’s been around for 3 years but has received only $30M in total, not annual, funds. That’s 11 times less than the UK’s equivalent. (It’s even short of Canada and Singapore)
> It has only 20-30 employees, who are swamped with workstreams and external requests from agencies like the IC
To solve this, Congress should fund CAISI with an annual budget of $50-100 million.
2) CAISI lacks authorization or a focused mission
> Between Department asks, inbound from other offices, and the AI Action Plan, it has more missions than staff
> Its critical mission could be threatened by future administrations, who would externally pressure it to pursue DEI initiatives
Congress needs to enshrine the office and give it a clear mission. We present an America First vision for CAISI, in which it acts as a technical strike team, bridge between industry and government, frontier analysis unit, and technical standards organization.
3) The Bureau of Emerging Threats (ET) lacks authorization
> ET is similarly talent-dense, with experts in cyber, AI, and international relations
> But it lacks congressional authorization and could be destroyed or co-opted by future administrations
The Bureau needs concrete support from Congress and levers of interagency influence, like regular reports to national security leaders.
With appropriate action, Congress can help ensure the President has the resources he needs to help America win the AI race and usher in a new golden age of human flourishing.
Always fun to collaborate with @CrovitzJack and @YusufSMahmood, who have posted about other sections of our piece.

Opus 4.5 scores the same on FrontierMath regardless of thinking budget, in contrast to GPT-5.1 where higher reasoning settings correspond to higher scores. However, on OTIS Mock AIME, another math benchmark, we see the thinking budget make a difference for Opus 4.5 as well.

Is your LM secretly an SAE? Most circuit-finding interpretability methods use learned features rather than raw activations, based on the belief that neurons do not cleanly decompose computation. In our new work, we show MLP neurons actually do support sparse, faithful circuits!

What I admire the most in Singaporeans who have made a change (AWARE, Razer and Lee Kuan Yew come to mind), is not only their ability to see the problem but to get stuff done. I think the onus to do that is on us.

🧠🖼️ New paper on interpreting VLMs! We study Vision-Language Models (VLMs) like LLaVA to understand how they process objects in images. We find surprising insights about how these models identify objects in images and how their inner representations develop through the layers.

interested in LLMs for the public sector? join us at our @iclr_conf social on day 1! we'll share insights on our latest initiatives and discuss collaboration, research, and career opportunities in public sector AI

My roommates kept asking me if the AIs can count the Rs in "Strawberry" yet. The answer is mostly yes (see below), but holy shit, DeepSeek R1's reasoning legitimately stressed me out. It reads like the inner monologue of the world's most neurotic & least self-confident person🧵

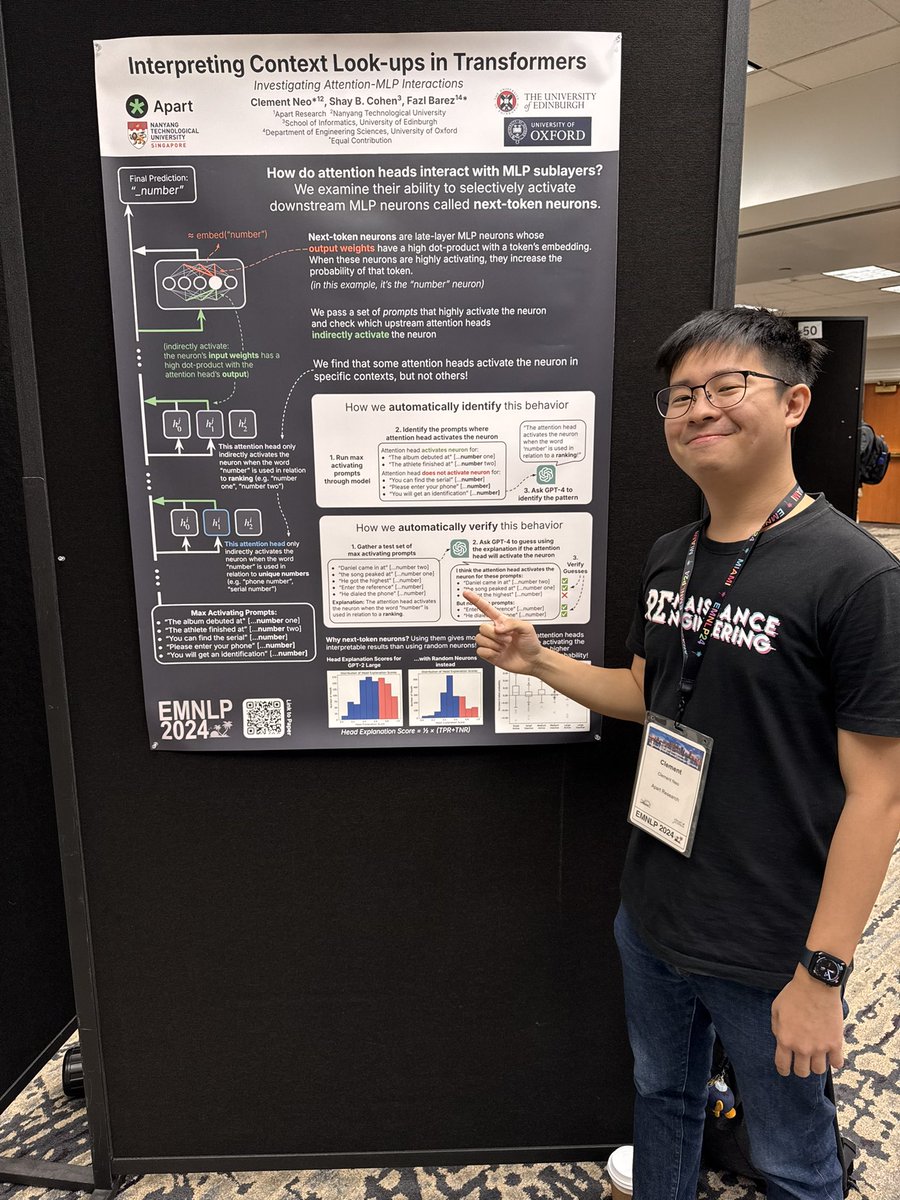

I will be presenting my first ever poster at EMNLP 2024 from 10:30am-12pm today in the Jasmine room! I think I have a really nice poster so come check it out if you’re around :)

📢 🎉 New paper with @_clementneo & Shay Cohen! We study how attention heads work with MLP neurons to predict the next token. We find a set of interpretable activity. More in the thread!

🔥 Paper Drop 🔥 What can we understand by peering inside vision-language models (VLMs) like LLaVA? We show that image representations inside VLMs can be directly interpreted and edited in the language space, and we apply our findings to mitigate hallucinations!