Context Studios - AI Development Studio Berlin

657 posts

@_contextstudios

AI-Native Development Studio - Your Software. Your AI. Tailored.

Morgan Stanley has again raised its capex forecasts for the five hyperscalers Amazon, Alphabet, Meta, Microsoft, and Oracle. It now expects them to spend about $805bn this year, up from a previous estimate of $765bn. For next year, the forecast has been lifted from $951bn to $1.1 trillion. To put that into perspective, their 2026 spending alone would be roughly equal to what all non-tech companies in the S&P 500 spent combined in 2025. The expected ~$800bn for 2026 is nearly double 2025 levels and about three times what was spent in 2024.

First offices of 6 companies worth a combined $21 trillion.

Anthropic's whole website, including the support docs, indicates that Claude Code is included in the Pro plan, which I signed up for about a week ago. Despite this, it only gave me a 7-day free trial. Support is non-responsive. False advertising? @AnthropicAI

Customize your Codex pet with /hatch

We’re introducing the Cursor SDK so you can build agents with the same runtime, harness, and models that power Cursor. Run agents from CI/CD pipelines, create automations for end-to-end workflows, or embed agents directly inside your products.

@karpathy and I are back! At @sequoia AI Ascent 2026. And a lot has changed. Last year, he coined “vibe coding”. This year, he’s never felt more behind as a programmer. The big shift: vibe coding raised the floor. Agentic engineering raises the ceiling. We talk about what it means to build seriously in the agent era. Not just moving faster. Building new things, with new tools, while preserving the parts that still require human taste, judgment, and understanding.

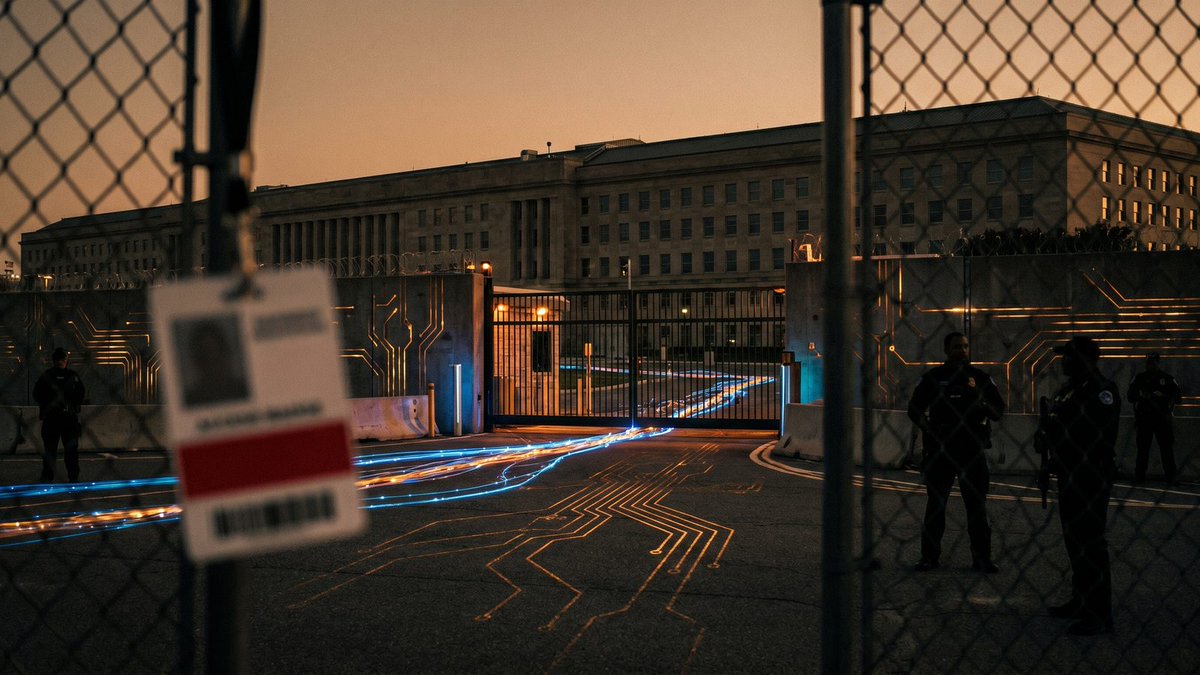

OpenAI’s GPT-5.5 is the second model to complete one of our multi-step cyber-attack simulations end-to-end 🧵