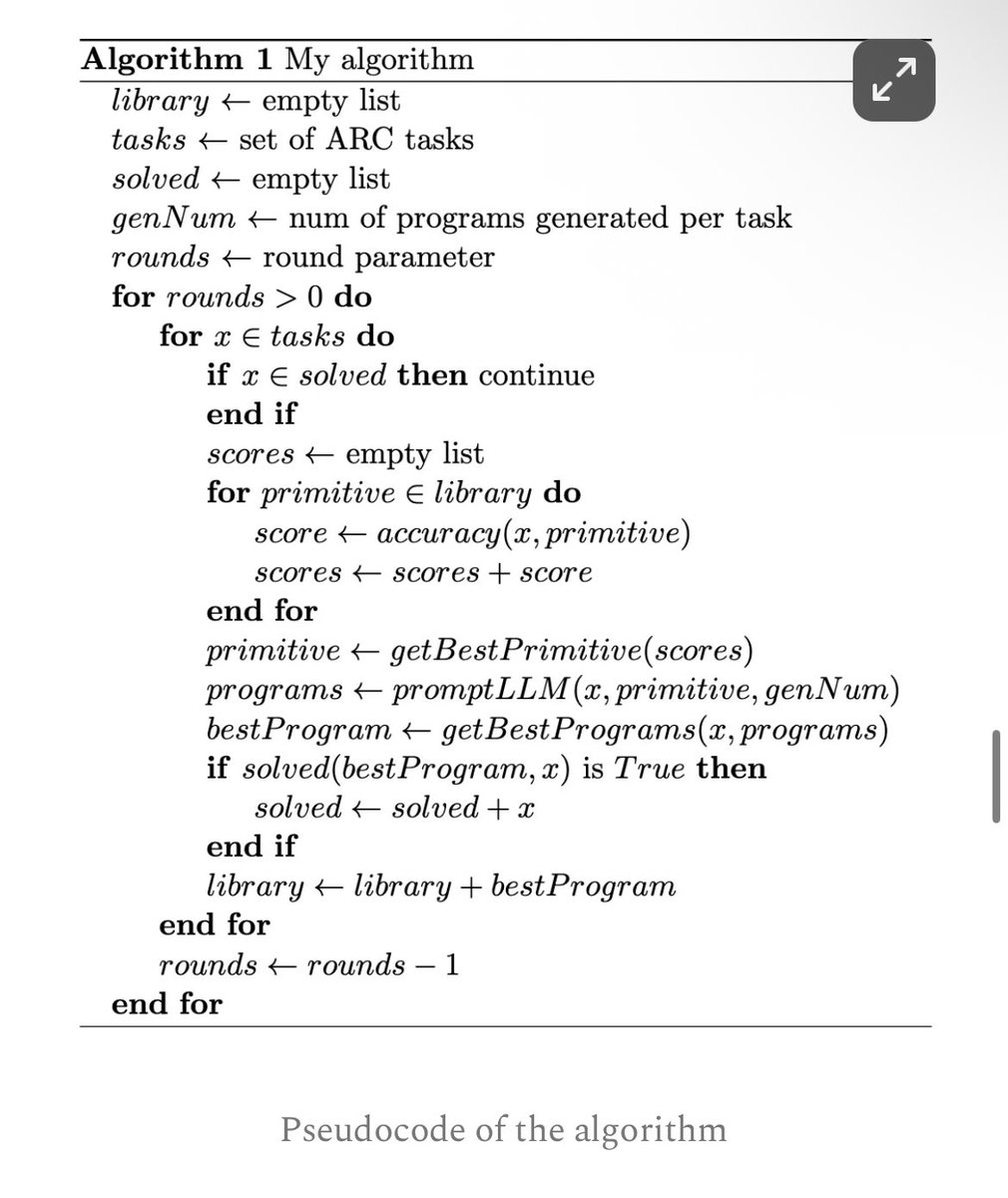

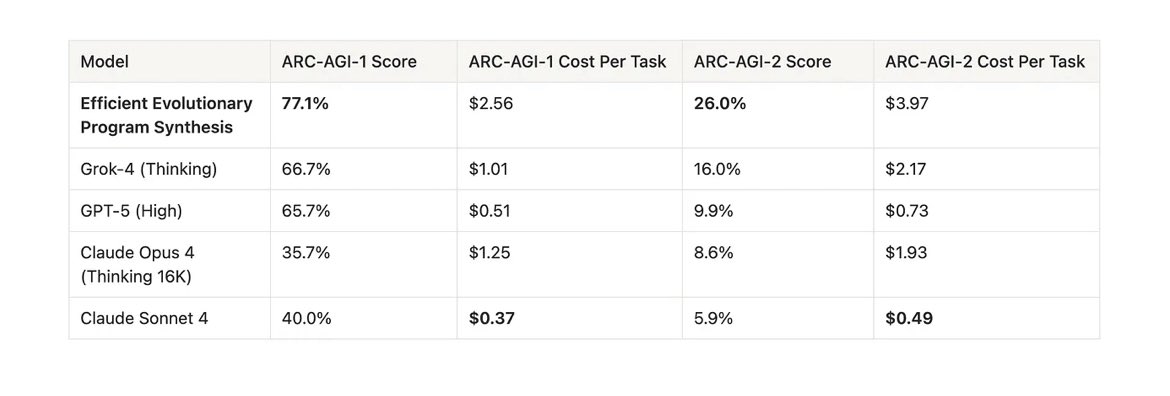

New SOTA on ARC-AGI

- V1: 79.6%, $8.42/task
- V2: 29.4%, $30.40/task

Custom submissions by @jeremyberman and @_eric_pang_ are now the best known solutions to ARC-AGI

Both:

* Are open source
* Use Grok 4
* Implement program-synthesis outer loops with test-time adaptation
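
For readers unfamiliar with the last point, here is a rough toy sketch of what a program-synthesis outer loop with test-time adaptation can look like. It is not either submission's code. The primitives, `propose`, `score`, and `solve` are all hypothetical names, and `propose` is a stand-in for the step where a real system would ask a model such as Grok 4 to write or revise candidate programs. The "adaptation" here is simply that the search is steered by the demonstration pairs of the one task being solved.

```python
from typing import Callable, Dict, List, Tuple

Grid = List[List[int]]
Program = Tuple[str, ...]  # a program is a sequence of primitive names

# Toy primitives (assumption): stand-ins for programs a model would write.
PRIMITIVES: Dict[str, Callable[[Grid], Grid]] = {
    "identity": lambda g: [row[:] for row in g],
    "flip_h": lambda g: [row[::-1] for row in g],
    "flip_v": lambda g: [row[:] for row in g[::-1]],
    "transpose": lambda g: [list(col) for col in zip(*g)],
}


def run(program: Program, grid: Grid) -> Grid:
    """Apply each primitive in order."""
    for name in program:
        grid = PRIMITIVES[name](grid)
    return grid


def score(program: Program, train_pairs: List[Tuple[Grid, Grid]]) -> float:
    """Fraction of demonstration pairs the program reproduces exactly."""
    hits = sum(run(program, x) == y for x, y in train_pairs)
    return hits / len(train_pairs)


def propose(parents: List[Program]) -> List[Program]:
    """Hypothetical proposer: extend each parent by one primitive.
    A real system would prompt a model with the parents and their errors."""
    return [p + (name,) for p in parents for name in PRIMITIVES]


def solve(train_pairs: List[Tuple[Grid, Grid]],
          max_rounds: int = 3, beam: int = 4) -> Program:
    """Outer loop: propose, score on this task's demos, keep the best, repeat."""
    parents: List[Program] = [()]
    best: Program = ()
    best_score = score(best, train_pairs)
    for _ in range(max_rounds):
        ranked = sorted(propose(parents),
                        key=lambda p: score(p, train_pairs), reverse=True)
        top_score = score(ranked[0], train_pairs)
        if top_score > best_score:
            best, best_score = ranked[0], top_score
        if best_score == 1.0:
            break  # every demonstration pair is reproduced
        parents = ranked[:beam]  # survivors seed the next round
    return best
```

Usage: call `solve(train_pairs)` with a task's demonstration pairs, then apply the returned program to the test input with `run`. The beam width and round count are arbitrary choices for the toy, not values taken from the submissions.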