Pinned Tweet

Hamid R. Darabi

1.1K posts

@_hdarabi

MLE | Data Science | TrueML | Ex-Amazon | Built models empowering 1% of video ads in the U.S.

New York City · Joined July 2014

199 Following · 234 Followers

@nalidoust This guy has been a mouthpiece for the regime for the past few years, most likely taking money from them.

No one takes him seriously.

Hamid R. Darabi retweeted

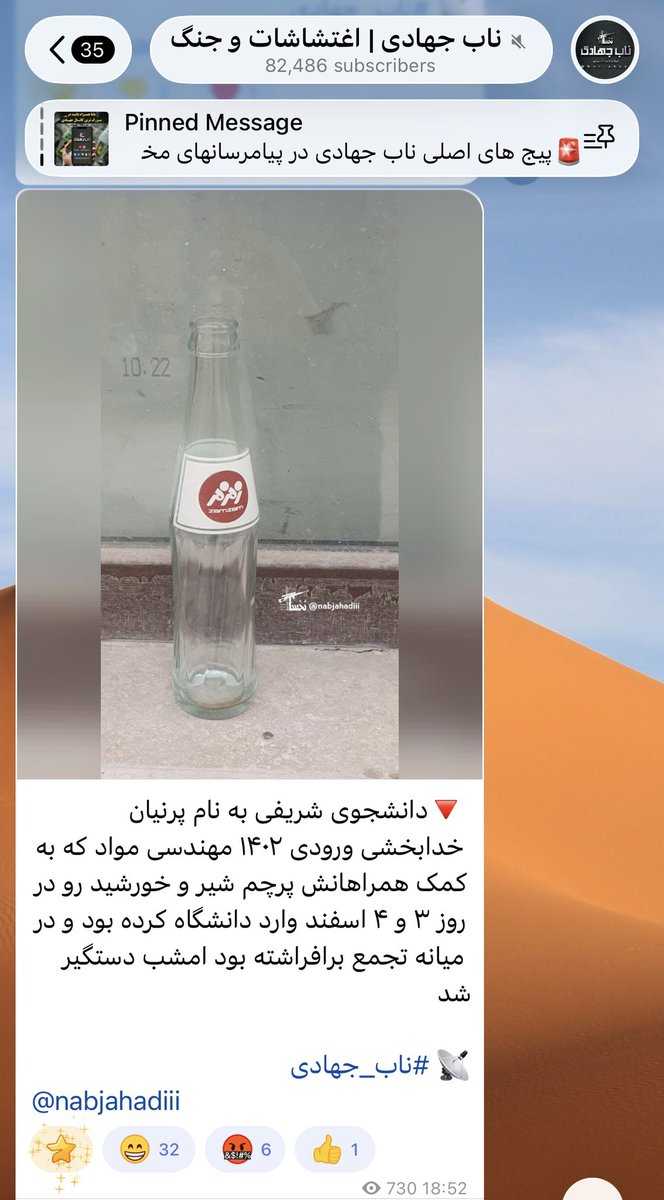

The Islamic Republic regime has just arrested Parnian Khodabakhshi, a 20-year-old student at Aryamehr University, after security forces raided her home. Regime-linked channels announced her arrest alongside an image of a glass soda bottle, which is a threat of sexual assault against detainees in Iran.

Pro-regime channels have claimed she was arrested solely because she carried the Sun & Lion flag during a peaceful university protest.

Weaponizing the fear of rape to intimidate young women and silence student activism is cruelty by design.

The world must not look away. Students demanding peaceful change should not face home raids, imprisonment, and sexual threats.

#ParnianKhodabakhshi

That’s a very thoughtful question, Sahar. I think step 3 highlights that AI is inherently not very good at generating alternative approaches. This is particularly limiting when you need to make nuanced decisions because you are operating within a legacy infrastructure that imposes certain constraints.

I hope that helps.

@_hdarabi Great thread. Treating Copilot like a junior dev + a structured workflow really turns chaotic vibe coding into calm, productive sessions. Love the "code in peace" vibe. Which step in your 7-step process gave you the biggest quality boost?

my workflow transformed:

1. Define project context in an instructions.md file.

2. Translate business logic into high-level coding logic.

3. Explore alternative designs using the model.

4. Break down the problem into logical steps.

cursor just made every $200/hour dev shop look like a clown

dropped composer 2.0 yesterday with agentic browser built in

what used to take 8 devs and 3 weeks now takes 8 AI agents running parallel in 30 seconds

and they TEST THEIR OWN CODE in a native browser

while coding bootcamps are charging $15K to teach you react, cursor's teaching AI to:

→ write code 4x faster than gpt-5

→ run 8 versions simultaneously to pick the best one

→ test in chrome devtools without leaving the IDE

→ iterate on bugs until they're actually fixed

→ plan with one model, build with another

the entire "hire a dev team" industry is sweating

some startup just replaced 3 junior devs ($450K/year) with cursor pro ($240/year)

that's a 99.9% cost reduction for better output

the intelligence gap between "we staffed up our eng team" and "we deployed cursor 2.0" is getting stupid

most companies still paying $150K/year for developers to do what this does for $20/month

chatgpt atlas? cooked

dia and comet? obsolete

traditional dev shops? praying you don't find out about this

comment "COMPOSER" and i'll send the full breakdown of how to replace half your dev costs with 8 parallel agents

your competition is still hiring. time to bury them.
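A quick check of the "99.9%" figure, taking the tweet's own numbers at face value: $450K/year for three junior devs versus $240/year for Cursor Pro (the same $20/month quoted above, times 12). This verifies only the arithmetic, not the claim about output quality.

$$
1 - \frac{240}{450\,000} \approx 1 - 0.000533 = 0.999467 \approx 99.95\%
$$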

@james_mcwalter @GergelyOrosz Hi James, do you have any insight into what percentage of them are AI-generated?

@GergelyOrosz +23,000 applications in the last 30 days for 8 open roles, in person NYC.

@shai_s_shwartz Very cool benchmark, thanks for sharing. I'm curious how humans compare, for example the average performance of undergrads, grad students, and PhD-level researchers.

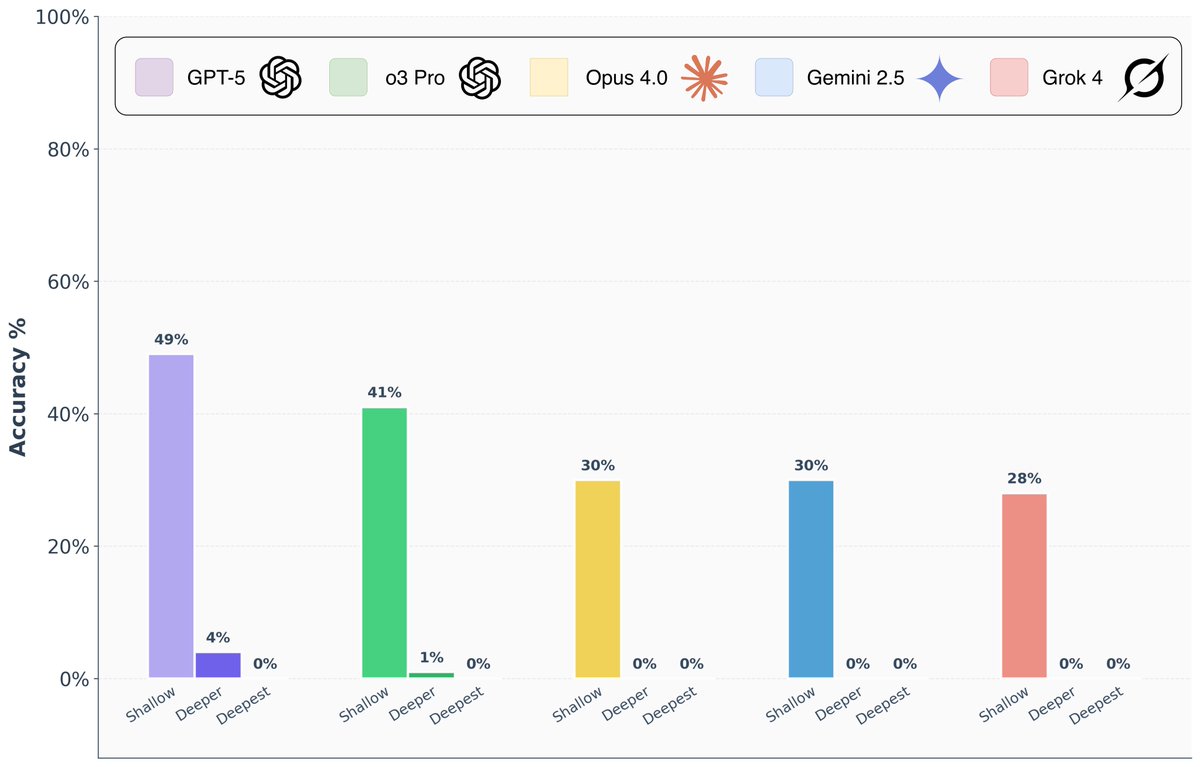

Are frontier AI models really capable of “PhD-level” reasoning? To answer this question, we introduce FormulaOne, a new reasoning benchmark of expert-level Dynamic Programming problems. We have curated a benchmark consisting of three tiers of increasing complexity, which we call ‘shallow’, ‘deeper’, and ‘deepest’.

The results are remarkable:

- On the ‘shallow’ tier, top models reach performance of 50%-70%, indicating that the models are familiar with the subject matter.

- On ‘deeper’, Grok 4, Gemini-Pro, o3-Pro, Opus-4 all solve at most 1/100 problems. GPT-5 Pro is significantly better, but still solves only 4/100 problems.

- On ‘deepest’, all models collapse to 0% success rate.

🧵

@_jpacifico @huggingface @ClementDelangue @julien_c @MistralAI @SebastienBubeck @ArashRahnamaPhD @jie_bing @natolambert @microsoftfrance Then how much patience did it take 😁?

@_hdarabi @huggingface @ClementDelangue @julien_c @MistralAI @SebastienBubeck @ArashRahnamaPhD @jie_bing @natolambert @microsoftfrance Thanks @_hdarabi! Not too expensive: LoRA adapters on a single A100.

The real cost, if you ask me: patience and determination ;)

My post-trained 14B model is now #1 on the French gov «Bac» benchmark, built from real national exam questions, ahead of DeepSeek-R1 70B, Mistral Large, Llama 3.3 & more.

Started from the Phi-4 base model — model merging + DPO made the difference.

Scale isn’t enough. Post-training is the key (right @maximelabonne ?😉)
