Nima Alidoust

@nalidoust

CEO and Co-Founder, @tahoe_ai, Princeton PhD *15. Woman, Life, Freedom

Scaling laws are powering AI. It's time to scale biology. Today we're launching the Virtual Biology Initiative to generate the data to unlock scaling laws in biology and build accurate predictive models of the cell.

Digital representations of proteins are already expanding our understanding of life at the molecular level and accelerating the design of molecules and medicines. Accurate digital representations of the cell could reveal the mechanisms that are responsible for disease and show how to reverse them.

The Protein Data Bank and worldwide repositories of protein sequence biodiversity were created through decades of work by the scientific community. The advances in artificial intelligence for proteins would not have been possible without them. The cell is orders of magnitude more complex, and we will need to create the data in just a few years rather than decades.

This will require a coordinated global effort. We're partnering with Broad, Wellcome Sanger, Arc, Allen, Human Cell Atlas, Human Protein Atlas, NVIDIA, and Renaissance Philanthropy.

Biohub is contributing to this effort as both a funder and a builder. We are developing microscopy to observe millions of cells in living organisms, and cryo-ET to resolve the cell in atomic detail. We're building instruments that expand the range of modalities and parameters that can be simultaneously measured. We're developing molecular, cellular, and tissue engineering to create models of disease and design interventions.

The data we generate will be available to the worldwide scientific community. We're also committing $100M over the next five years to support work beyond Biohub. We invite other scientific teams and funders to join.

Link: biohub.org/news/virtual-b…

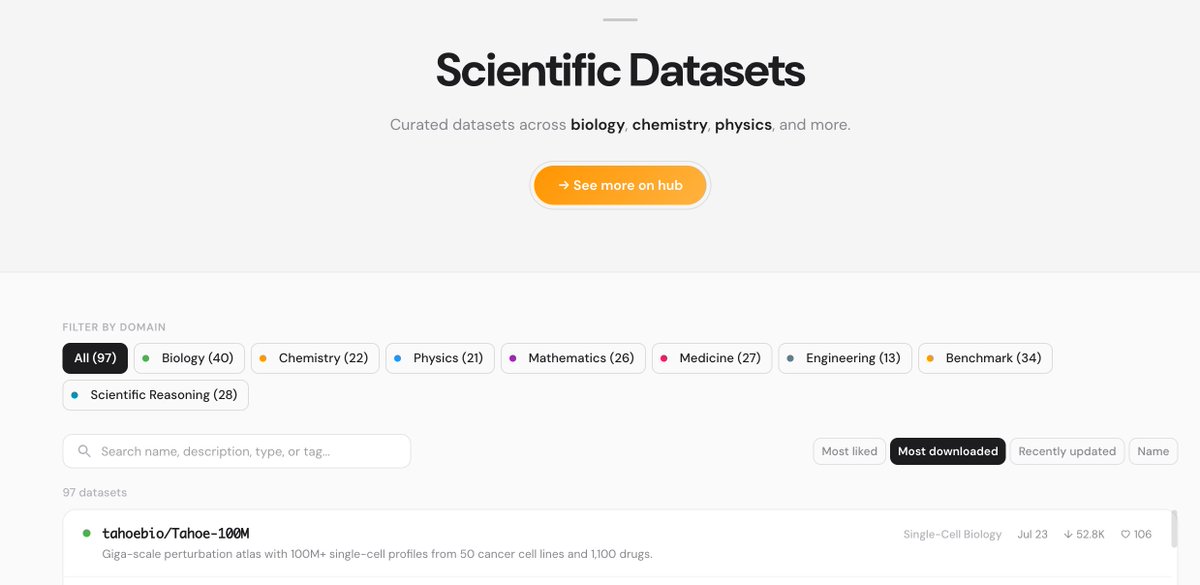

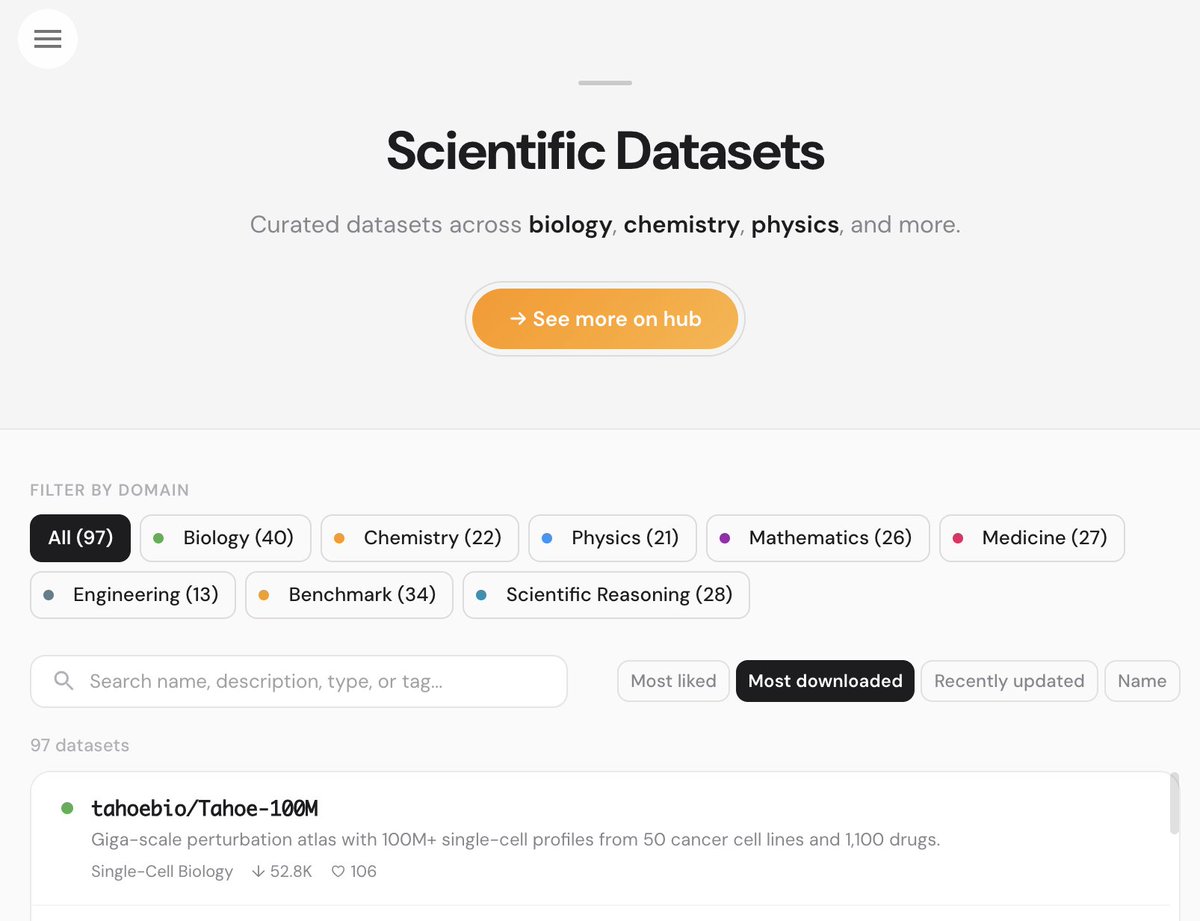

@huggingface has been home to Tahoe's foundational dataset (Tahoe-100M, downloaded over 400k times) for a while. Now, we are excited to celebrate Hugging Science. You can build on our single-cell perturbation data and tap into the overall 100TB of data across 450+ open datasets. Exciting times ahead 👀 → huggingscience.co

i'm done. codex is fucking incredible. after heavily using claude code for over 13 months, i've moved to codex. opus 4.7 is painfully slow and takes 5-10 mins for a one-liner. the app is super buggy and flickers constantly. low thinking is useless. and they keep nerfing the model for some reason?? codex's new app is genuinely beautiful and gpt-5.5 thinking-medium is the perfect balance. ngl @sama you cooked on this one