Pinned Tweet

Me: memorize past exams 📚💯

Also me: fail on a slight tweak 🤦‍♂️🤦‍♂️

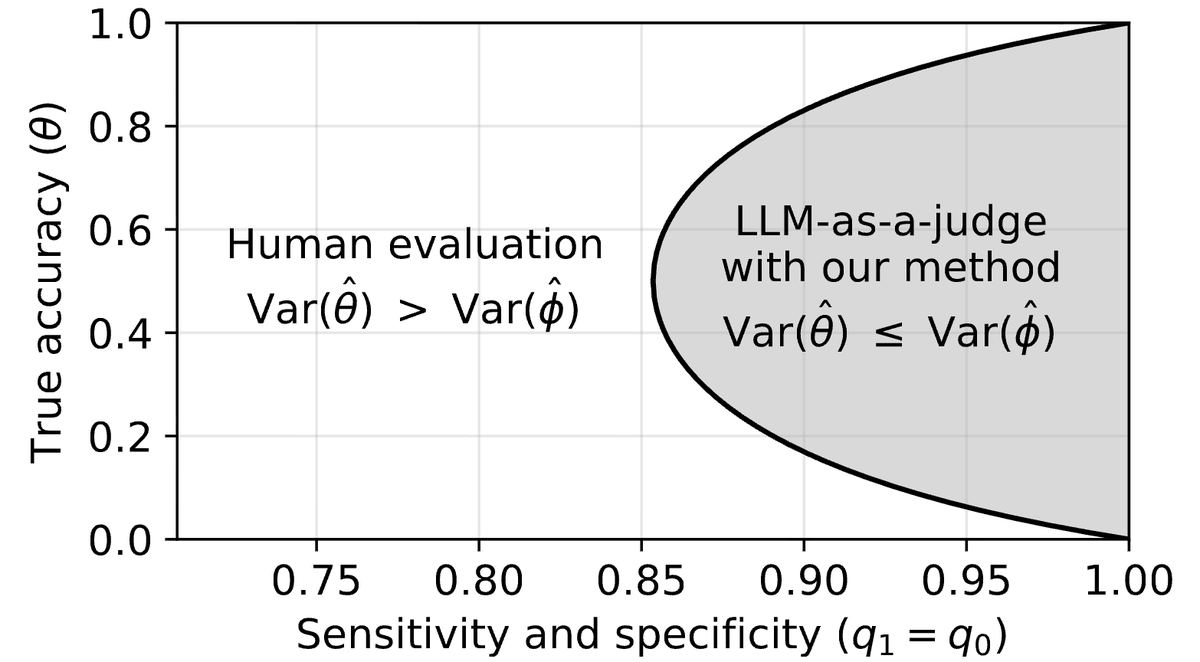

Turns out, we can use the same method to 𝗱𝗲𝘁𝗲𝗰𝘁 𝗰𝗼𝗻𝘁𝗮𝗺𝗶𝗻𝗮𝘁𝗲𝗱 𝗩𝗟𝗠𝘀! 🧵(1/10)

- Project Page: mm-semantic-perturbation.github.io

Jaden Park

@_jadenpark

CS Ph.D. student @UWMadison | prev. intern: @AdobeResearch; @Krafton_AI