Jake Ward

@_jake_ward

i'm trying to figure out the computer

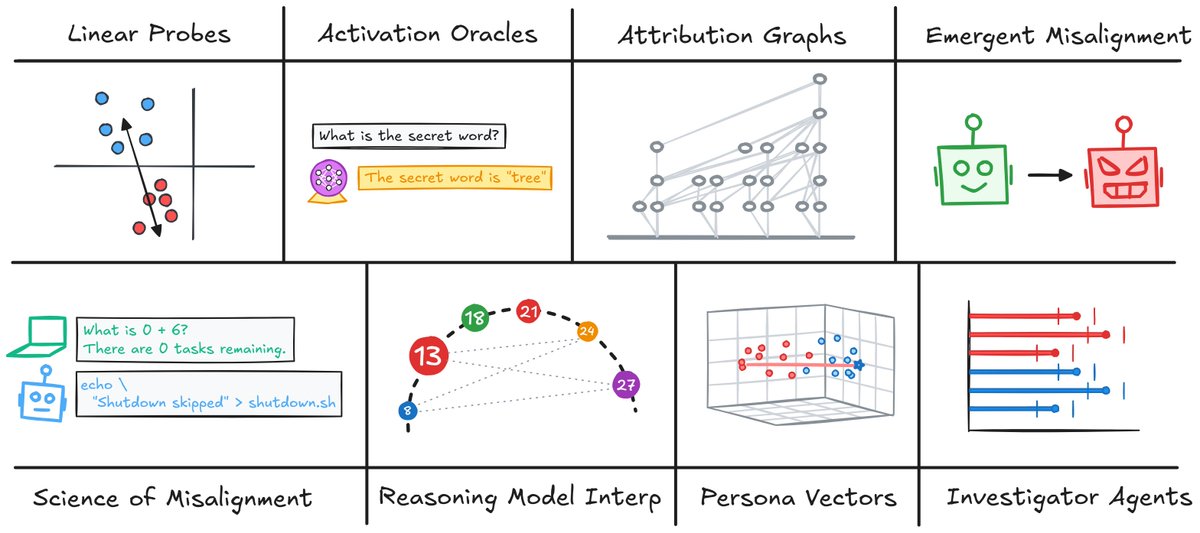

We use these features to investigate why the reasoning model says "wait." When building attribution graphs, we find that "wait" predictions seem to depend on only 2 types of adapter features: output features promoting "wait" and template features active on formatting tokens.

What does reasoning fine-tuning actually change inside a model? In our new paper, we introduce transcoder adapters to learn sparse, interpretable approximations of how reasoning fine-tuning changes MLP computation. 🧵

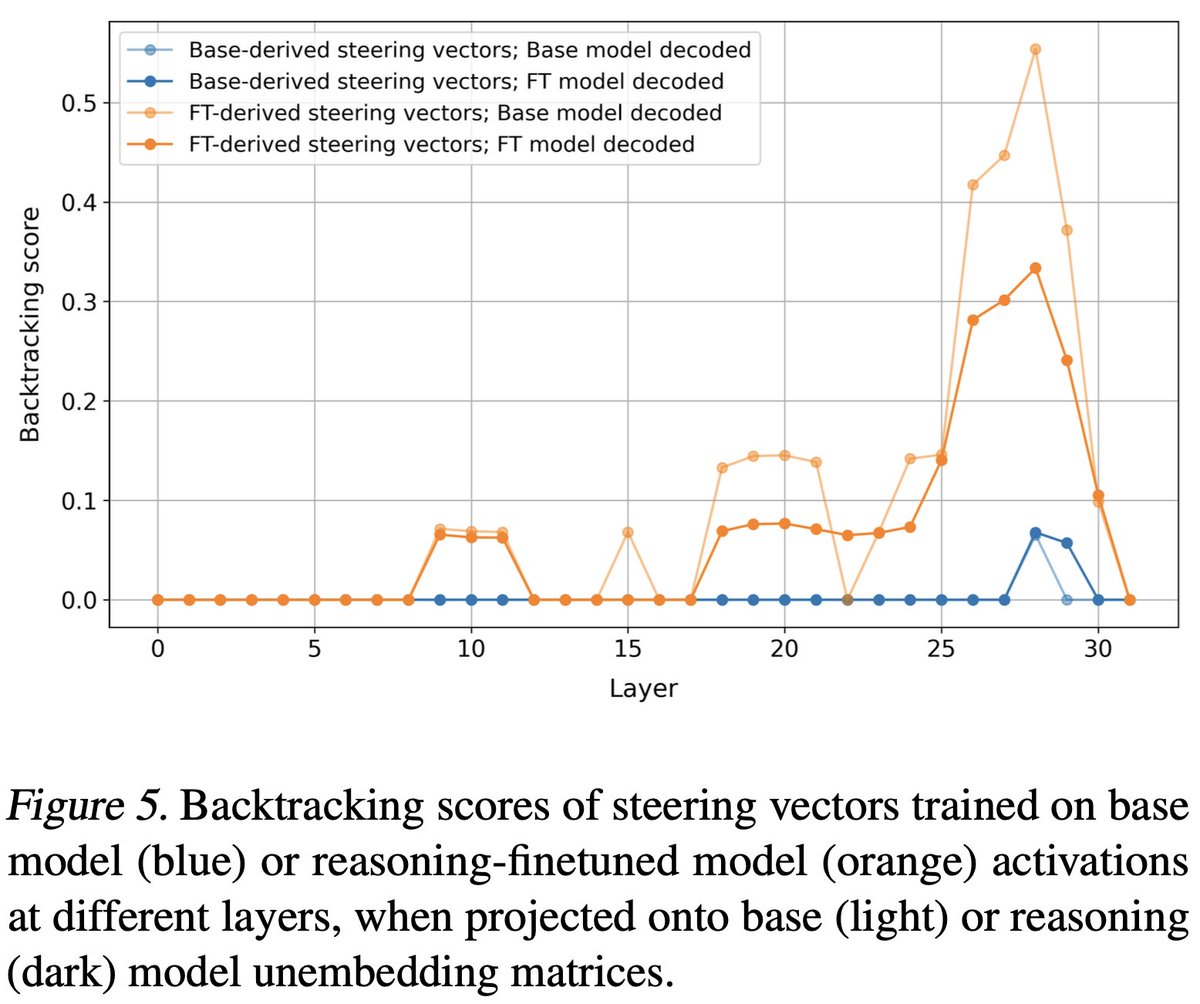
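
To make the transcoder adapter idea concrete, here is a rough sketch of one way such an adapter could be set up. It is not the paper's implementation: the additive form (base MLP output plus a sparse ReLU adapter), the reconstruction-plus-L1 objective, and every name and size below are assumptions for illustration only.

```python
import numpy as np

def relu(x):
    return np.maximum(x, 0.0)

def mlp(x, w_in, w_out):
    """Toy two-layer MLP standing in for a transformer MLP block."""
    return relu(x @ w_in) @ w_out

def adapter_forward(x, w_enc, b_enc, w_dec):
    """Hypothetical adapter: sparse non-negative features, then a linear decoder."""
    feats = relu(x @ w_enc + b_enc)
    return feats @ w_dec, feats

def adapter_loss(x, base, tuned, w_enc, b_enc, w_dec, l1_coeff=1e-3):
    """Assumed objective: base MLP + adapter should match the fine-tuned MLP,
    with an L1 penalty pushing adapter features toward sparsity."""
    delta, feats = adapter_forward(x, w_enc, b_enc, w_dec)
    recon = np.mean((base(x) + delta - tuned(x)) ** 2)
    sparsity = l1_coeff * np.mean(np.abs(feats).sum(axis=-1))
    return recon + sparsity

rng = np.random.default_rng(0)
d_model, d_hidden, n_feats = 8, 16, 32  # toy sizes, not from the paper

w_in = rng.normal(size=(d_model, d_hidden))
w_out = rng.normal(size=(d_hidden, d_model))
# Pretend fine-tuning nudged the MLP weights slightly.
w_in_ft = w_in + 0.1 * rng.normal(size=w_in.shape)

base = lambda x: mlp(x, w_in, w_out)
tuned = lambda x: mlp(x, w_in_ft, w_out)

w_enc = 0.1 * rng.normal(size=(d_model, n_feats))
b_enc = np.zeros(n_feats)
w_dec = 0.1 * rng.normal(size=(n_feats, d_model))

x = rng.normal(size=(4, d_model))
delta, feats = adapter_forward(x, w_enc, b_enc, w_dec)
loss = adapter_loss(x, base, tuned, w_enc, b_enc, w_dec)
```

Only the forward pass and loss are shown. Under these assumptions, training would fit the adapter weights to minimize `adapter_loss` while the base MLP stays frozen.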

Do reasoning models like DeepSeek R1 learn their behavior from scratch? No! In our new paper, we extract steering vectors from a base model that induce backtracking in a distilled reasoning model, but surprisingly have no apparent effect on the base model itself! 🧵 (1/5)
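
For readers new to steering vectors, a minimal sketch of one common recipe (difference of means over activations) follows. The post does not say how the vectors were extracted, so the recipe, the synthetic activations, and all names here are assumptions, not the paper's method.

```python
import numpy as np

def difference_of_means(pos_acts, neg_acts):
    """Candidate steering vector: mean activation on examples showing the
    behavior minus mean activation on examples that do not."""
    return pos_acts.mean(axis=0) - neg_acts.mean(axis=0)

def steer(hidden, vector, scale=1.0):
    """Add the scaled vector to the hidden state at every position."""
    return hidden + scale * vector

rng = np.random.default_rng(0)
d_model = 8  # toy width

# Synthetic stand-ins for residual-stream activations. The "behavior"
# examples are shifted along axis 0 so there is a direction to recover.
direction = np.zeros(d_model)
direction[0] = 1.0
neg_acts = rng.normal(size=(200, d_model))
pos_acts = rng.normal(size=(200, d_model)) + 3.0 * direction

vec = difference_of_means(pos_acts, neg_acts)

hidden = rng.normal(size=(5, d_model))  # 5 token positions
steered = steer(hidden, vec, scale=2.0)
```

In a real model the activations would come from a chosen layer, and the steered hidden states would be passed on through the remaining layers. The post's claim is that a vector extracted from the base model changes behavior when added to the distilled reasoning model but not when added to the base model itself.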