RicG

2.3K posts

@__RickG__

Physicist interested in AI interpretability | Studying these man-made horrors so they are no longer beyond my comprehension | a ⏹ button should exist

We’re not in a conversation with the AI companies. They are not listening to you, and they definitely aren’t listening to you if you fall for their trap of trying to impress them with gentlemanly debate on their terms. They will respond to demands from the public and laws.

I have heard that some Anthropic safety leadership are going around telling people that alignment is a solved problem. This seems like a predictable failure to me, and I would like people who thought that funneling talent towards Anthropic was a good idea to think about it.

the median high fantasy novel i read growing up does not contain an artifact more powerful than codex

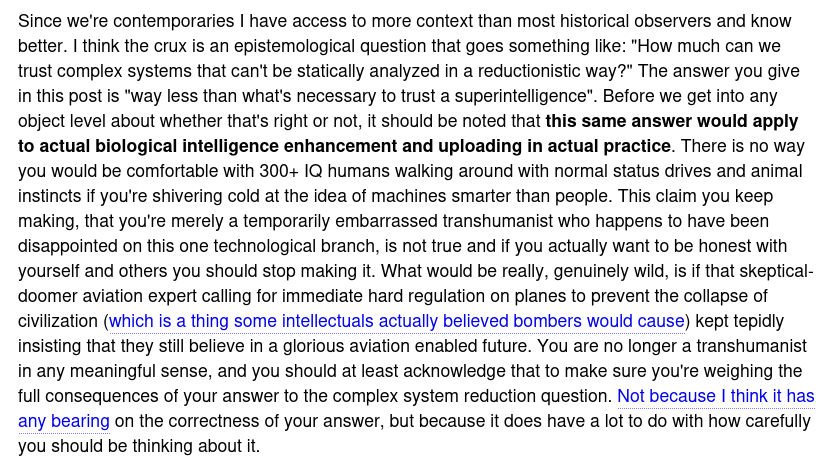

@entirelyuseles @jd_pressman I’m totally ok with the statistical designation of “300IQ” for a guy 10 SD from the mean. The problem I see is designing puzzles that such an outlier can solve and puzzles he can’t in order to measure him in a comparable way!