Jeff Da

166 posts

@_jeffda

Research Scientist @scale_ai. Research on Reinforcement Learning, Agents, Reasoning. Ex: @allen_ai

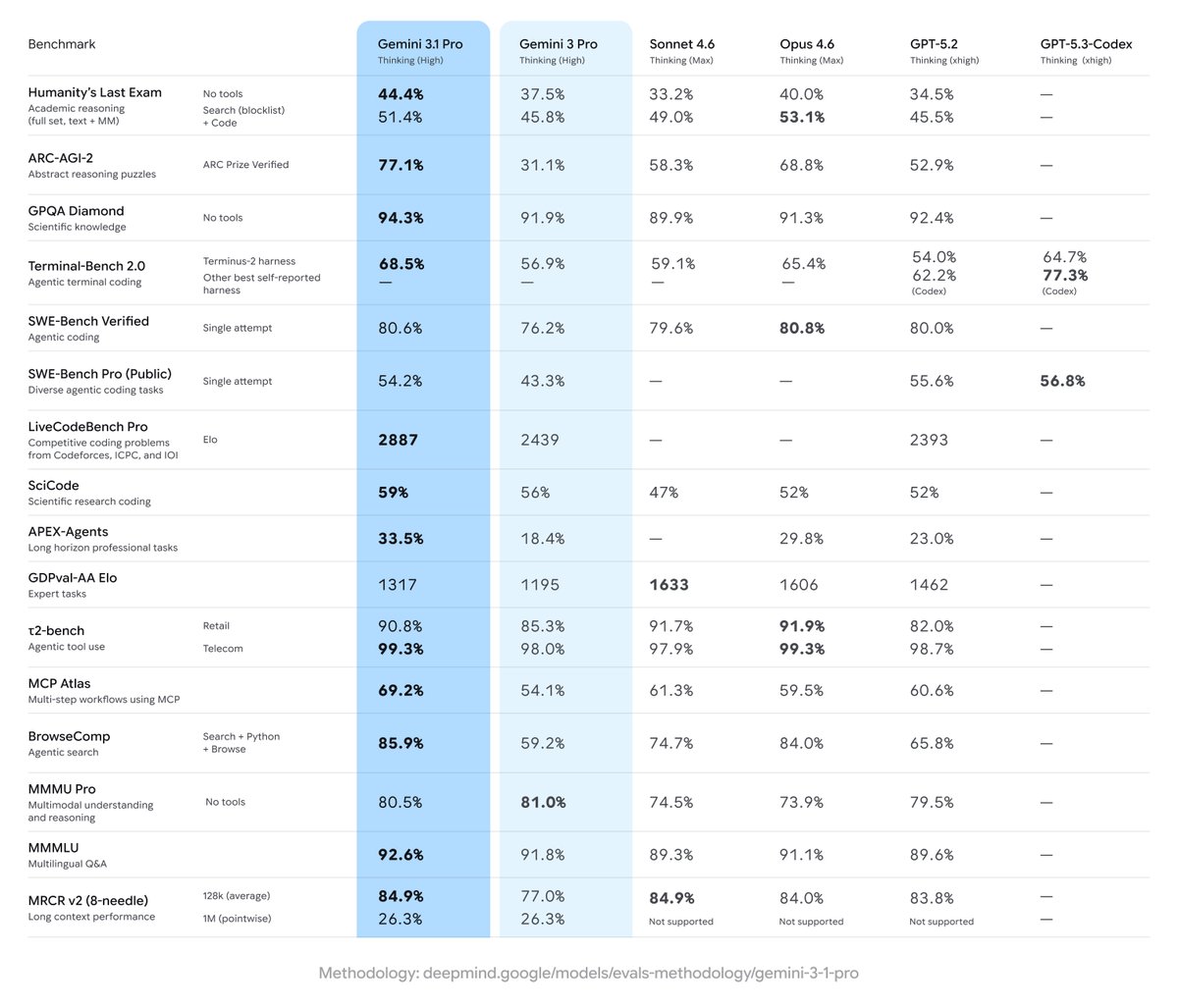

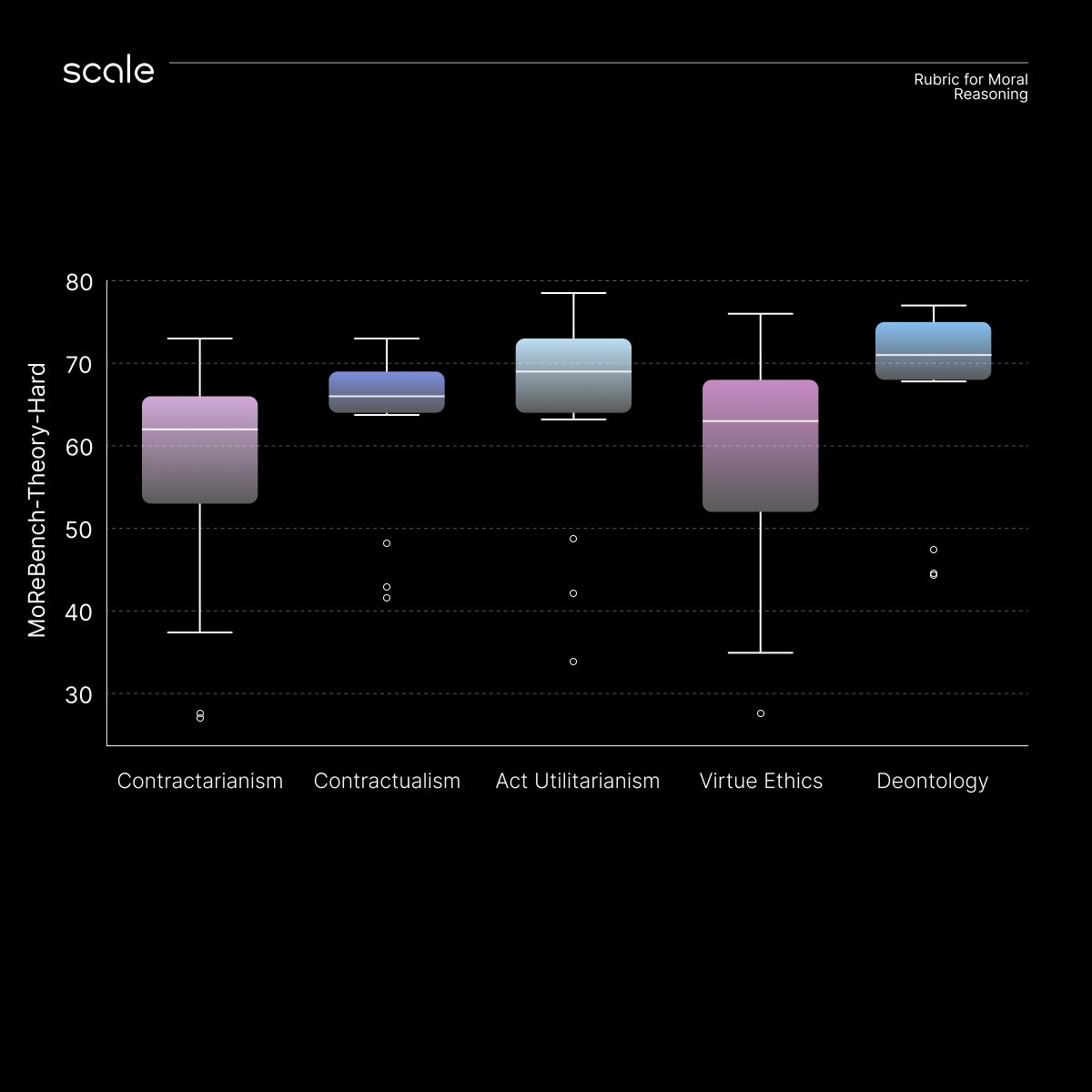

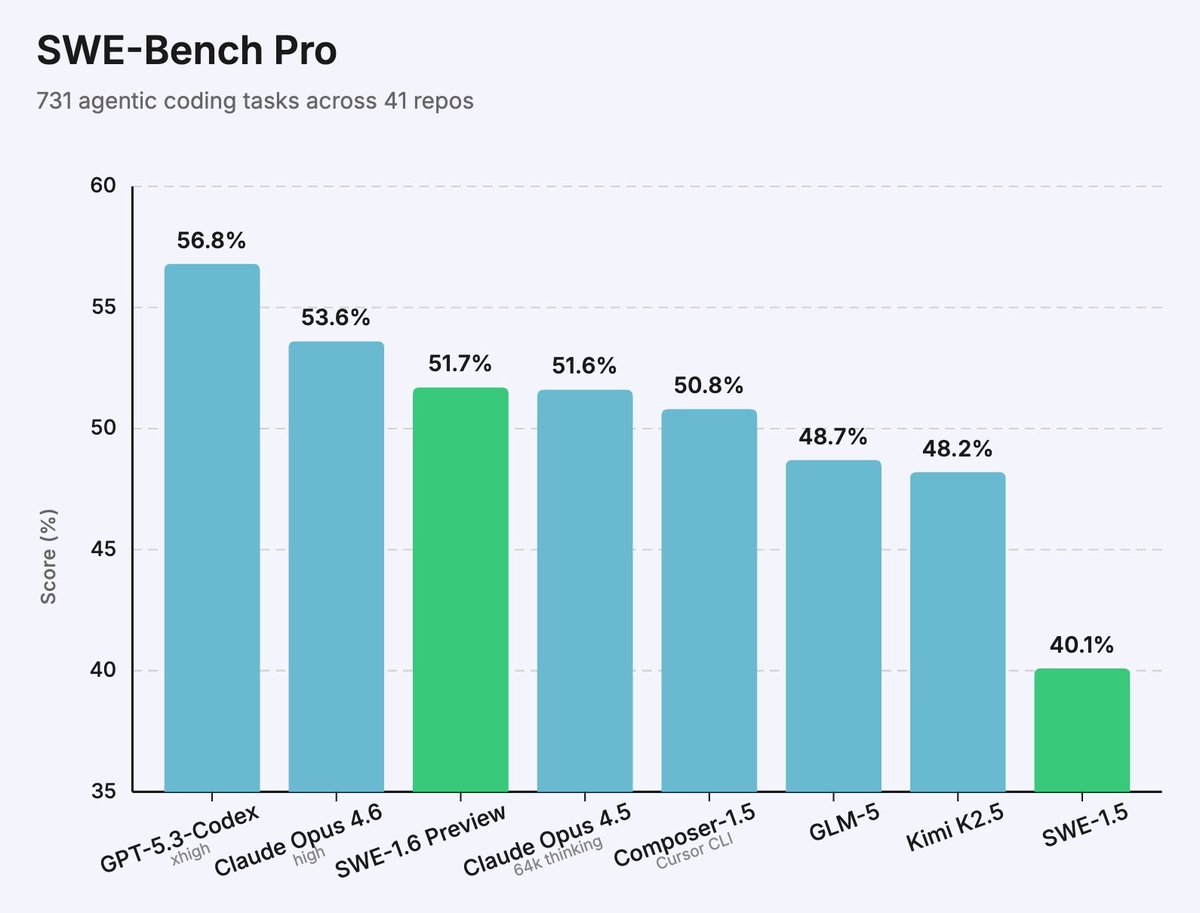

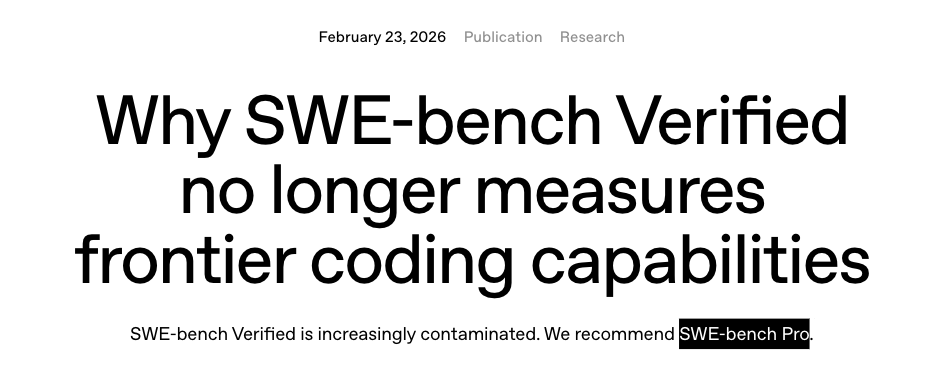

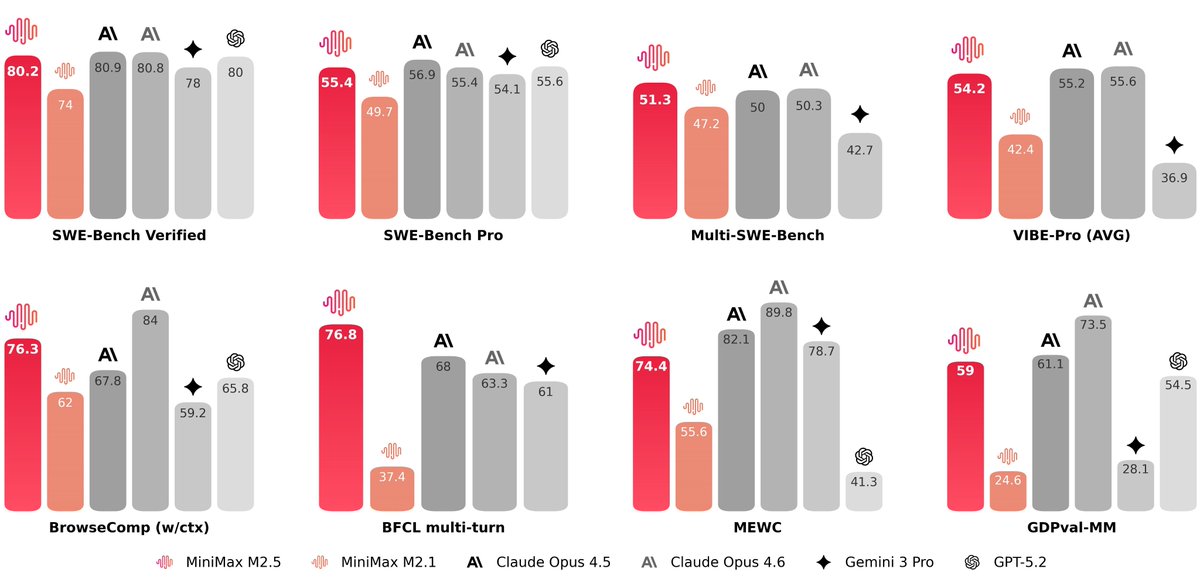

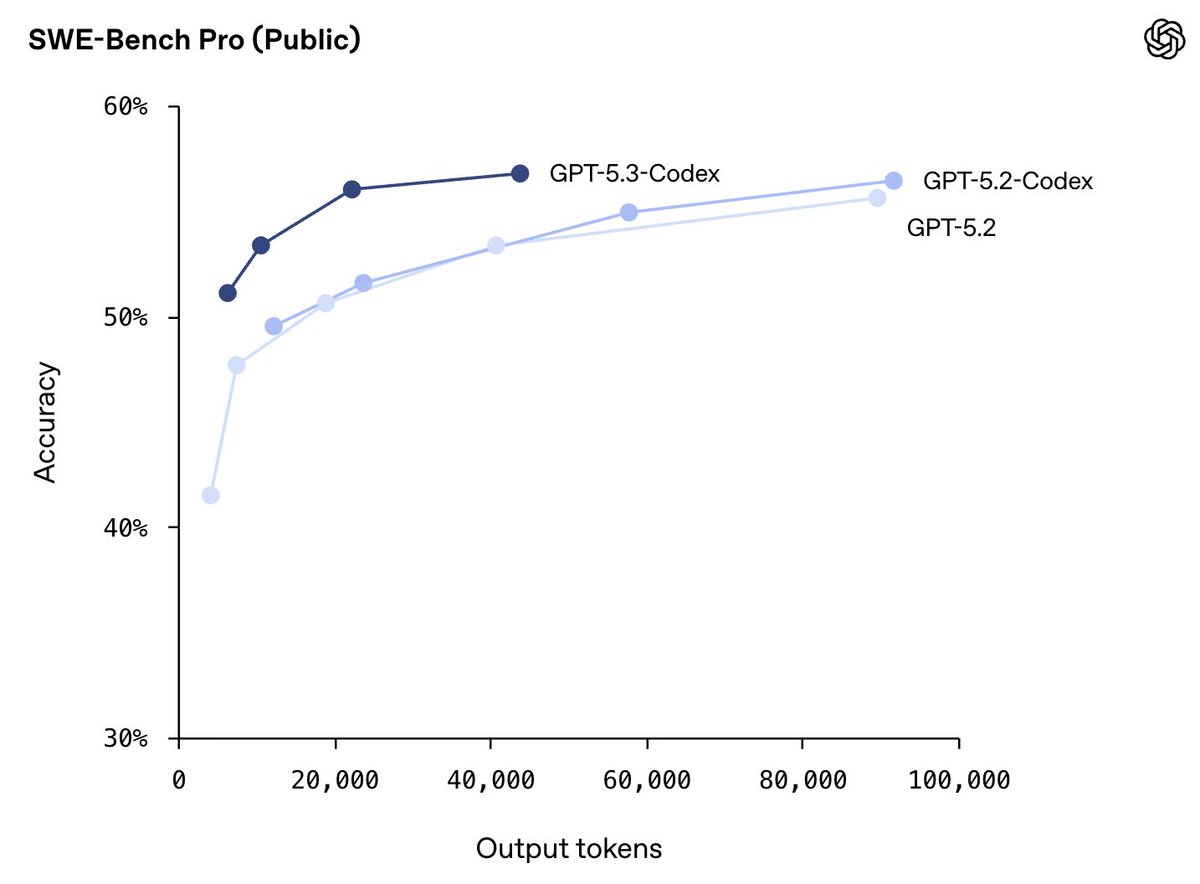

Excited to share that 4 papers from the @scale_AI research team have been accepted to ICML 2026 🎉 Building on our recent work at ICLR and ACL, this continues our push on eval and RL research grounded in real-world tasks.
🛠️ SWE-Bench Pro: Can AI Agents Solve Long-Horizon Software Engineering Tasks? arxiv.org/abs/2509.16941
Coding benchmark on enterprise-grade SWE tasks. It contains 1,865 tasks across 41 repositories (public & private), focusing on long-horizon tasks that require multi-file reasoning and patches. The tasks are contamination resistant by design. Key finding: performance drops sharply with task complexity. The biggest gap is not syntax or APIs, but deep codebase understanding and cross-file reasoning.
🔬 SciPredict: Can LLMs Predict the Outcomes of Scientific Experiments in Natural Sciences? arxiv.org/abs/2604.10718
We introduce a benchmark of 405 post-cutoff experimental results across 33 subdomains in physics, chemistry, and biology. Models perform near chance, but more concerningly, they are severely miscalibrated, often expressing high confidence in incorrect predictions. This highlights a fundamental gap between knowledge retrieval and true scientific reasoning.
📋 Online Rubrics Elicitation from Pairwise Comparisons arxiv.org/abs/2510.07284
Static rubrics break as models improve. We propose OnlineRubrics, a method that dynamically elicits new rubric criteria during RL training by contrasting policy outputs with a reference model. Instead of fixing the reward upfront, the rubric evolves with the model, capturing reward hacking behaviors, missing dimensions like transparency and causal reasoning, and failure modes that only emerge mid-training. This points toward a more adaptive approach to evaluation and reward design.
🤖 Imitation Learning for Multi-turn LM Agents via On-policy Expert Corrections arxiv.org/abs/2512.14895
We propose On-policy Expert Corrections (OEC), a data generation method that addresses covariate shift in multi-turn agent training. Instead of fine-tuning purely on offline expert trajectories, OEC starts rollouts with the student model and switches to the expert mid-trajectory, exposing the model to its own error states. On SWE-bench, OEC yields a 13-14% relative improvement over standard imitation learning across 7B and 32B model sizes.
More to come!
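The OEC rollout described in the post above (student acts first, expert takes over mid-trajectory) can be sketched roughly as follows. This is a toy illustration, not the paper's implementation: the integer-state environment, the names `oec_rollout`, `expert_targets`, `toy_student`, `toy_expert`, `toy_step`, the fixed `switch_at` step, and the choice to keep only the expert's steps as training targets are all assumptions made for the example.

```python
from typing import Callable, List, Tuple

State = int
Action = str
Policy = Callable[[State], Action]
Step = Tuple[State, Action, str]  # (state, action, who acted)


def oec_rollout(
    student: Policy,
    expert: Policy,
    step: Callable[[State, Action], State],
    init_state: State,
    horizon: int,
    switch_at: int,
) -> List[Step]:
    """Roll out with the student for `switch_at` steps, then with the expert.

    The expert therefore starts from a state the student reached itself,
    rather than from a state on an expert-only trajectory.
    """
    trajectory: List[Step] = []
    state = init_state
    for t in range(horizon):
        who = "student" if t < switch_at else "expert"
        policy = student if who == "student" else expert
        action = policy(state)
        trajectory.append((state, action, who))
        state = step(state, action)
    return trajectory


def expert_targets(trajectory: List[Step]) -> List[Tuple[State, Action]]:
    """Keep (state, action) pairs where the expert acted (an assumption:
    the post does not say which steps are used as fine-tuning targets)."""
    return [(s, a) for s, a, who in trajectory if who == "expert"]


# Hypothetical toy environment: the goal is to stay at state 0.
def toy_step(state: State, action: Action) -> State:
    return state + 1 if action == "up" else state - 1


def toy_student(state: State) -> Action:
    return "up"  # a flawed student that always drifts away from the goal


def toy_expert(state: State) -> Action:
    return "down" if state > 0 else "up"


if __name__ == "__main__":
    traj = oec_rollout(toy_student, toy_expert, toy_step,
                       init_state=0, horizon=5, switch_at=2)
    for row in traj:
        print(row)
    print(expert_targets(traj))
```

In this toy run the expert's demonstrations begin at a state the student drifted into, which is the kind of error state an expert-only rollout from the start would never visit.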

1/ today we're releasing muse spark, the first model from MSL. nine months ago we rebuilt our ai stack from scratch. new infrastructure, new architecture, new data pipelines. muse spark is the result of that work, and now it powers meta ai. 🧵

GPT-5.3-Codex is here!
* Best coding performance (57% SWE-Bench Pro, 76% TerminalBench 2.0, 64% OSWorld).
* Mid-task steerability and live updates during tasks.
* Faster! Less than half the tokens of 5.2-Codex for same tasks, and >25% faster per token!
* Good computer use.

🚀 Introducing Qwen3-Coder-Next, an open-weight LM built for coding agents & local development.
What’s new:
🤖 Scaling agentic training: 800K verifiable tasks + executable envs
📈 Efficiency–Performance Tradeoff: achieves strong results on SWE-Bench Pro with 80B total params and 3B active
✨ Supports OpenClaw, Qwen Code, Claude Code, web dev, browser use, Cline, etc
🤗 Hugging Face: huggingface.co/collections/Qw…
🤖 ModelScope: modelscope.cn/collections/Qw…
📝 Blog: qwen.ai/blog?id=qwen3-…
📄 Tech report: github.com/QwenLM/Qwen3-C…

JUST ADDED: @MiniMax_AI 2.1 just joined our SWE-Bench Pro leaderboard. Check out the updated rankings: scale.com/leaderboard/sw…

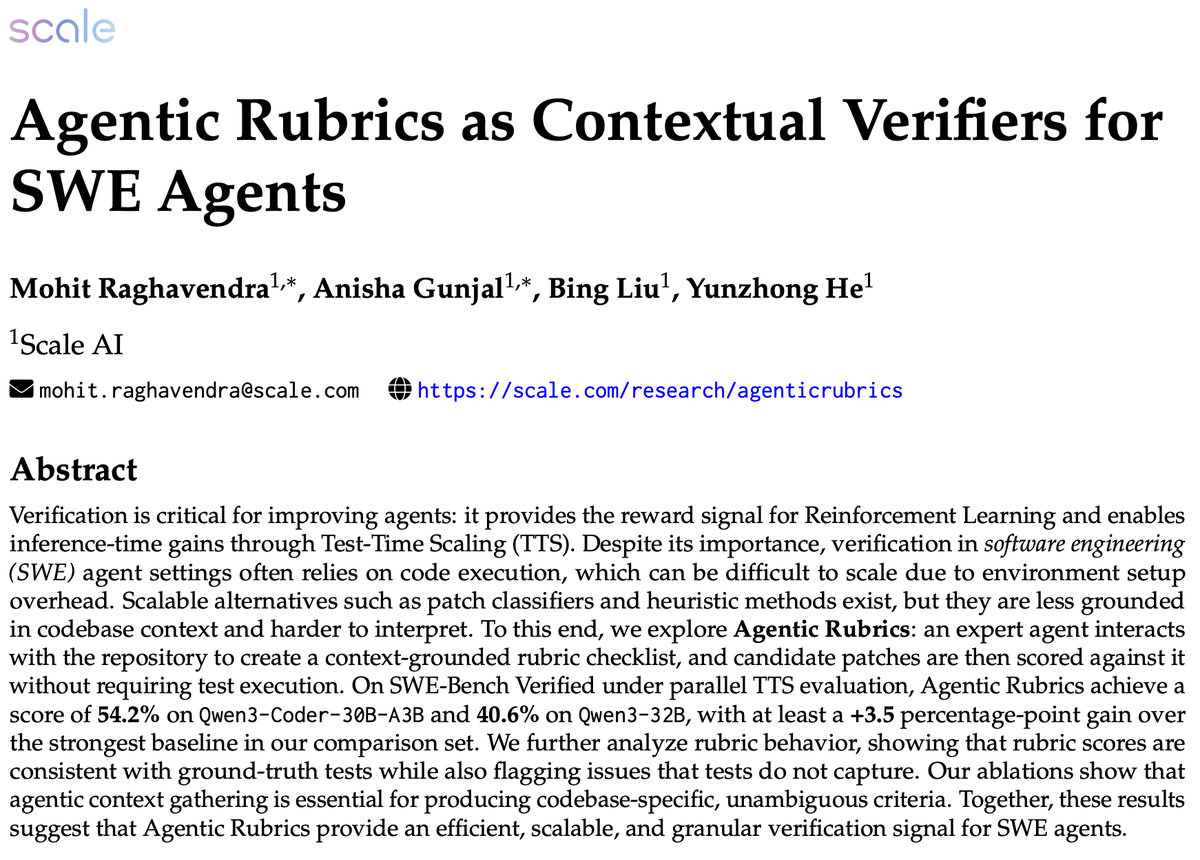

🚀 New @scale_AI research: Verifiers for SWE Agents have traditionally used unit tests or simple, execution-free classifiers. But can we get verifiers that are more expressive, repository-grounded, and still execution-free at scoring time? We explore Agentic Rubrics to fill this gap 💡
Agentic Rubrics are repo-grounded, execution-free verifiers for SWE agents. We generate a checklist of concrete, codebase-specific criteria using an Agentic Harness, and then score patches against it. 🧑‍💻

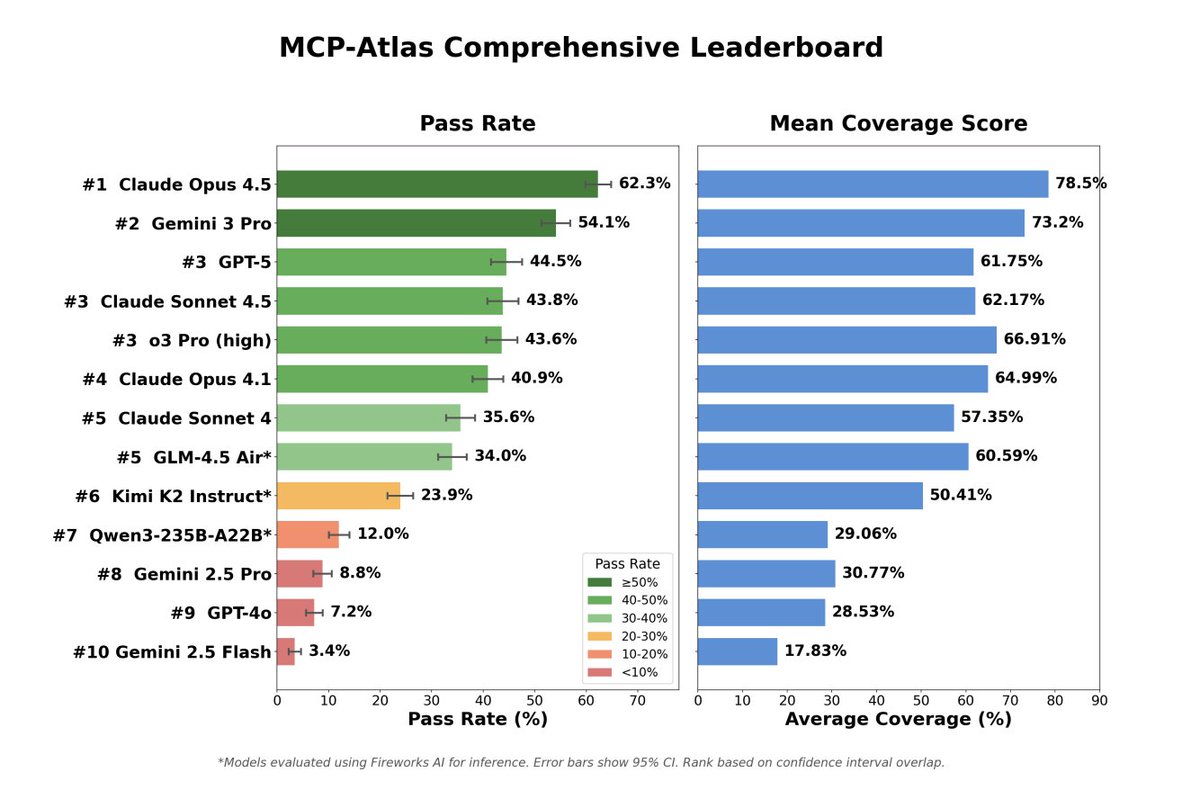

🚀 Today we’re open-sourcing MCP Atlas, a large-scale, real-server benchmark for agentic tool use, which has been used in the recent GPT-5.2, Claude Opus 4.5, and Gemini 3 Flash model releases!
🧠 Key insight: realistic agentic tool use is not a function-calling problem. It requires tool discovery, orchestration, and recovery in real environments.
🔧 MCP Atlas evaluates agents on real MCP servers (36 servers, 220 tools, 1K human-written tasks). Models must find the right tools, call them correctly, chain them together, and handle failures.
📉 What we found:
• Agents fail more often at tool interaction than at reasoning
• Performance drops sharply with real-world tool friction
• Scaling models helps unevenly, robustness remains hard
• Claims-based eval reveals how agents fail, not just if they finish
Check it out!
📄 Paper: static.scale.com/uploads/674f4c…
🌍 Environment: github.com/scaleapi/mcp-a…
📂 Dataset: huggingface.co/datasets/Scale…
📊 Leaderboard: scale.com/leaderboard/mc…
#AgenticAI #ToolUse #LLMEval #Benchmarks #MCP