JP Hwang

@_jphwang

Developer educator and advocate. Sometimes writes bad jokes poorly.

Sources: Starbucks shut down an AI program for automating inventory counts, nine months after deploying it, after it frequently miscounted and mislabeled items (@waylon_wc / Reuters) (Visit Techmeme dot com for the link and full context!)

@bettersafetynet I suspect the erosion of humans will not progress in a linear fashion; rather, it will be a step-wise sort of deletion of jobs interspersed with periods of hyperbolic replacement.

“Sure, a robot can lift 600 pounds much more easily than I can — but that doesn’t much help me if I’m trying to work out. The same goes for the thinking exercise of education.”

Tomorrow is our next meetup. This time we're collaborating with @Cloudflare, @upsundotcom and @Microsoft to share all about MCP. Looking forward to seeing you there! 👋

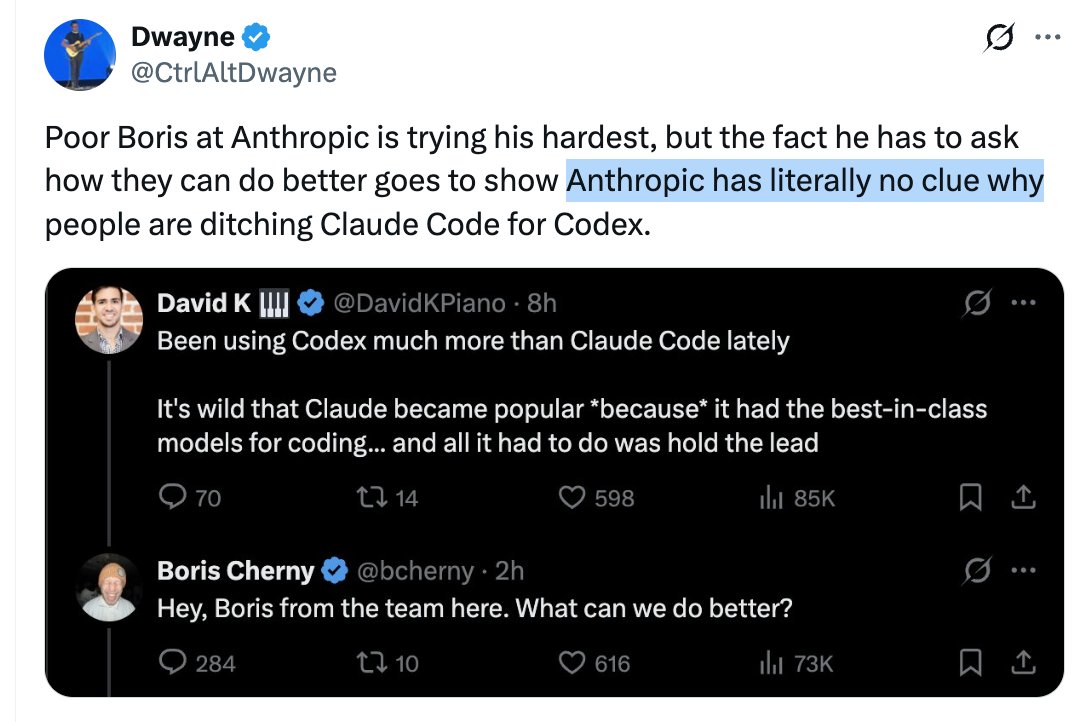

Poor Boris at Anthropic is trying his hardest, but the fact he has to ask how they can do better goes to show Anthropic has literally no clue why people are ditching Claude Code for Codex.

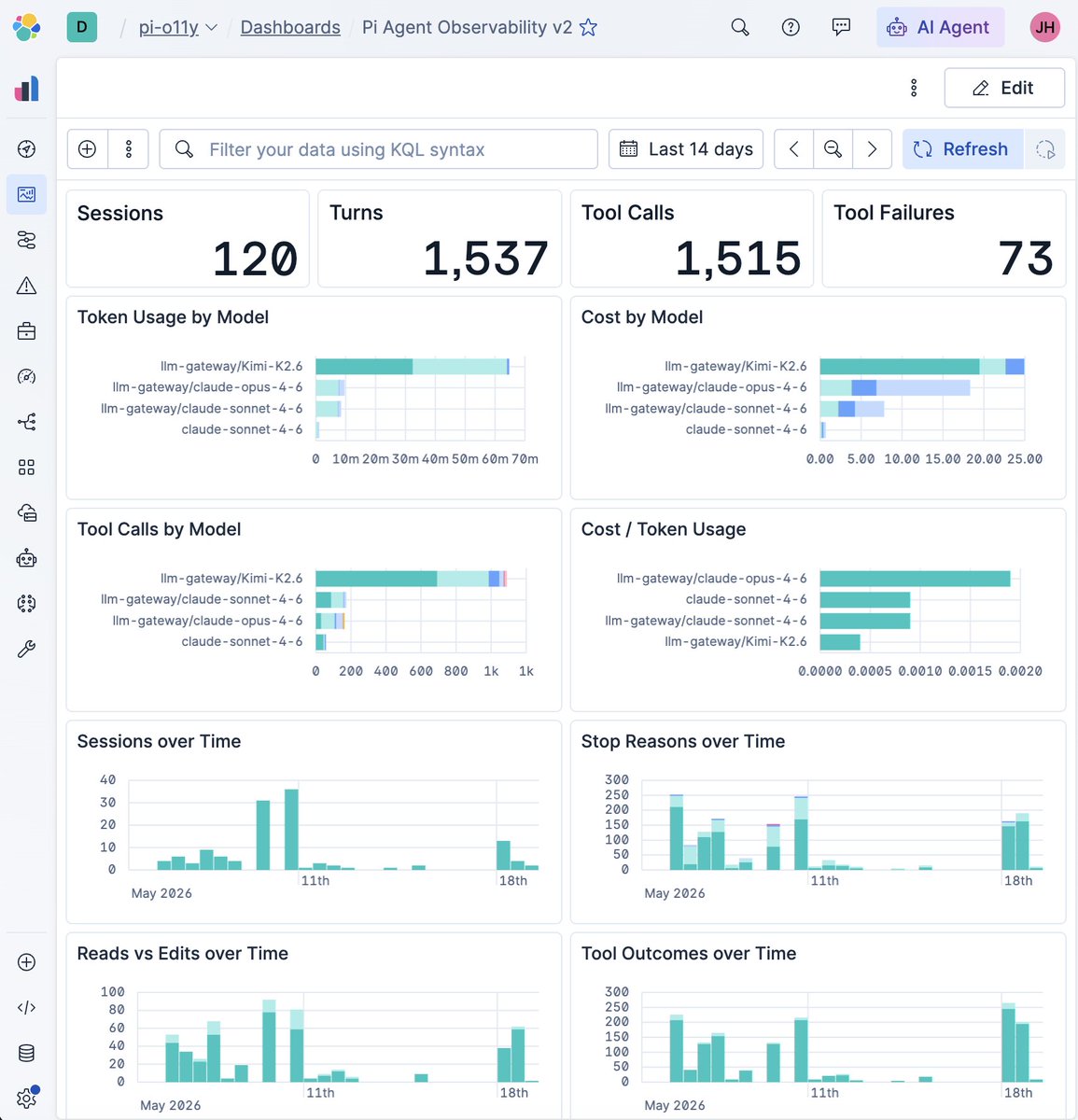

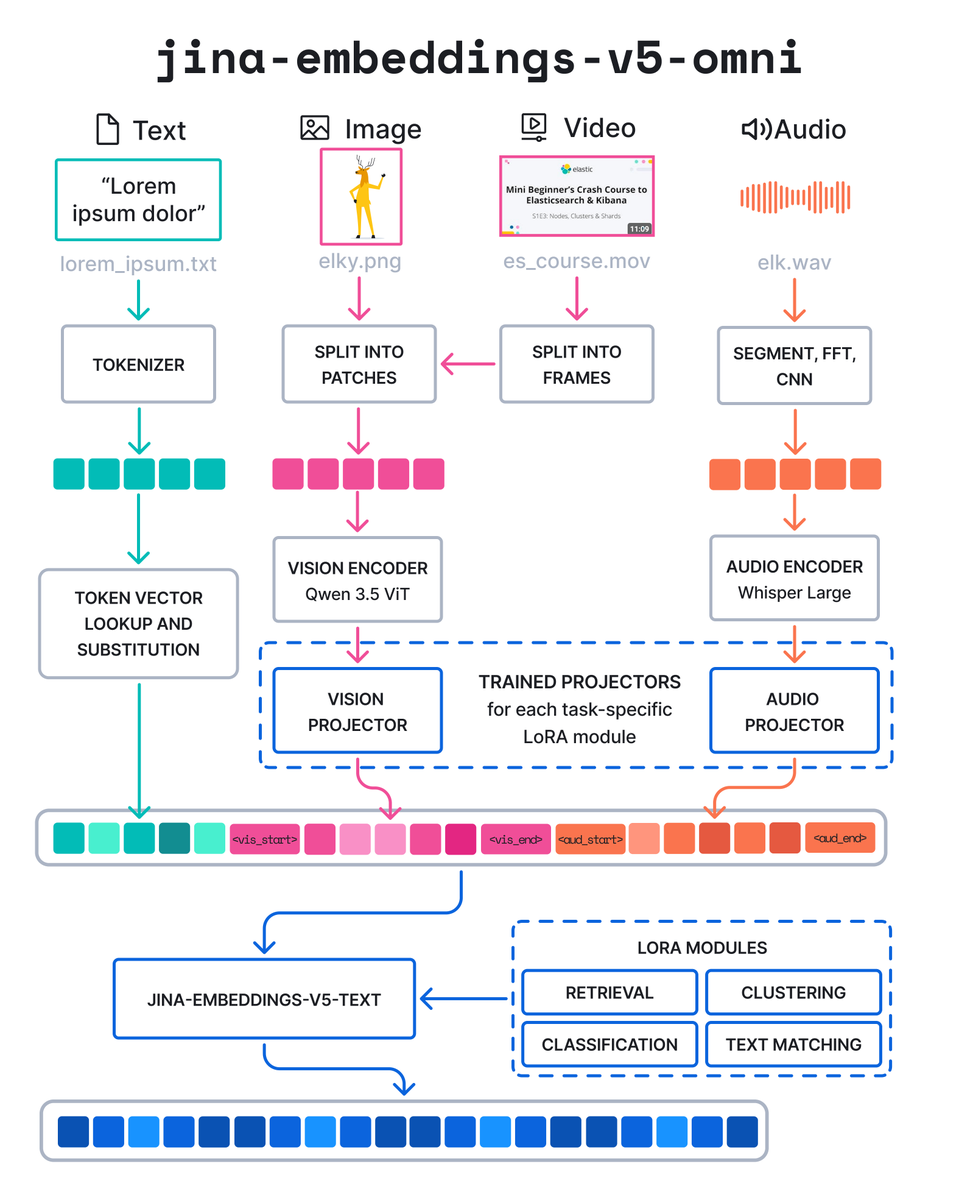

jina-embeddings-v5-omni is now on Elastic Inference Service. Text, images, audio, video. One index, one query.
• Best-in-class visual understanding under 1B parameters
• Beats models 20x its size on multilingual visual tasks
• Beats ByteDance Seed 1.6 on video (55.57 vs 29.30 on Charades-STA)
• BBQ quantization: 93% storage reduction, under 3% accuracy loss
• nano runs on commodity hardware without GPU
Introduction below with @florianhoenicke

Coding with agents is a trap, and we all fell for it.