@KyleRayKelley @rahuldave @zeddotdev Feels like it's becoming a habit...

The first lines I write in any new language absolutely need to be a PR for @KyleRayKelley to review 🤣

Last time it was JS/CoffeeScript, this time Rust 🦀😎

Lukas Geiger

@_lgeiger

deep learning scientist | astroparticle physicist | software engineer

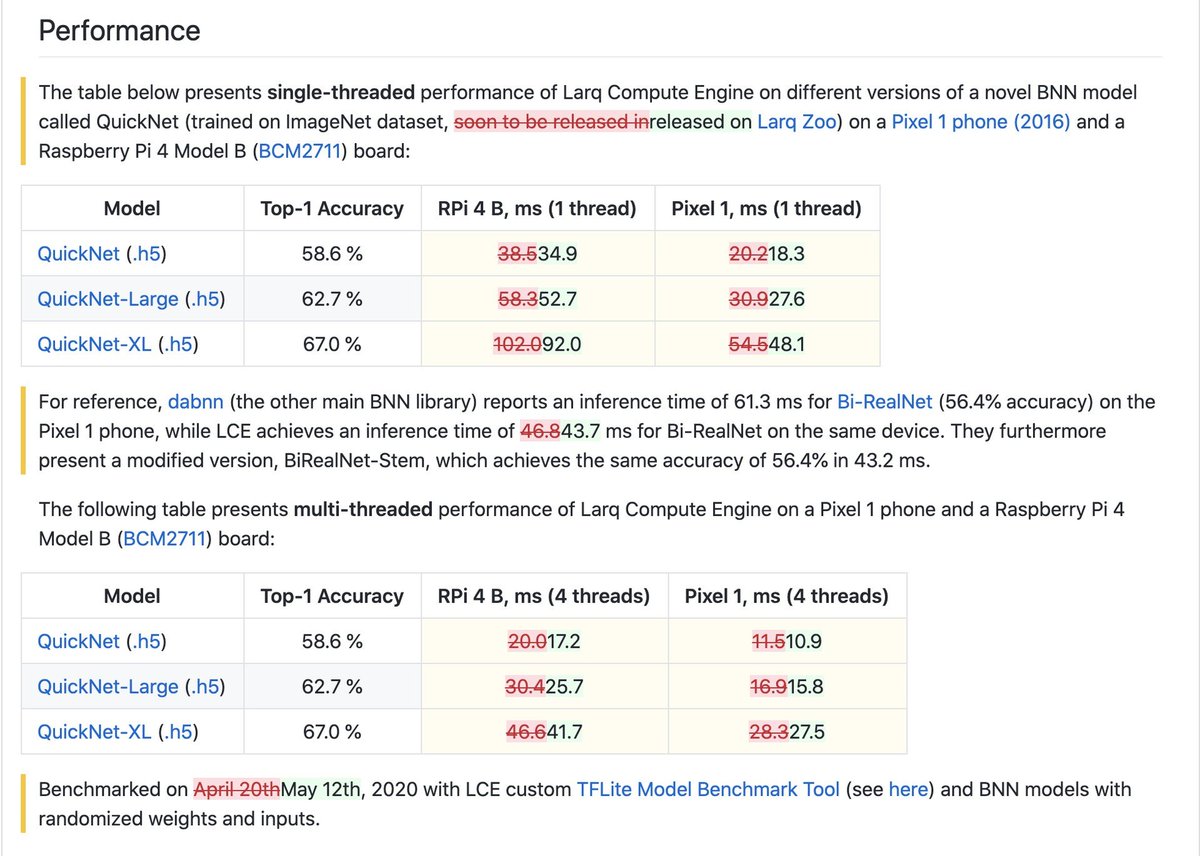

With this approach, getting an improved—higher-accuracy, lower-latency—version of a BNN implemented with the Larq stack will be as easy as updating your pip packages!