Deep Learner

@_vision2020_

Angel Investor | Alternative Income Freak | Tech Veteran

Claude Code rarely runs for longer than 15 minutes without stopping and asking me for input. How do all these stories of people letting agents run overnight work? Custom harnesses? Yelling at Claude in all caps to keep going no matter what?

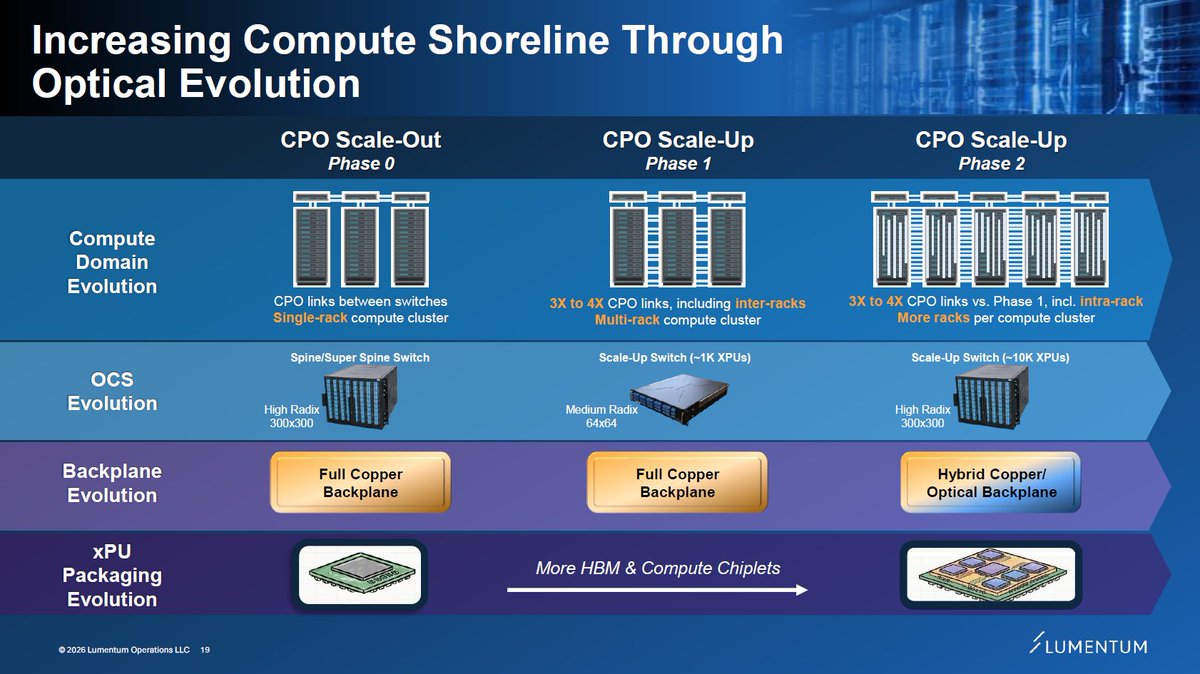

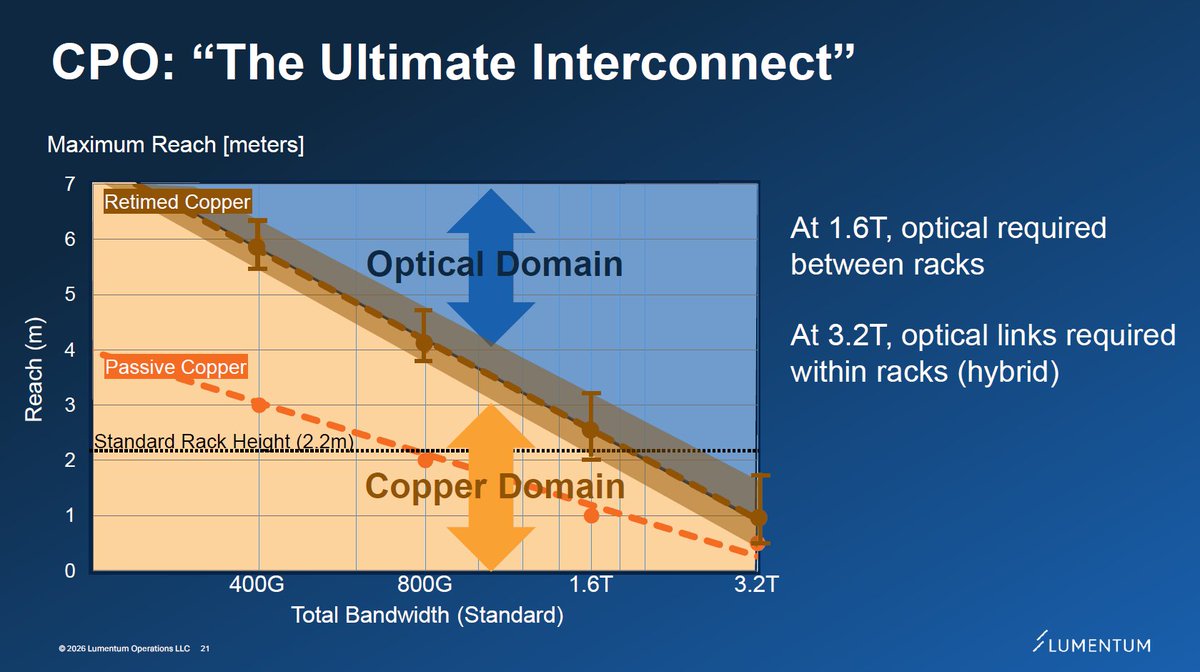

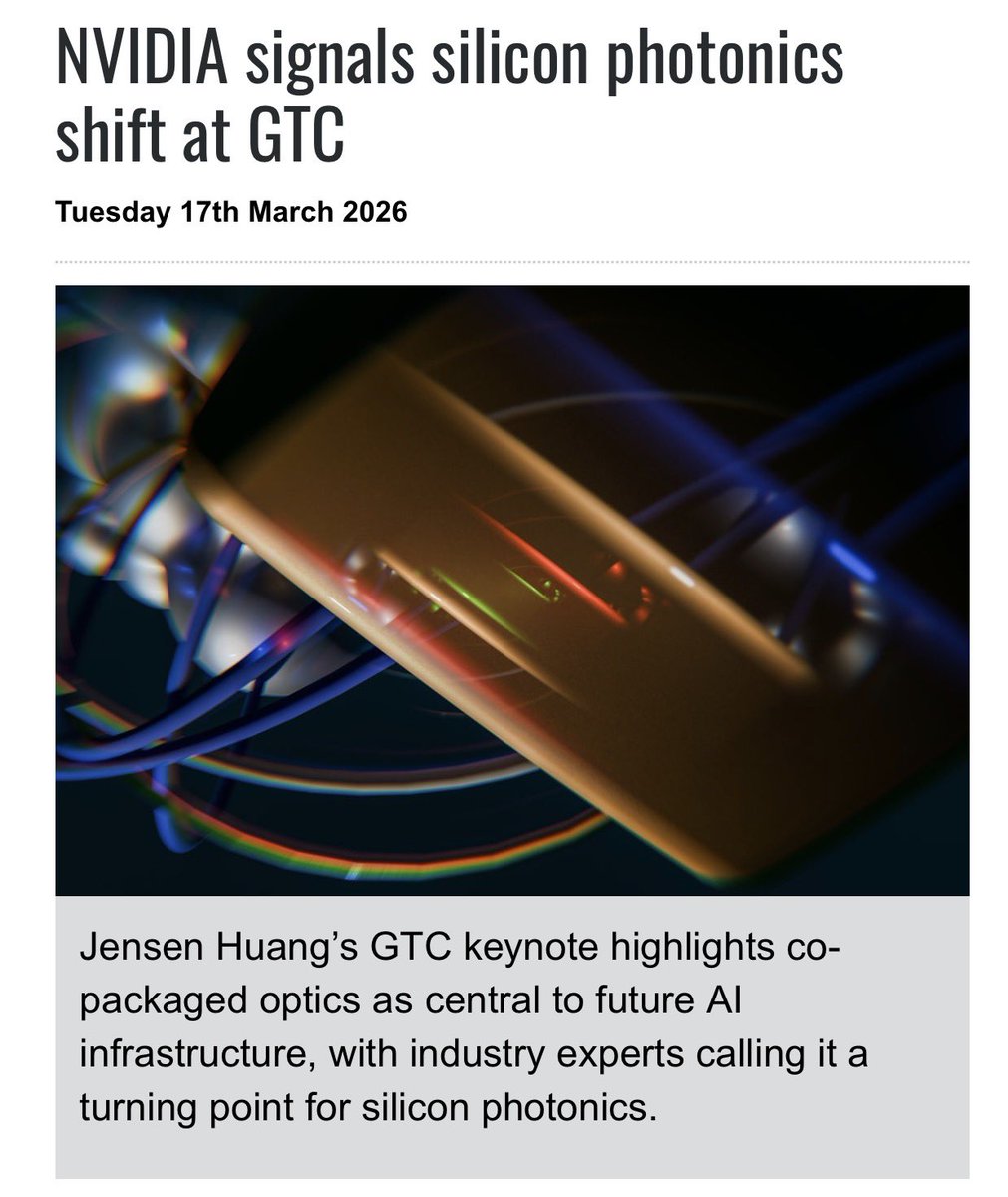

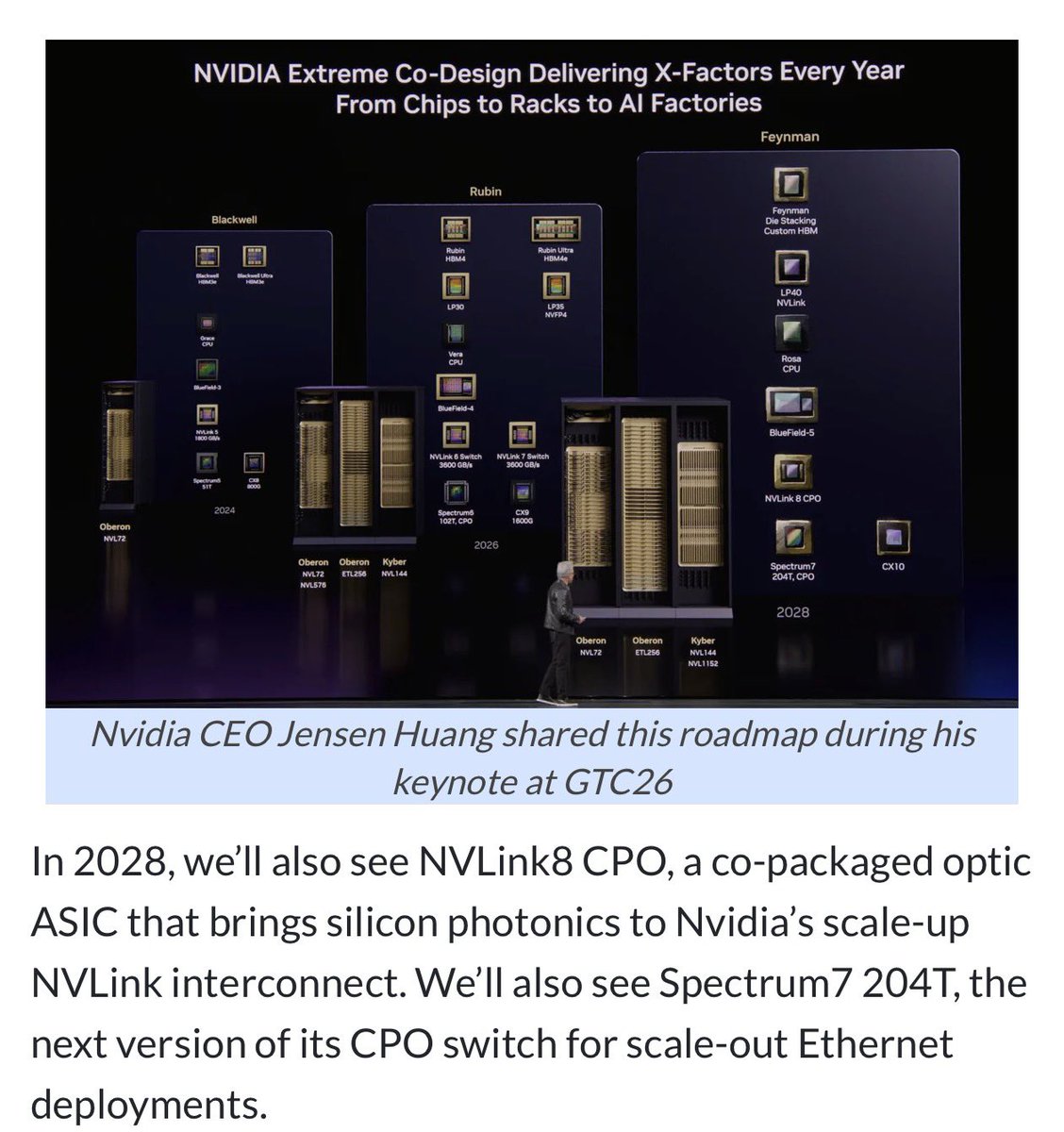

The AI data center has a problem nobody is talking about enough. The GPU is no longer the bottleneck. The wire is. Copper cannot move data fast enough to keep up with what Nvidia is building. It consumes too much power. It generates too much heat. As AI clusters get denser and models get larger, the physical limits of copper become a hard ceiling on what AI can do. The solution is light. Optical interconnects transmit data faster, cooler, and at a fraction of the power consumption of copper. Co-packaged optics, where the optical engine sits in the same package as the switch or GPU silicon, reduces power consumption in AI clusters by up to 40%. That market is growing from under $400 million today to nearly $3 billion by 2032. Two companies own this transition. $LITE controls roughly 50-60% of the specialized laser chip market that powers these systems. Q2 revenue just came in at $665 million, up 65% year over year. Q3 guidance is $780-830 million, implying over 85% growth. Their backlog of optical circuit switches is sold out through end of 2027. They are targeting a $2 billion quarterly revenue run rate within two years. The CEO said they are at the starting line. Rosenblatt price target $900. $COHR just reported data center revenue up 36% year over year. A 20-year relationship with Nvidia just got formalized into a full strategic partnership covering next-generation silicon photonics, ultra-high power lasers, and priority capacity rights. Zero sell ratings on the Street. Rosenblatt price target $375. Both just got added to the S&P 500. Every index fund on earth is now a permanent forced buyer. Mizuho named $LITE a top AI pick for 2026 alongside Nvidia and Broadcom. The GPU era gave us Nvidia. The photonics era is giving us $LITE and $COHR.

DEVELOPING: Chinese entrepreneur boasts of receiving 200 NVIDIA H200 GPUs in Beijing despite the US export ban and explains how he circumvents it 🧵

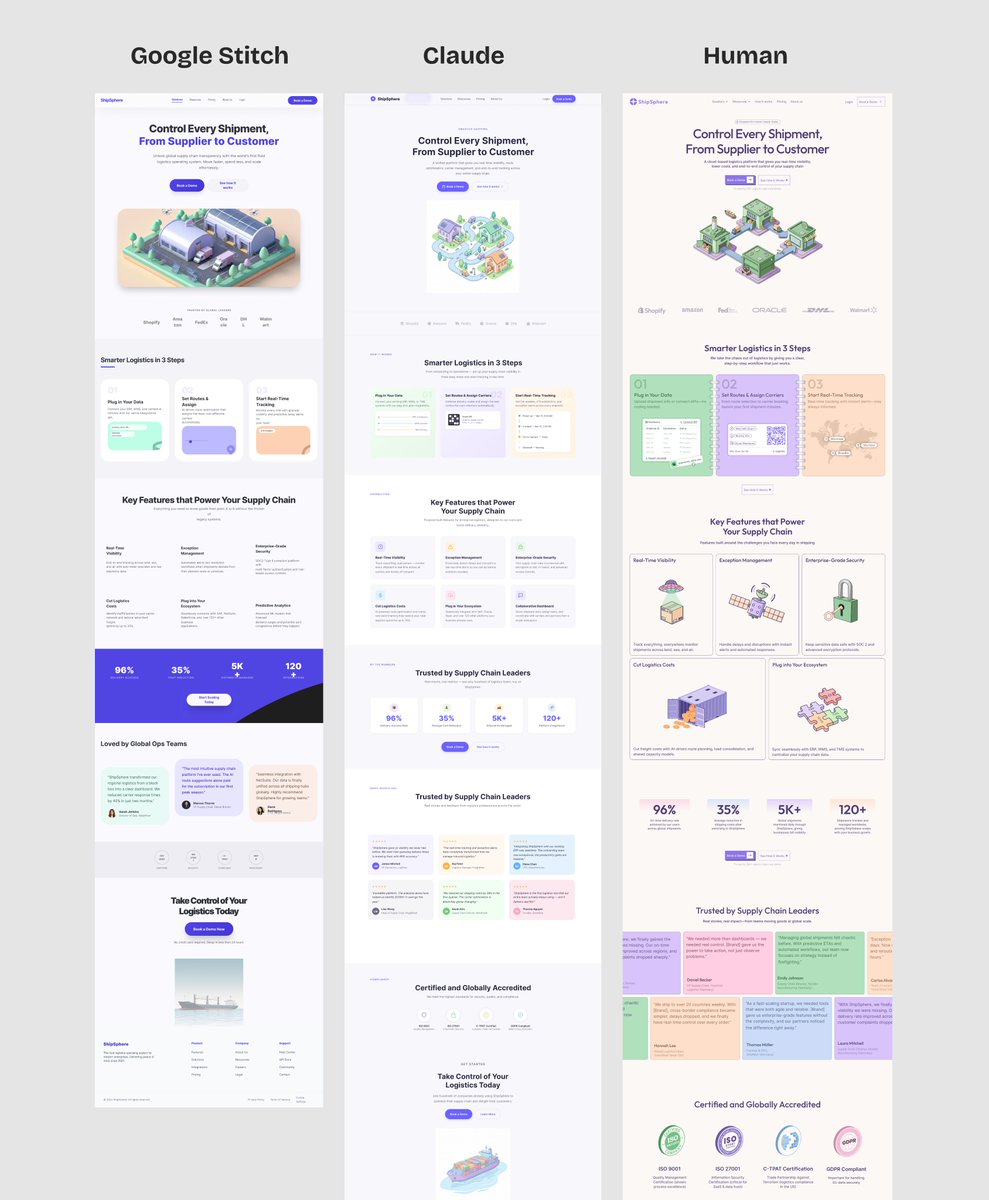

Meet the new Stitch, your vibe design partner. Here are 5 major upgrades to help you create, iterate and collaborate: 🎨 AI-Native Canvas 🧠 Smarter Design Agent 🎙️ Voice ⚡️ Instant Prototypes 📐 Design Systems and DESIGN.md Rolling out now. Details and product walkthrough video in 🧵

Introducing the new @stitchbygoogle, Google’s vibe design platform that transforms natural language into high-fidelity designs in one seamless flow. 🎨 Create with a smarter design agent: Describe a new business concept or app vision and see it take shape on an AI-native canvas. ⚡️ Iterate quickly: Stitch screens together into interactive prototypes and manage your brand with a portable design system. 🎤 Collaborate with voice: Use hands-free voice interactions to update layouts and explore new variations in real-time. Try it now (Age 18+ only. Currently available in English and in countries where Gemini is supported.) → stitch.withgoogle.com

I might be 70% of the way to understanding the CPO scale-up opportunity through Feynman, but some technical clarifications plus upcoming supplier datapoints should take that to 80-90%. Inter-rack optical scale-up for NVL576, as mentioned earlier, appears confirmed for Oberon. But that's only a first step. Near-term scale-out is well understood. Orders received to date by $LITE or $COHR and their high-level TAM statements seem roughly consistent. Jensen is often imprecise during presentations, but that often leads to opportunities. This is one of many AI technologies where fortunes could be made or lost over the next few years.

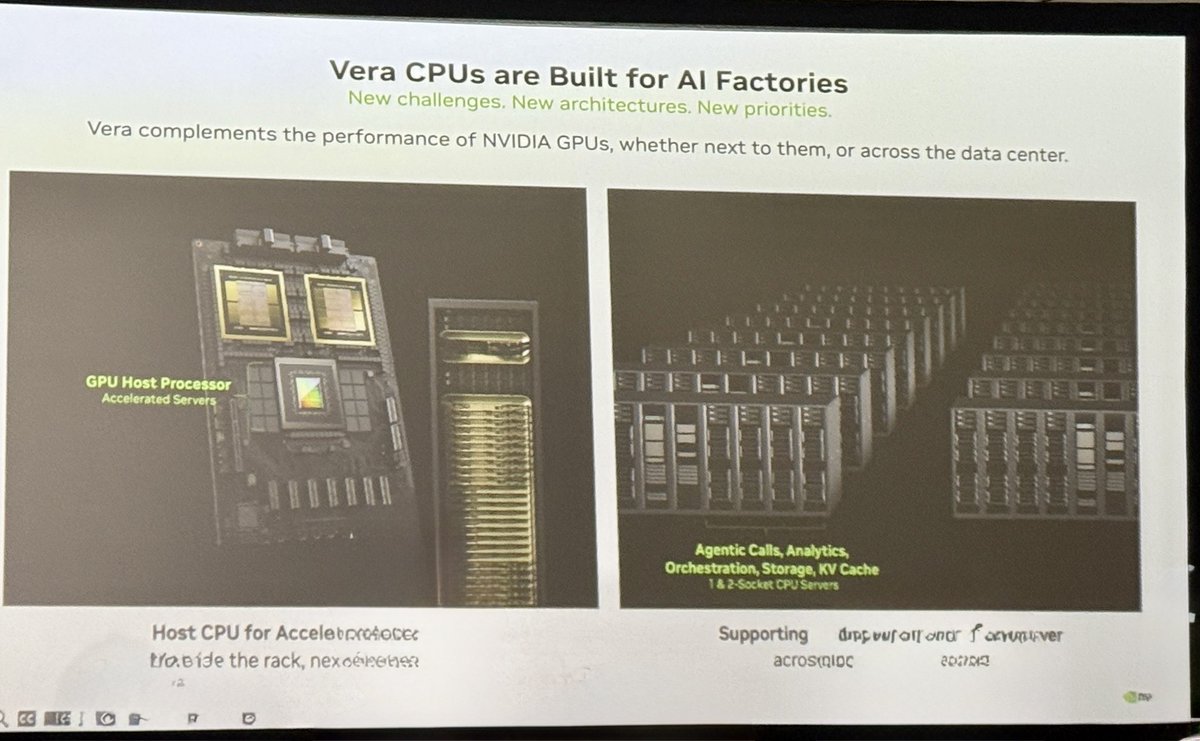

$NVDA DESK NOTE - NVIDIA Vera CPU: The Amdahl Argument for a Purpose-Built AI Factory CPU atlaspeakresearch.com/report/1683d7 Bottom Line: Vera is best understood as a purpose-built AI-factory CPU designed to compress the serial fraction of reasoning, tool-use, and reinforcement-learning workflows so that GPU capital does not sit idle behind CPU-bound orchestration. Its differentiation comes from five interacting features: unusually high single-thread ambition for control-heavy code, unusually high memory bandwidth per active core, deterministic full-socket behavior, coherent CPU-GPU memory via NVLink-C2C, and rack-scale power efficiency. Against that, AMD and Intel remain stronger on universality, x86 software inertia, memory capacity, and standards-based flexibility. Vera therefore looks less like a broad x86 killer and more like a specialized control-plane and environment processor that becomes highly compelling precisely where Amdahl's Law makes the CPU impossible to ignore.
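A rough sketch of the Amdahl argument in the note above, assuming the workflow splits into a CPU-bound serial share and a share the GPUs accelerate. The function name, the 100x acceleration factor, and the serial shares are illustrative assumptions, not figures from the report.

```python
def amdahl_speedup(serial_fraction: float, accel_factor: float) -> float:
    """Amdahl's Law: overall speedup when only the non-serial share is accelerated."""
    if not 0.0 <= serial_fraction <= 1.0:
        raise ValueError("serial_fraction must be in [0, 1]")
    if accel_factor <= 0.0:
        raise ValueError("accel_factor must be positive")
    return 1.0 / (serial_fraction + (1.0 - serial_fraction) / accel_factor)


# Illustrative assumptions only: a 100x GPU-side acceleration and
# hypothetical CPU-bound (serial) shares of the workflow.
ACCEL = 100.0
for serial in (0.10, 0.05, 0.01):
    print(f"serial={serial:.2f} speedup={amdahl_speedup(serial, ACCEL):.1f}x "
          f"ceiling={1.0 / serial:.0f}x")
```

Under these assumed numbers, a 10% serial share caps the overall gain near 9.2x even with 100x faster GPUs, and halving that share to 5% lifts it to about 16.8x. That is the sense in which shrinking the CPU-bound fraction keeps GPU capital from sitting idle.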

The upcoming CPO / Silicon Photonics Bottleneck Cheat Sheet:
$SIVE, Sumitomo, $LITE, $COHR, $AVGO, $MTSI, $AAOI - Light Source (CW DFB Lasers)
$TSEM, $GFS, $UMC, $TSM, $INTC - SiPh Foundry
$NOK, $CIEN, $CSCO, $COHR - DCO
$HIMX, FOCI (3363.TWO) - Micro-lens + Fiber Arrays
$POET - Optical Interposers
$SOI, $AXTI, Shin-Etsu - Substrates
$FN, $ASX, Innolight, Eoptolink - Optical Packaging and Assembly
$MTSI, $SMTC, $MRVL, $MXL - Analog/Mixed-Signal ICs
$LWLG - Speculative Modulator Materials
$GLW, $APH, $TEL, $FIT, Fujikura - Connectors and Fibers
$FORM, $KEYS, $VIAV, $AEHR - Test & Measurement
$BESI, $SMHN, $ONTO, $CAMT - Advanced Packaging & Hybrid Bonding
Many others are private companies, such as Lightmatter, Ayar and Ranovus.
Now... Everyone is asking... How do you profit? If you look at the forecast for CPO TAM, it's a straight line up, and next year is the inflection point for CPO mass deployment. The alpha is capturing the rotation: from the current EML bottlenecks ($LITE, $COHR type) to the SiPh / CW DFB architectural winners for CPO. The highest upside potential is in the names that aren't part of the current cycle but are in the next. Companies like $SOI, $SIVE, or $AEHR are perfect examples. Ride the current pluggable bottleneck with names like $AAOI. But the alpha is frontrunning institutions into the next CPO bottleneck. The capital rotation is inevitable.

wait NVL1152??? what? 1152?