Aarjav

12.7K posts

@aarjav

Founder, CEO at @Ridecell⚡️Intelligent Autonomous Optimization ☁️ 🚛🚗 leasing by @Toyota @BNPParibas Merchants 💪🏼 YC @Initialized @BMW @GTcomputing🐝

Reports from Iran: To choose a new Supreme Leader, by law the members of the Assembly of Experts need to meet in person. It seems they are in the process of changing the law to allow online meetings and online voting. Considering that the members of this assembly are old, it would also take a few days to get them connected and set up on Zoom.

A statement from Anthropic CEO, Dario Amodei, on our discussions with the Department of War. anthropic.com/news/statement…

Feels like a milestone moment for AI. I’m returning from reviewing the incredible excitement around the impact of @Ridecell’s AI deployments in production in two major European countries, at one of the largest companies in our space, in an industry not known to move fast. @Karpathy, one of the most “reality” focused AI researchers, who has shipped more scalable AI products than most, says the days of coding by typing code into a computer ended in December 2025. WSJ reports a sharp drop in stock prices driven by a viral doomsday study that credibly claims that we are underpricing the impact of AI disruption, and that it’s going to arrive faster than markets are pricing. wsj.com/finance/stocks…

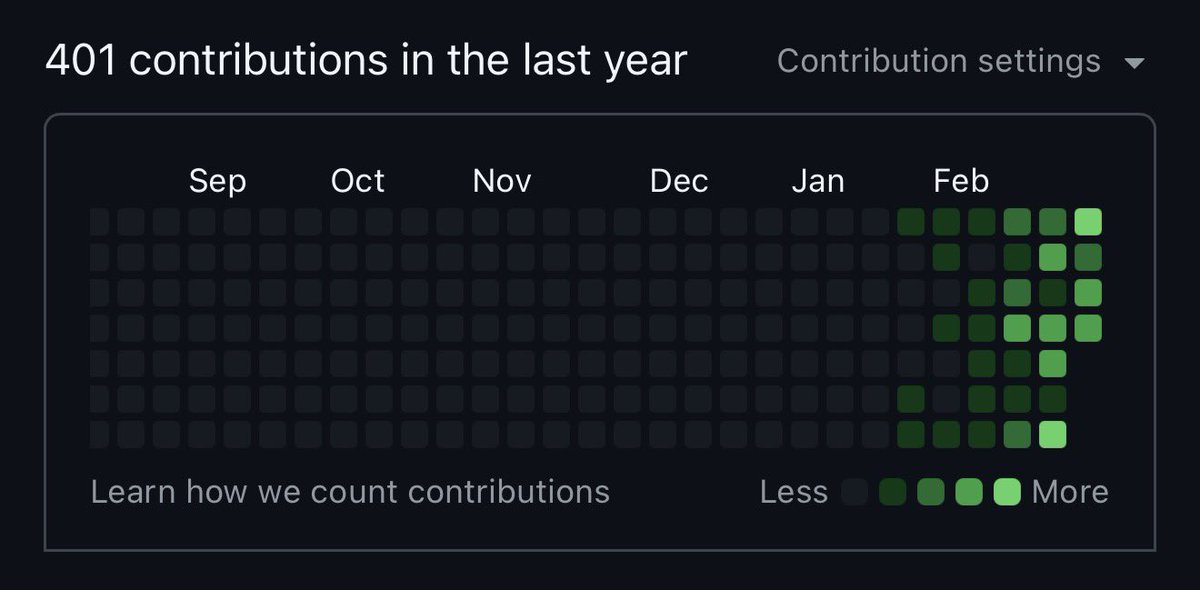

It is hard to communicate how much programming has changed due to AI in the last 2 months: not gradually and over time in the "progress as usual" way, but specifically this last December. There are a number of asterisks but imo coding agents basically didn’t work before December and basically work since - the models have significantly higher quality, long-term coherence and tenacity and they can power through large and long tasks, well past enough that it is extremely disruptive to the default programming workflow. Just to give an example, over the weekend I was building a local video analysis dashboard for the cameras of my home so I wrote: “Here is the local IP and username/password of my DGX Spark. Log in, set up ssh keys, set up vLLM, download and bench Qwen3-VL, set up a server endpoint to inference videos, a basic web ui dashboard, test everything, set it up with systemd, record memory notes for yourself and write up a markdown report for me”. The agent went off for ~30 minutes, ran into multiple issues, researched solutions online, resolved them one by one, wrote the code, tested it, debugged it, set up the services, and came back with the report and it was just done. I didn’t touch anything. All of this could easily have been a weekend project just 3 months ago but today it’s something you kick off and forget about for 30 minutes. As a result, programming is becoming unrecognizable. You’re not typing computer code into an editor like the way things were since computers were invented, that era is over. You're spinning up AI agents, giving them tasks *in English* and managing and reviewing their work in parallel. The biggest prize is in figuring out how you can keep ascending the layers of abstraction to set up long-running orchestrator Claws with all of the right tools, memory and instructions that productively manage multiple parallel Code instances for you. The leverage achievable via top tier "agentic engineering" feels very high right now. 
It’s not perfect, it needs high-level direction, judgement, taste, oversight, iteration and hints and ideas. It works a lot better in some scenarios than others (e.g. especially for tasks that are well-specified and where you can verify/test functionality). The key is to build intuition to decompose the task just right to hand off the parts that work and help out around the edges. But imo, this is nowhere near "business as usual" time in software.

Someone asked what's the most underappreciated quality in startup founders. I realized I could answer this by asking what's the most underappreciated aspect of startups. That's easy: how hard they are. So the most underappreciated quality in founders is sheer toughness.