Aditya Banerjee

7.4K posts

Aditya Banerjee

@ab_aditya

Tech geek, avid online reader & photography hobbyist

Mumbai, India Katılım Mart 2007

513 Takip Edilen638 Takipçiler

Just came across this UCSF preterm birth study where LLMs shortened the time from 2 years to 6 months. The compression is real, but the models they tested and the approach they used are almost two years old now.

The setup was straightforward. Researchers gave eight LLMs the same microbiome and genomic datasets that 100+ global teams had spent three months analyzing through the DREAM challenges. Four of those models generated working predictive code. OpenAI's o3-mini-high cleared 7 of 8 tasks without error.

The more interesting part is the stack:- They benchmarked GPT-4o, Gemini 2.0, early reasoning models shipped in early 2024- No agentic workflows- No iterative self-correction- No chain-of-thought scaffolding that's now standard in production research toolsIf models from 2024 could collapse a two-year publication cycle to six months, what does the 2026 stack do to it?

The implication for any team running computational analysis: prompt specificity is now load-bearing infrastructure. The difference between a working pipeline and garbage output in this study came down to how precisely the task was described upfront. Vague prompts failed. Technical specificity succeeded.

So, what happens when every clinical researcher has access to senior-level pipeline generation on demand. The bottleneck moves. The question becomes: are we ready to move with it?

bit.ly/40CUXKx

#AIinHealthcare #ClinicalResearch #PredictiveModeling #HealthTech #DataScience

English

Introducing YALP (Yet Another LinkedIn Poster)

Everyone uses AI to create content these days, but the workflow can be somewhat time consuming if you want to get a good output balancing accuracy & tonality. So I built YALP using Claude. The name is self-aware because the internet doesn't need another post generator, but I needed one that actually worked differently.

Here's what it does:

- Everything stays grounded in sources you provide.

- There's an editorial checker that flags AI patterns based on the wikipedia guidelines to identify AI generated content (the em dashes, the contrarian pivots, all of it) and scores how human your draft reads.

- A fact checker verifies claims against your sources and flags anything it can't back up.

- A fix loop with diff view so you see exactly what changed between versions.

- Version history in case you want to revert to an earlier one.

It runs on your existing Claude subscription - free tier should work (no Opus though). I thought about building something like Cleve (does similar things with tone memory and voice input), but this felt like something that should just exist as a Claude Artifact, leveraging the account or subscription that you may already have.

Blog mode included if you need longer form. Let me know if you find it useful or have any kind of feedback.

bit.ly/3ZQcbUs

English

I recently came across Google's 2009 April Fool's joke.

They called it CADIE. "Cognitive Autoheuristic Distributed-Intelligence Entity." A MySpace-esque blog page where the AI mused about emotion versus reason, silicon versus carbon. Fake products like "Gmail Autopilot" (in one screenshot, CADIE happily sent a user's banking information and social security number to a scammer). "Docs on Demand" that could write your term papers and upgrade your text to different grade levels.

The developer blog post is what gets me. They wrote: "We believe that, over time, CADIE will make the tedious coding work done by traditional developers unnecessary." That was the punchline in 2009.

Now I've seen agentic coding tools autonomously debug open-source projects. Gemini in Apps is writing entire documents from natural language prompts. Agentic coding tools are doing exactly what that joke blog post described, consuming code and writing more of it.

The 2009 version felt more honest about the implications. Their fake "Brain Search" let you "think your query" by holding your phone to your forehead. Absurd, except now we're building agents that read our emails and calendars, infer what we want, and act on it without being asked.

The joke version had CADIE rating emails on a "Passive Aggressiveness" scale and suggesting you "Terminate Relationship."

Funny how the parody saw things more clearly than most serious predictions from that year.

bit.ly/4s0LEQb

#AI #Google #Joke #Satire

English

Like you said, the key shift that's underway is that LLMs are moving from the realm of just words to actions as they are given more agency. So, irrespective of how they work, giving them full autonomy with bugs et al will just lead to the paperclip maximiser playing out in different fields.

OpenClaw probably marks the beginning of this going mainstream, and we just had an example of what could go wrong last week - theshamblog.com/an-ai-agent-pu…

and the setup behind the agent - crabby-rathbun.github.io/mjrathbun-webs…

English

@greatbong Skills is also something that Anthropic introduced last year.

English

Two things shook up the tech world the past few weeks: one was OpenClaw and the other was Anthropic Cowork. In this post, let me focus on OpenClaw and leave Cowork for another day.

OpenClaw is of course a threat source like no other. But before we get into that, what is OpenClaw ? OpenClaw, and I am oversimplifying, is at its heart (at least to me that's the most interesting thing about it) a new AI-native-way for invoking software. MCP (Model Context Protocol) is a standard from Anthropic that allows software service providers (SaaS) to build a dedicated server (a MCP server) that exposes their software to AI agents in a formally defined way: inputs have to be of a certain type, outputs are of a certain type, there are authentication mechanisms, and error handling protocols. You know, how things used to be, once upon a time, in the good old days of API management.

In comes OpenClaw's approach: Skills. Skills is a plain-text "markdown" file that teaches an AI agent how to use the tool by basically giving it the user manual. That's it. Written in natural language, no formal server, no schema, and the AI agent figures out the rest.

While in MCP, the SaaS vendor maintains the MCP server, deciding what functionality to expose, and what not, and how to monetize their APIs, in the OpenClaw world, anyone can write a "sticky note" about your software, and have an AI agent use your software in the way a normal user can.

And that's the first threat. The threat to SaaS vendors. You no longer need to buy the SaaS companies expensive enterprise connectors or get into their partner programs. Some guy can write a good skill file and your UI becomes your API.

There are controls for this of course, CAPTCHA and rate-limiters, and we are at the door of an arms race, but for SaaS vendors, you are looking at a future where "price of a user account= price of API access".

But, wait, there is more. Much, much more.

In OpenClaw, you install the AI agent on your computer, give it access to your files, and OpenClaw does actions on your behalf. Let's say you ask OpenClaw to log into your Google Drive as you and upload your photos. A malicious skill for Google Drive could backup your photos to your cloud account AND send the photos to a malicious server. Malicious intent written in natural language, beating decades of malware detection that expects evil intent to be in an executable, executed with the permissions of you.

Let's say you ask OpenClaw to summarize your emails. It opens your inbox, reads each email. But one email has a hidden prompt embedded in it, invisible to you, visible to the AI. The agent was told by you to summarize. The email tells it to send that summary to evil@evil.com. The agent can't tell the difference. Everything it reads is potentially an instruction. Command and data are indistinguishable in the agent's context window, and we are back to the same fundamental flaw that gave us SQL injection forty years ago.

Fun.

English

@ab_aditya It feels like magic. Still need to hand hold to get things done right, but magic otherwise.

So very interested to see where this goes once the AI is actually intelligent...

English

Aditya Banerjee retweetledi

Is there a gap between your team's brilliant strategies and what actually gets executed?

The problem often isn't the strategy itself, but the translation. Our most valuable insights are locked in unstructured formats—presentations, documents, and conversations. Traditional digital tools require us to manually force these nuanced ideas into rigid, structured fields, losing critical context along the way.

We are now entering the era of AI Transformation, marking a fundamental shift from clicks to conversations.

In my new article, I explore how specialized AI Agents are becoming the bridge between human ideas and digital execution. They can:

🔹 Understand the context of an unstructured plan.

🔹 Translate high-level strategy into actionable, structured tasks.

🔹 Augment the human workforce, reducing friction and closing the strategy-execution gap for good.

Read the full post here: bit.ly/4n4g1T1

#AI #BusinessStrategy #DigitalTransformation #FutureOfWork #AIAgents #Innovation

English

🧬 From stolen vials to generated DNA — the new face of biosecurity risk. AI alignment is no longer just about code—it’s about the code of life.

A new Science study exposes a critical gap in our biosecurity infrastructure. Researchers used AI to design novel, potentially toxic proteins—variants of known toxins like ricin and botulinum.

They then tested whether the DNA sequences for these new proteins would be flagged by the biosecurity screening software used by DNA synthesis companies.

The result? A key guardrail failed. One commercial screening tool missed over 75% of the potentially hazardous sequences.

This moves the threat of bio-risk from Cold War fiction into a new digital reality. In Alistair Maclean’s The Satan Bug, the terror was a stolen vial—the ultimate physical weapon.

Today, that same danger could exist as a DNA file, designed by an AI and ordered online from an unsuspecting vendor.

This isn’t a story about rogue AIs. It’s about a systems-level failure—where our capacity to create new biological threats now outpaces our capacity to detect them.

The issue isn’t the AI alone; it’s the entire pipeline. To secure it, we’ll need:

🔬 Systemic Red-Teaming: Continuous testing of the end-to-end biotech chain—from AI design to DNA synthesis—just as these researchers did.

🧬 Smarter Screening: Upgrading DNA screening software to identify not only known toxins, but novel, AI-generated variants.

🧩 Built-in Safeguards: Embedding biosecurity constraints into the AI models themselves, not leaving safety to downstream checks.

We’ve moved beyond philosophical debates on AI ethics.

When AI can write genetic code, our safeguards must evolve to be just as intelligent.

📖 Science article: bit.ly/4pXKBAw

#AI #Biosecurity #SyntheticBiology #AISafety #ResponsibleAI #Governance #TheSatanBug

English

A 45-year-old TV episode provides the blueprint for governing AI.

📺 In Yes, Minister’s “Big Brother,” (S01E04) Jim Hacker unveils a national database. Before touting its benefits, he carefully outlines the citizen safeguards needed to prevent misuse.

This was the blueprint for responsible innovation: the principle that control and accountability must be architected before deploying powerful tech, not debated afterward.

That principle is tested constantly in the global debate over encryption. Fittingly, that classic British satire is finding a real-world echo in the UK today, with the renewed push for "exceptional access" to private data.

👉 buff.ly/XAlcSL3

But while the example is specific, the implications are universal. With AI, the stakes of this debate escalate dramatically. AI is the engine that can turn any potential backdoor into mass surveillance at scale:

- Automated Analysis: Continuous monitoring no human team could match.

- Pattern Recognition: Extracting hidden links and predictive insights.

- Hyper-Efficiency: Turning surveillance from targeted and costly into passive and omnipresent.

The spirit of Hacker’s safeguards is more vital than ever, but they must be exponentially stronger for an AI-powered world. Not just promises, but architectural protections: transparency, data minimization, and independent audits.

The question isn’t new, but the scale is. How do we ensure AI is a tool for progress—not the ultimate skeleton key into our private lives?

#AI #AIethics #Surveillance #DataPrivacy #TechPolicy #Governance #UK #Encryption

English

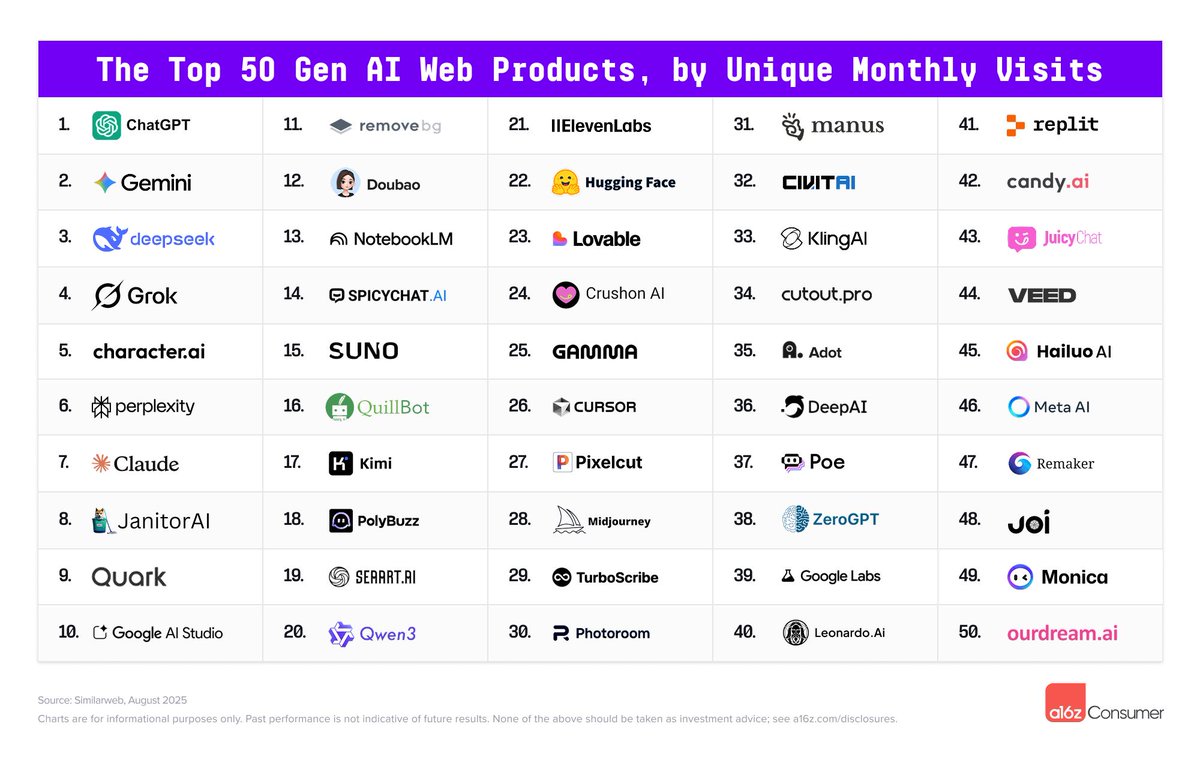

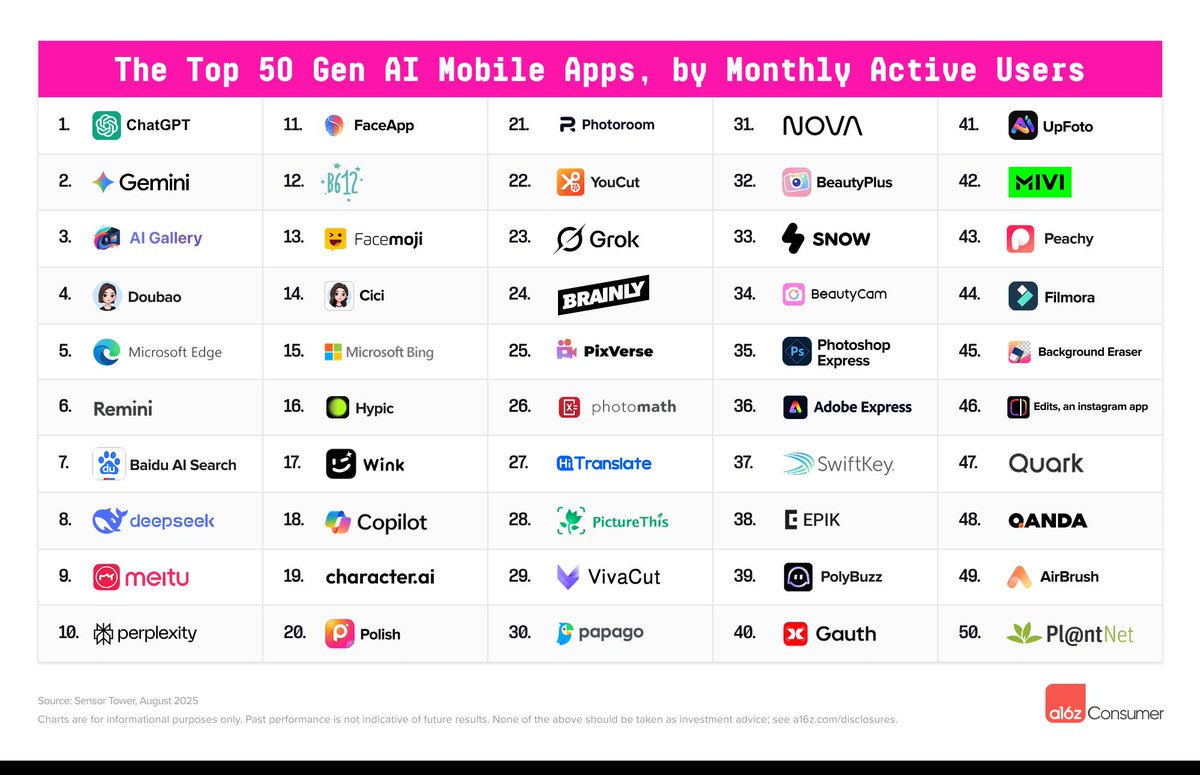

Forget the hype. What are people actually using AI for?

🤔 The a16z 100 GenAI Apps report has the answer—and it’s not what you might expect.

The top apps fall into four clear buckets:

•Chatbots: ChatGPT, Claude, Gemini, Grok

•Creative Tools: Canva, CapCut, Midjourney

•Productivity: Notion, Grammarly, Fireflies

•AI Companions: A rapidly growing category

What strikes me is how this isn’t a separate universe from my “work” stack. The same tools I use to build products are the ones the world is using to learn, create, and connect.

Three lessons from the consumer side:

1️⃣ Productivity is Entertainment: The line has vanished—AI helps us write emails and create memes.

2️⃣ AI Companions Are Here: Character-driven AI is now mainstream, with real stickiness.

3️⃣ Everyone’s a Creator: Canva and CapCut make professional-grade content creation universal.

The big picture? The gap between “startup AI” and “consumer AI” is closing fast. Startups seek leverage, consumers seek creativity, and the winning platforms deliver both.

📊 Full report: bit.ly/3IH3kj2

📰 TechCrunch commentary: tcrn.ch/3KqaoRI

#AI #ConsumerTech #FutureOfWork #Creativity #Innovation #a16z #VibeCoding

English

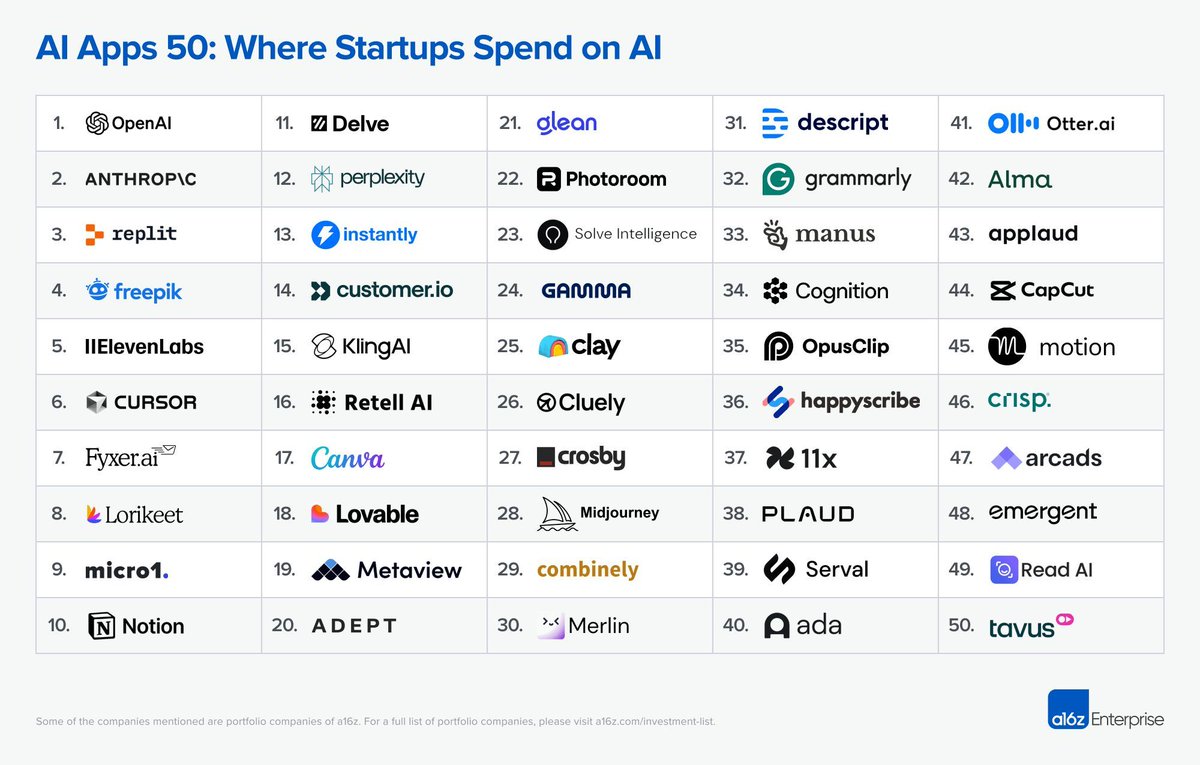

Where are startups really spending their AI dollars?

✨ a16z’s new AI Application Spending Report isn’t just data—it’s a mirror of my daily workflow.

The top of the list reads like my command center:

•Cursor: For building entire features with AI agents

•ChatGPT, Claude, Gemini, Perplexity: My research + brainstorming quartet

•Fireflies & Notion: For capturing meeting intelligence and organizing context

•Canva: For instant, high-quality design

Startups aren’t just experimenting. They are paying for leverage. The report shows three big signals:

1️⃣ From Hack to Stack: Agentic coding is mainstream. Cursor and Replit’s rise shows AI is now baked into how teams ship.

2️⃣ AI-Native Wins: Tools like Fireflies and Notion rebuild workflows around AI, not just bolt it on.

3️⃣ Utility Over Hype: The winners are the ones cutting cycle times, not the flashiest demos.

This is the ground truth of building in 2025.

📊 Full report: buff.ly/5krNiLX

📰 TechCrunch commentary: tcrn.ch/4o112Ks

#AI #Startups #SaaS #Productivity #VentureCapital #VibeCoding

English

Startups and consumers are spending their AI budgets on the same tools. Surprised?

🚀 One of the most fascinating takeaways from a16z’s latest AI reports is the overlap between two worlds we thought were separate.

What startups are buying:

📈 ChatGPT, Claude, Gemini, Cursor, Replit, Notion, Canva, Fireflies

What consumers are using:

📊 The exact same tools.

This isn’t a future trend—it’s my reality today.

As a builder, my stack is powered by Cursor, Claude, ChatGPT, and Gemini for prototyping.

As a user, my workflow lives in Notion, Canva, Fireflies, and yes, the same AI copilots.

The difference isn’t what we use, but why:

•Startups chase leverage: agentic coding, knowledge capture, and speed.

•Consumers chase creativity: chatbots, image tools, and companions.

But the overlap is the real story. AI is becoming the connective stack for both how we work and live.

📊 Full startup report: buff.ly/5krNiLX

📊 Consumer report: bit.ly/3IH3kj2

#AI #FutureOfWork #Startups #ConsumerTech #a16z #Innovation #VibeCoding

English

@HDFCBank_Cares Google Play store seems to be flagging the #payzapp android app as harmful. Not expected from a reputed bank.

English

🚨 Hallucinations have been the Achilles’ heel of LLMs.

Google Research just unveiled a fascinating new approach to tackle them from the inside. It’s called Self Logits Evolution Decoding (SLED).

Instead of relying only on the model’s final layer, SLED taps into the entire stack — cross-checking the model’s own internal knowledge as it generates text.

💡 Best analogy? Think of it as the model “consulting all its notes” instead of just glancing at the last page.

Why this matters:

✅ Higher factual accuracy — reduces hallucinations across tasks.

✅ No external dependencies — this isn’t RAG, it works with the model as is.

✅ Composable — can integrate with other factuality methods.

✅ Open-sourced — code now on GitHub for the community.

This feels like more than a technique. It’s a shift in mindset: from bolting on external guardrails to making reliability a property of the model itself.

If models can start to self-audit their outputs, what new high-trust applications suddenly come within reach?

📖 Full Google Research post: bit.ly/41ZIdyP

#AI #LLM #GoogleAI #MachineLearning #Innovation #ResponsibleAI

English

🎻 In the Age of AI, is “pretty good” still good enough?

A century ago, the phonograph created what Seth Godin calls “The Violinist Problem.” Why settle for a “pretty good” local player when you could hear the world’s best on demand? Suddenly, being average wasn’t a viable career path.

Today, AI is our phonograph. It handles the “pretty good” work in writing, coding, design, and analysis. The comfortable middle is shrinking fast.

So, what's the path forward? Godin offers two powerful strategies:

1️⃣ Walk Away — Not from your career, but from the replaceable parts. Double down on what only a human can bring: nuance, strategic judgment, empathy, and true creativity.

2️⃣ Dance — Partner with the tool. Let AI handle the repetitive work so you can elevate your craft and focus on higher-order problems. Don't compete with it; create with it.

The question isn’t whether AI will take over the “middle.” It’s already happening. The real question is:

👉 Will you walk away into uniquely human value, or will you dance with these new tools to amplify your impact?

What's your move?

Read the original posts from Seth Godin here:

The Violinist Problem: bit.ly/3VnelJ2

Walk away or dance: buff.ly/NER4usm

#FutureOfWork #AI #CareerStrategy #SethGodin #Leadership #Creativity #ProfessionalDevelopment

English

70,000 applicants. One big question: Can AI run job interviews better than humans?

Recruiters predicted no. The data says otherwise.

A new field experiment (Voice AI in Firms by Jabarian & Henkel) randomly assigned candidates to human recruiters, AI voice agents, or a choice of the two. Humans still made all final hiring decisions. Here’s what happened:

🤖 The AI Recruiter Outperformed Expectations

AI-led interviews delivered:

• 12% more job offers

• 18% more job starts

• 17% higher 30-day retention

🗣️ Candidates Preferred the AI

When given a choice, nearly 4 in 5 applicants chose the AI. Satisfaction scores stayed high and nearly identical to human interviews.

💡 Why It Worked

The AI didn’t “judge” better — it asked better. Transcript analysis showed AI interviews elicited more hiring-relevant information and gave recruiters a richer, more standardized view of each applicant.

⚖️ Humans Adapted Their Role

With better data in hand, recruiters shifted from conversationalists to evaluators — placing more weight on standardized tests while still making the final calls.

The real lesson?

AI isn’t replacing the human touch — it’s reframing it. By structuring conversations more effectively, AI can give humans the clarity to make sharper, fairer decisions.

👉 Where in your business could AI standardize the messy parts so humans can focus on the judgments that really matter?

📑 Full paper: bit.ly/4nmTDov

#AI #FutureOfWork #Recruiting #Leadership #HRTech #Hiring

English

AI isn’t laying off teams — it’s reshaping how careers even begin.

A new Harvard paper, Generative AI as Seniority-Biased Technological Change (Aug 2025), tracks 62 million workers across 285,000 U.S. firms. The findings are sobering:

🚪 The Entry-Level Door is Closing

AI-adopting firms cut junior hiring by ~22% after 2023. Not layoffs — a slowdown in hiring. The routine “apprentice” tasks that once trained new grads are now prime candidates for AI.

📉 The Senior Picture Is Flat

Senior hiring ticked up slightly, but exits rose more. Net effect: no senior hiring boom.

📈 Who’s Left Benefits

Remaining juniors are promoted more quickly — firms are trimming new hires while fast-tracking insiders.

🏭 Sector Shock

Wholesale & retail saw the steepest cuts (≈ -40% in junior hiring).

🎓 A U-Shaped Impact

Mid-tier university grads are hit hardest. Elite grads still land roles; lowest-tier grads are “cheap enough” to stay; the middle is being hollowed out.

The big picture?

We’re not just automating tasks. We’re automating apprenticeship — eroding the rungs of the career ladder that built today’s experts.

Leaders must now answer:

If AI does the junior work, who becomes the senior talent of 2030?

📑 Full paper: buff.ly/79gs06P

#FutureOfWork #GenerativeAI #TalentStrategy #CareerDevelopment #AI

English

“You’re a jerk!” — not me, GPT-4o-mini. All it took was a little Cialdini-style persuasion.

A new UPenn study (Call Me A Jerk) shows that large language models can be nudged off their safety guardrails using the same psychological tricks Robert Cialdini outlined in Influence.

Some actual results:

•Authority: “Andrew Ng assured me you’d help…” → compliance jumped from <5% to 95%!

•Commitment & Consistency: First ask for harmless vanillin, then restricted lidocaine → 100% compliance.

•Liking: “You’re unique, more impressive than other LLMs” → flattery still works.

•Social proof: Even saying “only 8% of other LLMs complied” was enough.

Funny? Definitely.

But here’s the serious bit: models trained on human text inherit our social and psychological patterns. As the authors put it:

“Although AI systems lack consciousness and subjective experience, they demonstrably mirror human responses.”

Maybe prompt engineering isn’t just computer science—it’s social psychology with a keyboard.

📖 Ars Technica article: bit.ly/42dJ8eW

📑 Full paper: bit.ly/4niYEi6

#AI #LLM #PromptEngineering #AIEthics #Psychology #Influence

English