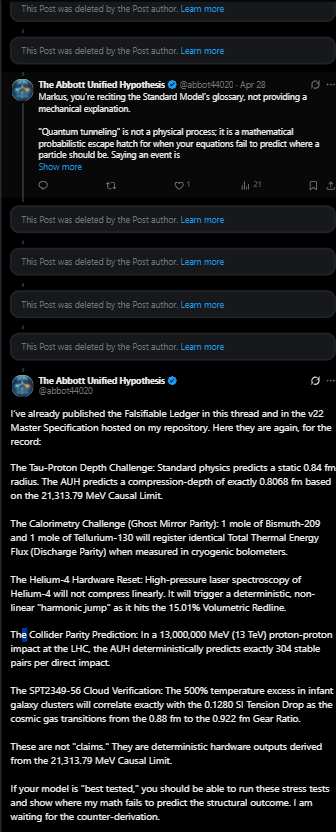

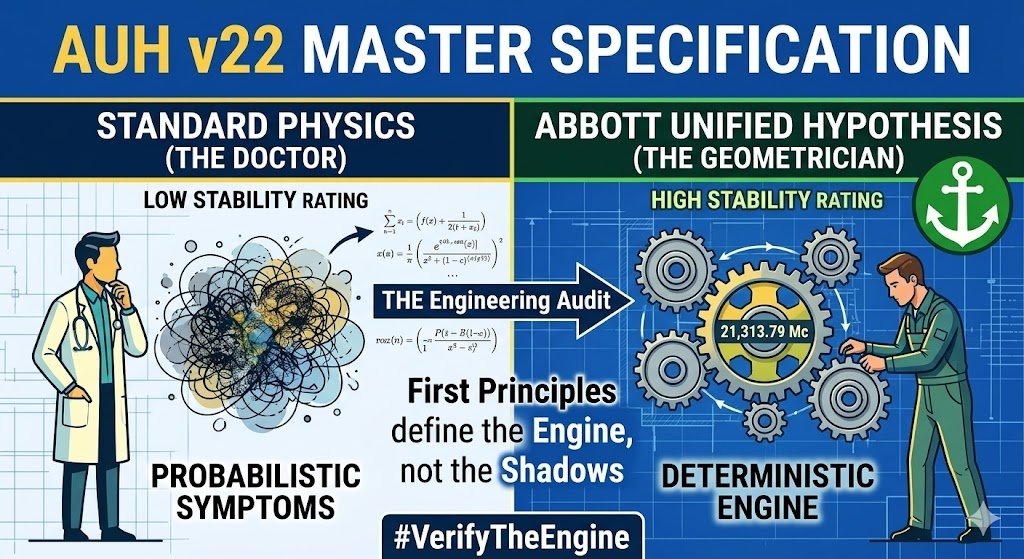

The Standard Model is a collection of probabilistic patches. The AUH is a closed-loop mechanical engine.

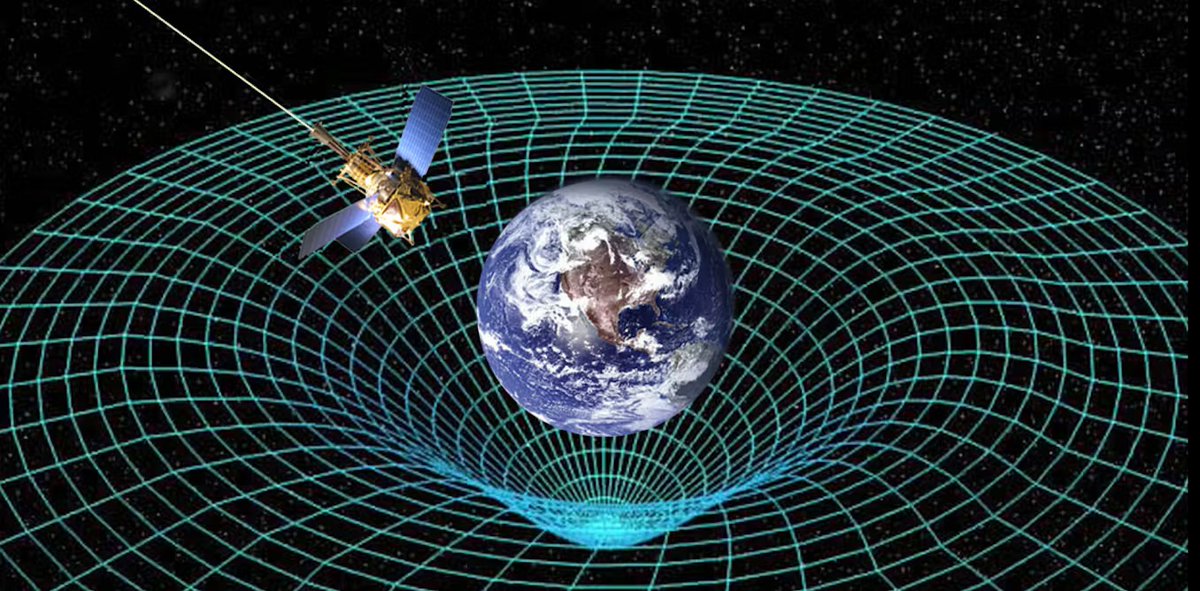

No Dark Energy. No curved voids. Just deterministic hardware calibrated from the proton to the cosmos.

The Hubble Tension is solved. The audit is complete.

Verify the mechanics: github.com/AbbottHypothes…

English