Alex Boruch-Gruszecki

51 posts

@abgruszecki

Investigating how to build the future of coding using AI and programming languages. Postdoc in Arjun Guha's group

this is pretty much worst-case performance: no harness at all and a very simplistic prompt

🚨 Shocking: Frontier LLMs score 85-95% on standard coding benchmarks. We gave them equivalent problems in languages they couldn't have memorized. They collapsed to 0-11%. Presenting EsoLang-Bench. Accepted to the Logical Reasoning and ICBINB workshops at ICLR 2026 🧵

Sounds incredible until you read the fine print. The compiler generates less efficient code than GCC with all optimizations disabled. It doesn’t have its own assembler or linker. It lacks a 16-bit x86 code generator. And Carlini himself says it has “nearly reached the limits of Opus’s abilities.” New features and bugfixes kept breaking existing functionality.

So what did $20,000 and two weeks actually buy? A compiler that passes 99% of GCC’s torture tests but can’t match the output quality of a tool that’s had 37 years of human engineering. That’s the constraint nobody’s pricing in.

The real story is in the cost curve, not the capability demo. $20,000 for 100,000 lines means $0.20 per line of generated code. A senior compiler engineer costs roughly $150/hour. At maybe 50 polished lines per hour for something this complex, that’s $3/line. AI just did it 15x cheaper, and it will only get cheaper from here.

But the code isn’t equivalent. The AI version needs a human to finish the assembler, fix the linker, optimize the output, and prevent regressions. Those are the hardest 20% of the problem, and they represent 80% of the engineering value. Anthropic built the demo. Shipping the product still requires humans.

This tells you exactly where we are in the autonomous software timeline. AI can now produce impressive first drafts of complex systems at trivial cost. Turning those drafts into production software still requires the judgment that costs $300K+ per year in compiler engineer salary. The gap between “compiles the Linux kernel” and “replaces GCC” is measured in decades of accumulated engineering wisdom that no model has internalized yet.

The companies that understand this will use agent teams to generate the 80% and hire engineers to finish the 20%. The companies that don’t will ship $20,000 compilers that produce slower code than a free tool from 1987.
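
The per-line cost comparison in the post above can be reproduced with a few lines of Python. This is only a sketch of the post's own arithmetic: the dollar amounts, line count, hourly rate, and lines-per-hour figure are the post's rough estimates, and the variable and function names are made up for illustration.

```python
# Figures as quoted in the post; all of them are the post's rough estimates.
AI_TOTAL_COST_USD = 20_000          # stated total spend on the compiler
AI_LINES = 100_000                  # stated size of the generated code
ENGINEER_RATE_USD_PER_HOUR = 150    # post's estimate for a senior compiler engineer
ENGINEER_LINES_PER_HOUR = 50        # post's guess at polished lines per hour


def cost_per_line(total_cost_usd: float, lines: float) -> float:
    """Return the average cost in USD of one line of code."""
    return total_cost_usd / lines


ai_cost = cost_per_line(AI_TOTAL_COST_USD, AI_LINES)
human_cost = cost_per_line(ENGINEER_RATE_USD_PER_HOUR, ENGINEER_LINES_PER_HOUR)
ratio = human_cost / ai_cost

print(f"AI:    ${ai_cost:.2f} per line")
print(f"Human: ${human_cost:.2f} per line")
print(f"Human cost is about {ratio:.0f}x the AI cost per line")
```

The ratio only compares cost per line; it says nothing about whether the lines are equivalent in quality, which is the caveat the post goes on to make.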

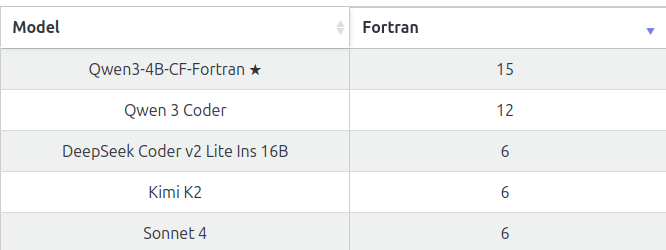

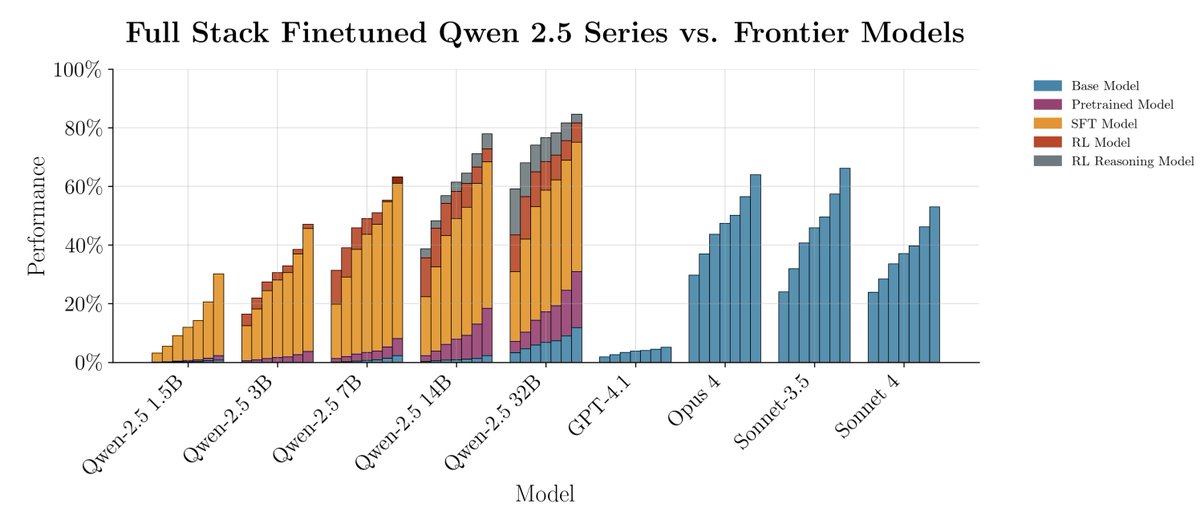

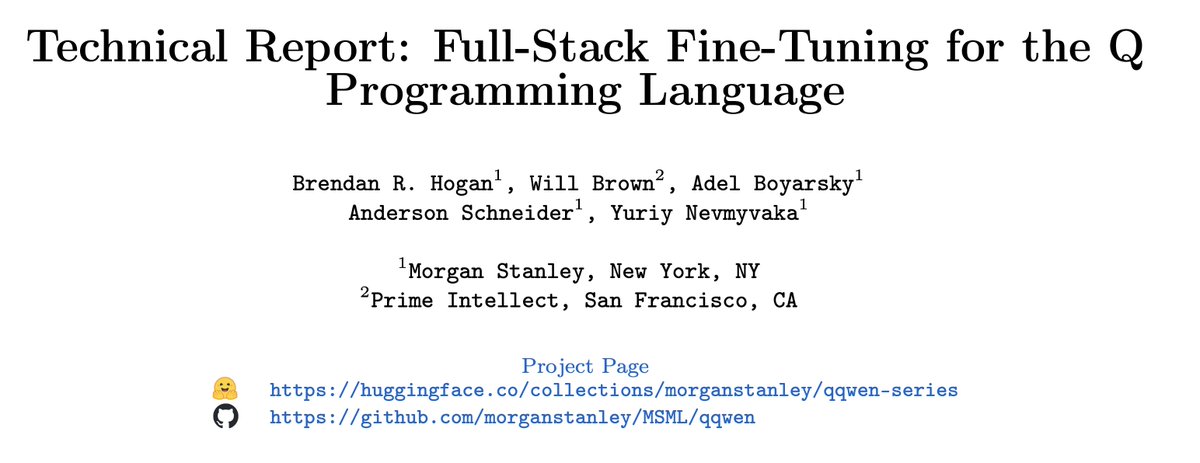

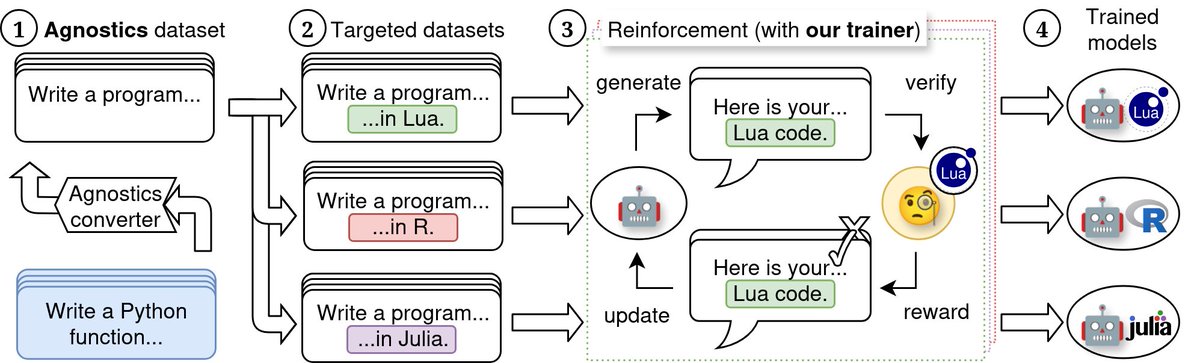

We show a way to reinforce an LLM’s ability to code in *any* programming language! We turn Qwen 3 4B and 8B into SOTA ≤16B models for low-resource programming languages, rivaling their 32B sibling. Find out more about our Agnostics project at agnostics.abgru.me , or here👇
