Zhiyuan Liu@NUS

@acharkq

Postdoc at NUS | AI for Science | Multimodal & Generative Models (Diffusion & AR) | PhD from NUS

Towards 3D Molecule-Text Interpretation in Language Models

Language Models (LMs) have greatly influenced diverse domains. However, their inherent limitation in comprehending 3D molecular structures has considerably constrained their potential in the biomolecular domain. To…

In 2009, Google created the PhD Fellowship Program to recognize and support outstanding graduate students pursuing exceptional research in computer science and related fields. Today, we congratulate the recipients of the 2023 Google PhD Fellowship! goo.gle/3PYfLXl

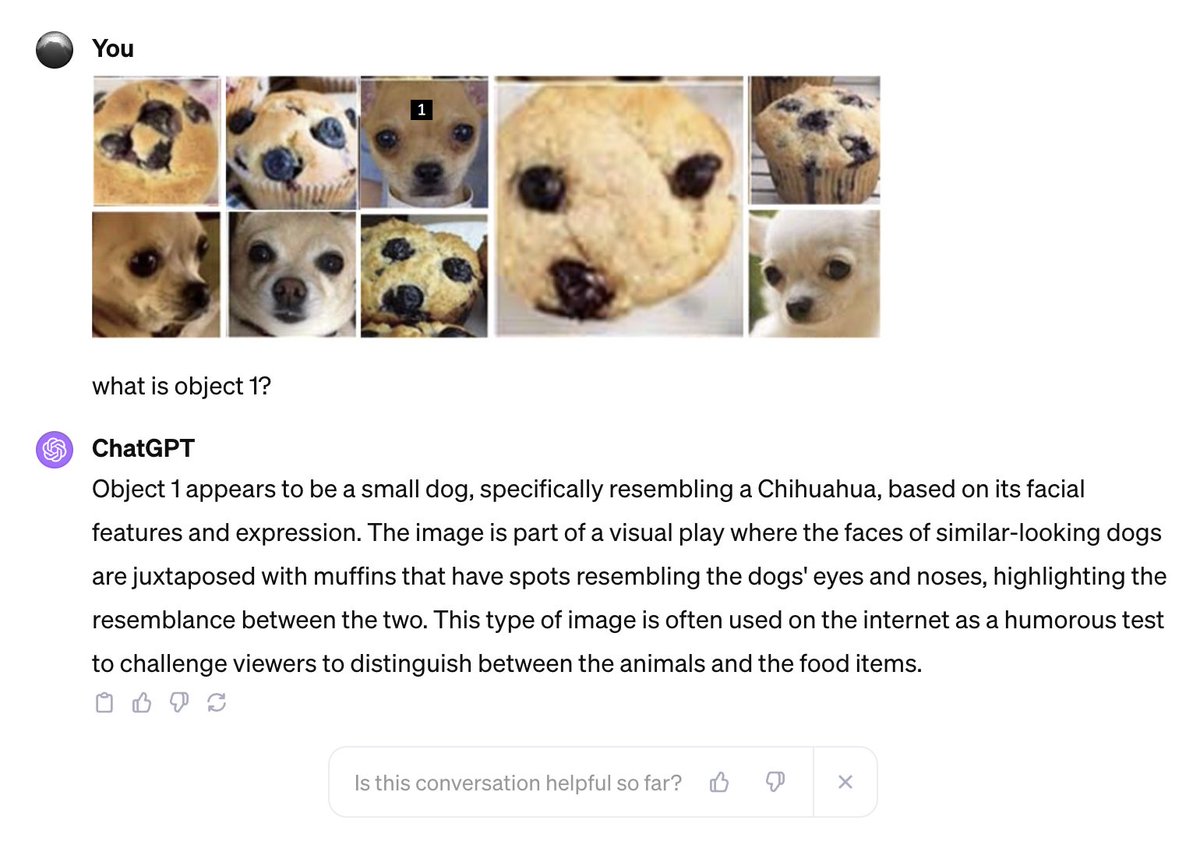

The famous "Chihuahua or Muffin" problem in computer vision is considered solved by GPT-4V on social media. But really? The answer is NO. GPT-4V cannot reason well about the same images from the original "Chihuahua or Muffin" grid when they are arranged in a different layout. I experimented by rearranging the same images from the classic 4x4 grid into a different layout. First, GPT-4V does not recognize the content in detail and miscounts the number of images. Then, when asked about the third image in the top row, GPT-4V misrecognizes a Chihuahua as a muffin. So the "Chihuahua or Muffin" problem has not been solved yet. But how can GPT-4V work so well on the original image? My guess is that since that image is everywhere, GPT-4V was very likely trained on it and memorized its labels.