Aditi Mavalankar

140 posts

@aditimavalankar

Research Scientist @DeepMind

London has incredible talent & entrepreneurial spirit. Thrilled to deepen @GoogleDeepMind’s roots here with our spectacular new building Platform 37 - a nod to AlphaGo’s legendary Move 37. It’s a tribute to Science & AI, and an inspirational space for our next big breakthroughs!

3 years ago we could showcase AI's frontier w. a unicorn drawing. Today we do so w. AI outputs touching the scientific frontier: cdn.openai.com/pdf/4a25f921-e… Use the doc to judge for yourself the status of AI-aided science acceleration, and hopefully be inspired by a couple examples!

Excited to share our new paper, "DataRater: Meta-Learned Dataset Curation"! We explore a fundamental question: How can we *automatically* learn which data is most valuable for training foundation models? Paper: arxiv.org/pdf/2505.17895 to appear @NeurIPSConf Thread 👇

An advanced version of Gemini with Deep Think has officially achieved gold medal-level performance at the International Mathematical Olympiad. 🥇 It solved 5️⃣ out of 6️⃣ exceptionally difficult problems, involving algebra, combinatorics, geometry and number theory. Here’s how 🧵

Engineers spend 70% of their time understanding code, not writing it. That’s why we built Asimov at @reflection_ai. The best-in-class code research agent, built for teams and organizations.

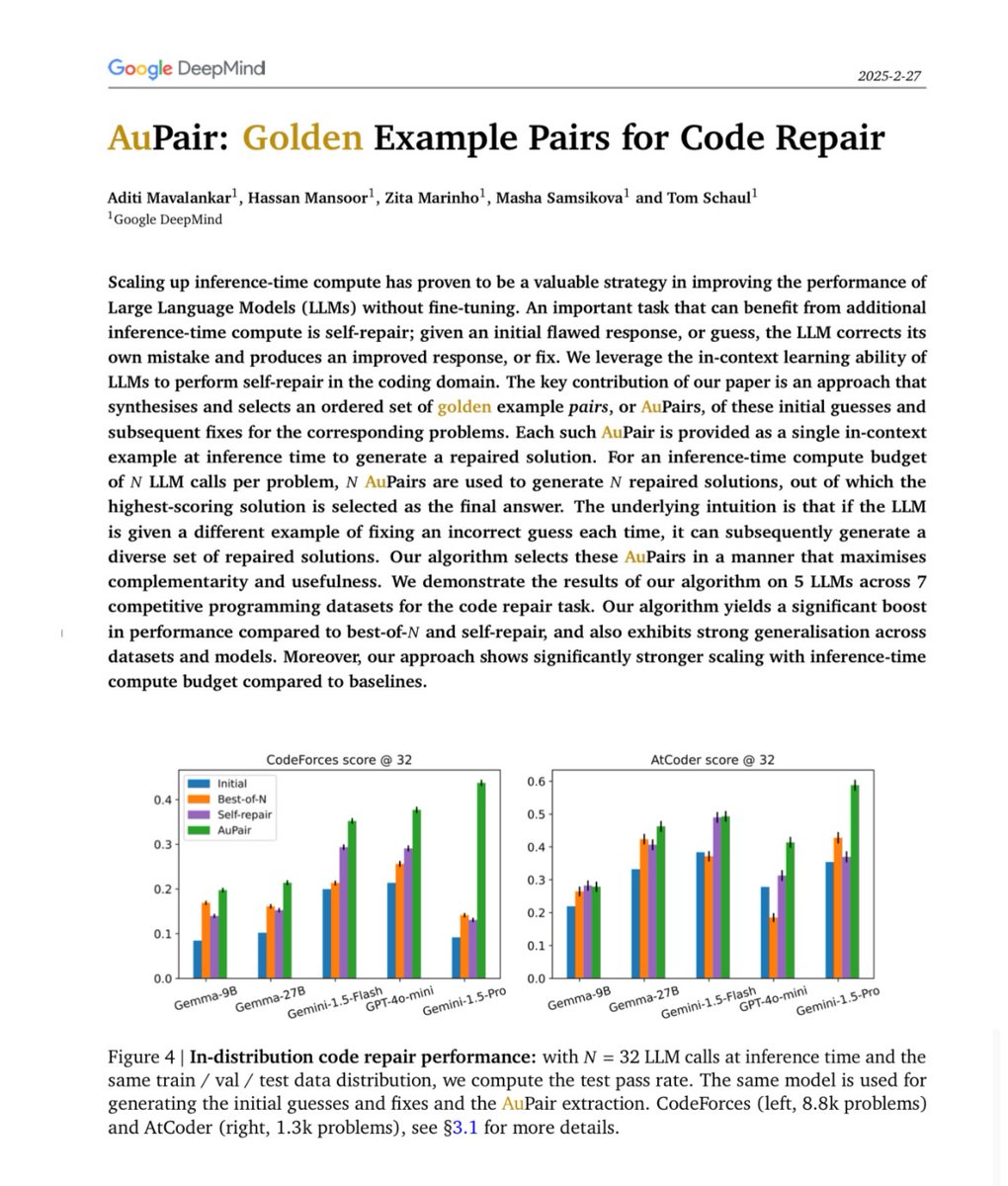

Excited to share our recent work, AuPair, an inference-time technique that builds on the premise of in-context learning to improve LLM coding performance! arxiv.org/abs/2502.18487
