@Antrunt @BorisAKnyazev @ebelilov Thanks! It's the same as with standard optimizers: performance degrades smoothly the further you move from the optimal LR. Good starting points for Celo2 are 1e-4 or 1/20 of a tuned AdamW LR.
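
The heuristic above can be sketched as a small helper. It is a minimal illustration, not part of any Celo2 release. The function name `celo2_lr_candidates` and the `factors` grid are hypothetical. The idea of refining each start with a coarse multiplicative sweep is an assumption, resting on the point that performance degrades smoothly away from the optimum.

```python
def celo2_lr_candidates(
    tuned_adamw_lr: float,
    factors: tuple[float, ...] = (0.25, 0.5, 1.0, 2.0, 4.0),
) -> dict[str, list[float]]:
    """Hypothetical helper: candidate Celo2 learning rates to try."""
    # The two starting points from the post: a fixed 1e-4,
    # or 1/20 of the learning rate already tuned for AdamW.
    starts = {"fixed": 1e-4, "from_adamw": tuned_adamw_lr / 20}
    # Assumption: since performance varies smoothly with LR, a coarse
    # multiplicative grid around each start is enough to refine it.
    return {name: [lr * f for f in factors] for name, lr in starts.items()}


candidates = celo2_lr_candidates(3e-3)
print(candidates["from_adamw"][2])  # the unscaled AdamW-derived start
```

For example, with a tuned AdamW LR of 3e-3, the AdamW-derived starting point is 3e-3 / 20 = 1.5e-4, which is close to the fixed 1e-4 suggestion.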

More on LR sensitivity in the appendix:

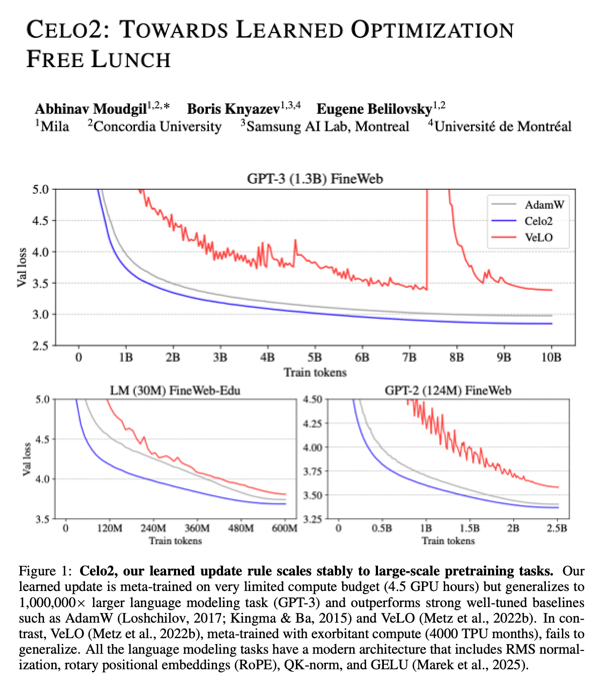
