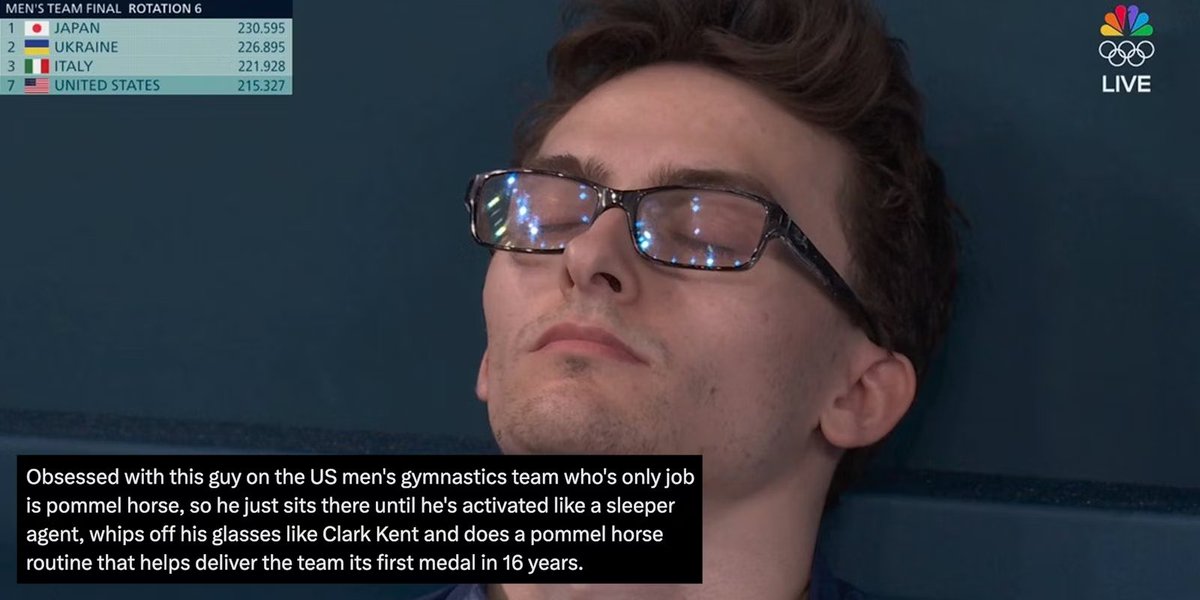

Jason Eisner

@adveisner

Professor of CS at Johns Hopkins University, ACL Fellow. My tweets speak only for me.

We’re thrilled to announce the #HopkinsDSAI Postdoctoral Fellowship Program! We’re looking for candidates across all areas of data science and AI, including science, health, medicine, the humanities, engineering, policy, and ethics. Apply today! ai.jhu.edu/postdoctoral-f…

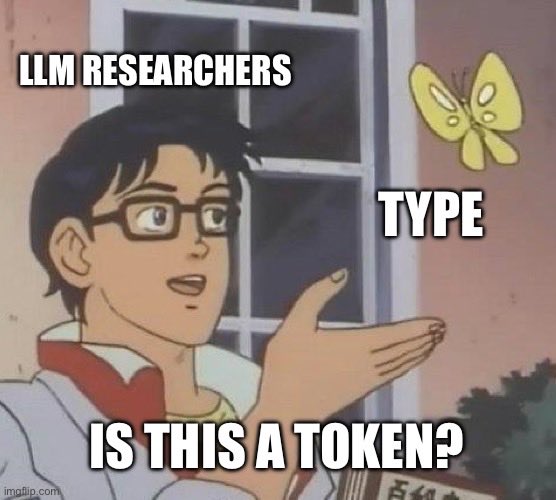

Used cryptocurrency to purchase one of my fave philosophical ideas: The Type/Token Distinction! My NFT certifies my Sole Ownership of the Platonic Idea. But it can't stop you from using the idea as many times as you want (0, 1, 2, …). SEE THE DISTINCTION? mintable.app/art/item/The-T…

@AaronSchein I’ve tried teaching this distinction many times and students’ eyes always glaze over. It’s just hard to motivate without personally experiencing a problem

Thanks @_akhaliq for sharing our work led by @BoshiWang2 from @osunlp, so let's chat about how LLMs should learn to use tools, a necessary capability of language agents.

Tools are essential for LLMs to transcend the confines of their static parametric knowledge and text-in-text-out interface, empowering them to acquire up-to-date information, call upon external reasoners, and take consequential actions in external environments.

But existing tool learning strategies for LLMs are not fully unleashing this potential: we find existing LLMs, including GPT-4 and ones specifically tuned for tools like ToolLLaMA, only achieve 30% to 60% correctness in tool use. That is very far from the high level of accuracy needed for practical deployment in high-stakes scenarios.

A closer look at existing methods reveals that people seem to be overly focused on rushing to add as many tools as possible or making it easy to add new tools, either through in-context learning or fine-tuning. Perhaps surprisingly, a critical aspect, how to make LLMs use existing tools as accurately as possible, is largely overlooked.

How to Truly Master a Tool?

We turn to successful precedents in biological systems such as humans, apes, and corvids. Learning to use a tool is a rather advanced cognitive function that depends on many other cognitive functions:

> Trial and error is essential for tool learning. We do not master a tool solely by reading the ‘user manual’; rather, we explore different ways of using the tool, observe the outcome, and learn from both successes and failures.
> Intelligent animals do not just do random trial and error; we proactively imagine or simulate plausible scenarios that are not currently available to perception for exploration.
> Finally, memory, both short-term and long-term, is instrumental for the progressive learning and recurrent use of tools.

So why should we expect an LLM to magically master a tool by just looking at the ‘user manual’ (API specification) or several examples? That's hard even for humans, who are equipped with many cognitive substrates missing in LLMs!

Simulated Trial and Error (STE)

> STE is a biologically inspired method for tool-augmented LLMs that combines trial and error, imagination, and memory.
> Given a tool, STE leverages an LLM to simulate, or ‘imagine’, plausible scenarios for using the tool. It then iteratively interacts with the API to fulfill the scenario by synthesizing, executing, and observing the feedback from API calls, and then reflects on the current trial. We devise memory mechanisms to improve the quality of the simulated instructions. A short-term memory facilitates deeper exploration in a single episode, while a long-term memory maintains progressive learning over a long horizon.
> STE allows an LLM to sufficiently explore and probe the capability boundary of a tool. With the tool use examples from STE, one can either use them for in-context learning or fine-tuning.

Result Highlights

> GPT-4: 60.8 -> 76.3 📈
> Mistral-7B: 30.1 -> 76.8 📈📈 and outperforms GPT-4!
> Llama-2-7B: 10.7 -> 73.3 📈📈📈
> Also substantially outperforms existing tool-augmented LLMs like ToolLLaMA-v2 (37.3)

But will tool fine-tuning destroy the LLM's other capabilities? No worries, we got you covered. A simple experience replay strategy is enough to maintain an LLM's existing capabilities while learning new tools!

📌 Paper: arxiv.org/abs/2403.04746
📌 Code: github.com/microsoft/simu… (fairly easy to use. try it out on your own tools!)
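For readers who want the shape of the STE loop in code, here is a rough Python sketch of the three ingredients named above: imagination, trial and error, and short-term plus long-term memory. It is not the authors' implementation. Every name in it (`simulated_trial_and_error`, `Trial`, and the `toy_*` stand-ins for the LLM and the tool) is hypothetical, and the "LLM" is a hard-coded stub so the example runs on its own.

```python
import re
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Trial:
    query: str        # imagined user scenario
    api_call: str     # call synthesized for the tool
    observation: str  # feedback returned by the tool
    reflection: str   # "success" or "failure"


def simulated_trial_and_error(
    tool: Callable[[str], str],
    imagine: Callable[[List[str]], str],
    propose_call: Callable[[str, List[Trial]], str],
    reflect: Callable[[str, str, str], str],
    episodes: int = 2,
    max_trials: int = 3,
) -> List[Trial]:
    """Hypothetical sketch of an STE-style exploration loop."""
    long_term_memory: List[str] = []  # scenarios explored in earlier episodes
    examples: List[Trial] = []        # successful trials, usable for ICL or fine-tuning
    for _ in range(episodes):
        # Imagination: propose a scenario, conditioned on what was explored before.
        query = imagine(long_term_memory)
        short_term_memory: List[Trial] = []  # trials within this episode only
        for _ in range(max_trials):
            call = propose_call(query, short_term_memory)
            observation = tool(call)
            reflection = reflect(query, call, observation)
            trial = Trial(query, call, observation, reflection)
            short_term_memory.append(trial)
            if reflection == "success":
                examples.append(trial)
                break
        long_term_memory.append(query)
    return examples


# --- Toy stand-ins (assumptions, not a real LLM or API) ---

def toy_tool(call: str) -> str:
    """A tiny 'API' that only understands add(a,b)."""
    match = re.fullmatch(r"add\((\d+),(\d+)\)", call)
    if match is None:
        return "error: unknown call"
    return str(int(match.group(1)) + int(match.group(2)))


def toy_imagine(long_term_memory: List[str]) -> str:
    """Produce a new scenario that differs from earlier ones."""
    n = len(long_term_memory) + 1
    return f"What is {n} + {n}?"


def toy_propose(query: str, short_term_memory: List[Trial]) -> str:
    """Guess a wrong format first, then correct it after seeing a failure."""
    a, b = re.findall(r"\d+", query)
    if not short_term_memory:
        return f"{a} + {b}"
    return f"add({a},{b})"


def toy_reflect(query: str, call: str, observation: str) -> str:
    return "failure" if observation.startswith("error") else "success"
```

Calling `simulated_trial_and_error(toy_tool, toy_imagine, toy_propose, toy_reflect)` returns only the successful trials. In the paper's setting those would feed in-context learning or fine-tuning; here they are just collected in a list.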

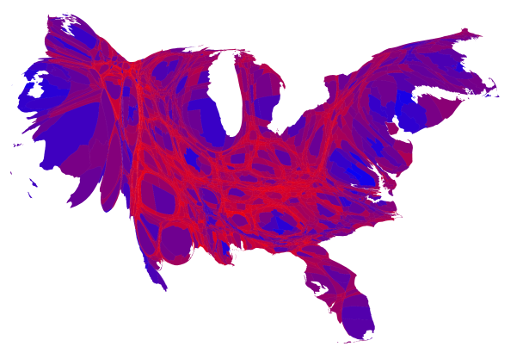

WHAT: "AI-Curated Democratic Discourse," a JSALT hackathon team this summer (Jun 10-Aug 2) GOAL: Redesign the social media UI to raise the quality of reading and posting, with the help of LLMs🤯 WHO: Looking for 1 more funded, in-person NLP PhD student! DM me with yr skillz.
