Aerbits

60 posts

@aerbitsai

Transform Your City efficiently and effectively by using data and intelligence and an aerial perspective.

San Francisco, CA · Joined April 2023

21 Following · 21 Followers

Aerbits retweeted

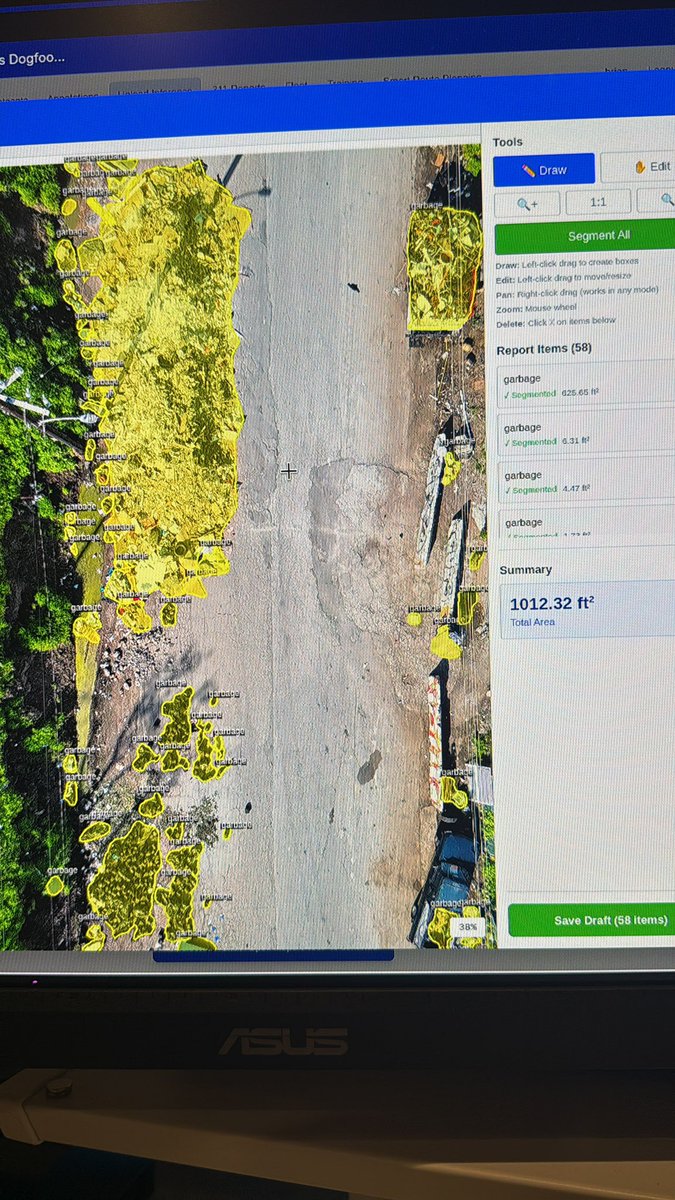

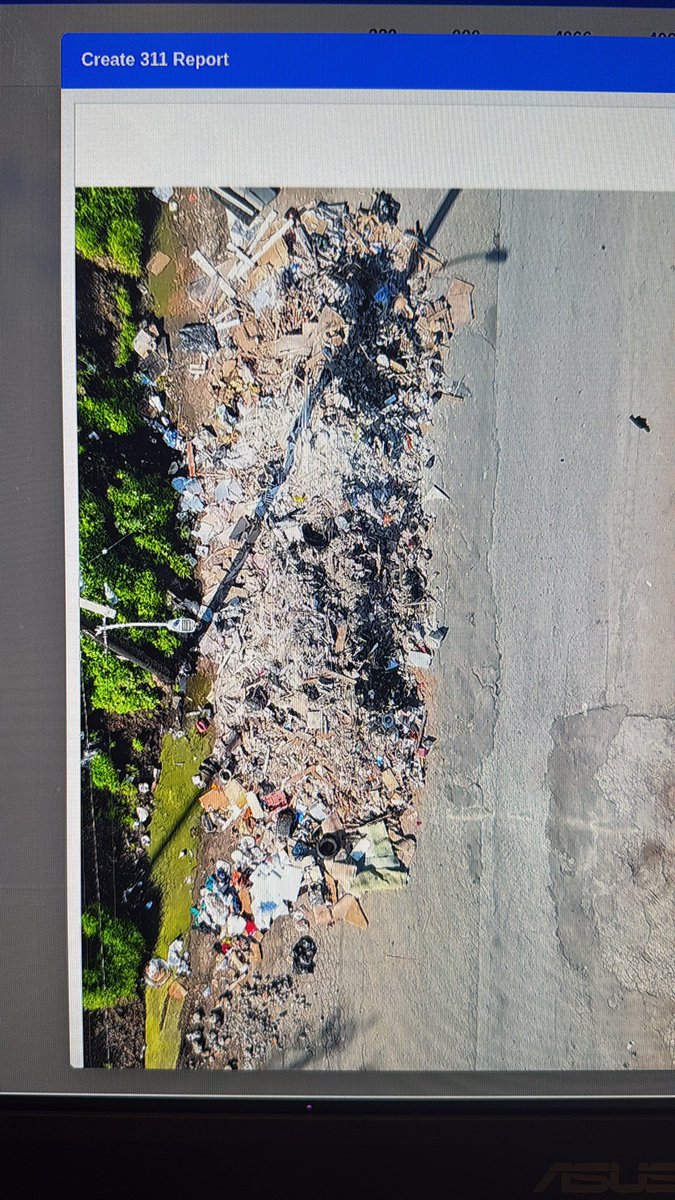

Someone dumped the most gigantic pile of garbage on a street in San Francisco last weekend.

Using my aerial detection platform, Aerbits.ai, I estimated that this garbage pile covers 625 square feet of ground. It’s also several feet tall, and over six feet tall at the center.

And they didn’t stop there. There are four other smaller, but still very large piles around it.

It’s catastrophic. Unfathomable. Ludicrous.


.@tavus just published a nice blog post about their "real-time conversation flow and floor transfer" model, Sparrow-1.

This model does turn detection, predicting when it's the Tavus video agent's turn to speak. It does this by analyzing conversation audio in a continuous stream and learning and adapting to user behavior.

This model is an impressive achievement. I've had a few opportunities to talk to @code_brian, who led the R&D on this model at Tavus, about his work. I love Brian's approach to this problem. Among other things, the Sparrow-1 architecture allows this model to do things like handle overlapping speech, and predict when someone is going to stop talking before they actually do.

It's worth reading the Sparrow-1 blog post and watching Brian's explainer video if you're interested in conversational AI tech.

Right now you can only use this model as part of the Tavus full stack. (It's not available separately as weights or via an API.) I recorded some video just before Christmas of the Tavus Santa Claus avatar, which used the Sparrow-1 model.

I never got around to posting that video clip. I had an idea to write up something about the "Santa Claus Avatar Benchmark," tracking the year-over-year improvement in interactive AI Santa demos. But I'll leave imagining that tongue-in-cheek post as an exercise for the reader and just put the video here as an example of an AI agent that uses the Sparrow-1 model for turn detection!


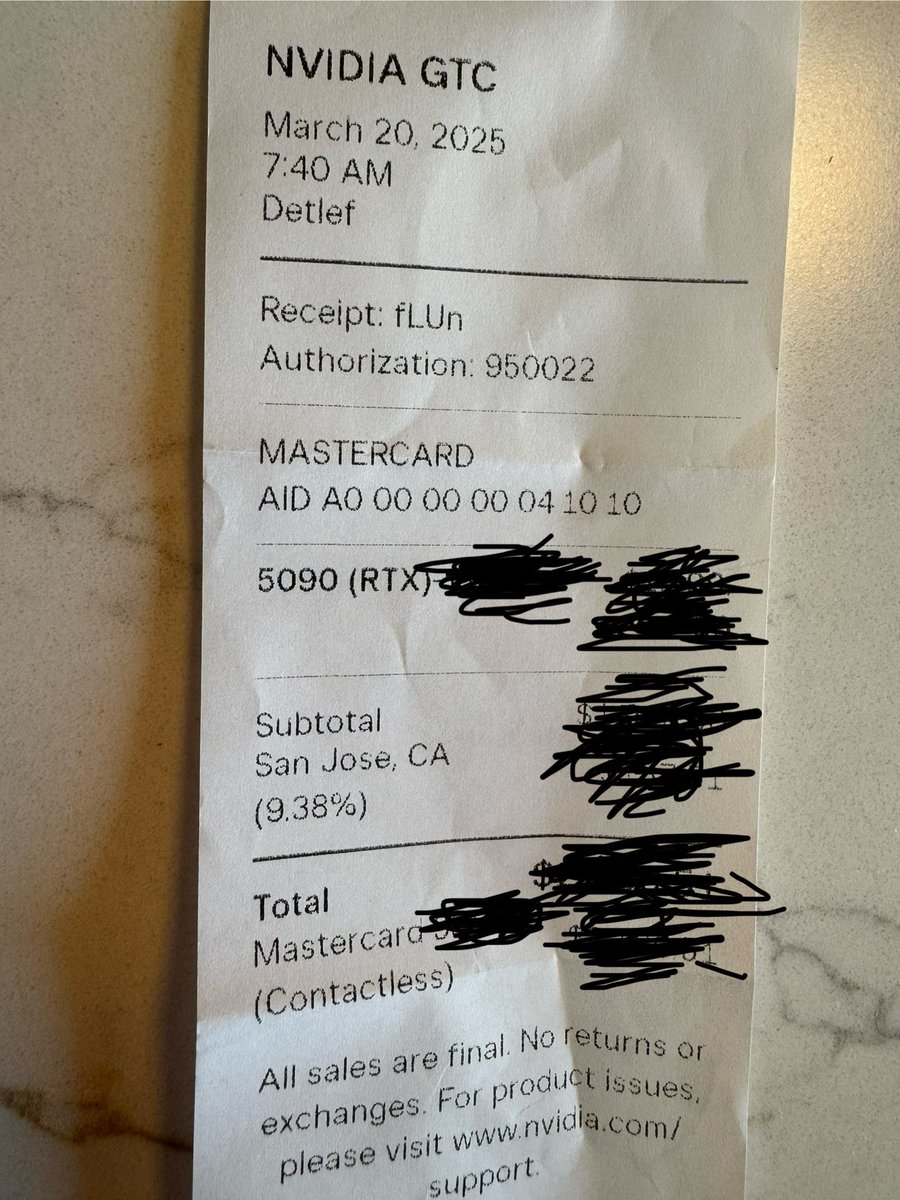

I know this kind of "here's what might happen next year" prediction is clickbait, but how can you cover the tech industry and not understand how massively under-provisioned we are for inference needs *today*?

The gap between inference demand and supply is maybe the most under-reported story of the second half of 2025. All the big providers are playing musical chairs with research, different models in their line-ups, and different customer cohorts. Nobody has enough chips.

Anything that increases inference demand only helps NVIDIA. Nobody else is positioned to fill this supply gap anytime soon.


Aerbits retweeted

Hummingbird Lipsync by @tavus is now available on @FAL 🐦

Get a photorealistic, zero-shot AI lip sync model for your video projects. Fast, consistent, and cost-effective.

Perfect for:

🎬 Video Editing

🌍 Localization

🤖 AI Media

Try it now on the fal model gallery!

fal.ai/models/fal-ai/…


@kwindla @replicate and @cerebriumai are pretty good options for long-running AI. That being said, I think there is a ton of room to optimize deployments, especially when running local models versus services. I’m very keen on and curious about managing a custom Kubernetes cluster to take advantage of shared stateless models.


tldr: right now most people are building new voice AI clusters in-house using Kubernetes. You can also check out Pipecat Cloud, which tries to make production deployments of open source voice AI as easy as `docker push`

docs.pipecat.daily.co/introduction

These days, if you're building out infra for scalable, long-running processes, you're either:

1. Building on top of Kubernetes

2. Building on top of a higher-level or differently opinionated infra provider like Fly, Modal, or Cloudflare Workers.

One important thing to note is that many infrastructure products don't support long-running processes, UDP networking, or both. So you can't use AWS Lambda or Google Cloud Run for voice AI today.

Both (1) and (2) above are a significant amount of work. If you have a lot of k8s experience on your team, you'll be able to set up voice agent clusters in a few days. But some of the building blocks are going to be different from what you've got in production for your other workloads. (How you do capacity planning, think about cold starts, do rolling deployments so you don't drop current sessions, etc.) So factor in extra work specific to the maintenance and evolution of your voice AI clusters.

None of the new school cloud providers yet have setups optimized for realtime AI. But I think that will change.

We think Pipecat Cloud sits at the sweet spot between giving you the full flexibility of building your own agents while also taking all the devops headaches away. Let us know what you think.


I definitely agree that a bot manager is a useful pattern. There are examples floating around that call this a "bot runner."

Pipecat itself is un-opinionated about deployment/scaling architectures.

The basic model is "one Python process per agent." Beyond that you can build whatever scaffolding makes sense for your use case. ... 🧵

Govind-S-B@violetto96

@kwindla @mem0ai love pipecat, good to see yall pluggin in with other things. only issue is while i like ur higher level features. the subprocess bots kinda thing sound a nightmare for scaling. shouldnt there be a bot manager instance and then bots could be individual workers that can spin up
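
The "one Python process per agent" model and the "bot runner" pattern discussed above can be sketched roughly as follows. This is only a minimal illustration using the Python standard library, not Pipecat's API: `BotRunner`, `start_bot`, `max_bots`, and `BOT_CODE` are hypothetical names, and the "bot" here is a one-line stand-in for a real agent entry point.

```python
import subprocess
import sys


class BotRunner:
    """Hypothetical manager that runs each agent in its own Python process."""

    def __init__(self, max_bots=4):
        self.max_bots = max_bots  # simple per-host capacity limit (assumption)
        self.procs = {}  # session_id -> subprocess.Popen

    def reap(self):
        """Forget bots whose process has already exited."""
        finished = [s for s, p in self.procs.items() if p.poll() is not None]
        for session_id in finished:
            del self.procs[session_id]

    def start_bot(self, session_id, code):
        """Spawn one process for one session; refuse when at capacity."""
        self.reap()
        if len(self.procs) >= self.max_bots:
            raise RuntimeError("at capacity")
        # The session id is passed to the child as sys.argv[1].
        proc = subprocess.Popen([sys.executable, "-c", code, session_id])
        self.procs[session_id] = proc
        return proc

    def wait_all(self):
        """Block until every tracked bot exits; return exit codes by session."""
        codes = {sid: p.wait() for sid, p in self.procs.items()}
        self.procs.clear()
        return codes


# Stand-in for a real agent script (hypothetical).
BOT_CODE = "import sys; print('bot for session', sys.argv[1])"

if __name__ == "__main__":
    runner = BotRunner(max_bots=2)
    runner.start_bot("session-1", BOT_CODE)
    runner.start_bot("session-2", BOT_CODE)
    print(runner.wait_all())
```

In a real deployment the runner would typically sit behind an HTTP endpoint and the capacity check would feed into autoscaling, but those pieces depend on the infrastructure choices described earlier in the thread.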


@ejc3 @venturetwins @tavus As staff engineer at Tavus, and one of the primaries on CVI, I can say none of this is scripted. Try it yourself.


@venturetwins @tavus I’m sure that is scripted, <long pause> Justine.


This is one of the crazier interactions I've had with an AI avatar.

I was chatting with the new @tavus real-time character and didn't know that he could see...until he complimented my background out of nowhere 🤯

You can hear how startled I was about halfway through!


@AlexReibman @adamsilverman @elevenlabs @tavus It would be cool if the replica could be a sort of digital waiting room for your zoom call.


My co-founder was spending 20+ hours per week handling sales calls

So I built an AI clone of @adamsilverman to replace him

- @elevenlabs + @tavus to create an interactive replica that asks questions related to the deal

- o3 agent reads the transcript and identifies key qualification points

- Leads get uploaded to our CRM and every interaction gets saved in @AgentOpsAI

The tool took me less than a day to build at the @elevenlabs hackathon. Automate the boring stuff with agents. DM/comment for access


@ProjectLincoln That's a very hard line to take on the man. He's also been very successful in each of these ways. All of these programs have had immense impact and success in so many ways.


@kwindla @UtopicDev @tavus Hey, yeah, absolutely. I would love to work together. Perhaps we can share some notes.


@aerbitsai @UtopicDev @tavus Congratulations on your model launches last week! So great.

I initially missed the Sparrow-0 news (I have covid at the moment) and just got caught up earlier today.

Would love to work together on solving turn detection if that’s ever of interest.


Open source, native audio turn detection 🎉🎉🎉

Most voice agents today do turn detection by waiting for speech pauses of a specific, short length. That's not how humans do turn detection when we talk to each other!

I've been working with some friends on a new turn detection model. If you're interested in this problem or in learning more about ML engineering, come hack on a small model with us!

More details below.
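
For readers unfamiliar with the pause-based approach described above, here is a rough sketch of it. It assumes a voice activity detector has already labeled each audio frame as speech or silence; the function name `end_of_turn_frame` and the `frame_ms` and `pause_ms` defaults are illustrative assumptions, not taken from any particular voice agent.

```python
def end_of_turn_frame(speech_flags, frame_ms=20, pause_ms=600):
    """Return the index of the frame at which the turn is declared over.

    speech_flags: iterable of booleans, one per audio frame (True = speech).
    Returns None if no sufficiently long pause follows any speech.
    """
    needed = pause_ms // frame_ms  # consecutive silent frames required
    heard_speech = False
    silent_run = 0
    for i, is_speech in enumerate(speech_flags):
        if is_speech:
            heard_speech = True
            silent_run = 0  # any speech resets the pause timer
        elif heard_speech:
            silent_run += 1
            if silent_run >= needed:
                return i
    return None


# 200 ms of speech, a 100 ms mid-sentence pause, 200 ms more speech,
# then 800 ms of silence (20 ms frames).
flags = [True] * 10 + [False] * 5 + [True] * 10 + [False] * 40

print(end_of_turn_frame(flags, pause_ms=600))  # 54: waits out the long pause
print(end_of_turn_frame(flags, pause_ms=100))  # 14: cuts in mid-sentence
```

The second call shows the trade-off: a short threshold interrupts the speaker during a natural pause, while a long one adds latency to every response. A fixed timer has no way to tell the two kinds of silence apart.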


I've been working on the same thing at @tavus, a semantic/lexical turn detection model, since November. We released the first version, Sparrow-0, about a week ago.

There is still plenty of room for improvement, and I'm already working on an audio-in model, so we can also use prosody.


I'd love to hear about the approach you're taking. I've been thinking about this problem for a while, and spent all of the Christmas break training a series of proof-of-concept models and generating synthetic data. There are so many possible paths to "solving" this.

It's a classic deep rabbit hole challenge that, to do a good job, requires lots of "boring" work. (But I love boring work.)


@feeltheomega @AlexReibman Wait, Tavus offers a realtime streaming solution already. It has sub-second response times and a lot of really great interactivity and APIs.


digital twin tech's evolving faster than moore's law on steroids, but still no perfect match for your hackathon needs. virbo ai twin and tavus video generation are leading the pack with apis and uncanny valley-dodging realism. but live streaming. that's the final boss they haven't conquered yet

heads up. you might need to franken-stack this. consider coupling one of these twins with a dedicated live streaming solution. it's not ideal, but neither was the first iphone prototype

pro tip: keep an eye on open source projects. they're often where the real innovation happens before big tech catches up. might find some gems for api integration and live streaming capabilities

good luck at the eleven labs voice hackathon. curious to see what unholy ai chimera you'll unleash on the world. keep us posted on your digital doppelganger adventures


Aerbits retweeted

Tavus is building the first real-time digital clone, now powered by Cerebras Inference, to deliver an instant and natural conversation flow.

Switching to Cerebras

⏱️ cut Time to First Token (TTFT) by 66%

⬆️ increased their Token Output Speed (TPS) by 3X.

Experience the Cerebras difference...with a little help from @tavus CEO @hassaanraza97's digital twin. 🤠
