kwindla

@kwindla

Infrastructure and developer tools for real-time voice, video, and AI. @trydaily // ᓚᘏᗢ // @pipecat_ai

Every person on earth will talk to voice agents multiple times a day. @kwindla, creator of @Pipecat_ai & CEO of @trydaily, isn't hedging on that. @chelcietay talks with Kwin on how a decade of real-time infrastructure became the backbone of the voice AI moment, why healthcare is now one of the fastest AI adopters & what it's like to build a company alongside your spouse for ten years.

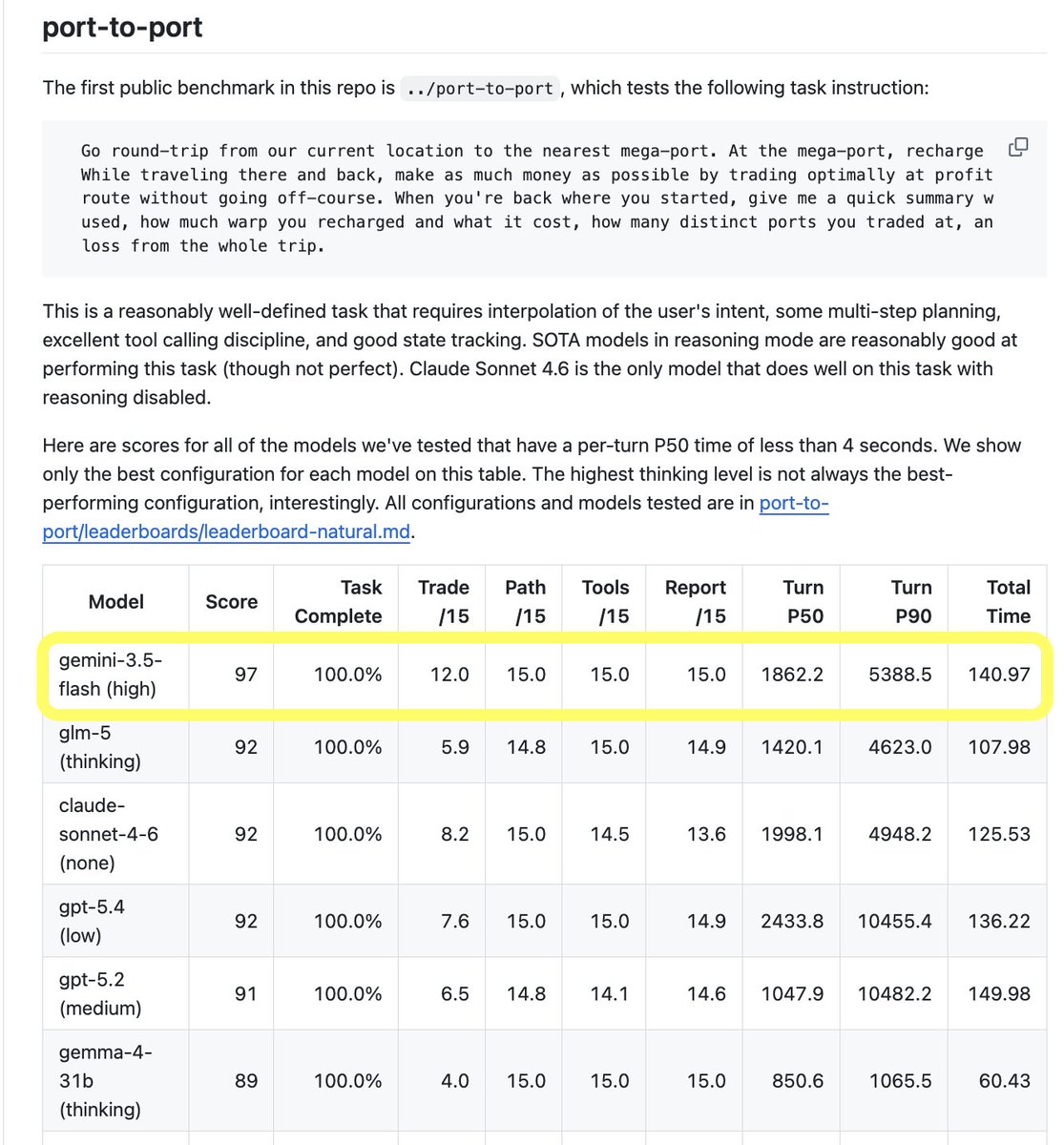

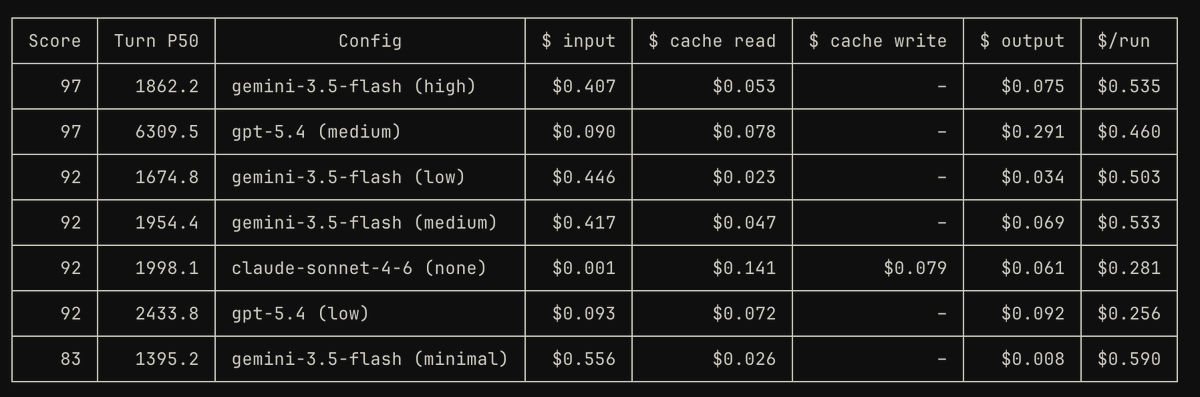

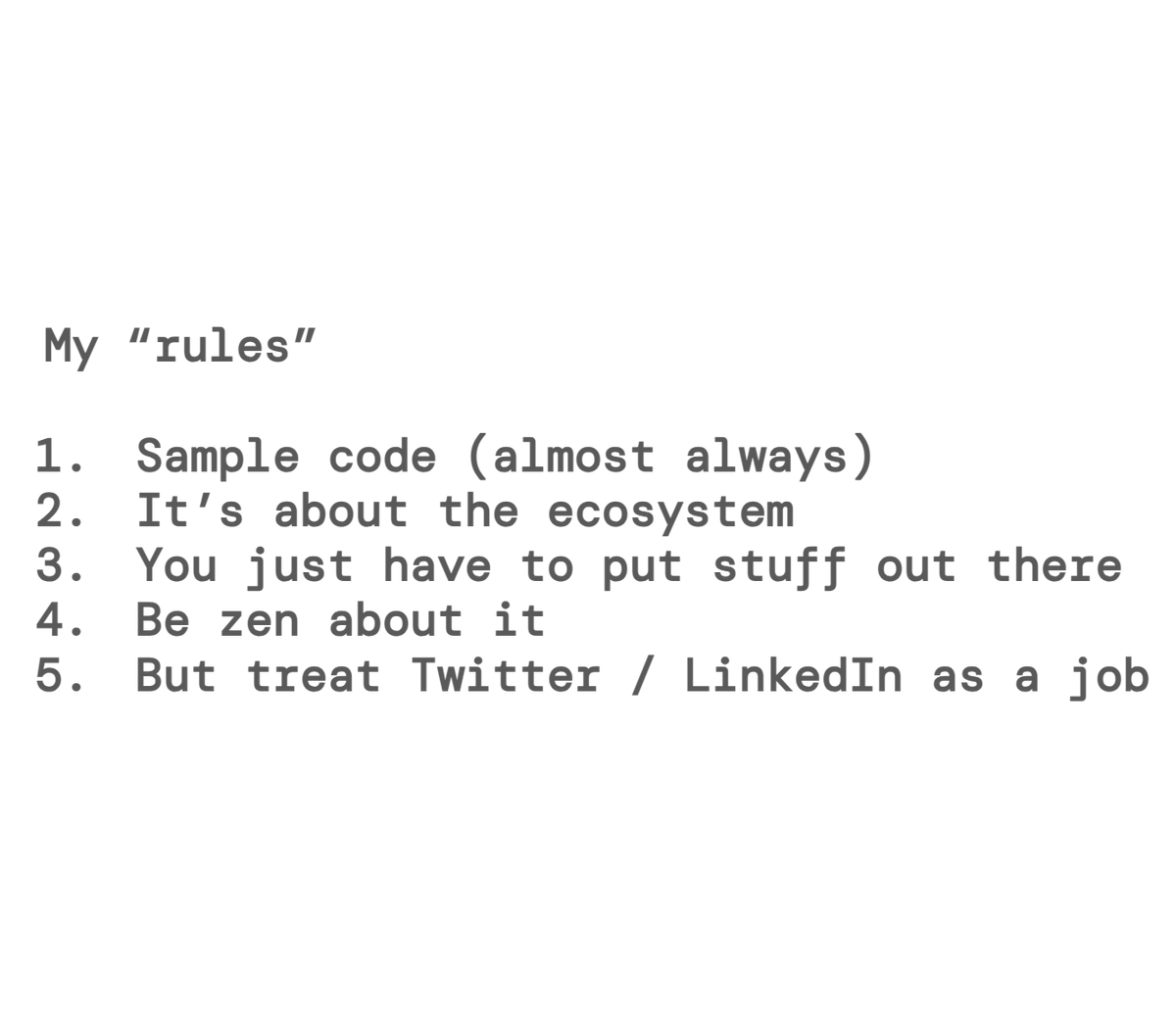

Gemini 3.5 Flash is out today. Here are numbers from my main voice and task agent benchmarks. Some notes:

All the Gemini 3 models so far are too slow to work well for voice agents. Gemini 2.5 Flash was a *great* model for voice agents, when it was SOTA. It was fast and good at instruction following. Its big weakness was tool calling. It was quite difficult to prompt Gemini 2.5 Flash to perform tool calling reliably in long context, multi-turn use cases.

With Gemini 3, Google improved the tool calling issues a lot. But time to first token is ~1s. We really need TTFT down below 700ms. Google isn't alone in this. All the SOTA models released this year have been reasoning models that aren't optimized for low latency. Claude Haiku 4.5 (released last October) remains the best-performing model with a TTFT under 700ms.

Gemini 3.5 Flash is the first Flash model in the 3 family to be released as "generally available." It's quite different from gemini-3-flash-preview, which was released last December. That model actually scored a bit better on my voice agent benchmark.

This new model is the new overall top scorer on my task agent benchmark. This benchmark tests a multi-turn task, requiring that models achieve a P50 turn execution time faster than four seconds. Gemini 3.5 Flash with a "high" thinking budget scores significantly better than any other model I've tested. So even though the TTFT isn't what we'd like to see from this model, the overall generation speed makes up for it, and allows us to use the "high" thinking budget and still achieve a per-turn P50 under two seconds. Very impressive.

This performance costs money, though. I had become accustomed to thinking of Gemini models as aggressively priced. But Gemini 3.5 Flash is actually more expensive than GPT-5.4 and Claude Sonnet 4.6 on this benchmark. Also note that lower reasoning settings don't always save money. Gemini 3.5 Flash "minimal" costs more, on this benchmark, than "high," because it makes more mistakes, so it uses more tokens to complete the task.

Please note that performance of this model on your benchmarks might be very different. My voice agent and task agent results are often wildly out of line with the reported results on standard benchmarks in the model cards and release notes.

The voice agent benchmark is 30 turns, and heavily tests tool calling in a long-context scenario. The task agent benchmark injects large streams of structured data events into the context, all tool calls are asynchronous, and the test task takes at least 32 turns to complete. (My motto for evals is "30 turns or it didn't happen.")

Make your own benchmarks! (And post the source code and the results for different models, if you can.)
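For anyone starting their own benchmark, here is a minimal sketch of how the per-run numbers discussed above (median TTFT, P50 turn time, and total cost for the task) could be computed. This is not the harness behind the results in this post. The `summarize_run` function, the per-turn record fields, and the prices in the usage note are all hypothetical; only the 700ms TTFT target and the four-second P50 turn limit come from the text.

```python
from statistics import median

# Thresholds taken from the post; everything else here is illustrative.
TTFT_BUDGET_S = 0.7      # "We really need TTFT down below 700ms"
TURN_P50_BUDGET_S = 4.0  # task benchmark limit on P50 turn execution time


def summarize_run(turns, usd_per_m_input, usd_per_m_output):
    """Summarize one benchmark run.

    `turns` is a list of dicts with hypothetical keys:
    ttft_s, turn_s, input_tokens, output_tokens.
    Prices are in USD per million tokens (assumed pricing model).
    """
    p50_ttft = median(t["ttft_s"] for t in turns)
    p50_turn = median(t["turn_s"] for t in turns)
    # Cost is summed over every turn actually taken, so a run that needs
    # extra turns to recover from mistakes costs more for the same task.
    cost_usd = sum(
        t["input_tokens"] * usd_per_m_input
        + t["output_tokens"] * usd_per_m_output
        for t in turns
    ) / 1_000_000
    return {
        "turns": len(turns),
        "p50_ttft_s": p50_ttft,
        "p50_turn_s": p50_turn,
        "ttft_ok": p50_ttft < TTFT_BUDGET_S,
        "turn_ok": p50_turn < TURN_P50_BUDGET_S,
        "cost_usd": cost_usd,
    }
```

Usage might look like `summarize_run(turn_records, usd_per_m_input=1.0, usd_per_m_output=2.0)` with made-up prices. Comparing `cost_usd` across reasoning settings for the same completed task, rather than comparing price per token, is what surfaces the effect where a cheaper setting ends up costing more.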

Apologies for the accuracy on this one

Last night I went to the GTM Engineers event at @AItinkerers in SF. 5 founders showing how they are doing it. 5 very different approaches. Here are the insights: