Anastasis Germanidis

@agermanidis

Simple ideas, pursued maximally. Co-Founder & Co-CEO @runwayml.

Runway Characters are now available in the Runway iOS app. Have a conversation on the go. Or point your camera at the world and talk to a character that can actually see what you see. Try it today at the link below.

Today we're also introducing the Runway Fund, an investment vehicle dedicated to backing the next generation of companies building across AI, media and world simulation. For the past several years, we've been investing quietly with this fund, backing a range of companies, including @cartesia, @lancedb and @tamarindbio.

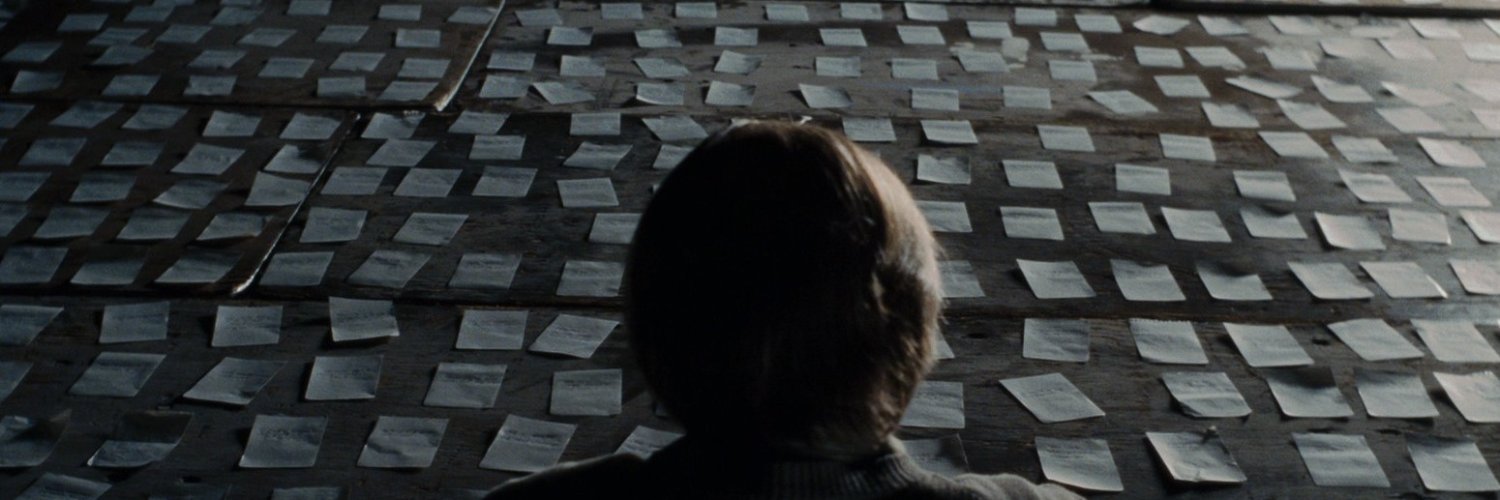

Generate videos with nothing but words. If you can say it, now you can see it. Introducing, Text to Video. With Gen-2. Learn more at research.runwayml.com/gen2

AMI (Yann LeCun) is modeling physics. World Labs (Fei-Fei Li) is modeling the physical objects of the world. Genie is modeling both. Really good analysis.

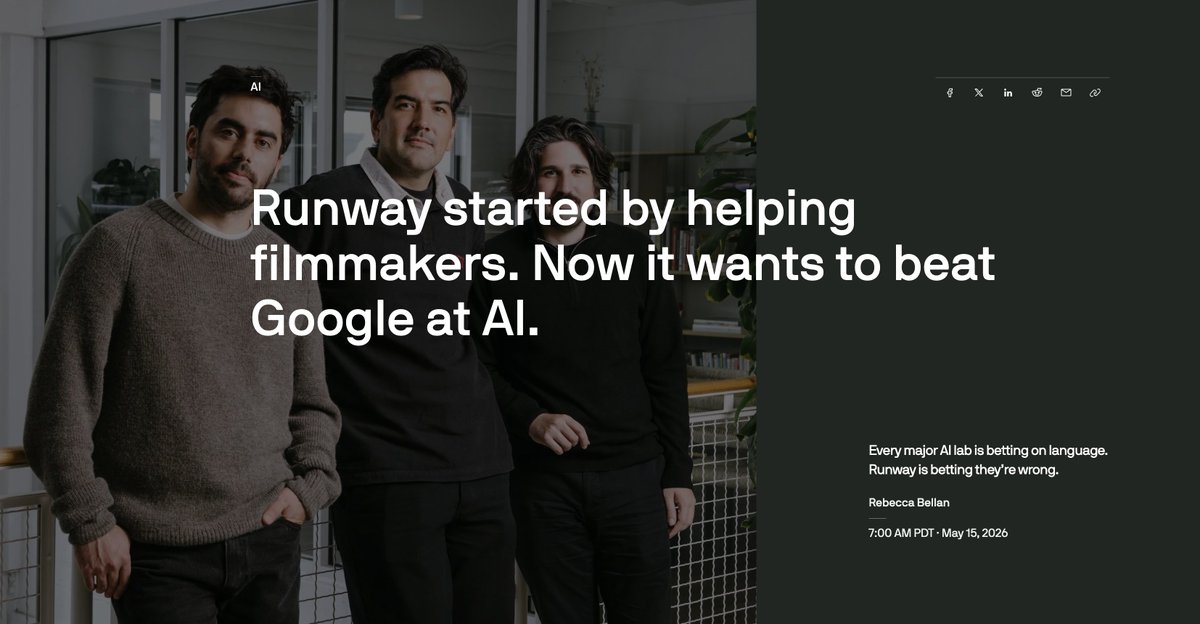

Today we are introducing Runway Labs, a generative AI incubator led by our co-founder and Chief Innovation Officer, Alejandro Matamala Ortiz. Runway Labs will focus on broadly exploring the transformative power of AI video and General World Models across all industries, from film and television to healthcare, education, gaming, advertising, real estate and more. We will be partnering with creators, enterprises, institutions and foundations to find new applications for these technologies and opportunities across industries. Learn more at the link below.

haha the @runwayml real-time characters are great. i just spent 2 minutes pitching a ghibli version of myself a bladeless blender startup. it's only a 2 min demo, but the questions and tone are pretty solid. one question even threw me off a bit (the cleaning). asking which round was unnecessary. it got a little weird at the end right before it cut off, which is due to a 2 min limit when testing on site (which seems unnecessary since they're charging me for the api. why not let me talk longer?). at current pricing, this would be roughly $6 for a 30 min call, or $12 an hour. this doesn't include the cost of running an extraction prompt in the background to store this data, but llm tokens are so cheap. that's very expensive compared to mean vc (free, since it's in the gpt marketplace) or even a self-hosted chat agent, but it's way cheaper than an associate. while it has downsides like not being a real person, there are also benefits like consistent and unbiased information gathering, being available 24/7, being able to talk to founders in parallel, and consistent note taking. and it will likely get cheaper. (sorry my audio volume is low, i'm testing while everyone else in the house is asleep)
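The cost estimate in the post above can be checked with a few lines of Python. This is only a sketch: the per-minute rate is derived from the post's own figure of about $6 per 30 minutes, not taken from any official price list, and the names `IMPLIED_RATE_PER_MIN` and `call_cost` are made up for illustration. Like the post, it ignores the cost of any background extraction prompt.

```python
# Per-minute rate implied by the post's estimate (about $6 per 30 minutes).
# Assumption: derived from the post, not from an official price list.
IMPLIED_RATE_PER_MIN = 6.0 / 30.0  # dollars per minute


def call_cost(minutes: float, rate_per_min: float = IMPLIED_RATE_PER_MIN) -> float:
    """Return the estimated cost in dollars for a call of the given length."""
    if minutes < 0:
        raise ValueError("minutes must be non-negative")
    # Round to cents so the result reads like a price.
    return round(minutes * rate_per_min, 2)


if __name__ == "__main__":
    # The 2 minute demo, the 30 minute call, and a full hour.
    for minutes in (2, 30, 60):
        print(f"{minutes:>3} min: ${call_cost(minutes):.2f}")
```

Passing a different `rate_per_min` shows how the totals would change if the pricing drops, as the post expects.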