aiplanner retweeted

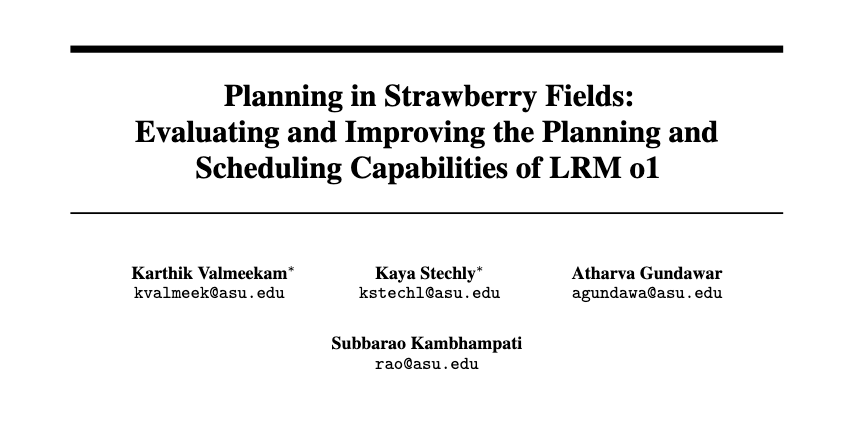

The Proceedings of the Fortieth AAAI Conference on Artificial Intelligence are now available to review online. The proceedings have been published in 48 consecutive issues, all of which are available here: aaai.org/proceeding/aaa…
