Ajay Sridhar

@ajaysridhar0

cs phd student @StanfordAILab

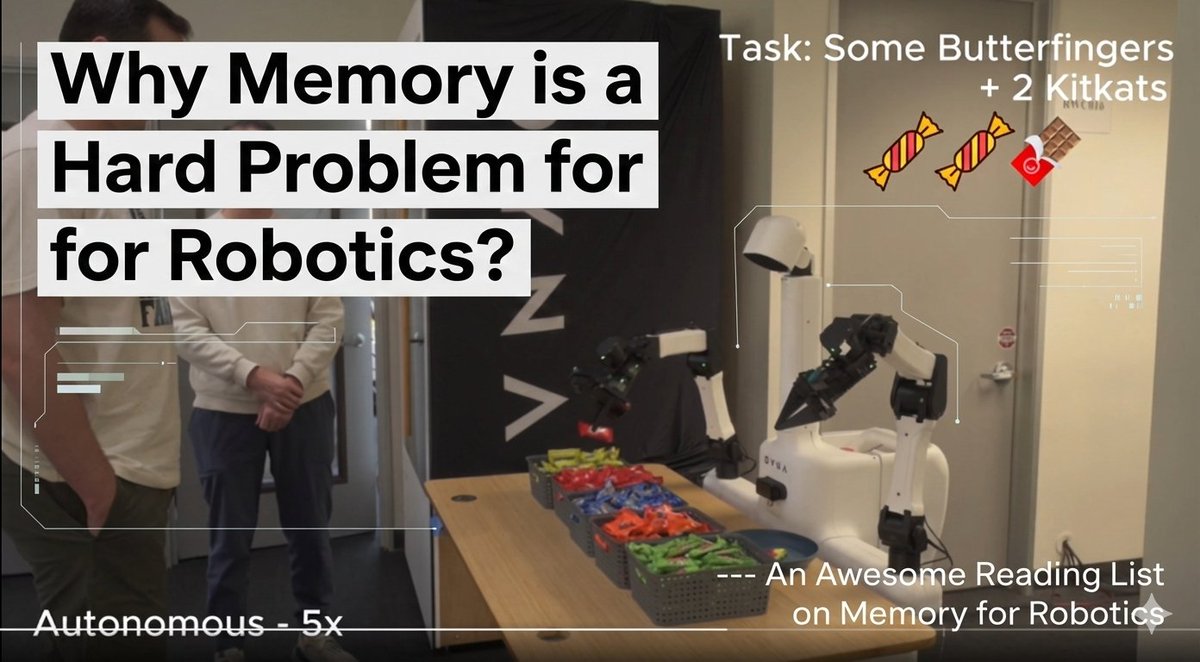

VLAs are great, but most lack the long-term memory humans use for everyday tasks. This is a critical gap for solving complex, long-horizon problems. Introducing MemER: Scaling Up Memory for Robot Control via Experience Retrieval. A thread 🧵 (1/8)

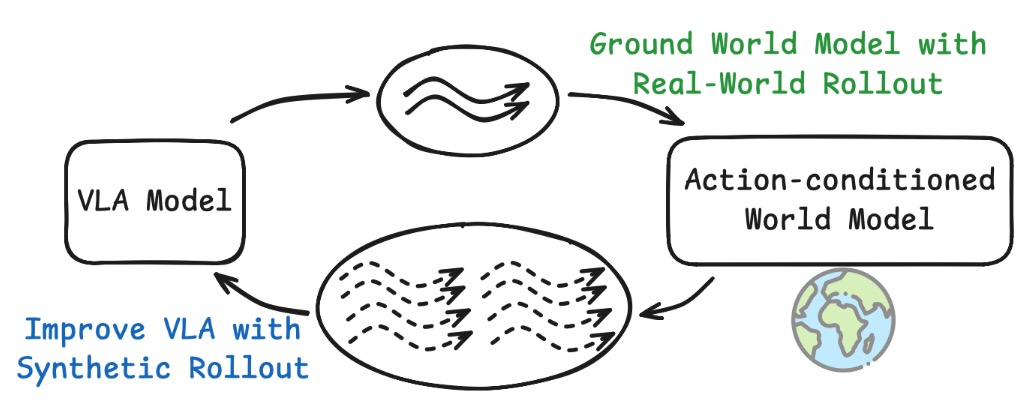

We’ve developed a memory system for our models that provides both short-term visual memory and long-term semantic memory. Our approach allows us to train robots to perform long and complex tasks, like cleaning up a kitchen or preparing a grilled cheese sandwich from scratch 👇

Most robot policies today still largely lack memory: they make all their decisions based on what they can see right now. MemER aims to change that by learning which frames are important; this lets it deal with tasks like object search. @ajaysridhar0, @jenpan_, and @satviks107Sharma tell us how to achieve this fundamental capability for long-horizon task execution. Watch Episode #54 of RoboPapers with @micoolcho and @chris_j_paxton to learn more!