Akash Majumder

@akashagi

Bitcoin Investor | Founder & CEO of Stealth Startup | Building Next-Gen Solutions | AI & Space Enthusiast.

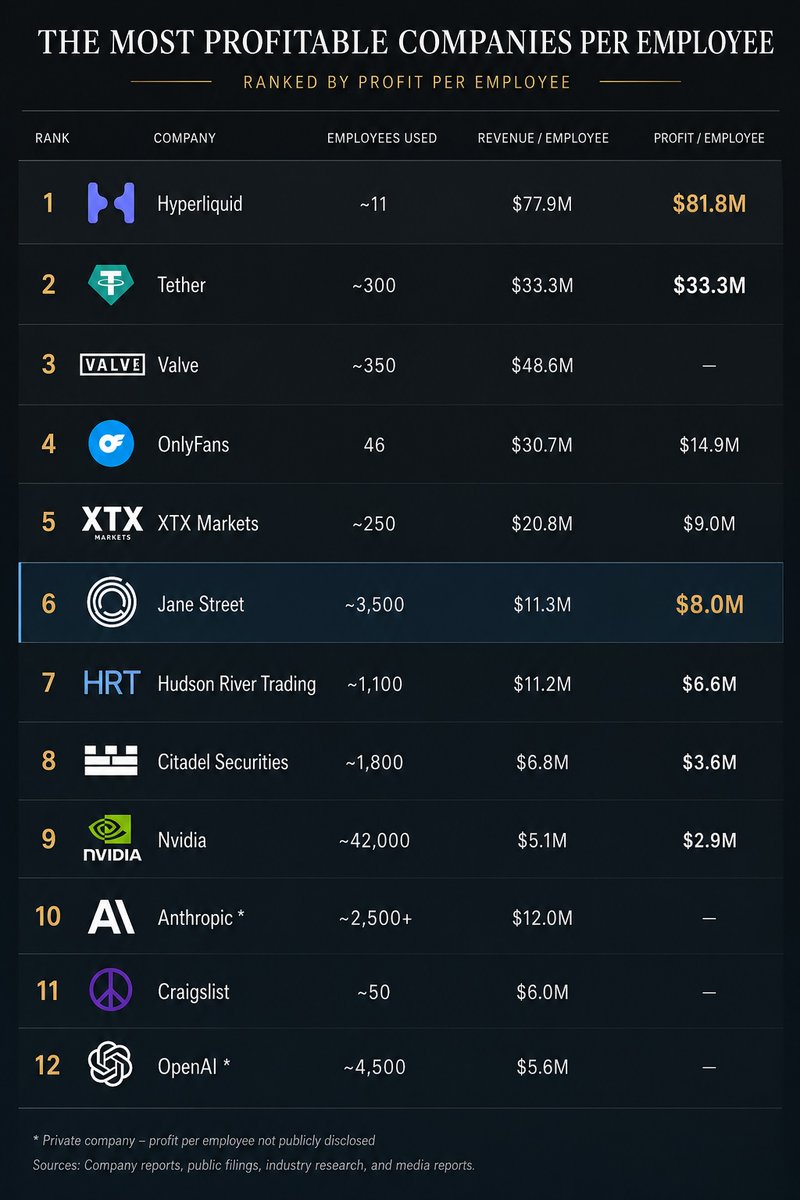

Jane Street made ~$40B in revenue in 2025 with 3,500 employees, roughly 2x the year before. At a ~65-70% profit margin, that's ~$8M profit per employee, the highest of any company with 1,000+ people. High-frequency trading continues to be the most efficient money-making engine.

I want to share an old story about my Jane Street interview in 2014. Jane Street was known for hiring lots of math, physics and CS olympiad winners from top universities and putting them through many rounds, including, for trading roles, a gauntlet of mental math.

It was my 6th interview and the final round, and I recall being asked: "What is the next day after today in DD/MM/YYYY where all the digits are unique?" They'd toy with you and say "You can use a pencil and paper, if you want," but you knew that was an instant no.

Painstakingly, and as quickly as I could, I came to an answer.

"How confident are you that this is correct on a 0-1 probability scale?" the interviewer asked.

"0.95," I blurted out, not fully knowing how to answer that.

"Are you sure?"

After thinking harder for a few more seconds, I realized I could've flipped the digits around to get a closer date. I gave the interviewer my revised answer. It was correct.

"0.95, huh?" he chuckled. That's when I knew I had failed.

Note: fwiw, other companies that stand out in efficiency are:

- Tether ($90M+ profit/emp)
- Hyperliquid ($80M+ profit/emp)

and on revenue:

- Valve ($50M/emp)
- OnlyFans ($37M/emp)
- Craigslist ($14M/emp)
- Anthropic ($12M/emp, run rate)
- OpenAI ($8M/emp, run rate)

For comparison, Nvidia is very efficient at scale and sits at $4.4M/emp.
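The interview question is a small search problem, and brute force settles it quickly. This Python sketch walks forward one calendar day at a time and checks whether the eight digits of the DD/MM/YYYY string are all distinct. The start date of 1 January 2014 is an assumption (the post only says the interview was in 2014), and the helper names `has_unique_digits` and `next_unique_digit_date` are hypothetical.

```python
from datetime import date, timedelta


def has_unique_digits(d: date) -> bool:
    """True if the DD/MM/YYYY rendering of d uses eight distinct digits."""
    digits = d.strftime("%d%m%Y")  # slashes dropped, e.g. "17062345"
    return len(set(digits)) == len(digits)


def next_unique_digit_date(today: date) -> date:
    """Scan forward one day at a time, starting the day after `today`."""
    d = today + timedelta(days=1)
    while not has_unique_digits(d):
        d += timedelta(days=1)
    return d


if __name__ == "__main__":
    # Assumption: "today" is some day in 2014; the exact date isn't given.
    answer = next_unique_digit_date(date(2014, 1, 1))
    print(answer.strftime("%d/%m/%Y"))
```

Started anywhere in 2014, it prints 17/06/2345. The reasoning is short once you see it: for a year starting with 2, every valid day/month pair uses both 0 and 1 (month 0x with day 1x or 31, or month 10), so the year can't contain either digit, and the smallest such year from 2014 on is 2345. Within 2345, months 01 to 05 collide with the day's 1 or the year's digits, so the earliest option is month 06 with day 17.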

NEW: Google is planning to invest $10B in Anthropic now and up to $40B in the future, in a major expansion of their partnership. Google will also provide 5 GW of compute over 5 years, starting to come online in 2027. w/ @byJuliaLove bloomberg.com/news/articles/…

🚀 DeepSeek-V4 Preview is officially live & open-sourced! Welcome to the era of cost-effective 1M context length. 🔹 DeepSeek-V4-Pro: 1.6T total / 49B active params. Performance rivaling the world's top closed-source models. 🔹 DeepSeek-V4-Flash: 284B total / 13B active params. Your fast, efficient, and economical choice. Try it now at chat.deepseek.com via Expert Mode / Instant Mode. API is updated & available today! 📄 Tech Report: huggingface.co/deepseek-ai/De… 🤗 Open Weights: huggingface.co/collections/de… 1/n

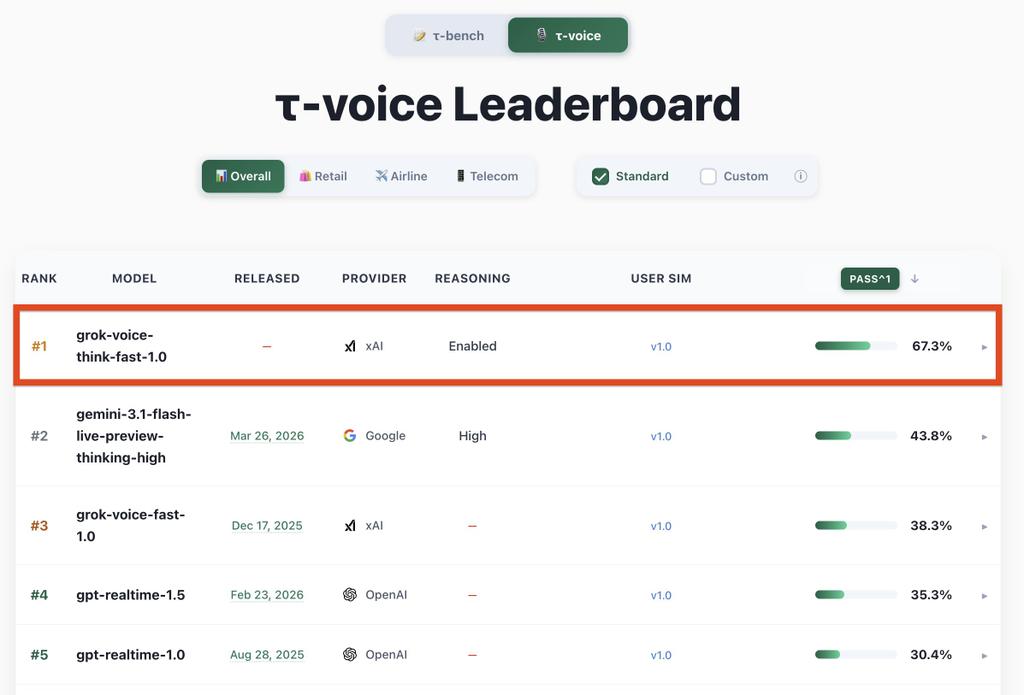

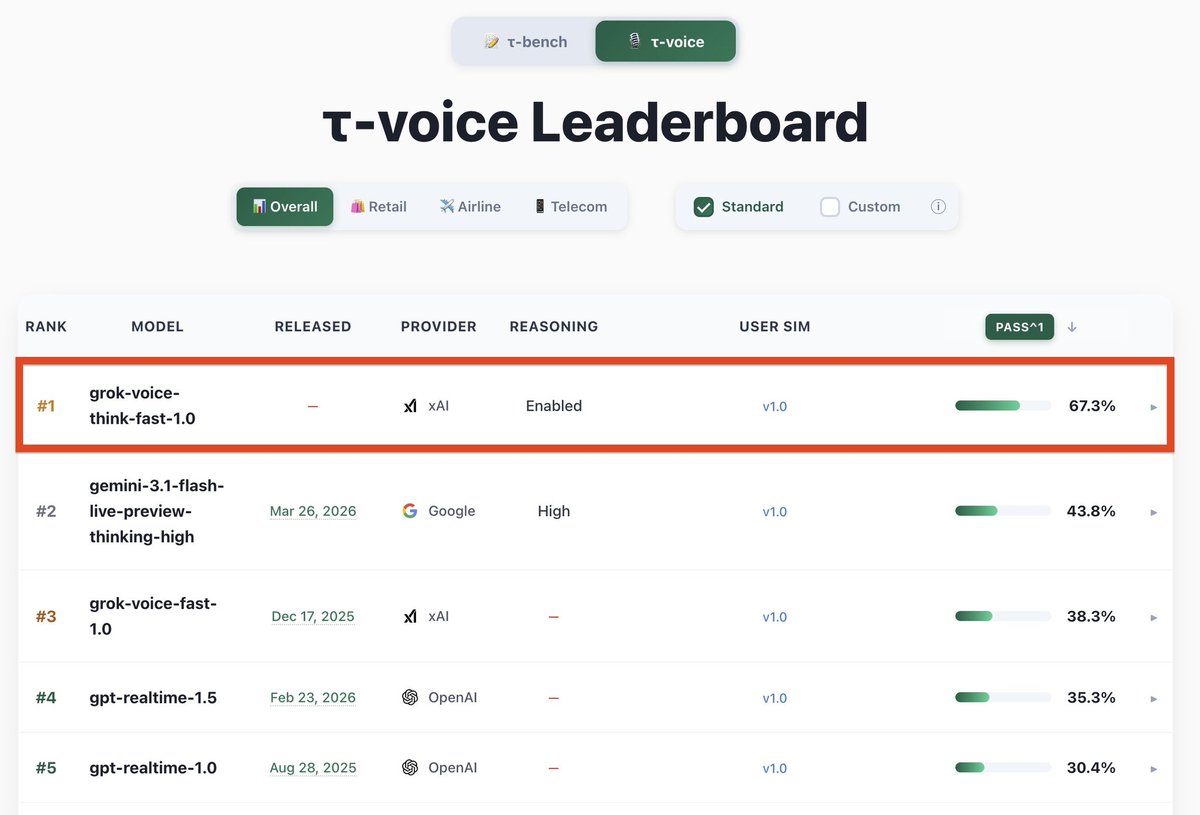

Introducing Grok Voice Think Fast 1.0 A state-of-the-art voice model built for complex, multi-step workflows with snappy responses and high accuracy. It takes the top spot on the Tau Voice Bench and handles real-world messiness like noise, accents, and interruptions better than any other model in the world. x.ai/news/grok-voic…

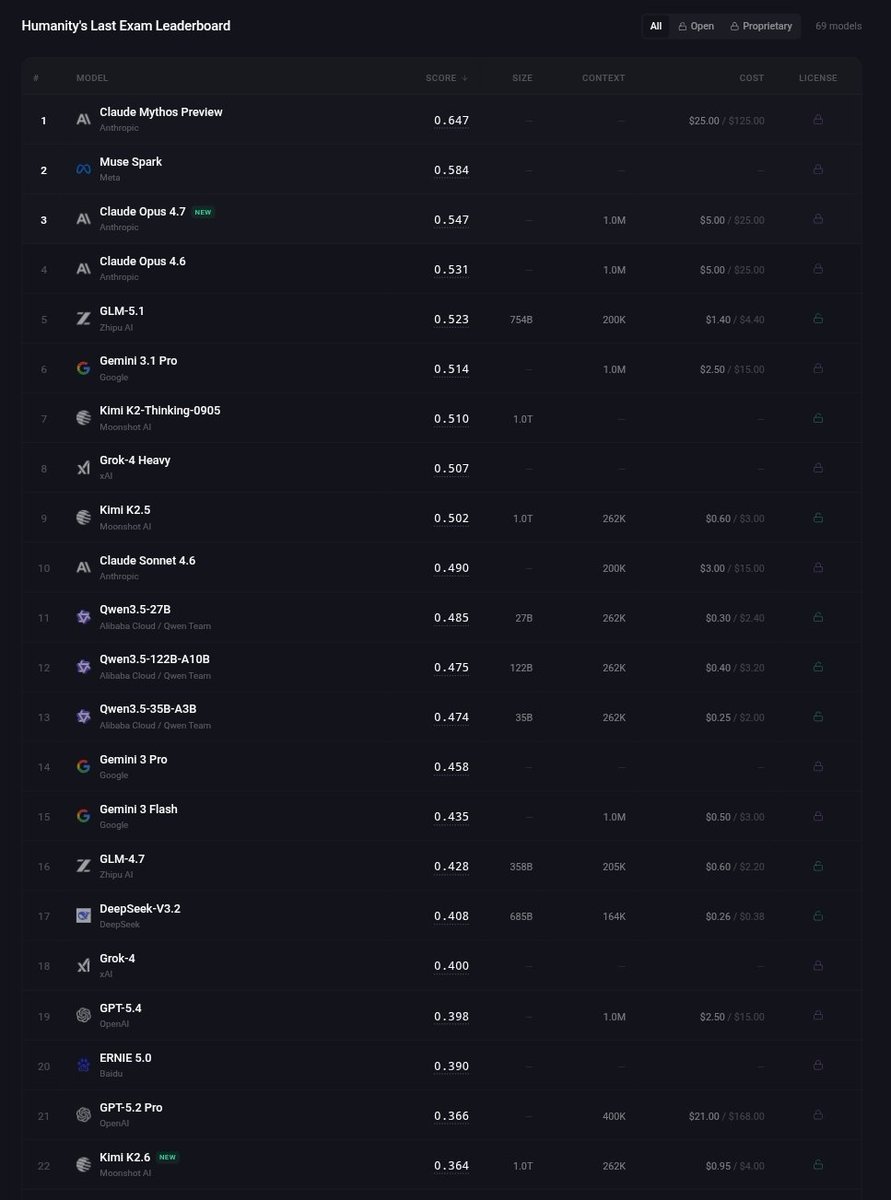

Meet Kimi K2.6: Advancing Open-Source Coding

🔹 Open-source SOTA on HLE w/ tools (54.0), SWE-Bench Pro (58.6), SWE-bench Multilingual (76.7), BrowseComp (83.2), Toolathlon (50.0), CharXiv w/ python (86.7), Math Vision w/ python (93.2)

What's new:

🔹 Long-horizon coding: 4,000+ tool calls, over 12 hours of continuous execution, with generalization across languages (Rust, Go, Python) and tasks (frontend, devops, perf optimization).

🔹 Motion-rich frontend: videos in hero sections, WebGL shaders, GSAP + Framer Motion, Three.js 3D.

🔹 Agent Swarms, elevated: 300 parallel sub-agents × 4,000 steps per run (up from K2.5's 100 / 1,500). One prompt, 100+ files.

🔹 Proactive Agents: the K2.6 model powers OpenClaw, Hermes Agent, etc. for 24/7 autonomous ops.

🔹 Claw Groups (research preview): bring your own agents, command your friends', bots & humans in the loop.

K2.6 is now live on kimi.com in chat mode and agent mode. For production-grade coding, pair K2.6 with Kimi Code: kimi.com/code

🔗 API: platform.moonshot.ai

🔗 Tech blog: kimi.com/blog/kimi-k2-6

🔗 Weights & code: huggingface.co/moonshotai/Kim…