Pei

150 posts

@SadlyItsBradley Hey Bradley, curious what you think about this “Vision Air”-style XR reference design from China. It’s apparently a ~90g glasses-form device using pancake optics, VST + OST, ~90° FOV, and a split-compute setup powered by GravityXR’s X100 chip.

Link: bilibili.com/video/BV1qsRTB…

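The 2000 census counted 13.79 million people aged 0. Officials at the National Bureau of Statistics and the family planning commission said that out-of-quota births had gone unreported and, using primary school enrollment as a reference, revised the 2000 birth figure up to 17.71 million; sure enough, primary schools enrolled 17.29 million new students in 2006. In reality, because education funding is shared between the central and local governments, with the central government paying the larger share, local governments and schools routinely inflated enrollment figures by 20-50% to claim extra funding. There were 14.26 million ninth-grade students in 2014 and 14.18 million junior secondary graduates in 2015.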

Pei@alas_chn

@fuxianyi I found that primary schools enrolled 17.2936 million students in 2006. How do you explain that this figure is closer to 17.71 million?

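China made nine-year compulsory education universal long ago. In 2015 the gross enrollment rate for junior secondary school was 104%, so the 14.18 million junior secondary graduates convert to only 13.63 million 15-year-olds, which is in line with the 13.79 million aged 0 in the 2000 census and far below the officially published 17.71 million births.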
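
The conversion in this post can be checked with a few lines of arithmetic. The sketch below mirrors the post's own method (dividing the graduate count by the gross enrollment rate), which is a simplification; the figures are the ones quoted in the thread, and the helper name `implied_cohort` is hypothetical.

```python
# Back-of-the-envelope check of the cohort conversion described in the post.
# All figures are taken from the thread; implied_cohort is a hypothetical helper.

def implied_cohort(graduates: float, gross_enrollment_rate: float) -> float:
    """Scale a graduate count down by the gross enrollment rate."""
    return graduates / gross_enrollment_rate

graduates_2015 = 14_180_000        # junior secondary graduates, 2015
gross_rate_2015 = 1.04             # 104% gross enrollment rate
census_age0_2000 = 13_790_000      # people aged 0 in the 2000 census
official_births_2000 = 17_710_000  # officially revised 2000 births

cohort = implied_cohort(graduates_2015, gross_rate_2015)
gap_to_census = census_age0_2000 - cohort
gap_to_official = official_births_2000 - cohort

print(f"implied 15-year-olds: {cohort / 1e6:.2f} million")
print(f"gap to census figure:   {gap_to_census / 1e6:.2f} million")
print(f"gap to official figure: {gap_to_official / 1e6:.2f} million")
```

Under these assumptions the implied cohort sits within a few hundred thousand of the census count and roughly four million below the official figure, which is the comparison the post is making.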

Jonesun@Jonesun252779

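@fuxianyi There is certainly some over-reporting, but the gap is not as large as you say. Plenty of people in rural areas still never finish junior secondary school.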

Pei reposted

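▸▸Giveaway campaign🎉!!◂◂

From among those who answer a short survey,

𝟏𝟎 winners chosen by lottery

will receive a <<not-for-sale B2 poster>>🎁!

vertexforce.jp/enquete/

❚ Survey period

Until 23:59 on Sunday, 4/26

We look forward to lots of entries🤖

#バーテックスフォース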

Many commercial air purifiers are equipped with a built-in UV lamp and claim that it can kill airborne bacteria. However, this claim is misleading. Most commercial purifiers use H12 or higher-grade HEPA filters, which alone capture over 99.9% of viruses and bacteria in a single pass. Even if the UV lamp could kill 100% of the remaining microbes within the one second that air passes through it, the overall improvement in efficiency would be less than 0.1%. Therefore, the assertion that the UV lamp helps disinfect the air is merely a marketing gimmick.

The true purpose of the UV lamp is to irradiate the filter surface to inhibit fungal growth. Bacteria and viruses generally cannot survive long on the filter, but if the filter becomes damp and is left without airflow for an extended period, mold can proliferate on the dust accumulated in the HEPA filter under suitable temperature and humidity, occasionally causing unpleasant odors. The downsides of UV lamps include the generation of trace amounts of ozone and accelerated degradation of the filter material, which shortens the filter’s lifespan. Consequently, I still do not recommend using UV lamps inside air purifiers. Simply running the air purifier 24/7 can resolve the issue of mold growth on the filter.
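
The single-pass arithmetic in the post above can be sketched as follows. This is a minimal illustration, assuming the filter and the lamp act in series on one pass of air; the 99.9% filter efficiency and the 100% UV kill rate are the post's own figures, and the function name `single_pass_removal` is hypothetical.

```python
# Minimal sketch of the single-pass argument: the UV lamp can only act on
# whatever fraction of microbes the HEPA filter lets through.
# single_pass_removal is a hypothetical helper, not a vendor formula.

def single_pass_removal(filter_efficiency: float, uv_kill_fraction: float) -> float:
    """Fraction of airborne microbes removed or killed in one pass."""
    passed_filter = 1.0 - filter_efficiency
    return filter_efficiency + passed_filter * uv_kill_fraction

hepa_only = single_pass_removal(0.999, 0.0)     # filter alone
hepa_plus_uv = single_pass_removal(0.999, 1.0)  # best case: UV kills every survivor
gain = hepa_plus_uv - hepa_only

print(f"HEPA only:   {hepa_only:.4%}")
print(f"HEPA + UV:   {hepa_plus_uv:.4%}")
print(f"gain from UV: {gain * 100:.2f} percentage points")
```

Because the filter efficiency is stated as "over 99.9%", the best-case gain of about 0.1 percentage points is an upper bound; a more efficient filter leaves the lamp even less to do.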

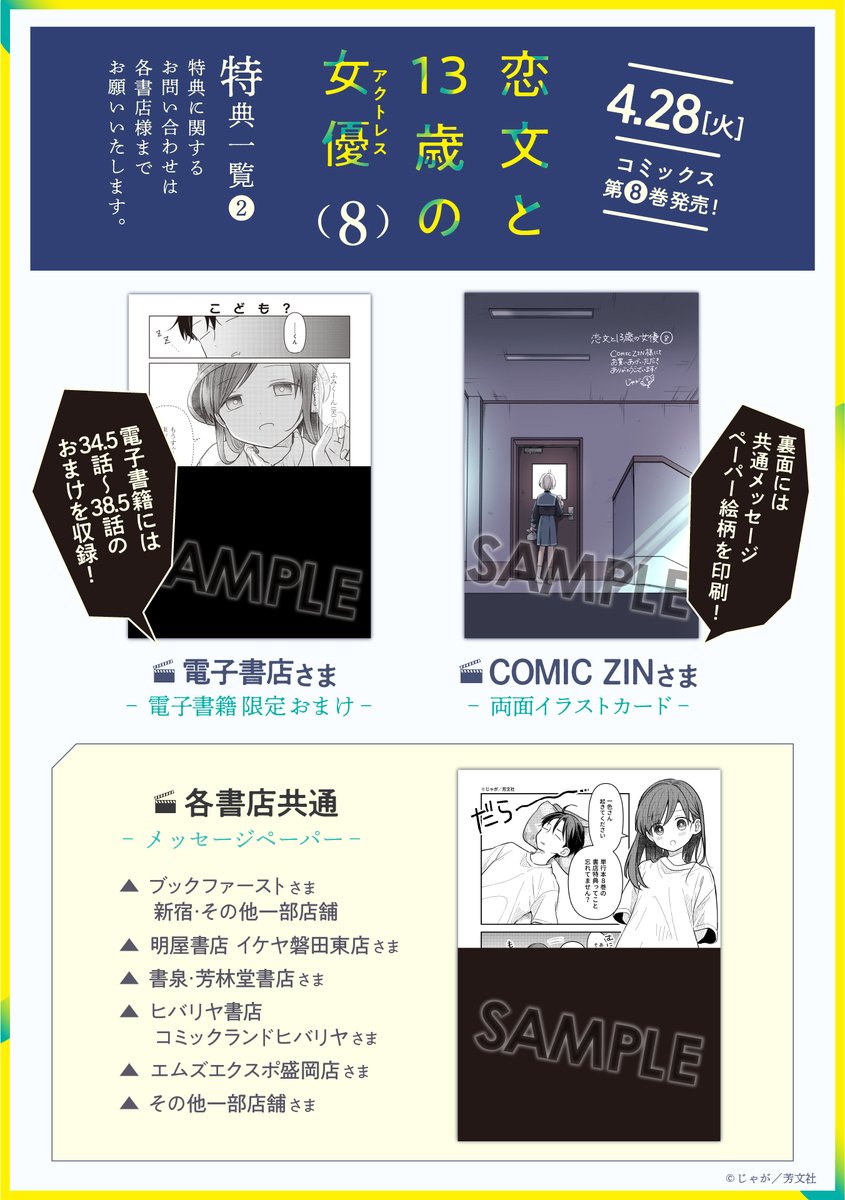

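🌟Bonus info updated!🌟

Bonus details are now out for volume 8 of

『恋文と13歳の女優(アクトレス)』 by じゃが先生 (Jaga-sensei),

on sale Tuesday, 4/28🧁

Once again there are lots of super cute, lavish newly drawn bonuses✨

Don't miss the paid-bonus acrylic stand either⛩️🍈

The next chapter, 41, is scheduled to go up on 5/5!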

comic-fuz.com/manga/3149?pos…

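Norway's fertility rate has also fallen, from 1.98 in 2009 to 1.48 in 2025. However wealthy Norway is, it cannot fill the fiscal black hole created by aging. "The elderly who are alive" have a say in decisions, while "unborn children" have none and are always the weaker side in the bargaining. The ideal model is still the Confucian one: family first, with social welfare as a supplement. On the basis of "each caring for their own parents and their own children", people "do not care only for their own parents, nor only for their own children".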

WWG@wednesd332

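@fuxianyi Is even Norway not good enough? Its sovereign wealth fund has put away US$340,000 for every Norwegian, and it is very good at generating its own revenue!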

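@kagibangou029 I saw the news about the new work! I'm already so excited I can hardly stand it......!

I'm really glad it can be viewed at the large A4 size.

It's bigger than the magazine's B5 and much closer to the B4 size of the original drawings, so I'm thrilled that I should be able to appreciate the work's appeal even more deeply!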

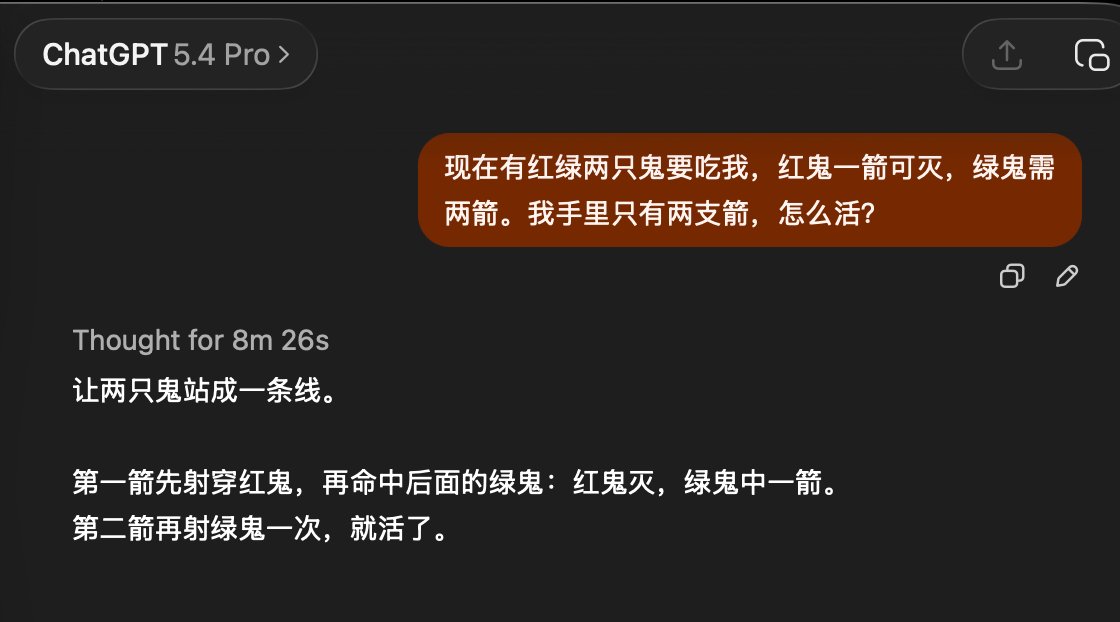

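A fairly intuitive comparison of argue results: expecting the model to produce a truly eye-opening answer is still asking too much, but this does show some of the basic value of multi-round debate and multi-angle review. For the same question, the plain answer and the post-argue answer are no longer on the same level in depth or in how many sides they weigh. Finally, I'm also attaching an answer that ChatGPT 5.4 Pro produced after thinking for nearly ten minutes... how should I put it, maybe I can brag that this gets close to GPT Pro results at the cost of an ordinary model? 😂 (Bragging isn't taxed, after all...)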

github.com/onevcat/argue/

@infuse I’d like to ask whether Infuse on Vision Pro will support VR videos, such as this kind of content: youtube.com/watch?v=w1ABOY…

Infuse 8.4.2 now available! 🙌

New Bwdif deinterlacing option (highest quality), improved handling of extras and bonus content, better support for PiP features, and more! community.firecore.com/t/infuse-8-4-2…

For those who missed it, there is a revival of the old DolphinVR emulator, which lets you play GameCube and Wii games fully immersively, like this

Beyond the improvements that come from being brought up to date, this one is thankfully being built with OpenXR

Rafael Torres ᯅ@mundoxrbrasil

Tested some games on Dolphin VR and it was amazing! 🤩 KRVR+Dolphin XR

Pei reposted

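✨Follow & repost campaign✨

A chance to win, at random, a visual poster newly drawn by めばち先生 (Mebachi-sensei)🎯

See the attached image for details👀

【How to enter】

①Follow @starlight_9thEX👤

②Repost this post🔁

Entry deadline: 23:59 on Sunday, April 19

#スタァライト9thEX #スタァライト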

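@alas_chn Almost all of the art can be seen on pixiv and elsewhere, so I wondered whether there was any point, but since you say so, I think I'll release it digitally. It costs me almost nothing up front, after all.

Preparing the data, applying, and so on will probably take a few days, so please bear with me until then.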

@ariannabetti @Engineer_Wong Why do they remark on the 4lite? Is it not allowed?

@Engineer_Wong: for flying (if neck/muscles allow), AirFanta Wear is a game changer. Many thanks for engineering it. Unexpected bonus: it can be made to look more like high tech cool headphones. KLM crew has remarked on the 4lite a couple of times but so far... Not on the Wear.
