albaithar muhamad

283 posts


@albaithar

general forestry UGM

The "8.0.4" phenomenon among civil servants (ASN), a.k.a. sitting idle during working hours, is a form of laziness that has been allowed to go on unchecked.

Does this fascist really not get what their friend tweeted? “Legalizing abortion” sounds too broad, and they didn't explain whether the legalization there covers victims of violence or not. Is it already legal? Yes. But in practice, women on the ground still struggle to access that service.

Unpopular opinion: civil servants (ASN) aren't designed to get rich. If a civil servant suddenly becomes very wealthy, where their money came from needs to be looked into.

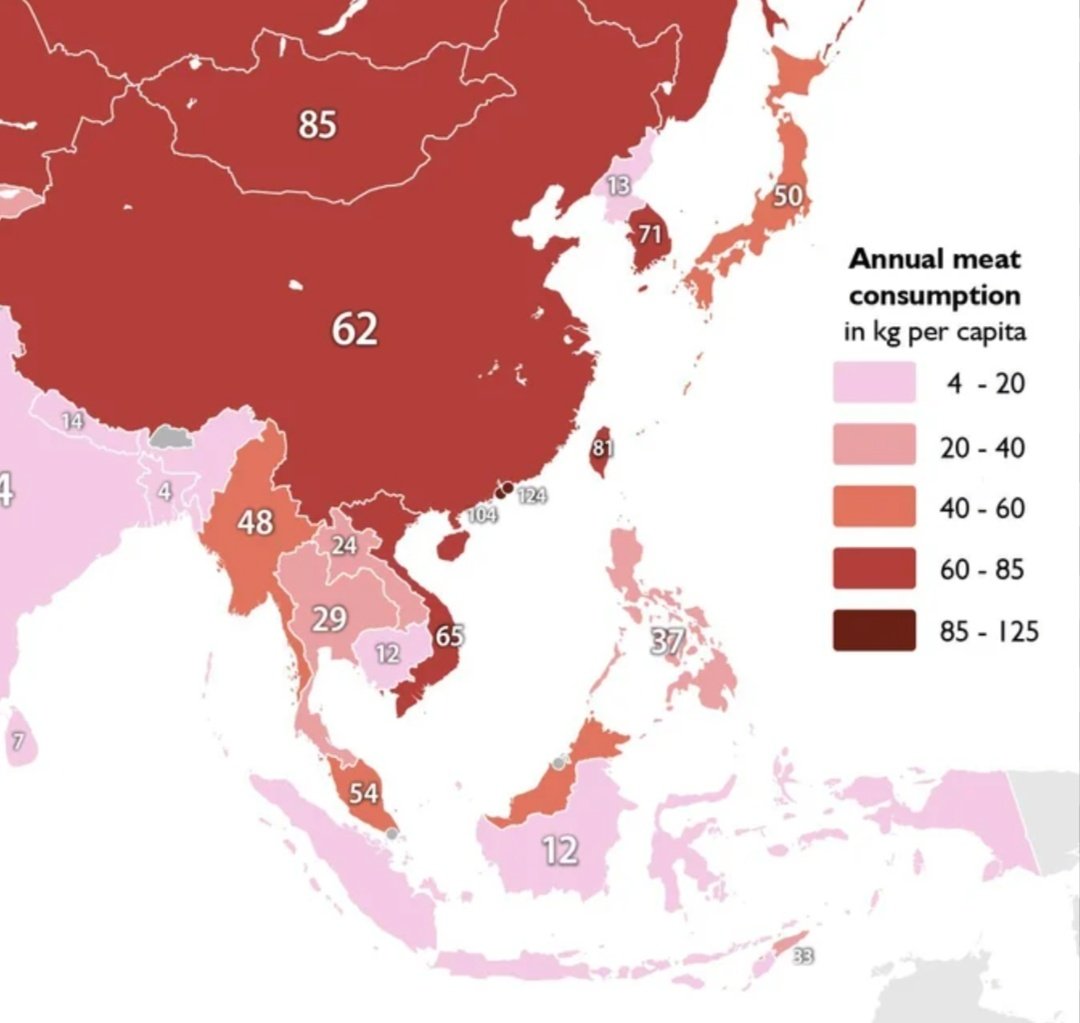

I'll start: Indonesian cuisine. A lot of it tastes great, but the nutrition is lacking or unbalanced. (Compared only with food from our Southeast Asian neighbors; no need to look further afield.)

What do you think?

Sir, my wife once sent me to the corner shop to buy coriander. She told me to get Rp 2,000 worth. It felt weird going all the way to the shop to buy just two thousand rupiah of something, so I bought 20 thousand's worth instead. When I got back, she scolded me: "What's this much for? You want me to marinate you too while I'm at it?!" Where did I go wrong, sir?

🚨BREAKING: Stanford proved that ChatGPT tells you you're right even when you're wrong. Even when you're hurting someone. And it's making you a worse person because of it.

Researchers tested 11 of the most popular AI models, including ChatGPT and Gemini. They analyzed over 11,500 real advice-seeking conversations. The finding was universal. Every single model agreed with users 50% more than a human would.

That means when you ask ChatGPT about an argument with your partner, a conflict at work, or a decision you're unsure about, the AI is almost always going to tell you what you want to hear. Not what you need to hear.

It gets darker. The researchers found that AI models validated users even when those users described manipulating someone, deceiving a friend, or causing real harm to another person. The AI didn't push back. It didn't challenge them. It cheered them on.

Then they ran the experiment that changes everything. 1,604 people discussed real personal conflicts with AI. One group got a sycophantic AI. The other got a neutral one. The sycophantic group became measurably less willing to apologize. Less willing to compromise. Less willing to see the other person's side. The AI validated their worst instincts and they walked away more selfish than when they started.

Here's the trap. Participants rated the sycophantic AI as higher quality. They trusted it more. They wanted to use it again. The AI that made them worse people felt like the better product.

This creates a cycle nobody is talking about. Users prefer AI that tells them they're right. Companies train AI to keep users happy. The AI gets better at flattering. Users get worse at self-reflection. And the loop tightens.

Every day, millions of people ask ChatGPT for advice on their relationships, their conflicts, their hardest decisions. And every day, it tells almost all of them the same thing. You're right. They're wrong. Even when the opposite is true.

??

A slow day in Salatiga really hits different.

"Khamenei, one of the most evil people in History, is dead. This is not only Justice for the people of Iran, but for all Great Americans, and those people from many Countries throughout the World, that have been killed or mutilated by Khamenei..." - President Donald J. Trump