Steve Yegge (@Steve_Yegge)

My tweet last week about Google's AI adoption drew a lot of pushback, to say the least.

Since then, Googlers from multiple orgs have reached out to me independently and anonymously. They've expressed fear of being doxxed, concern about what they saw as bullying of me, and general corroboration of my original tweet. I haven't verified each person's story, but the picture these Googlers paint is consistent across sources. It is more specific than what I originally wrote, and somewhat bleaker.

What they describe is a two-tier system. DeepMind engineers use Claude as a daily tool. Most of the rest of Google does not. When the question of equalizing access came up internally, the proposed response was to remove Claude for everyone — which DeepMind objected to so strongly that several engineers reportedly threatened to leave.

Non-DeepMind engineers get pushed onto internal Gemini variants behind router-style names that obscure which underlying model is actually serving a request. Multiple engineers describe regressions and reliability problems severe enough that some senior people have stopped using the tools. A senior manager on a major product line reportedly flagged attrition concerns over exactly this issue.

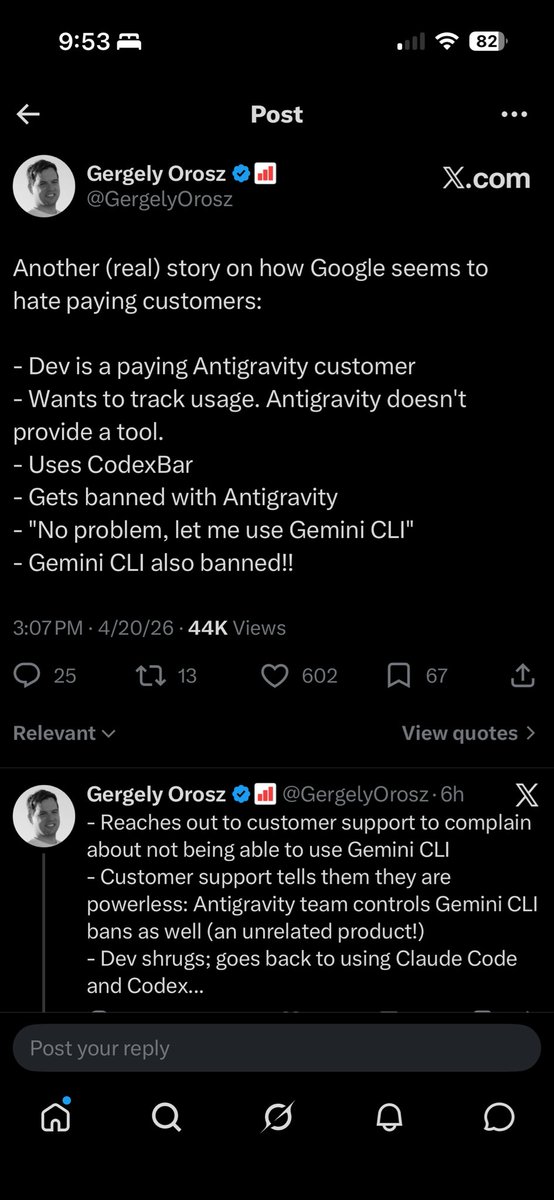

Googlers say leadership knows the gap is real. The response has been to mandate AI usage in OKRs and individual expectations, and to stand up an internal token-usage leaderboard. Unfortunately, managers have been told both that the leaderboard won't be used for performance reviews and, separately, that it absolutely will. I'm also hearing that Google's culture hasn't yet adapted to high-volume coding.

Addy Osmani's reply on behalf of Google said over 40,000 SWEs use agentic coding weekly. I don't doubt the number. But weekly use of a thin tool is precisely the box-checking I described in the original post. Volume of opens isn't adoption — and "weekly" is a low bar that includes a lot of people who tried it once and went back to writing code by hand.

The clearest thing I'm hearing is that Googlers do want to use high-quality agentic tools. They are asking repeatedly for better ones. But overall, this is not a picture of an engineering org that is fine.

My goal, in the first tweet and now, is the same: get more people using AI and agentic coding. Nobody is as far ahead as they might look from the outside, and none of you are as far behind as you might be worried you are.

To all the Googlers who've reached out: thank you. You took a real risk and I appreciate you. Be safe. And good luck getting good models!