Alfred Ssekagiri retweeted

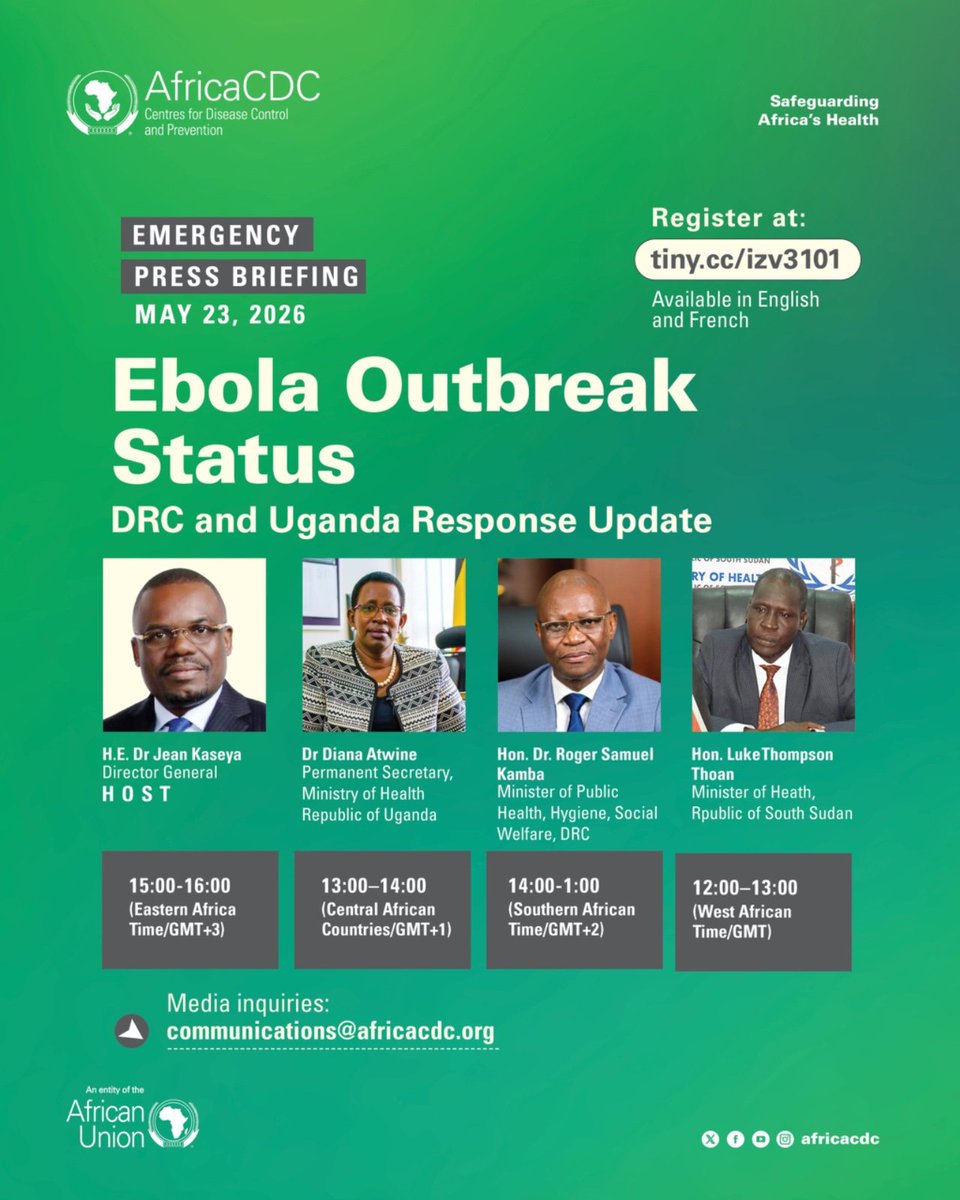

🔴 HAPPENING TODAY:

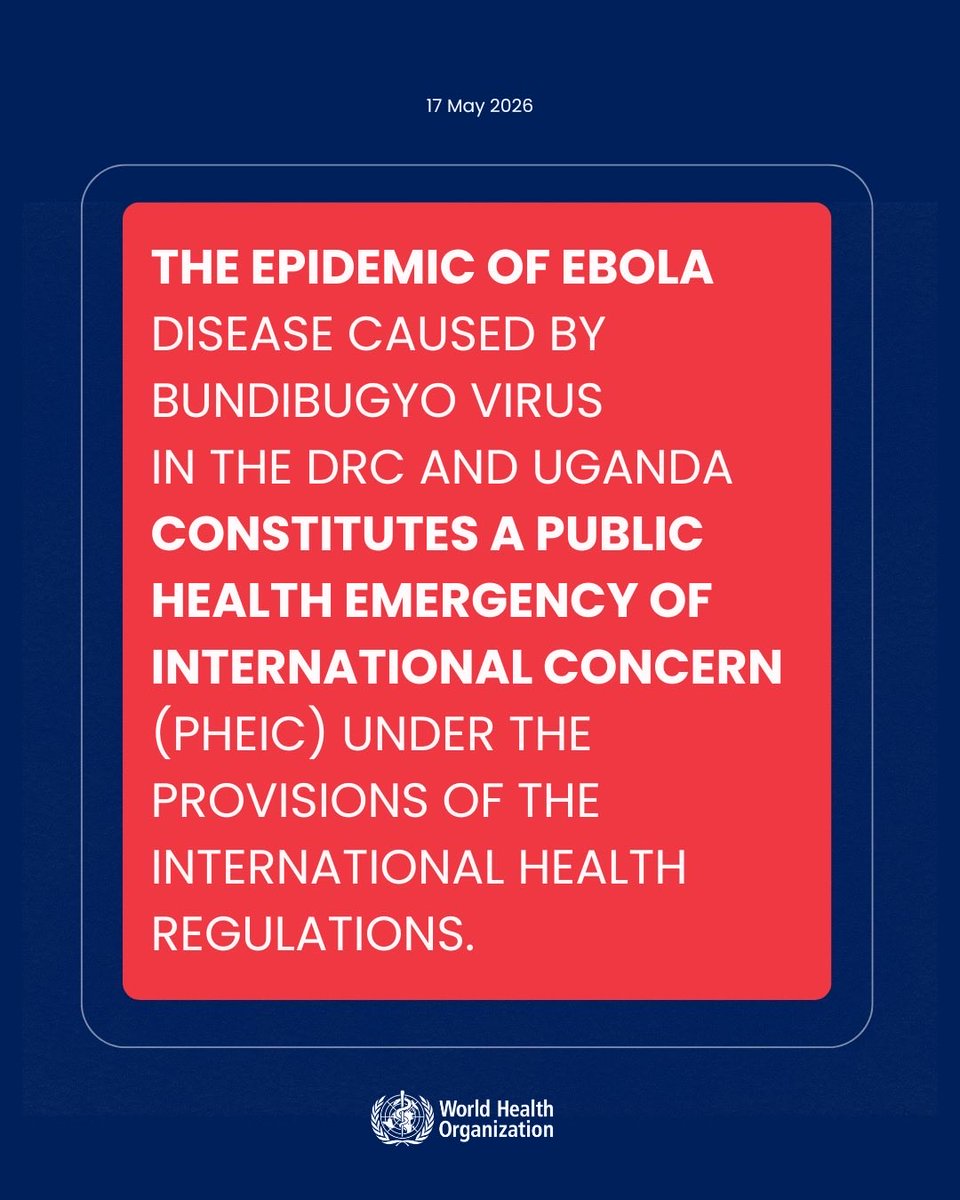

Join us for the second emergency briefing on the Ebola Disease (Bundibugyo virus) outbreak in the Democratic Republic of the Congo and the Republic of Uganda.

Hosted by H.E. @Dr_JeanKaseya, Director General of Africa CDC, the briefing will feature Hon. Dr @rogerkamba, Minister of Public Health, Hygiene and Social Welfare of the DRC; Dr @DianaAtwine, Permanent Secretary of the Ministry of Health of Uganda; and Hon. Luke Thompson Thoan, Minister of Health of the Republic of South Sudan.

The session will provide the latest updates on the outbreak, ongoing response efforts, and urgent regional coordination measures following the DG’s high-level engagements with H.E. Yoweri @KagutaMuseveni, President of Uganda, and a subsequent strategic meeting, as well as his visit to the epicenter in Bunia.

📅 Date: Saturday, 23 May 2026

🕓 Time:

• 15:00 | EAT (GMT+3)

• 14:00 | SAST (GMT+2)

• 13:00 | WAT (GMT+1)

• 12:00 | GMT

🔗 Register to participate: africacdc-org.zoom.us/webinar/regist…

#HealthSecurity #EbolaResponse @GovUganda
