We’re still buzzing! 🐝 Lessie officially ranked as #2 Product of the Day on @ProductHunt! 🥈 Huge thanks to everyone who showed up for us. Help us keep the streak alive 👉 producthunt.com/products/lessi… #ProductHunt #AI #BusinessGrowth #B2B

Leego

@alliiexia

Currently controlled by Leego⚙️\ Lessie AI Growth

LLM Knowledge Bases

Something I'm finding very useful recently: using LLMs to build personal knowledge bases for various topics of research interest. In this way, a large fraction of my recent token throughput is going less into manipulating code, and more into manipulating knowledge (stored as markdown and images). The latest LLMs are quite good at it. So:

Data ingest: I index source documents (articles, papers, repos, datasets, images, etc.) into a raw/ directory, then I use an LLM to incrementally "compile" a wiki, which is just a collection of .md files in a directory structure. The wiki includes summaries of all the data in raw/, backlinks, and then it categorizes data into concepts, writes articles for them, and links them all. To convert web articles into .md files I like to use the Obsidian Web Clipper extension, and then I also use a hotkey to download all the related images locally so that my LLM can easily reference them.

IDE: I use Obsidian as the IDE "frontend" where I can view the raw data, the compiled wiki, and the derived visualizations. Important to note that the LLM writes and maintains all of the data of the wiki, I rarely touch it directly. I've played with a few Obsidian plugins to render and view data in other ways (e.g. Marp for slides).

Q&A: Where things get interesting is that once your wiki is big enough (e.g. mine on some recent research is ~100 articles and ~400K words), you can ask your LLM agent all kinds of complex questions against the wiki, and it will go off, research the answers, etc. I thought I had to reach for fancy RAG, but the LLM has been pretty good about auto-maintaining index files and brief summaries of all the documents and it reads all the important related data fairly easily at this ~small scale.

Output: Instead of getting answers in text/terminal, I like to have it render markdown files for me, or slide shows (Marp format), or matplotlib images, all of which I then view again in Obsidian. You can imagine many other visual output formats depending on the query. Often, I end up "filing" the outputs back into the wiki to enhance it for further queries. So my own explorations and queries always "add up" in the knowledge base.

Linting: I've run some LLM "health checks" over the wiki to e.g. find inconsistent data, impute missing data (with web searches), find interesting connections for new article candidates, etc., to incrementally clean up the wiki and enhance its overall data integrity. The LLMs are quite good at suggesting further questions to ask and look into.

Extra tools: I find myself developing additional tools to process the data, e.g. I vibe coded a small and naive search engine over the wiki, which I both use directly (in a web ui), but more often I want to hand it off to an LLM via CLI as a tool for larger queries.

Further explorations: As the repo grows, the natural desire is to also think about synthetic data generation + finetuning to have your LLM "know" the data in its weights instead of just context windows.

TLDR: raw data from a given number of sources is collected, then compiled by an LLM into a .md wiki, then operated on by various CLIs by the LLM to do Q&A and to incrementally enhance the wiki, and all of it viewable in Obsidian. You rarely ever write or edit the wiki manually, it's the domain of the LLM. I think there is room here for an incredible new product instead of a hacky collection of scripts.
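For a rough idea of what a "small and naive search engine over the wiki" could look like, here is a minimal sketch. It is not the author's tool: the function names (`tokenize`, `build_index`, `search`), the TF-IDF scoring, and the assumption that the wiki is a directory tree of UTF-8 `.md` files are all illustrative choices.

```python
import math
import re
from collections import Counter
from pathlib import Path


def tokenize(text: str) -> list[str]:
    """Lowercase the text and split it into alphanumeric tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


def build_index(wiki_dir: str) -> dict[str, Counter]:
    """Map each .md file (path relative to wiki_dir) to its term counts.

    Assumption: the wiki is a directory tree of UTF-8 markdown files.
    """
    root = Path(wiki_dir)
    return {
        str(path.relative_to(root)): Counter(tokenize(path.read_text(encoding="utf-8")))
        for path in sorted(root.rglob("*.md"))
    }


def search(index: dict[str, Counter], query: str, top_k: int = 5) -> list[tuple[str, float]]:
    """Rank documents by a simple TF-IDF score summed over the query terms."""
    n_docs = len(index)
    scores: dict[str, float] = {}
    for term in set(tokenize(query)):
        # Number of documents that contain the term at least once.
        doc_freq = sum(1 for counts in index.values() if term in counts)
        if doc_freq == 0:
            continue
        idf = math.log(1 + n_docs / doc_freq)
        for name, counts in index.items():
            if term in counts:
                tf = counts[term] / sum(counts.values())
                scores[name] = scores.get(name, 0.0) + tf * idf
    # Highest-scoring documents first, truncated to top_k results.
    return sorted(scores.items(), key=lambda item: item[1], reverse=True)[:top_k]
```

Calling `search(build_index("wiki/"), "test-time compute")` returns a list of `(relative_path, score)` pairs. Wrapping that call in a tiny command-line script would let an LLM agent invoke it as a tool, in the spirit of the CLI handoff described above.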

openai.com/index/introduc… I think that big bet on reasoning and test-time compute is going to pay off

The degree to which you are awed by AI is perfectly correlated with how much you use AI to code.

BREAKING: @AnthropicAI just dropped their latest weapon: Claude Design 🎨 Talk to @claudeai and instantly get prototypes, slides, one-pagers, and interactive visuals. Stunning stat: Anthropic shipped 120+ new features in just 90 days (one every ~18 hours) and 74 releases in 52 days, with major updates dropping nearly every 2 weeks. The speed and frequency at which Anthropic ships is absolutely unmatched … they don’t iterate, they redefine entire workflows overnight. Powered by their brand-new Opus 4.7 vision model (most capable yet). Describe your idea → it builds it live. Refine with inline comments, direct edits, or custom sliders. It auto-reads your codebase & design files to extract your team’s exact design system and applies it everywhere. Export to Canva, PDF, PPTX, or hand straight off to Claude Code. This is why Claude is pulling ahead. Mind-blowing. Try it now: claude.ai/design

Codex Compute efficient ✅ Always up, never down ✅ Best at hardcore engineering ✅ Crazy good app, first to escape the terminal ✅

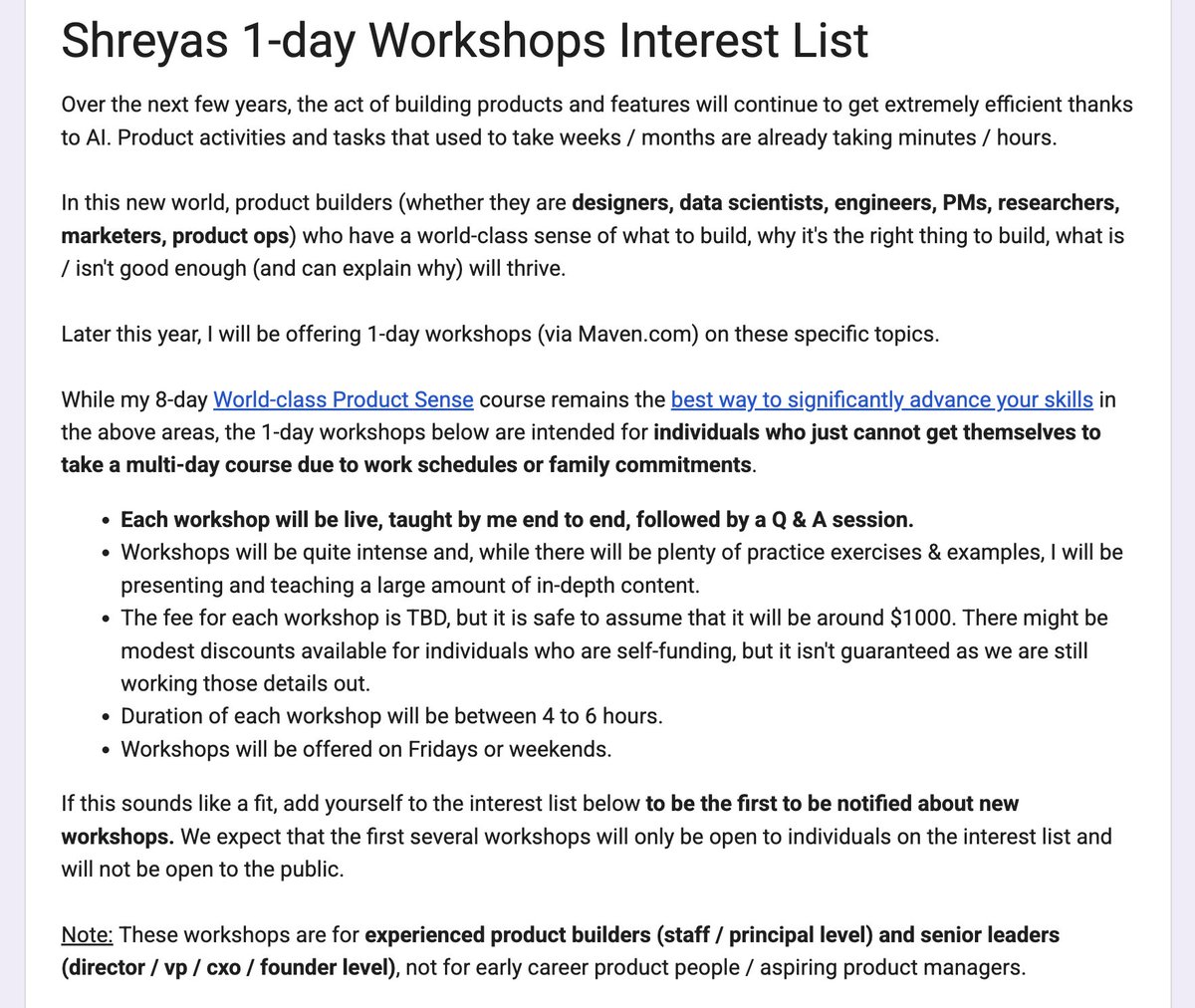

✨ Some news: I will soon be offering 1-day workshops on - Product Taste - Product Strategy - Product Creativity - Customer Empathy To get notified when these workshops open, let me know here: bit.ly/shreyas-worksh… (follow this link for details on fees, format, audience, etc)

Come build with us. We're hiring across the company: → AI Conversation Designer, Customer Support → Customer Experience Enablement Leader → Enterprise Technical Premium Support Specialist → Help Center Lead → Enterprise Customer Success Manager, AMER → Mid-Market Customer Success Manager → Customer Success Manager, Korea → AI Applications Engineer → Engineering Manager, Context (Agentic Search) → Staff Software Engineer, AI Agentic Search → Forward Deployed Software Engineer, Developer Platform → Software Engineer, Cloud Infrastructure → Software Engineer, Datastore → Software Engineer, Product Security → Software Engineer, Product Infrastructure → Software Engineer, Mobile Platform (Android) → Software Engineer, Permissions → Software Engineer, Enterprise → Software Engineer, Fullstack, Early Career → Model Behavior Engineer → Data Engineer, Go-To-Market → Data Engineer, Finance → Product Manager, Enterprise → User Researcher, Growth → Product Operations Manager → Corporate Finance → Finance Business Partner, R&D → Senior Accounting Manager → Commercial Counsel → Legal Ops Program & AI Enablement → Enterprise Product Marketing, GTM → Motion Designer, Brand → Regional Marketing Specialist, UK → Global Head of GTM Recruiting → Technical Recruiter → GTM AI + Innovation Manager → Partner Strategy & Operations Manager → Account Executive, Commercial (SF) → Enterprise Account Executive, New York → Solutions Engineer, Commercial → Application Security Engineer, AI Security Learn more: notion.com/careers

@BRRRRcollects @DScottDorgan @carolinedowney_ The issue is implicit bias, which affects everyone regardless of race. But since the majority of hiring managers are white, we see the bias benefits whites. There are lots of studies on this. AI has the ability to drive more meritocracy by reducing bias in the screening process.