Iñigo Alonso

@alonsonlp

Research Associate at @EdinburghNLP | Former Ph.D. student at @Hitz_zentroa | NLP | Multimodality | Table Understanding

Excited to present StructSum 📊🧠 at #ACL2024 with Andreea Marzoca & Francesco Piccinno @nopper! Come and say hi 🤝 if you'd like to chat about our paper, LLMs for generating structured outputs, or context-heavy NLP tasks. See you there!✨✨ arxiv.org/abs/2401.06837

New @hitz_zentroa paper! Accepted at @aclmeeting 2024: "Argument Mining in Data Scarce Settings: Cross-lingual Transfer and Few-shot Techniques", joint work with @ragerri and @jiporanm. #ACL2024NLP Paper: arxiv.org/abs/2407.03748 Data, code, and models: github.com/anaryegen/few_…

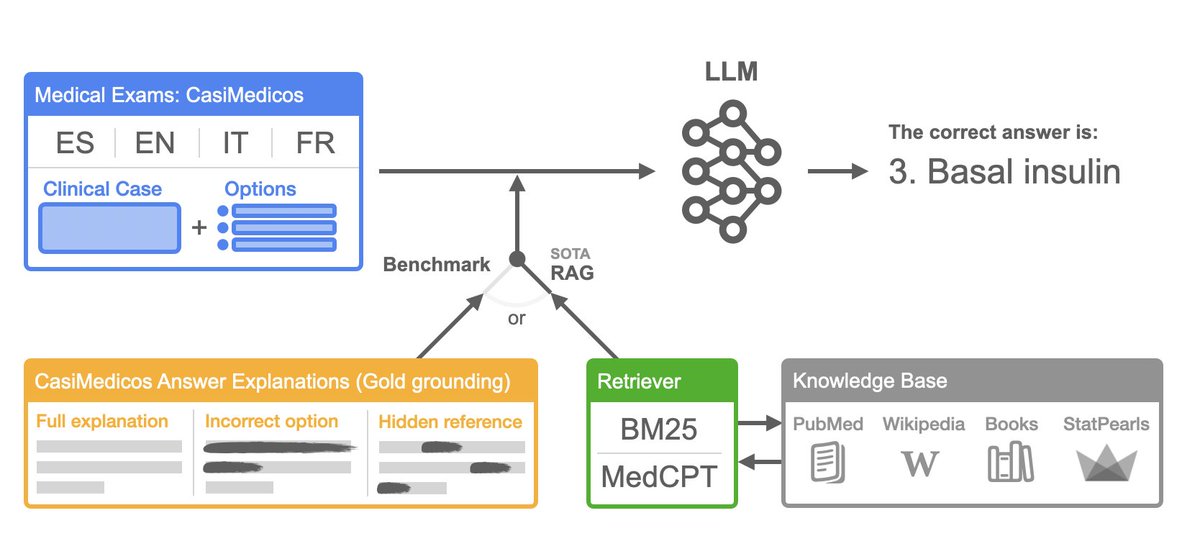

In our new paper, we introduce Latxa, a family of LLMs for Basque from 7B to 70B parameters that outperform open models and GPT-3.5. Models and datasets @huggingface hf.co/collections/Hi… Code: github.com/hitz-zentroa/l… Blog: hitz.eus/en/node/343 Paper: arxiv.org/abs/2403.20266

Reimagining table representation! In our new #ACL2024NLP paper we introduce PixT3: a family of image-based Table-to-Text Generation models that scale better at generating text from large tables, outperforming traditional text-based baselines. arxiv.org/abs/2311.09808

WE ARE HIRING!! 🥳 The HiTZ Center for Language Technology (hitz.eus) at the University of the Basque Country (UPV/EHU) invites applications for predoctoral and postdoctoral positions in Natural Language and Speech Processing. CHECK IT HERE!! hitz.eus/job-offers