Aaron Mueller

@amuuueller

Asst. Prof. in CS at @BU_Tweets ≡ {Mechanistic, causal} {interpretability, NLP}

1/ Introducing NanoGPT Slowrun 🐢: an open repo for state-of-the-art data-efficient learning algorithms. It's built for the crazy ideas that speedruns filter out -- expensive optimizers, heavy regularization, SGD replacements like evolutionary search.
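To make "SGD replacements like evolutionary search" concrete, here is a minimal evolution-strategies loop that updates parameters from loss evaluations alone, with no backpropagation. It is a generic sketch on a hypothetical quadratic toy loss, not code from the NanoGPT Slowrun repo. `toy_loss`, `TARGET`, `es_step`, `run_es` and all hyperparameters are illustrative assumptions. It also hints at why such methods are expensive: each step costs `pop_size` loss evaluations.

```python
import numpy as np

# Hypothetical optimum of the toy loss (illustrative only).
TARGET = np.array([3.0, -2.0, 0.5])


def toy_loss(theta: np.ndarray) -> float:
    """Toy quadratic standing in for a training loss; minimized at TARGET."""
    return float(np.sum((theta - TARGET) ** 2))


def es_step(theta, rng, pop_size=50, sigma=0.1, lr=0.05):
    """One evolution-strategies update (no gradients, only loss evaluations)."""
    # Sample Gaussian perturbations of the current parameters.
    eps = rng.standard_normal((pop_size, theta.size))
    # Evaluate the loss at every perturbed point: pop_size evaluations per step.
    losses = np.array([toy_loss(theta + sigma * e) for e in eps])
    # Subtract the mean loss as a baseline to reduce estimator variance.
    centered = losses - losses.mean()
    # Monte Carlo estimate of the gradient of the Gaussian-smoothed loss.
    grad_est = eps.T @ centered / (pop_size * sigma)
    return theta - lr * grad_est


def run_es(steps=300, seed=0):
    """Run ES from the origin and return the final parameters."""
    rng = np.random.default_rng(seed)
    theta = np.zeros_like(TARGET)
    for _ in range(steps):
        theta = es_step(theta, rng)
    return theta


theta = run_es()
print(theta, toy_loss(theta))
```

Scaling this idea to a language model would mean one full forward pass per population member per step, which is the kind of cost a speedrun would rule out.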

Can steering remove LLM shortcuts without breaking legitimate LLM capabilities? In our @eaclmeeting paper, we show that conceptual bias is separable from concept detection; this means inference-time debiasing is possible with minimal capability loss.

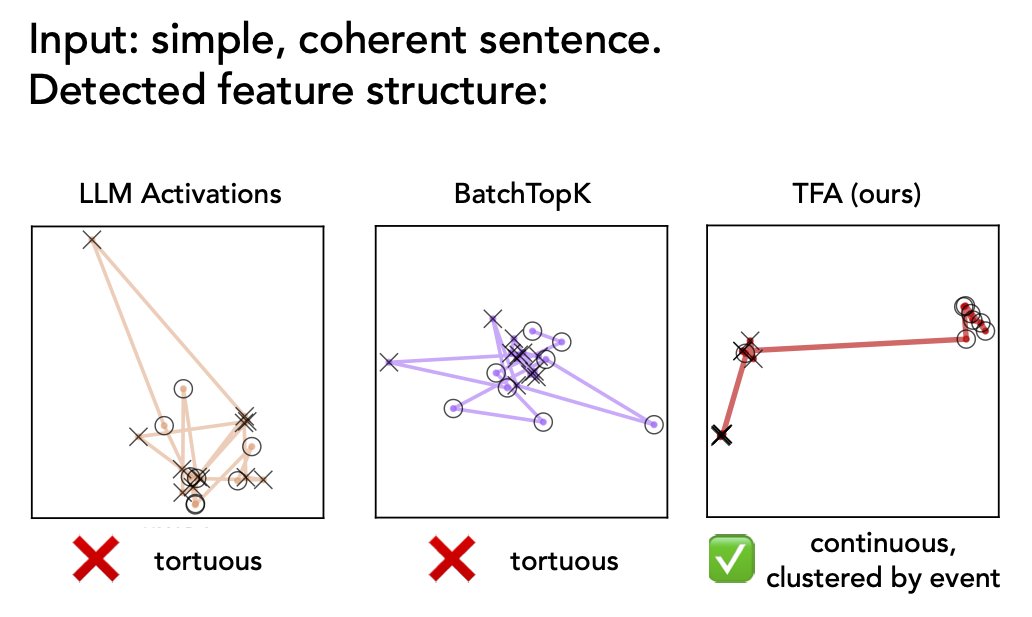
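One common, generic form of inference-time steering removes a concept direction from a model's hidden states by orthogonal projection. The toy below is not the method from the paper; the function name `ablate_direction`, the array shapes, and the assumption that a "bias" direction and a "detection" direction are orthogonal are all hypothetical. It only illustrates what separability would buy: if the two directions are orthogonal, projecting out the bias direction leaves the detection readout untouched.

```python
import numpy as np


def ablate_direction(acts: np.ndarray, direction: np.ndarray) -> np.ndarray:
    """Project `direction` out of every row of `acts`.

    acts: (n_tokens, d_model) hidden states (hypothetical shapes).
    direction: (d_model,) vector for the concept to suppress.
    """
    unit = direction / np.linalg.norm(direction)
    coeffs = acts @ unit  # (n_tokens,) component of each row along `unit`
    return acts - np.outer(coeffs, unit)  # rows are now orthogonal to `unit`


# Toy example with two hypothetical, orthogonal directions.
rng = np.random.default_rng(0)
d_model = 8
bias_dir = np.eye(d_model)[0]
detect_dir = np.eye(d_model)[1]

acts = rng.normal(size=(4, d_model))
steered = ablate_direction(acts, bias_dir)

print("bias readout after ablation:", steered @ bias_dir)
print("detection readout unchanged:", np.allclose(steered @ detect_dir, acts @ detect_dir))
```

In a real model both directions would have to be estimated and need not be exactly orthogonal; how the paper handles that is not described in the post.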

New paper! Language has rich, multiscale temporal structure, but sparse autoencoders assume features are *static* directions in activations. To address this, we propose Temporal Feature Analysis: a predictive coding protocol that models dynamics in LLM activations! (1/14)
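The general predictive-coding idea can be illustrated with a deliberately simple stand-in: fit a linear model that predicts each activation vector from the previous one, then split every activation into a part predicted from context and a residual "novel" part. This is a toy assumption, not the Temporal Feature Analysis protocol from the paper; the one-step linear predictor, the function names `fit_linear_predictor` and `split_predicted_novel`, and the synthetic data are all hypothetical.

```python
import numpy as np


def fit_linear_predictor(acts: np.ndarray) -> np.ndarray:
    """Least-squares matrix A such that acts[t] ≈ acts[t - 1] @ A.

    acts: (n_tokens, d_model) activations over a sequence (hypothetical).
    """
    A, *_ = np.linalg.lstsq(acts[:-1], acts[1:], rcond=None)
    return A


def split_predicted_novel(acts: np.ndarray, A: np.ndarray):
    """Split acts[1:] into a context-predicted part and a residual part."""
    predicted = acts[:-1] @ A
    novel = acts[1:] - predicted
    return predicted, novel


# Synthetic activations generated by a known linear dynamical system.
rng = np.random.default_rng(0)
d_model, n_tokens = 4, 50
Q, _ = np.linalg.qr(rng.normal(size=(d_model, d_model)))
A_true = 0.9 * Q  # stable dynamics: every eigenvalue has modulus 0.9
acts = np.empty((n_tokens, d_model))
acts[0] = rng.normal(size=d_model)
for t in range(1, n_tokens):
    acts[t] = acts[t - 1] @ A_true

A_fit = fit_linear_predictor(acts)
predicted, novel = split_predicted_novel(acts, A_fit)
print("max residual:", np.abs(novel).max())
```

Real activations are neither linear nor noise-free; the example only shows the predicted-versus-residual split that a predictive-coding view is built around.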