Ekdeep Singh Lubana

764 posts

@EkdeepL

Member of Technical Staff @GoodfireAI; Previously: Postdoc / PhD at Center for Brain Science, Harvard and University of Michigan

After 2 years probing #visionmodels at the #KempnerInstitute, @thomas_fel_ reflects on what he's learned, and on which pieces of the #interpretability puzzle remain hidden, as he heads to @GoodfireAI. Read the interview: bit.ly/4aEBzmp 🎙️🧩

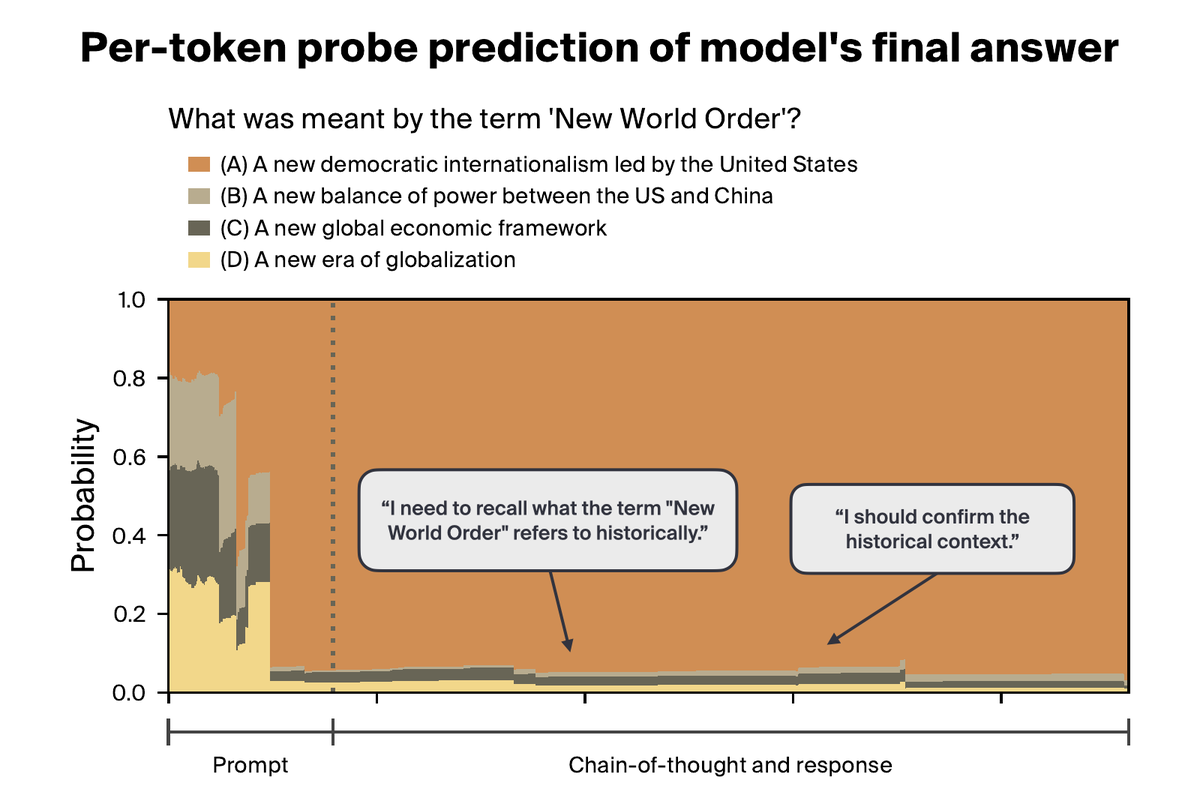

Can we train models to have more monitorable CoT? We introduce Counterfactual Simulation Training (CST) to improve CoT faithfulness and monitorability. CST produces models that admit to reward hacking and to deferring too much to Stanford profs (@chrisgpotts told me this is very dangerous)

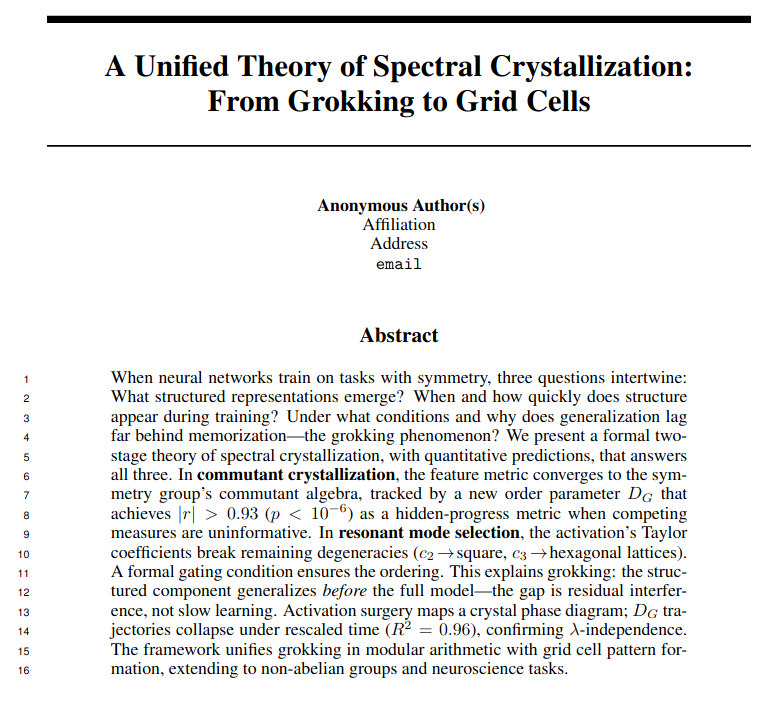

New paper: It's time to optimize for 🔁 self-consistency 🔁. We've pushed LLMs to the limits of available data, yet failures like sycophancy and factual inconsistency persist. We argue these stem from the same assumption: that behavior can be specified one I/O pair at a time. 🧵

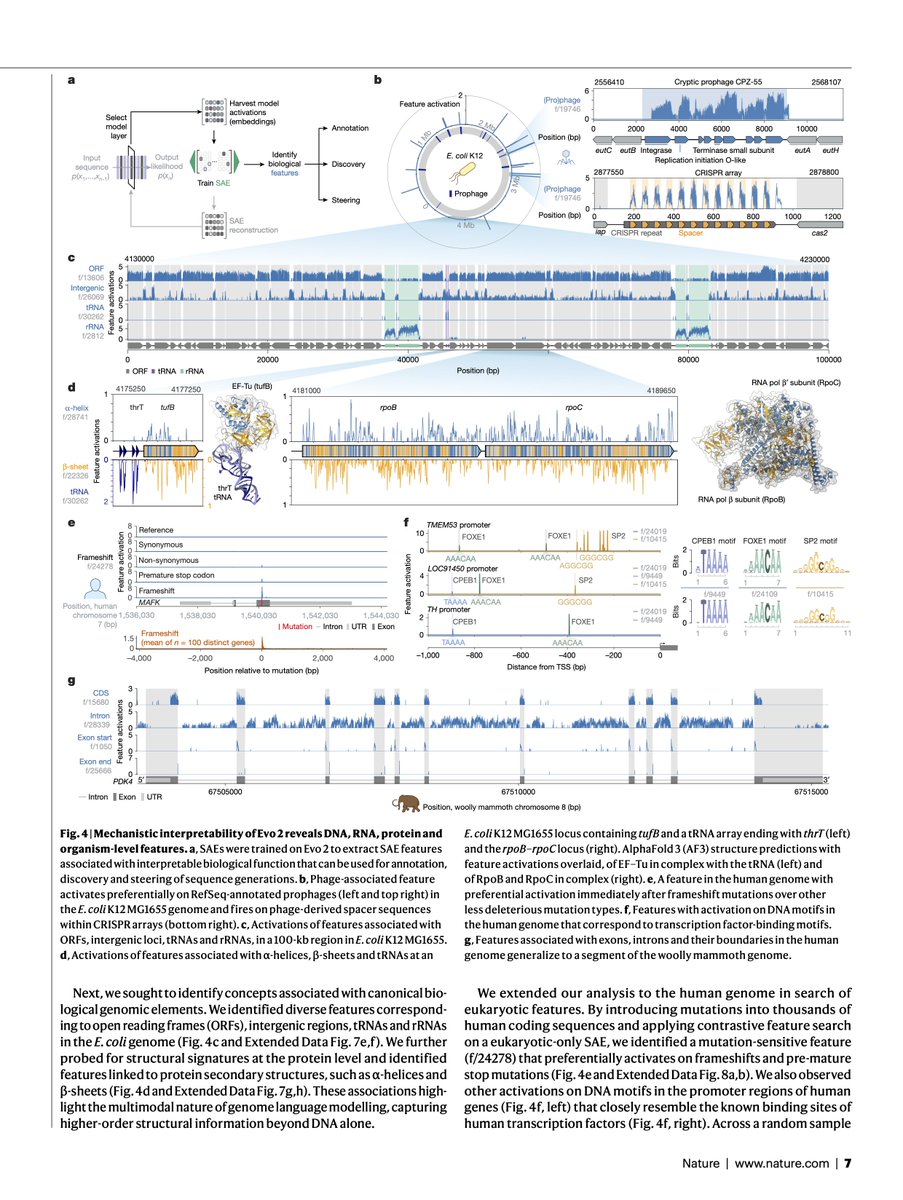

Evo 2, the largest fully open biological AI model to date, is now published in @Nature.

Bidding farewell to @KempnerInst! Leaving with great memories of fun moments and a truly supportive, wonderful community 🙏 If you want great research freedom surrounded by brilliant people, apply for the Kempner Fellowship. Cannot recommend it enough! Next stop: SF! 🌉

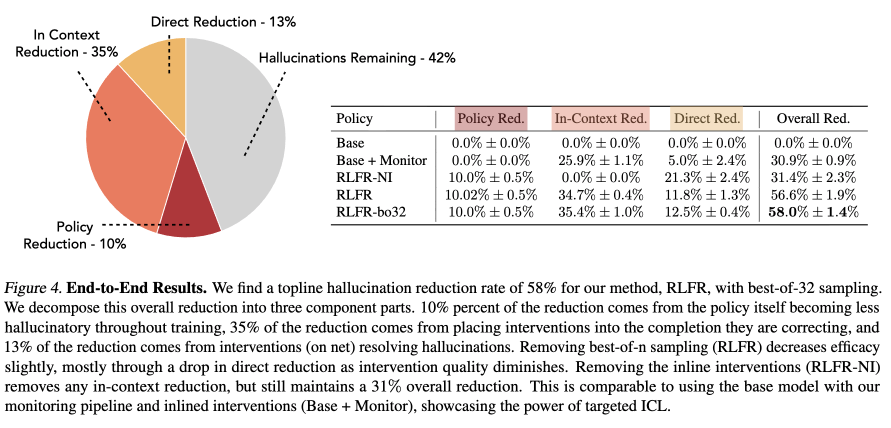

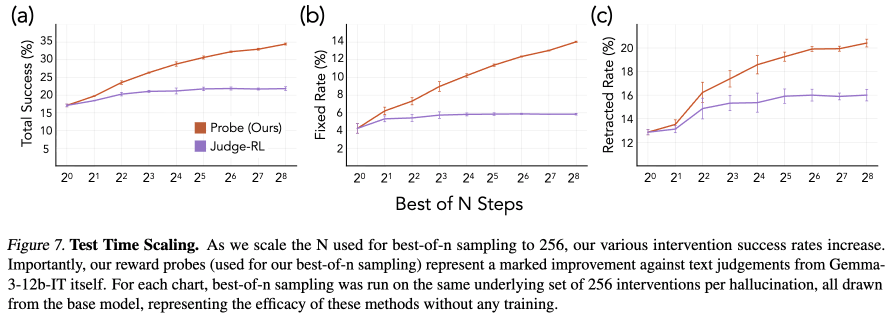

For this week's seminar, we are excited to host @EkdeepL from @GoodfireAI!

Date and Time: Thursday, February 19, 11:00 AM–12:00 PM Pacific Time
Zoom Link: stanford.zoom.us/j/93941842999?…
Title: Bayes-ed: Formalizing a Paradigm for Interpretability in the Language of Bayesian Inference

Abstract: Interpretability research has exploded in recent years, producing diverse, often heuristic attempts at understanding how models perform the tasks they do. In this talk, I intend to present steps towards a framework that helps concretize these heuristics and also expands the notion of what it means to interpret a model. Specifically, focusing on in-context learning, we will start with a behavior-first analysis and define Bayesian models that predict both the outputs produced by large-scale Transformers and, assuming power-law scaling, their learning dynamics. We then use these Bayesian models as our guiding object and characterize how representations ought to be structured to support such a behavioral model, making feature geometry a core object of study for interpretability. This lens helps us characterize the limitations of several existing interpretability paradigms (e.g., SAEs), but it also offers a path forward: either designing tools with appropriate geometric assumptions or post-processing SAE activations. Critically, this implies there is no silver bullet in bottom-up interpretability: behavior guides which tool or post-processing ought to be used.

Grounded in this discussion, we then assess the utility of our framework by asking how representations can be used to influence behavior: we will make precise what inference-time interventions like activation steering are trying to achieve, how existing protocols rest on inherently incorrect assumptions, and how this can be fixed. Further, by making a formal link to post-training (grounded in existing Bayesian accounts of RLHF), we will show that inference-time interventions can be seen as rejection sampling, motivating a pipeline for amortizing this process and leading to scalable oversight approaches grounded in interpretability. As a case study, we will operationalize a naive version of this pipeline for the task of reducing hallucinations, resulting in a 58% reduction in hallucinated claims in an open-source LLM at 100x lower cost than using a frontier-model judge.

Excited to see everyone at the seminar!

We used interpretability to scale RL to open-ended tasks, cutting Gemma 12B's hallucination rate in half by teaching it to self-correct in tandem with our probing harness.