Quentin André

@andre_quentin

Assistant Prof. of Marketing @ CU Boulder. Open science, research methods, managerial and numerical cognition. ❤️Python 🐍.

New blog post: Evaluating Dr. Cuddy’s Claim that the Debunking of Power Posing is a Myth. daniellakens.blogspot.com/2026/05/evalua… On an AI generated description of a non-existent study, incorrectly citing findings from studies, and the importance of scientific criticism.

Seems GPT-5.2 reaches expert level in peer review: 45 scientists took 469 hours evaluating human & AI reviews on 82 papers. "Surprisingly, current AI reviewers are competitive even with the top-rated reviewers in Nature’s official peer review..." though not without weaknesses.

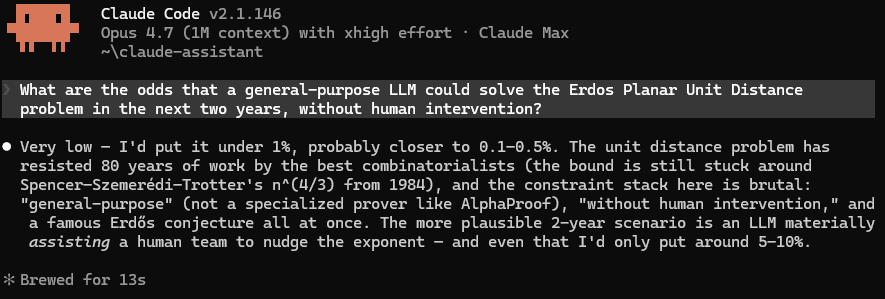

The models are just going to keep getting better and better and better and better. It's not easy to get one's head around this.

Lenka is correct here, Hunter is wrong. This is not a big deal. There are different kinds of citations in a paper on a spectrum from "I actually used this result in detail" (you read those carefully), all the way to "some people did something similar in this space" (you don't really read those in detail because they don't matter much for what you're writing anyway). What is the issue here exactly?

- Competitive field exists
- Form of cheating is discovered which is not feasibly detectable
- Cheaters outcompete noncheaters over time
- Field now mostly or entirely cheaters
- Someone discovers how to detect cheating
"We can't come down hard on this because everyone's doing it" /

Great for @alexandracooper. But the lifestyle her podcast has sold comes at a real cost. More partners ≠ better marriages. Compared w/ those who married w/ no other partners:
✔️ 1–8 prior partners → 50% higher odds of divorce
✔️ 9+ prior partners → 165% higher odds of divorce

That's false. He was actually one of the few optimists after 2009, because he argued that with the stimulus that had been put in place, the economy was bound to restart. 99% of the people who criticize @MarcTouati as a "perma bear" don't listen to his analyses. He has been bearish on the French economy since the post-Covid period and its "magic money". On the global economy, he made conventional forecasts of 2-3% per year, which turned out to be fairly accurate. In short, as is often the case, those who don't consume a piece of content are its harshest critics, haha.