Pinned Tweet

André Faria Gomes

6.2K posts

@andrefaria

CEO @ bluesoftbr | Board Advisor | Angel Investor | 25+ Years Scaling B2B SaaS | Helping Retail Thrive with Technology 🚀

São Paulo, Brazil | Joined July 2008

3K Following | 2.3K Followers

André Faria Gomes retweeted

a good writeup about Muse Spark on a few complex queries (multimodal, stock analysis, coding):

riteshkhanna.com/blog/muse-spar…

André Faria Gomes retweeted

It took a while, but it's here.

AI at Meta @AIatMeta

Introducing Muse Spark, the first in the Muse family of models developed by Meta Superintelligence Labs. Muse Spark is a natively multimodal reasoning model with support for tool-use, visual chain of thought, and multi-agent orchestration. Muse Spark is available today at meta.ai and the Meta AI app. We’re also making it available in private preview via API to select partners, and we hope to open-source future versions of the model. Learn more: go.meta.me/43ea00

André Faria Gomes retweeted

The Linux Foundation is putting Claude Mythos preview to work for defensive security purposes as part of a new initiative with partners including @AnthropicAI, @awscloud, @Apple, @Broadcom, @Cisco, @CrowdStrike, @Google, @jpmorgan, @Microsoft, @NVIDIA, and @PaloAltoNtwks. Together, we will secure the world’s most critical software in a new AI era for open source maintainers.

Read more here: linuxfoundation.org/blog/project-g…

#OpenSource #AI

André Faria Gomes retweeted

Google is partnering with the Brazilian government to track deforestation and protect the future of our forests.

A new high-resolution satellite imagery map of the country’s landscape is 6x more precise than ever before, giving authorities a baseline to monitor land use and accelerate conservation efforts.

André Faria Gomes retweeted

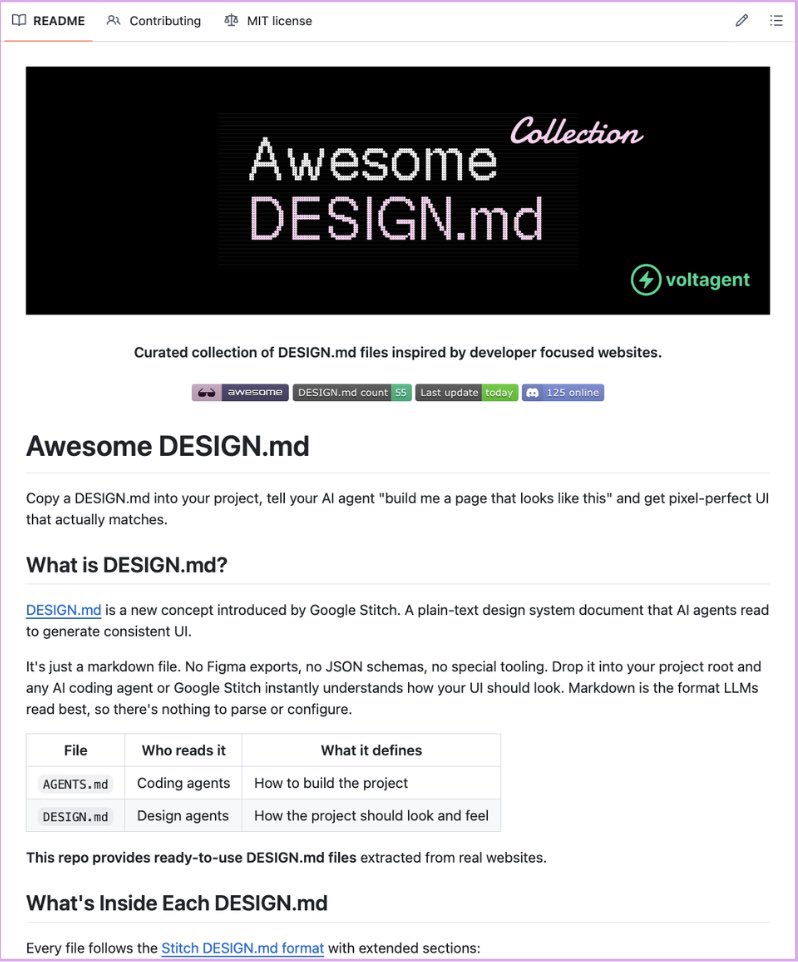

Google Stitch has released DESIGN.md 🤯

One markdown file that teaches your AI coding agent your entire design system.

→ No Figma exports

→ No JSON schemas

→ Nothing to configure

The part that saves the most time:

A free collection of 40+ pre-built files already exists, extracted from real products. Stripe, Vercel, Linear, Notion, Lovable, Claude, ElevenLabs, Cursor, Warp, Zapier, and more.

Drop it in your project root. Claude Code, Cursor, Gemini CLI, and GitHub Copilot all read it natively.

100% Free and Open-Source.

André Faria Gomes retweeted

There will be no “jobs apocalypse” due to AI — but there will be job chaos.

Our 2025 AI Job Impacts Analysis found that starting in 2028-2029, AI will create more jobs than it eliminates. Yet, each year, over 32 million jobs will be significantly transformed.

Explore and plan for the four scenarios for human workers in the age of AI: gtnr.it/4to0Zvc

#AI #Jobs #ArtificialIntelligence

André Faria Gomes retweeted

LLM Knowledge Bases

Something I'm finding very useful recently: using LLMs to build personal knowledge bases for various topics of research interest. In this way, a large fraction of my recent token throughput is going less into manipulating code, and more into manipulating knowledge (stored as markdown and images). The latest LLMs are quite good at it. So:

Data ingest:

I index source documents (articles, papers, repos, datasets, images, etc.) into a raw/ directory, then I use an LLM to incrementally "compile" a wiki, which is just a collection of .md files in a directory structure. The wiki includes summaries of all the data in raw/, backlinks, and then it categorizes data into concepts, writes articles for them, and links them all. To convert web articles into .md files I like to use the Obsidian Web Clipper extension, and then I also use a hotkey to download all the related images to local so that my LLM can easily reference them.

IDE:

I use Obsidian as the IDE "frontend" where I can view the raw data, the compiled wiki, and the derived visualizations. Important to note that the LLM writes and maintains all of the data of the wiki, I rarely touch it directly. I've played with a few Obsidian plugins to render and view data in other ways (e.g. Marp for slides).

Q&A:

Where things get interesting is that once your wiki is big enough (e.g. mine on some recent research is ~100 articles and ~400K words), you can ask your LLM agent all kinds of complex questions against the wiki, and it will go off, research the answers, etc. I thought I had to reach for fancy RAG, but the LLM has been pretty good about auto-maintaining index files and brief summaries of all the documents and it reads all the important related data fairly easily at this ~small scale.
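
As a rough illustration of the "index files and brief summaries" mentioned above, here is a minimal Python sketch. In the post the LLM maintains these files itself; this script only shows what such an index might contain. The function names, the `index.md` filename, and the "first heading plus first body line" summary rule are all assumptions for illustration, not details from the post.

```python
from pathlib import Path


def title_and_summary(text: str, fallback: str) -> tuple[str, str]:
    """Return the first '# ' heading and the first non-heading line of a note."""
    title = None
    summary = None
    for line in text.splitlines():
        stripped = line.strip()
        if not stripped:
            continue
        if title is None and stripped.startswith("# "):
            title = stripped[2:].strip()
        elif summary is None and not stripped.startswith("#"):
            summary = stripped
        if title is not None and summary is not None:
            break
    # Fall back to the file stem when the note has no top-level heading.
    return title or fallback, summary or ""


def build_index(wiki_dir: str, index_name: str = "index.md") -> str:
    """Write a one-line-per-article index file (hypothetical layout) and return it."""
    root = Path(wiki_dir)
    lines = ["# Index", ""]
    for path in sorted(root.rglob("*.md")):
        if path.name == index_name:
            continue  # never index an index file
        text = path.read_text(encoding="utf-8", errors="ignore")
        title, summary = title_and_summary(text, path.stem)
        rel = path.relative_to(root).as_posix()
        lines.append(f"- [{title}]({rel}): {summary[:120]}")
    content = "\n".join(lines) + "\n"
    (root / index_name).write_text(content, encoding="utf-8")
    return content
```

An agent could read a compact file like this first and then open only the articles that look relevant, which is one plausible reason the approach holds up without RAG at this scale.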

Output:

Instead of getting answers in text/terminal, I like to have it render markdown files for me, or slide shows (Marp format), or matplotlib images, all of which I then view again in Obsidian. You can imagine many other visual output formats depending on the query. Often, I end up "filing" the outputs back into the wiki to enhance it for further queries. So my own explorations and queries always "add up" in the knowledge base.

Linting:

I've run some LLM "health checks" over the wiki to e.g. find inconsistent data, impute missing data (with web searches), find interesting connections for new article candidates, etc., to incrementally clean up the wiki and enhance its overall data integrity. The LLMs are quite good at suggesting further questions to ask and look into.
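
The health checks described above are LLM-driven. A plain script can complement them with a mechanical check, for example flagging links that point at articles that do not exist. The sketch below is that swapped-in, non-LLM check, not something the post describes; it assumes Obsidian-style `[[Page]]` links, and `find_broken_links` is a hypothetical name.

```python
import re
from pathlib import Path

# Matches [[Target]], [[Target|alias]] and [[Target#Heading]], capturing only
# the target. The lookbehind skips embeds such as ![[image.png]].
WIKILINK = re.compile(r"(?<!!)\[\[([^\]|#]+)")


def find_broken_links(wiki_dir: str) -> list[tuple[str, str]]:
    """Return (source file, link target) pairs whose target note is missing."""
    root = Path(wiki_dir)
    # Assumption: a link resolves by note name alone, case-insensitively.
    pages = {p.stem.lower() for p in root.rglob("*.md")}
    broken = []
    for path in sorted(root.rglob("*.md")):
        text = path.read_text(encoding="utf-8", errors="ignore")
        for target in WIKILINK.findall(text):
            name = target.strip().split("/")[-1]
            if name.lower().endswith(".md"):
                name = name[:-3]
            if name.lower() not in pages:
                broken.append((path.relative_to(root).as_posix(), target.strip()))
    return broken
```

The resulting list could be handed to the LLM as a to-do list of articles to write or links to repair.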

Extra tools:

I find myself developing additional tools to process the data, e.g. I vibe coded a small and naive search engine over the wiki, which I both use directly (in a web ui), but more often I want to hand it off to an LLM via CLI as a tool for larger queries.
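
A "small and naive search engine" of this kind could look roughly like the Python sketch below. It is not the author's tool: the term-count scoring, the `search` function, and the command-line shape are assumptions chosen to keep the example short.

```python
import re
import sys
from collections import Counter
from pathlib import Path


def tokenize(text: str) -> list[str]:
    """Lowercase the text and split it into alphanumeric tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


def search(wiki_dir: str, query: str, top_k: int = 5) -> list[tuple[str, int]]:
    """Rank .md files by how many times the query terms occur in them."""
    terms = set(tokenize(query))
    scores = []
    for path in sorted(Path(wiki_dir).rglob("*.md")):
        text = path.read_text(encoding="utf-8", errors="ignore")
        counts = Counter(tokenize(text))
        score = sum(counts[term] for term in terms)
        if score > 0:
            scores.append((str(path), score))
    # Highest score first; ties are broken by path so the output is stable.
    scores.sort(key=lambda item: (-item[1], item[0]))
    return scores[:top_k]


# CLI entry point, e.g.: python wiki_search.py ./wiki attention heads
if __name__ == "__main__" and len(sys.argv) >= 3:
    for path, score in search(sys.argv[1], " ".join(sys.argv[2:])):
        print(f"{score}\t{path}")
```

Printing tab-separated lines keeps the output easy for an LLM agent to parse when the script is exposed to it as a CLI tool.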

Further explorations:

As the repo grows, the natural desire is to also think about synthetic data generation + finetuning to have your LLM "know" the data in its weights instead of just context windows.

TLDR: raw data from a given number of sources is collected, then compiled by an LLM into a .md wiki, then operated on by various CLIs by the LLM to do Q&A and to incrementally enhance the wiki, and all of it viewable in Obsidian. You rarely ever write or edit the wiki manually, it's the domain of the LLM. I think there is room here for an incredible new product instead of a hacky collection of scripts.

André Faria Gomes retweeted

ChatGPT is now available in CarPlay.

The voice mode you know, now available on-the-go.

Rolling out to iPhone users running iOS 26.4+ where CarPlay is supported.

Gui Ferreira @gsferreira

ChatGPT voice mode should be available on Apple CarPlay

André Faria Gomes retweeted