anish chhaparwal


After basically ignoring every trend in Python tooling for the last ten years, I've recently become a full-blown uv convert. Here's an unreasonably long write-up on why, and how you can adopt it in your own projects: saaspegasus.com/guides/uv-deep…

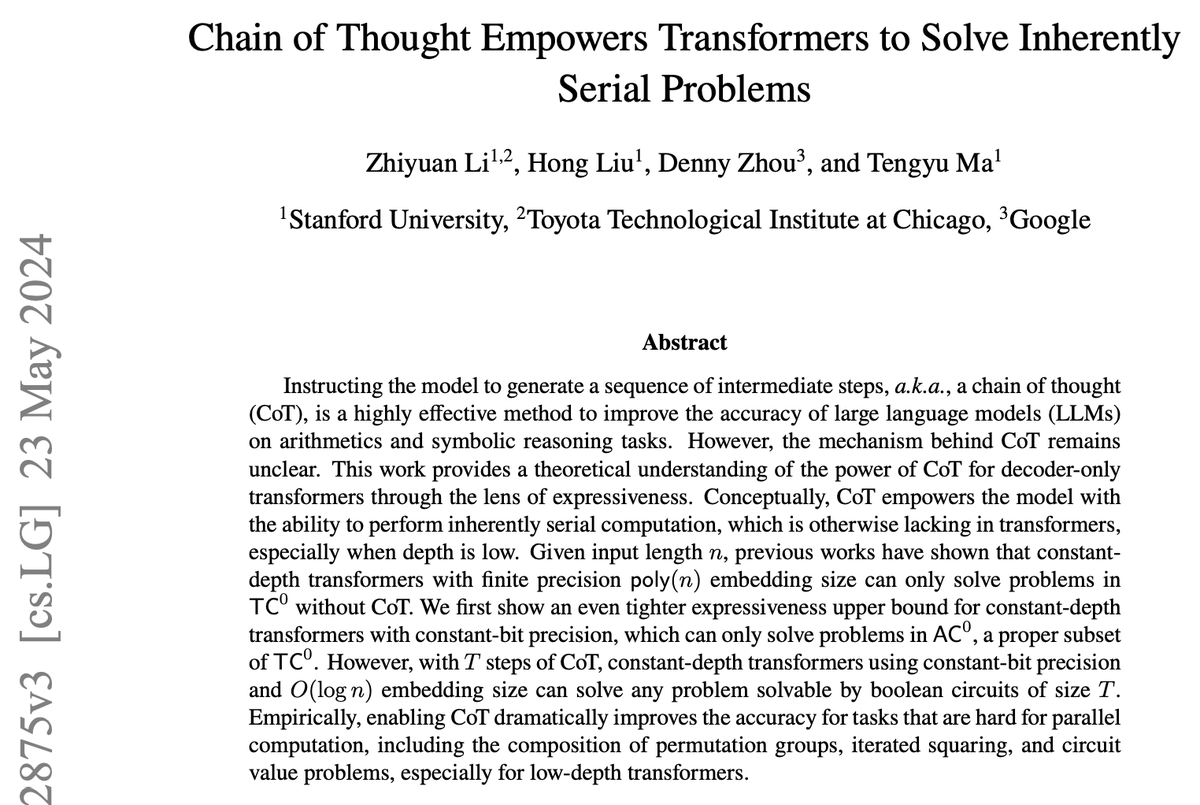

What is the performance limit when scaling LLM inference? Sky's the limit. We have mathematically proven that transformers can solve any problem, provided they are allowed to generate as many intermediate reasoning tokens as needed. Remarkably, constant depth is sufficient. arxiv.org/abs/2402.12875 (ICLR 2024)

Connected my MacBook Pro GPU to my Linux laptop's NVIDIA GPU using @exolabs_. Running really large AI models with 193 TFLOPS of compute, combining the M3 GPU + RTX 4090.

@AarthiScans is the first pan-India diagnostic chain to provide #AI-powered triaging of CT reports across all 36 of its radiology branches.