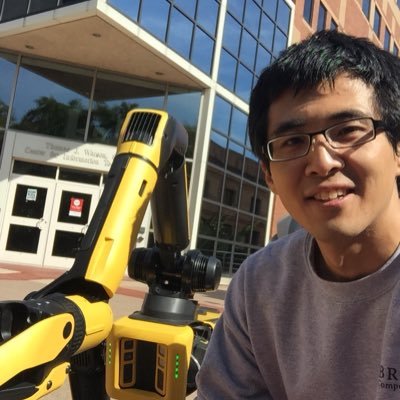

Ankit Shah

155 posts

@ankitjs

Research Scientist at The Boston Dynamics AI Institute. Prev at Brown CS, Ph.D. from MIT. Making robots easy to program and deploy. https://t.co/MjcDjhu9pd

@GaryMarcus @JonnyCoook @silviasapora @aahmadian_ @akbirkhan @_rockt @j_foerst @ParshinShojaee @i_mirzadeh @MFarajtabar @nouhadziri The programs we look at are quite simple and all represent novel combinations of familiar operations. We also find lower performance for more complex programs, especially for the compositions. Also, I have a sense that LLMs can handle OOD problems more easily when they are represented in code.

I still haven’t read that Apple paper but I see a lot of people complaining about it. To me, the complaints I’ve seen seem ill-founded given what I understand about it, but obviously it’s hard for me to judge without having read the paper. What are the most reasonable complaints?

Which company has been the best for the world on net?

How do robots understand natural language? #IJCAI2024 survey paper on robotic language grounding. We situated papers along a spectrum w/ two poles: grounding language to symbols and grounding it to high-dimensional embeddings. We discussed tradeoffs, open problems & exciting future directions!