Ankur Banerjee

@ankurb

Senior Product Director @zama. Co-founder @cheqd_io. Co-chair of Technical SteerCo @DecentralizedID. Ex @FinTechLabLDN, @inside_r3, @Accenture

JUST IN 🧵 1/ TokenOps is joining @zama to roll out confidential and fully compliant token distributions, airdrops, and vesting across public blockchains. Public blockchains just became viable for institutional capital.

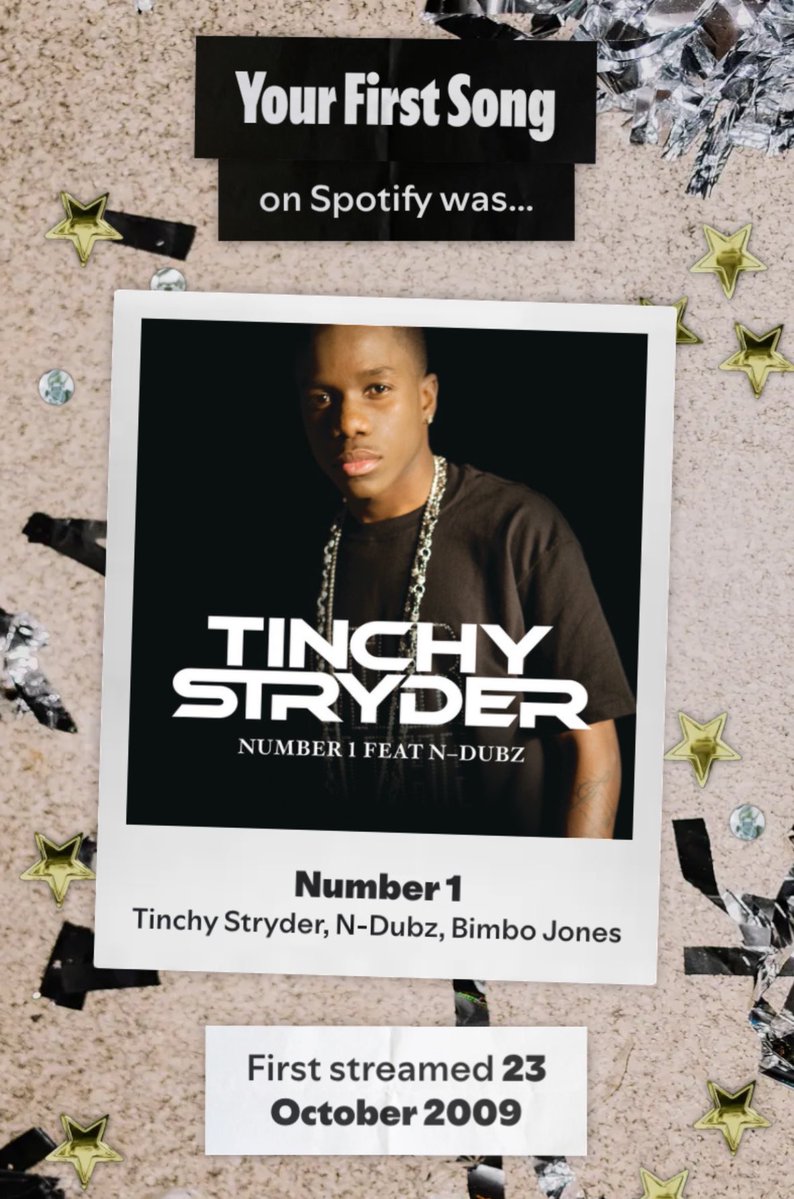

Hard to believe my first song ever on Spotify was Shania Twain 😂

For developers: building confidential dApps just got easier. Introducing the new Zama SDK: a TypeScript SDK that abstracts FHE complexity behind familiar ERC-20-style interfaces. Build from 0 to a working confidential transfer in < 5mins. Quick start: docs.zama.org/protocol/sdk/g…

We're tracking almost all privacy sector projects. Listened to the Shielded quarterly report from the ZAMA team yesterday. Really pro, and a lot of alpha on that call. Price is following fundamentals, it seems ⚡️

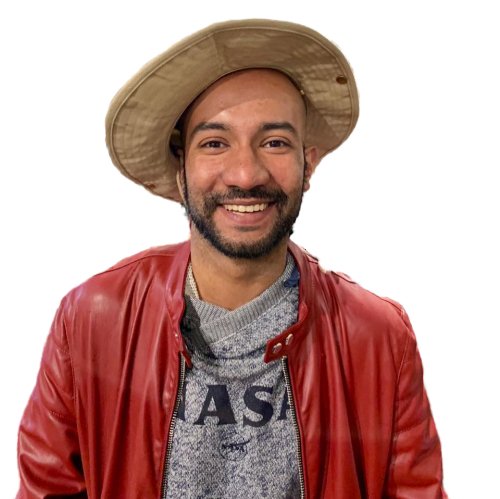

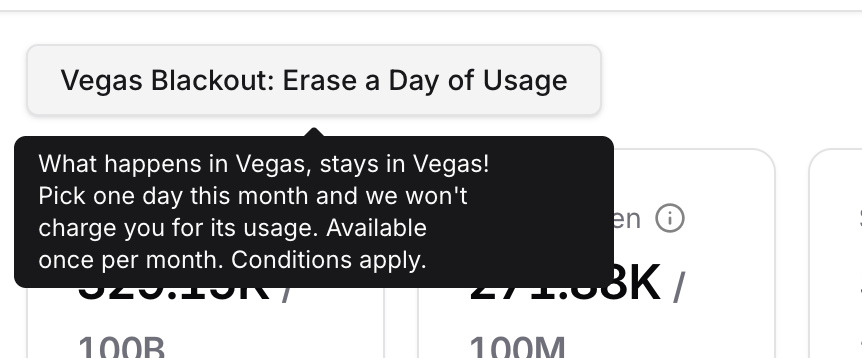

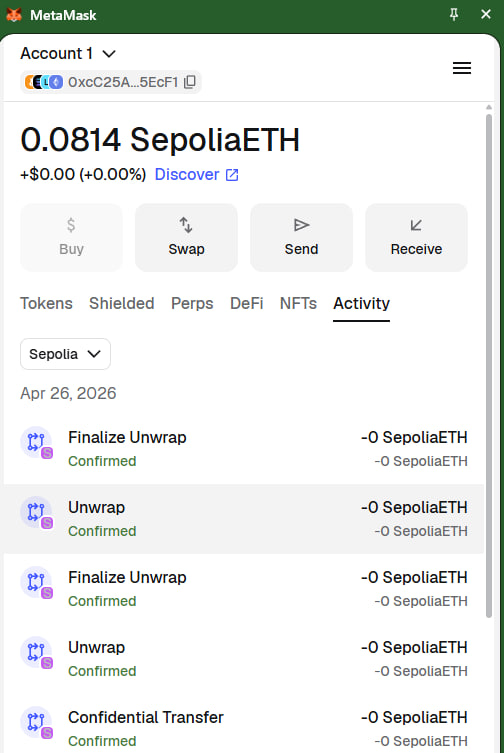

🦊 Confidential Tokens (ERC-7984) live in MetaMask.

I've officially implemented native ERC-7984 support directly inside the @MetaMask extension... running on Mainnet.

What's live:
✅ Confidential Balance Tracking (view all your cTokens without switching tabs)
✅ Confidential Sends
✅ Shielding
✅ User Decryption Flow
🔃 Unwrapping: 90% done (coming next)

This is powered by the @zama protocol. I am once again the first to ship this natively.

The Strategy: While my zWallet remains a great experimental tool, I know that for mass adoption we need these features inside the wallets people already trust.

This is an unofficial build, but it serves as a proof of concept: I'm showcasing just how easy it is to bring FHE-native privacy to established wallets.

Building what doesn't exist yet... that's the mission. $ZAMA