Ankur Bapna

547 posts

@ankurbpn

Conversational Audio models @Meta Previously Gemini Native Audio @GoogleDeepmind

Joined February 2014

656 Following, 1.1K Followers

Ankur Bapna retweeted

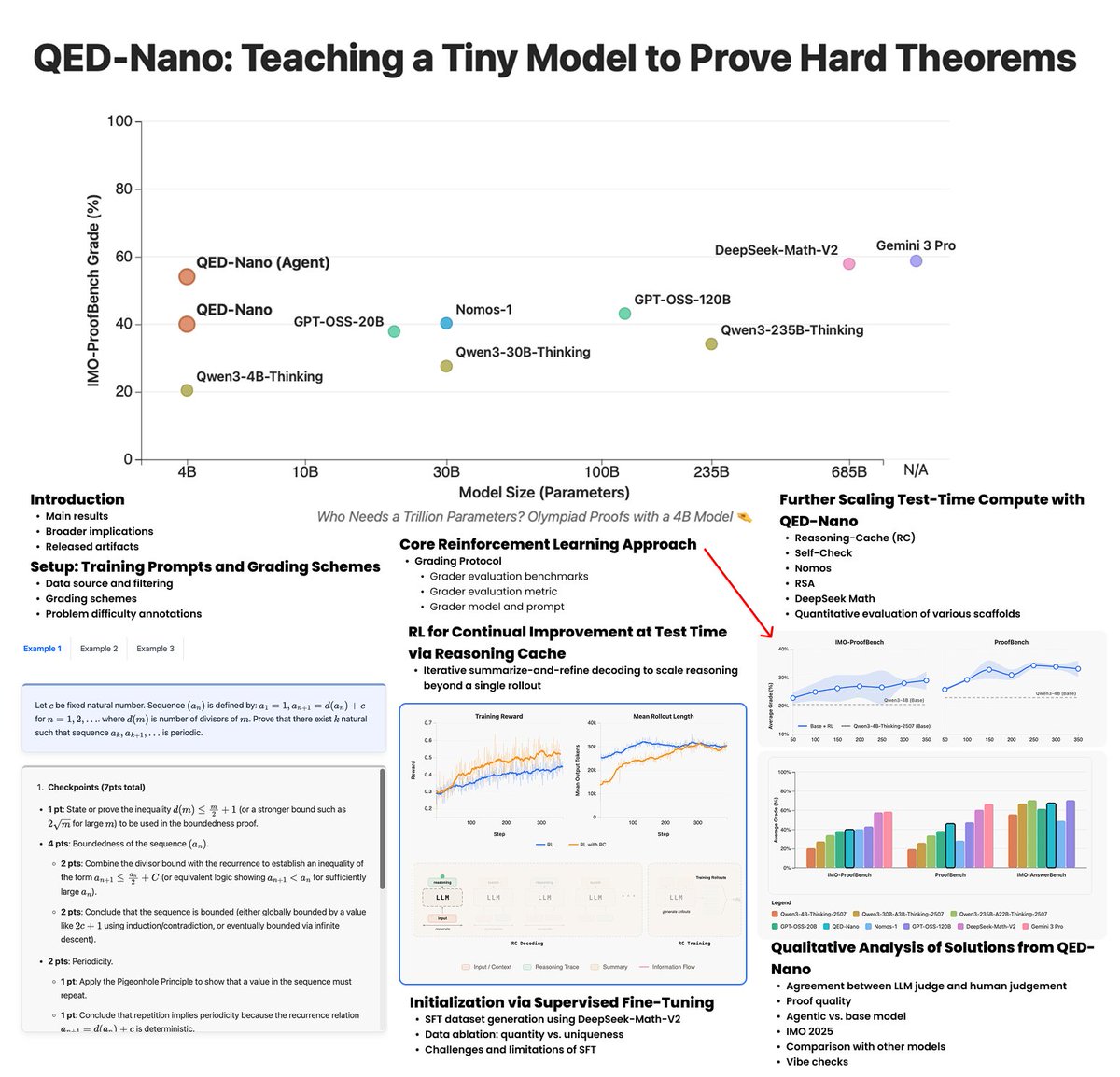

We trained a tiny 4B model to reason for millions of tokens through IMO-level problems.

Heaps excited to share our new blog post covering the full pipeline, from distilling the 🐳 to augmenting RL with a reasoning cache that unlocks extreme inference-time scaling for theorem proving.

huggingface.co/spaces/lm-prov…

Ankur Bapna retweeted

I'm increasingly taking pretty strong versions of this view seriously.

Anthropic@AnthropicAI

AI assistants like Claude can seem shockingly human—expressing joy or distress, and using anthropomorphic language to describe themselves. Why? In a new post we describe a theory that explains why AIs act like humans: the persona selection model. anthropic.com/research/perso…

Ankur Bapna retweeted

New Blogpost: How to game the METR plot🚨

In 2025, a single graph changed AGI timelines, investments, research priorities, model quality assessments and much more.

But if you squint harder, only 14 prompts shaped AI discourse over this year. That's all the data in the 1-4 hour horizon length regime that matters. 🕵️

What's more? A majority of these are about Cybersecurity capture the flag contests, and training a Machine Learning model.

> Post-train your model on CTF and ML codebases

> profit 📈! its METR horizon length will increase.

Exactly what OpenAI has been targeting in its Codex model releases... and is Anthropic underperforming in the 2-4hr range because it mostly consists of cybersecurity, which is dual-use for safety?

To be clear, I think it's an excellent idea to track horizon lengths instead of benchmark accuracy. But under the current modelling assumption of success probability being a logistic function of task length, SWAA+HCAST accuracy improvements alone might explain the exponential progress in horizon length 🔎

In the blog, I show detailed evidence for why we need to stop overindexing on the METR plot. Share it with anyone you see making decisions based on where the latest model lands on the METR plot.

shash42.substack.com/p/how-to-game-…
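The post's point about the logistic modelling assumption can be illustrated with a minimal sketch. It assumes success probability is a logistic function of log2 task length, `p = sigmoid(intercept - slope * log2(minutes))`. This parameterization, the function names, and every number below are hypothetical choices for illustration, not values taken from METR or from the blog post.

```python
import math


def success_probability(task_minutes, intercept, slope):
    """Modelled success probability for a task of the given length.

    Assumed form: p = sigmoid(intercept - slope * log2(task_minutes)).
    """
    z = intercept - slope * math.log2(task_minutes)
    return 1.0 / (1.0 + math.exp(-z))


def horizon_minutes(intercept, slope, p=0.5):
    """Task length at which the modelled success probability equals p."""
    logit_p = math.log(p / (1.0 - p))
    return 2.0 ** ((intercept - logit_p) / slope)


if __name__ == "__main__":
    slope = 0.6  # hypothetical, held fixed across "model generations"
    # Hypothetical intercepts: each step is the same additive gain in logit,
    # i.e. a uniform accuracy improvement across all task lengths.
    for intercept in (3.0, 3.6, 4.2):
        h = horizon_minutes(intercept, slope)
        print(f"intercept={intercept:.1f} -> 50% horizon ~ {h:.0f} min")
```

Under these assumptions, each equal additive gain in the intercept multiplies the 50% horizon by a constant factor (here it roughly doubles per step). So steady accuracy gains on any part of the task distribution can show up as exponential horizon growth, even if nothing specific changed on long tasks.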

Ankur Bapna retweeted

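The latest "Gemini 2.5 Flash / Pro TTS" models have been released in preview on Google AI Studio.

As a researcher who has followed this field for many years, and as a Googler, I find it deeply moving that new results keep building on a product my teammates and I helped develop, and that it continues to evolve.

Gemini TTS is resolving, at a very high level, the trade-off between "naturalness" and "controllability" that has long been a major challenge in speech synthesis.

1. Context-aware pauses and pacing: Rather than simply reading text aloud, the AI understands context such as tension or relief and adjusts the speaking rate automatically or as instructed.

2. Style expression as intended: Gemini TTS follows abstract tone instructions such as "cheerful" or "serious" with surprising fidelity.

3. Natural multi-speaker dialogue: It delivers smooth conversational back-and-forth while keeping each character consistent.

Developers, please try the new Gemini TTS in AI Studio.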

x.com/GoogleAIStudio…

Google AI Studio@GoogleAIStudio

Ankur Bapna retweeted

We’re launching Gemini 2.5 Flash and Pro Text-to-Speech (TTS) model updates 🚀

Improvements include:

- Emotional style and tone versatility

- Context-aware pacing control

- Improved multiple-speaker capabilities

Dive into the blog to learn how these advancements are giving developers more control over speech generation. blog.google/technology/dev…

Ankur Bapna retweeted

Multimodal is, unfortunately, I believe, much more sparsely jagged than language!

Language happens to have a lot of "densely jagged" regions that almost feel continuous, it's what the GPT2 paper title was about; vision not so much, and audio I'm unsure.

My intuition, anyways.

Zephyr@zephyr_z9

Demis explicitly aims to solve multimodal. And he doesn't sell "jagged AGI"

Ankur Bapna retweeted

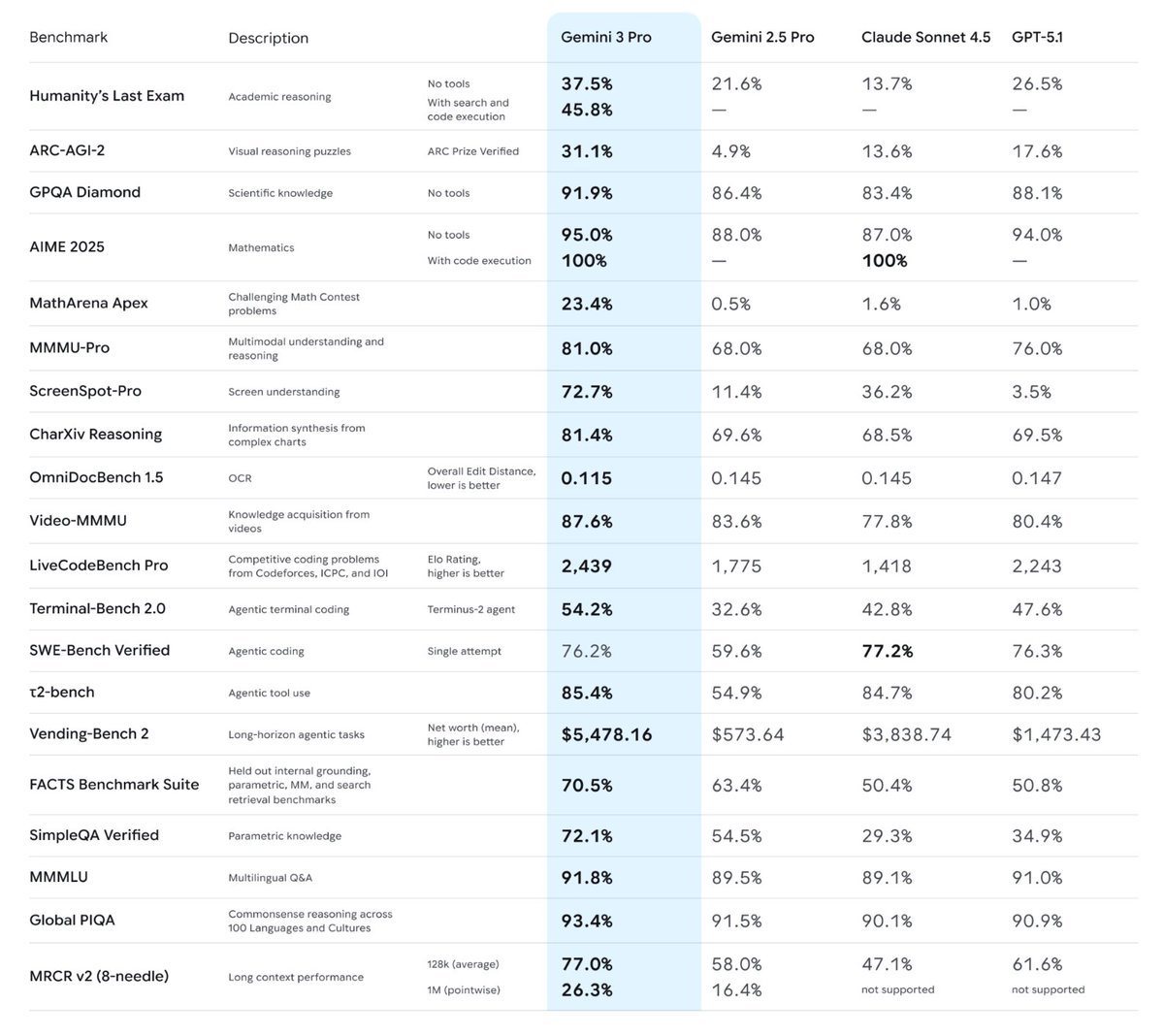

I’m really excited about our release of Gemini 3 today, the result of hard work by many, many people in the Gemini team and all across Google! 🎊

We’ve built many exciting new product experiences with it, as you’ll see today and in the coming weeks and months.

You can find it today on @GeminiApp and AI Mode in Search. For developers, you can build with it now in @GoogleAIStudio and Vertex AI.

blog.google/products/gemin…

The model performs quite well on a wide range of benchmarks.

Ankur Bapna retweeted

Gemini Live’s new model updates are now available on the @GeminiApp on Android and iOS. Conversations are more adaptive and expressive, opening up new ways to learn and practice skills. Here are five ways you can try out these new updates:

- Tailor your learning. Ask Gemini to explain a topic in your lesson plan and then say, "Okay, speed up," to get a crash course on the way to your next class.

- You can now get tailored practice when learning a new language. Ask Gemini to quiz you on multiples of 10 in Korean, or practice casual greetings in Spanish. This allows you to gain real-world speaking experience in a low-risk setting.

- Practice for your next big moment, like job interviews, or prepare for tough conversations with Gemini's ability to respond to your situation.

- Hear stories come to life. Try asking Gemini to tell you about the Roman empire from the perspective of Julius Caesar himself.

- Liven things up by asking Gemini to speak in a fun accent, like a cowboy accent when brainstorming ideas for a rodeo-themed birthday party.

Ankur Bapna retweeted

Google’s Gemini 2.5 Native Audio Thinking is the new leading Speech to Speech model per our Artificial Analysis Big Bench Audio benchmark

The new model achieves a score of 92% on Big Bench Audio, the highest result recorded by Artificial Analysis to date. This not only places it ahead of all previously tested native Speech to Speech systems, but also above a GPT-4o pipeline approach (Whisper transcription → GPT-4o text reasoning → speech generation).

Benchmark context: Big Bench Audio is the first dedicated dataset for evaluating reasoning performance of speech models. Big Bench Audio comprises 1,000 audio questions adapted from the Big Bench Hard text test set, chosen for its rigorous testing of advanced reasoning, translated into the audio domain.

Performance:

➤ Reasoning: Achieves 92% on Big Bench Audio, setting a new state-of-the-art for native Speech to Speech reasoning

➤ Latency: At an average time to first token of 3.87 seconds, the new model is slower than leading OpenAI models including GPT Realtime (0.98 seconds), due to the thinking component. The non-thinking equivalent still leads on latency at 0.63 seconds

Model details:

➤ Processes audio, video, and text inputs directly, generating both text and natural speech outputs

➤ Reasons over spoken input without transcription

➤ Supports function calling, search grounding, and thinking budgets

➤ 128k input and 8k output token limits with a knowledge cut-off of January 2025

Ankur Bapna retweeted

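The text-to-speech models "Gemini 2.5 Pro TTS" and "Gemini 2.5 Flash TTS" have launched on Vertex AI.

- Generate speech with the emotion specified in the prompt

- Control pitch and pace in fine detail through text

At GA, support for streaming, multiple speakers, and 70+ locales is planned.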

cloud.google.com/text-to-speech…

This appears to be our old friends from the Google SoundStorm team. Sounds like good work!

microsoft.github.io/VibeVoice/

google-research.github.io/seanet/soundst…

Maziyar PANAHI@MaziyarPanahi

microsoft is dropping (still uploading) VibeVoice-1.5B model on @huggingface! i love the multi-speaker conversational audio feature for podcasts!

Ankur Bapna retweeted