Konstantin Anagnostou

2.2K posts

@browser_use @gregpr07 Installed it. It's like magic. Migrating browser use skills now. I use it with deepseek v4 pro for a first pass on unpaved domains; afterwards I use deepseek v4 flash with success! Integrated 1password cli. One thing it needs: a tab session manager, because tabs keep accumulating.

English

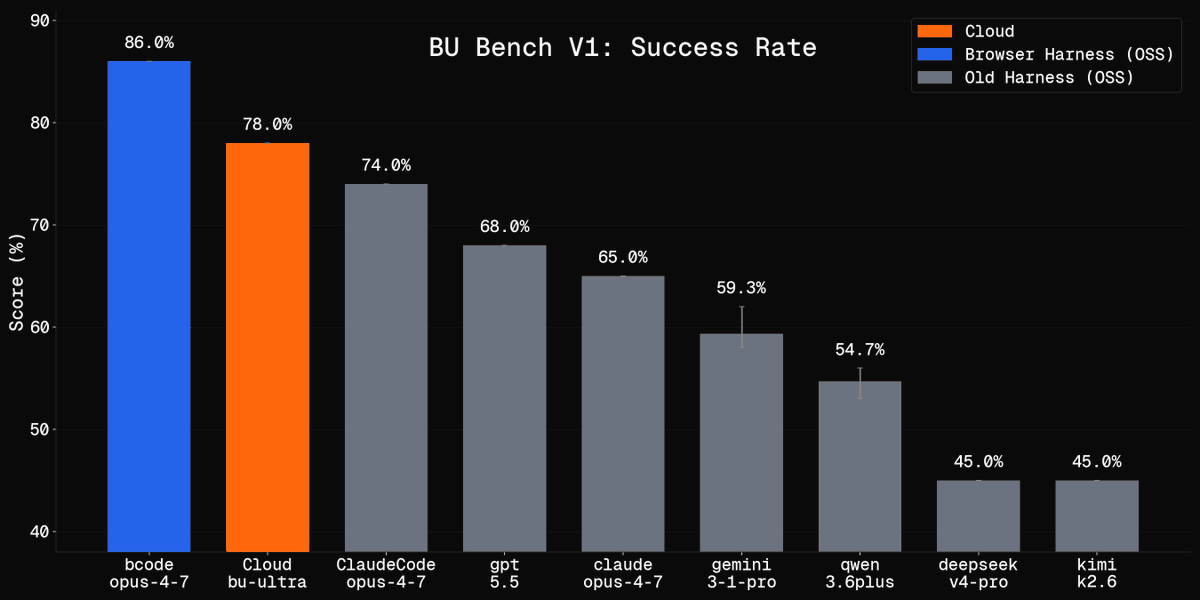

Browser Harness hit 10,000⭐️ on GitHub in 15 days

We are going to fix how agents interact with the browser.

Gregor Zunic@gregpr07

Introducing: Browser Harness. A self-healing harness that can complete virtually any browser task. ♞

We got tired of browser frameworks restricting the LLM. So we removed the framework.

> Self-healing: edits helpers.py on the fly
> Direct CDP: one websocket to Chrome
> No framework, no rails, complete freedom
> Drop-in for Claude Code and Codex

I challenge anyone to find a task that DOESN'T work. I couldn't yet. 🔥

100% open source ↓
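For context on what "one websocket to Chrome" involves: a Chrome DevTools Protocol (CDP) client sends JSON messages that each carry an `id`, a `method`, and `params`, and it matches replies by `id`. The sketch below only builds and matches those frames. It is not taken from Browser Harness, and the `CdpSession` name is hypothetical. Actually sending the frames would need a WebSocket connection to the `webSocketDebuggerUrl` that Chrome reports when started with `--remote-debugging-port`.

```python
import itertools
import json


class CdpSession:
    """Builds CDP command frames (hypothetical helper, no network I/O)."""

    def __init__(self):
        self._ids = itertools.count(1)  # CDP replies echo the id of the command
        self._pending = {}              # id -> method name still awaiting a reply

    def command(self, method, **params):
        """Return the JSON text for one CDP command, e.g. Page.navigate."""
        msg_id = next(self._ids)
        self._pending[msg_id] = method
        return json.dumps({"id": msg_id, "method": method, "params": params})

    def resolve(self, raw_reply):
        """Match a reply to its command; events (no id) return (None, payload)."""
        reply = json.loads(raw_reply)
        method = self._pending.pop(reply.get("id"), None)
        return method, reply.get("result", reply)


session = CdpSession()
frame = session.command("Page.navigate", url="https://example.com")
print(frame)
```

Keeping the pending-command table in one place is what lets a single socket carry many in-flight commands alongside unsolicited events.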

English

@anotherdaynow @PhotoGarrido @OpenAI @openclaw With the ChatGPT Pro subscription you get usage of "normal" GPT-5.5, and separately you can use GPT-5.3-Codex-Spark

Usage is calculated separately.

Español

@alexatallah Don't see any real world case applicable

English

@JuanjoSC @PhotoGarrido @OpenAI @openclaw What do you mean by using separate credits? So you’re not spending usage included in your subscription?

English

@PhotoGarrido @OpenAI @openclaw Agreed, in openclaw I run it with 5.3 codex spark, which uses separate credits, and it flies 😉

Español

@PhotoGarrido @OpenAI @openclaw I was 1 click away from a €100 AI plan. Then DeepSeek V4 dropped. Tried Flash once and never went back. Now I keep a €20 sub only for super specific architecture research… basically never. Flash is that good. I use it with zero guarantees.

English

I don’t know what’s going on with the last week of @openclaw releases. The feature direction is genuinely exciting, but the base keeps shifting so fast that every release I tested hit a different foundational break.

- v2026.4.27: local memory/search broke because the managed runtime deps did not retain/resolve node-llama-cpp.

- v2026.4.29: that class looked fixed, but Discord/Telegram failed on startup because packaged plugin runtime deps missed json5. Repair attempts were not durable.

- v2026.5.2: channels could be made healthy only after local patching, but native Codex/tool adoption hit a worse blocker: tools visible in catalog/runtime inspect were missing from tools.effective, so agents could not reliably use memory/wiki tools.

These are not edge-case polish issues. They are “can the agent actually operate normally after upgrade?” issues.

Right now testing the new features feels impossible. Stability first, then features. Back to v2026.4.26

Peter Steinberger 🦞@steipete

This one fixes the dependency issues/slowness some had when installed via npm. Plugins are hard, worth it tho! Package is way leaner now, we moved [almost] everything into extensions! docs.openclaw.ai/plugins/manage…

English

You're using OpenClaw wrong if it's still one chat window.

Anyone running everything in one chat knows the feeling. Nothing runs in parallel. Code waits on research, research waits on ops, ops waits on whatever you started yesterday. And every topic switch contaminates the next.

Telegram supergroup topics fix this. Each topic is a separate conversation, and the agent treats each one as its own context. Give each topic a job (Code, Research, Ops, Content), point OpenClaw at the group, and you've got what's basically four agents running in parallel that never talk to each other.

Setup takes an afternoon. Here's how:

Step 1. Install clawddocs first.

openclaw skills install clawddocs

This pulls 200+ pages of OpenClaw docs into the agent's context. Without it, every config question becomes a guess.

Step 2. Create a Telegram supergroup. In Group Settings, turn on Topics.

Each topic is a fully separate conversation. The agent doesn't carry context between them, which is the whole reason this works. It's not multitasking, it's hard isolation.

Step 3. Name the topics after jobs, not the agent. Code, Research, Ops, Content. Whatever your actual workflow is.

Step 4. Add the bot to the group. Make it admin and give it the Manage Topics permission.

Then open @BotFather, run /setprivacy, pick your bot, and choose Disable. Without that, the bot only reads messages that start with /, which means it ignores almost everything you type in the topics.
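One way to confirm the privacy setting took effect is the Telegram Bot API's `getMe` method, whose result includes a `can_read_all_group_messages` flag. The sketch below is a minimal check under that assumption. The function names are hypothetical, and `fetch_get_me` needs network access and a real bot token, so the example runs against a hand-written sample response instead.

```python
import json
import urllib.request


def can_read_all_messages(get_me_response):
    """True when privacy mode is off, per the can_read_all_group_messages flag."""
    if not get_me_response.get("ok"):
        raise ValueError(f"getMe failed: {get_me_response.get('description')}")
    return bool(get_me_response["result"].get("can_read_all_group_messages", False))


def fetch_get_me(token):
    """Call the Bot API getMe method (requires network access and a real token)."""
    url = f"https://api.telegram.org/bot{token}/getMe"
    with urllib.request.urlopen(url, timeout=10) as resp:
        return json.load(resp)


# Offline example: a hand-written response shaped like the Bot API's reply.
sample = {
    "ok": True,
    "result": {
        "id": 1,
        "is_bot": True,
        "username": "example_bot",
        "can_read_all_group_messages": True,
    },
}
print(can_read_all_messages(sample))
```

With a real token, `can_read_all_messages(fetch_get_me(token))` returning False would suggest privacy mode is still enabled.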

Step 5. Open a chat with the bot and tell it to find every group it's been added to, then update openclaw.json with that list. The bot pulls the list from Telegram itself. From then on, OpenClaw sees every group and every topic inside.

Step 6. That's it. Open whichever topic you need, ask the question, get an answer that isn't contaminated by the other three lanes.
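The isolation described in these steps can be pictured as one history per topic. Telegram messages posted inside a forum topic carry a `message_thread_id`, so keying context on the chat id plus that field keeps lanes apart. This is a sketch of the idea only, not OpenClaw's actual implementation, and the `TopicContexts` class and the sample ids are hypothetical.

```python
from collections import defaultdict


class TopicContexts:
    """Keeps one message history per (chat_id, message_thread_id) pair."""

    def __init__(self):
        self._histories = defaultdict(list)

    @staticmethod
    def key_for(message):
        # message_thread_id is present on messages posted inside a forum topic;
        # messages without it share a single per-chat history (thread key None).
        return message["chat"]["id"], message.get("message_thread_id")

    def add(self, message):
        """Append the message text to its topic's history and return that history."""
        history = self._histories[self.key_for(message)]
        history.append(message.get("text", ""))
        return history


contexts = TopicContexts()
contexts.add({"chat": {"id": -1001}, "message_thread_id": 11, "text": "fix the build"})
contexts.add({"chat": {"id": -1001}, "message_thread_id": 12, "text": "summarize this paper"})
code_lane = contexts.add({"chat": {"id": -1001}, "message_thread_id": 11, "text": "now add tests"})
print(code_lane)  # ['fix the build', 'now add tests']
```

The research message never appears in the code lane's history, which is the "hard isolation" the thread describes.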

English

@nahcrof At least it responds, but it's unstable and slow, so it's not worth moving from the DeepSeek provider. Hope you make it reliable and faster.

English

@anotherdaynow Flash is down, please try another model, I can give you $2 more credit

English

@nahcrof Tried pro. It took 10x the time of the DeepSeek provider to complete a simple reply. I also see that thinking level (low, medium, high) is not supported? I came for Flash's 700 t/s, since I need a near-live agent, but it didn't work.

English

@nahcrof Has anyone used deepseek-v4-flash, 900tps is insane. Is it decent at anything?

English

@0xSero Exactly. Flash is the surprise! Follows instructions perfectly. A DOer!

English

@zUnEm01 I agree that GLM is good, but it's far too slow, painfully so. I consider Kimi good as well, but it's also far too slow. For me, DeepSeek is the best, and the biggest surprise is Flash. DeepSeek is also the ONLY one, compared with GPT and Gemini, that gets math calculations right.

English

GLM 5 could be better but it is very unreliable asf!

Kimi k2.6 could be better but it just has issues with understanding and following instructions; it overdoes things and destroys my repo.

Deepseek is a winner here because it understands and follows instructions, and the 1M context is a plus for me. The only problem with Deepseek is this: it doesn't have vision.

Kasif@md_kasif_uddin

Be honest, which is the best open source AI Model?

English

@openclaw After 3 hours of debugging and with my nerves shot, I fell back to the .27 version... This is not good. We can't update-and-pray every time.

English

@openclaw Updated. Crashed the WHOLE system. Sorry folks, OpenClaw is FAR away from being a pro system. It is just a hobby keeping us awake till 3am trying to take the shit out of the system.

English

OpenClaw 2026.4.29 🦞

💬 Group chats feel much better now

📌 Follow-up commitments from context

🔐 Safer exec, pairing, and owner controls

🟩 NVIDIA provider + model catalogs

⚡ Faster startup + plugin/channel fixes

Group chat finally feels agent-native.

github.com/openclaw/openc…

English